## Neural Network Architecture Diagram

### Overview

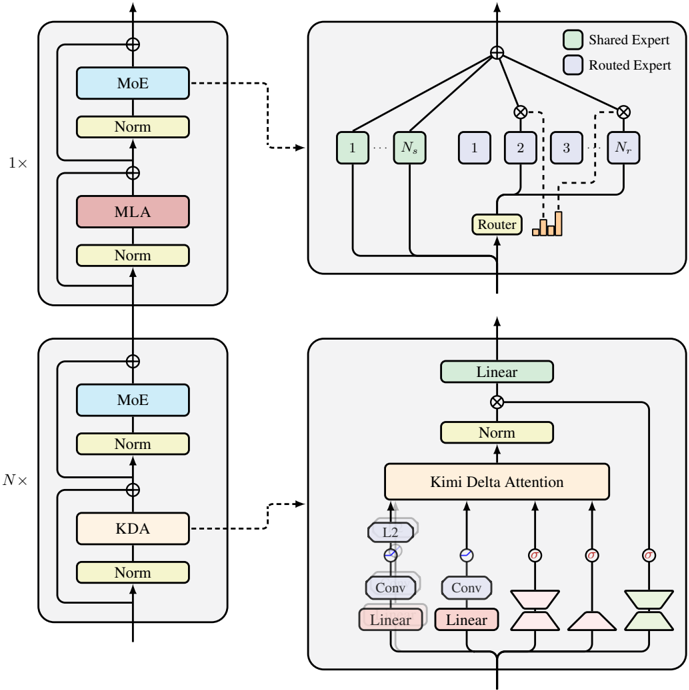

The image shows a neural network architecture diagram that traces the flow of data through a stack of blocks. It highlights shared and routed experts in a mixture-of-experts (MoE) layer, two attention variants (MLA and KDA), and normalization layers. The architecture appears to be a hybrid design that combines both attention variants, with an MoE layer in each block.

### Components/Axes

* **Legend:** Located at the top-right corner.
  * Green box: Shared Expert
  * Blue box: Routed Expert

* **Blocks:** The diagram is composed of several blocks, each representing a set of operations or layers. These blocks are arranged vertically, indicating the flow of data.

* **Arrows:** Solid arrows indicate the direction of data flow between blocks and layers. Dashed arrows link each block on the left to a block on the right, which appears to be an expanded view of one of its layers.

* **Labels:** Labels within the blocks describe the type of operation or layer, such as "MoE," "Norm," "MLA," "KDA," "Linear," "Conv," "Router," and "Kimi Delta Attention."

* **Multiplication Factors:** "1x" and "Nx" indicate the number of times a particular block is repeated.

### Detailed Analysis

The diagram can be broken down into the following sections:

1. **Top Block (1x):**
   * A block labeled "1x" is located at the top-left.
   * It contains the following layers:
      * MoE (Routed Expert - Blue)
      * Norm (Normalization - Yellow)
      * MLA (Red)
      * Norm (Normalization - Yellow)
   * The input flows through MoE, Norm, MLA, and Norm sequentially.
   * A dashed arrow connects this block to the top-right block.

2. **Top-Right Block (Routing):**
   * This block represents a routing mechanism.
   * It contains two groups of nodes, labeled "1" through "Ns" and "1," "2," "3," through "Nr." These nodes are connected in a tree-like structure.
   * Nodes "1" and "Ns" are green, indicating "Shared Expert".
   * Nodes "1," "2," "3," and "Nr" are blue, indicating "Routed Expert".
   * A "Router" (Yellow) is present at the bottom of this block.
   * A bar graph is present near the router.
   * The output of this block flows upwards.

3. **Bottom Block (Nx):**
   * A block labeled "Nx" is located at the bottom-left.
   * It contains the following layers:
      * MoE (Routed Expert - Blue)
      * Norm (Normalization - Yellow)
      * KDA (Khaki)
      * Norm (Normalization - Yellow)
   * The input flows through MoE, Norm, KDA, and Norm sequentially.
   * A dashed arrow connects this block to the bottom-right block.

4. **Bottom-Right Block (Attention):**
   * This block represents an attention mechanism.
   * It contains the following layers:
      * Linear (Shared Expert - Green)
      * Norm (Normalization - Yellow)
      * Kimi Delta Attention (Khaki)
      * L2 (Blue)
      * Conv (Linear - Red)
      * Linear (Red)
   * Several hourglass-shaped components are connected to the "Kimi Delta Attention" layer. These components are colored blue, red, and green.
   * The input flows through the "Linear" and "Norm" layers. The output of the "Norm" layer is fed into the "Kimi Delta Attention" layer. The "Kimi Delta Attention" layer receives input from the hourglass-shaped components.
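The diagram names the "Kimi Delta Attention" layer and an "L2" component but does not show the update it computes. As a rough illustration only, the sketch below implements the classic delta rule used in linear-attention models, with L2-normalized keys. Everything in it is an assumption rather than something read off the diagram: the function names (`l2_normalize`, `read`, `delta_update`), the `beta` write strength, and the absence of any gating or convolution.

```python
import math

def l2_normalize(vec):
    """Scale a vector to unit L2 norm (returned unchanged if it is all zeros)."""
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec] if norm > 0 else list(vec)

def read(state, query):
    """Read from the memory: output = state @ query."""
    return [sum(s * q for s, q in zip(row, query)) for row in state]

def delta_update(state, key, value, beta=1.0):
    """Classic delta rule: state += beta * (value - state @ key) key^T.

    Hypothetical helper for illustration; not taken from the diagram.
    """
    predicted = read(state, key)
    return [
        [s + beta * (v - p) * k for s, k in zip(row, key)]
        for row, v, p in zip(state, value, predicted)
    ]

# Usage: write a value under a key, then overwrite it with a new value.
state = [[0.0, 0.0, 0.0], [0.0, 0.0, 0.0]]   # 2 value dims x 3 key dims
key = l2_normalize([3.0, 0.0, 4.0])
state = delta_update(state, key, [1.0, -2.0])
first_read = read(state, key)
state = delta_update(state, key, [5.0, 7.0])
second_read = read(state, key)
```

With a unit-norm key and `beta=1.0`, reading back with the same key returns the most recently written value, which is the property that motivates normalizing keys in this family of models.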

### Key Observations

* The architecture utilizes both shared and routed experts.

* The bottom-right block expands the Kimi Delta Attention (KDA) layer into its component operations.

* Normalization layers are used extensively throughout the architecture.

* The "Nx" block is repeated N times, while the "1x" block appears once, suggesting a deep stack made up mostly of KDA blocks.
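One way to read the "1x" and "Nx" markers is as a repeating layer schedule. The sketch below builds such a schedule under two assumptions that the diagram description does not confirm: that the N KDA blocks come before the single MLA block in each group, and that the whole group repeats. The function name `build_layer_schedule` and its parameters are hypothetical.

```python
def build_layer_schedule(num_groups, kda_per_group):
    """Return attention types in stack order: N KDA blocks, then 1 MLA block, per group.

    Assumed ordering for illustration only; the diagram just marks the blocks "Nx" and "1x".
    """
    schedule = []
    for _ in range(num_groups):
        schedule.extend(["KDA"] * kda_per_group)  # the "Nx" block
        schedule.append("MLA")                    # the "1x" block
    return schedule

# Usage: two groups with N = 3.
layers = build_layer_schedule(num_groups=2, kda_per_group=3)
```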

### Interpretation

The diagram shows an architecture that combines two attention mechanisms (MLA and KDA) with mixture-of-experts layers. The split into shared and routed experts suggests a modular design: shared experts typically process every input, while the router selects a subset of routed experts for each input, so that different routed experts can specialize. The attention layers let the network weight the most relevant parts of the input, and the normalization layers help stabilize training. The "Nx" marker indicates that the KDA block is stacked N times, against a single MLA block, which suggests a deep network in which most attention layers are KDA. Overall, the architecture appears to pair attention-based sequence mixing with expert-based feedforward processing in every block.
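The interplay of shared experts, routed experts, and the router can be sketched as follows. This is a minimal illustration, not the diagram's actual implementation: it assumes softmax router scores, top-k selection with renormalized weights, and scalar inputs, none of which the diagram specifies. The name `moe_forward` and all parameters are hypothetical.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def moe_forward(x, shared_experts, routed_experts, router_scores, top_k=2):
    """Combine always-on shared experts with the top-k routed experts.

    Assumptions (not from the diagram): softmax gating, top-k selection,
    and renormalizing the selected weights so they sum to 1.
    """
    # Shared experts run on every input.
    out = sum(expert(x) for expert in shared_experts)
    # The router picks the k routed experts with the highest probability.
    probs = softmax(router_scores)
    chosen = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:top_k]
    norm = sum(probs[i] for i in chosen)
    for i in chosen:
        out += (probs[i] / norm) * routed_experts[i](x)
    return out, sorted(chosen)

# Usage: one shared expert, three routed experts, two of them selected.
shared = [lambda x: x]
routed = [lambda x: 10 * x, lambda x: 100 * x, lambda x: 1000 * x]
output, selected = moe_forward(1.0, shared, routed, [0.0, 2.0, 2.0], top_k=2)
```

Only the selected routed experts are evaluated, which is why this kind of layer can grow its parameter count without a matching growth in per-input compute.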