## Vertical Bar Chart: Tool/Function Performance Metrics

### Overview

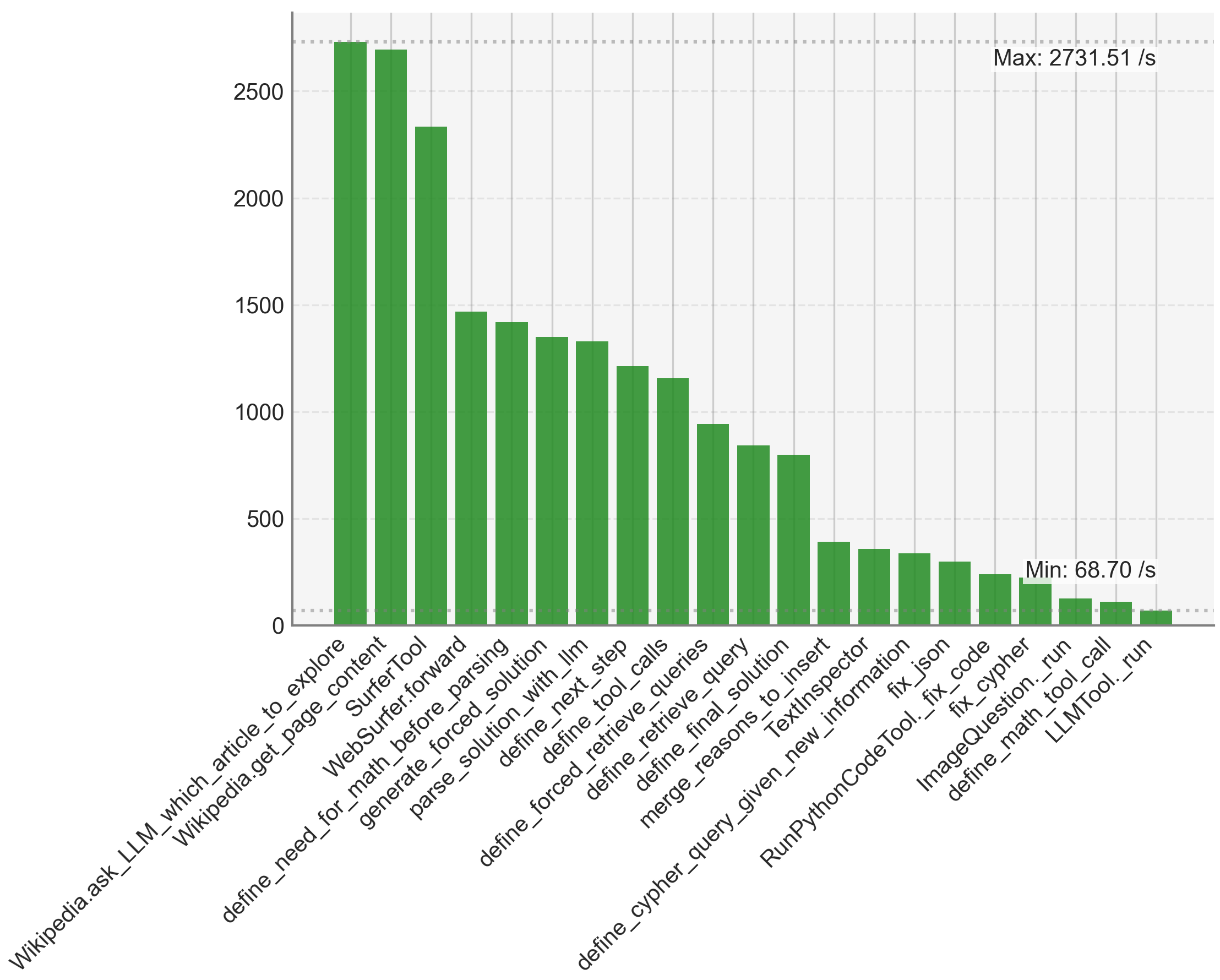

The image displays a vertical bar chart comparing a per-second rate (annotated "/s", most likely speed or throughput) for 21 distinct tools or functions. The bars are sorted in descending order, from the highest value on the left to the lowest on the right, and the highest and lowest values are explicitly annotated.

### Components/Axes

* **Chart Type:** Vertical Bar Chart.

* **X-Axis (Horizontal):** Lists the names of 21 tools or functions. The labels are rotated approximately 45 degrees for readability. The full list of labels, from left to right, is:

1. `Wikipedia.ask_LLM_which_article_to_explore`

2. `Wikipedia.get_page_content`

3. `SurferTool`

4. `WebSurfer.forward`

5. `define_need_for_math_before_parsing`

6. `generate_forced_solution`

7. `parse_solution_with_llm`

8. `define_next_step`

9. `define_tool_calls`

10. `define_forced_retrieve_queries`

11. `define_retrieve_query`

12. `define_final_solution`

13. `merge_reasons_to_insert`

14. `TextInspector`

15. `define_cypher_query_given_new_information`

16. `fix_json`

17. `RunPythonCodeTool._fix_code`

18. `fix_cypher`

19. `ImageQuestion._run`

20. `define_math_tool_call`

21. `LLMTool._run`

* **Y-Axis (Vertical):** Represents a numerical performance metric. The axis is labeled with major gridlines at intervals of 500, starting from 0 and extending to 2500. The unit is implied to be "per second" (/s) based on the annotations.

* **Annotations:**

    * **Top-Right:** "Max: 2731.51 /s". This annotation points to the top of the first (leftmost) bar.

    * **Bottom-Right:** "Min: 68.70 /s". This annotation points to the top of the last (rightmost) bar.

* **Legend:** There is no separate legend. All bars are the same solid green color, indicating they belong to the same data series.

* **Grid:** A light gray grid is present in the background, with horizontal lines corresponding to the y-axis ticks.

### Detailed Analysis

The chart presents a clear performance hierarchy. Below are the approximate values for each bar, determined by visual comparison to the y-axis gridlines. Values are listed in the same order as the x-axis labels (descending performance).

1. `Wikipedia.ask_LLM_which_article_to_explore`: **2731.51 /s** (Exact value from annotation; bar extends above the 2500 line).

2. `Wikipedia.get_page_content`: **~2700 /s** (Slightly shorter than the first bar).

3. `SurferTool`: **~2350 /s** (Bar ends between the 2000 and 2500 lines, closer to 2500).

4. `WebSurfer.forward`: **~1480 /s** (Bar ends just below the 1500 line).

5. `define_need_for_math_before_parsing`: **~1420 /s** (Slightly shorter than the previous bar).

6. `generate_forced_solution`: **~1350 /s**.

7. `parse_solution_with_llm`: **~1330 /s**.

8. `define_next_step`: **~1220 /s**.

9. `define_tool_calls`: **~1150 /s**.

10. `define_forced_retrieve_queries`: **~950 /s** (Bar ends just below the 1000 line).

11. `define_retrieve_query`: **~850 /s**.

12. `define_final_solution`: **~800 /s**.

13. `merge_reasons_to_insert`: **~400 /s** (Significant drop; bar ends below the 500 line).

14. `TextInspector`: **~370 /s**.

15. `define_cypher_query_given_new_information`: **~350 /s**.

16. `fix_json`: **~320 /s**.

17. `RunPythonCodeTool._fix_code`: **~250 /s**.

18. `fix_cypher`: **~220 /s**.

19. `ImageQuestion._run`: **~120 /s**.

20. `define_math_tool_call`: **~110 /s**.

21. `LLMTool._run`: **68.70 /s** (Exact value from annotation; bar is the shortest).
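For reuse in later calculations, the readings above can be transcribed into a small data structure. The following is a minimal sketch: the names `READINGS` and `is_descending` are illustrative, and every value except the two annotated ones is a visual estimate, not measured data.

```python
# Approximate bar heights read off the chart, in the chart's "/s" unit.
# Only the first and last values are exact (taken from the annotations);
# all others are visual estimates against the gridlines.
READINGS = [
    ("Wikipedia.ask_LLM_which_article_to_explore", 2731.51),
    ("Wikipedia.get_page_content", 2700),
    ("SurferTool", 2350),
    ("WebSurfer.forward", 1480),
    ("define_need_for_math_before_parsing", 1420),
    ("generate_forced_solution", 1350),
    ("parse_solution_with_llm", 1330),
    ("define_next_step", 1220),
    ("define_tool_calls", 1150),
    ("define_forced_retrieve_queries", 950),
    ("define_retrieve_query", 850),
    ("define_final_solution", 800),
    ("merge_reasons_to_insert", 400),
    ("TextInspector", 370),
    ("define_cypher_query_given_new_information", 350),
    ("fix_json", 320),
    ("RunPythonCodeTool._fix_code", 250),
    ("fix_cypher", 220),
    ("ImageQuestion._run", 120),
    ("define_math_tool_call", 110),
    ("LLMTool._run", 68.70),
]


def is_descending(readings):
    """Return True if each value is no larger than the one before it."""
    values = [value for _, value in readings]
    return all(a >= b for a, b in zip(values, values[1:]))


print(len(READINGS), "bars; sorted descending:", is_descending(READINGS))
```

The sortedness check is a quick sanity test that the transcription matches the chart's left-to-right ordering.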

### Key Observations

1. **Steep Performance Gradient:** There is a dramatic, non-linear decline in performance. The top three tools (`Wikipedia.ask_LLM...`, `Wikipedia.get_page...`, `SurferTool`) are in a class of their own, all exceeding 2300 /s.

2. **Performance Clusters:** The data naturally groups into clusters:

    * **High-Performance Cluster (>2300 /s):** First 3 tools.

    * **Mid-High Cluster (~1150-1500 /s):** Tools 4 through 9.

    * **Mid-Low Cluster (~800-950 /s):** Tools 10 through 12.

    * **Low-Performance Cluster (≤ ~400 /s):** Tools 13 through 21. The drop from tool 12 (`define_final_solution`, ~800 /s) to tool 13 (`merge_reasons_to_insert`, ~400 /s) is particularly sharp, a decrease of roughly 50%.

3. **Magnitude of Difference:** The highest-performing tool is approximately **39.8 times faster** than the lowest-performing tool (2731.51 / 68.70 ≈ 39.76).

4. **Label Patterns:** The tool names suggest a workflow involving web interaction (`Wikipedia.*`, `SurferTool`, `WebSurfer`), mathematical reasoning (`define_need_for_math...`, `define_math_tool_call`), graph-database querying (`define_cypher_query_given_new_information`), repair of generated code and structured output (`RunPythonCodeTool._fix_code`, `fix_json`, `fix_cypher`), and general language model orchestration (`LLMTool._run`, `parse_solution_with_llm`).
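The cluster sizes, the tool 12 to tool 13 drop, and the max/min ratio quoted above can be checked with a few lines of arithmetic. This is a sketch over the approximate readings; the cluster floors in `CLUSTER_FLOORS` are hypothetical thresholds chosen here to reproduce the four groups, and they place the ~400 /s reading for tool 13 in the lowest group.

```python
# Approximate readings from the Detailed Analysis, left to right.
VALUES = [2731.51, 2700, 2350, 1480, 1420, 1350, 1330, 1220, 1150,
          950, 850, 800, 400, 370, 350, 320, 250, 220, 120, 110, 68.70]

# Hypothetical lower bounds (inclusive), checked from highest to lowest.
CLUSTER_FLOORS = [("high", 2300), ("mid-high", 1150), ("mid-low", 800), ("low", 0)]


def cluster_of(value):
    """Return the name of the first cluster whose floor the value reaches."""
    for name, floor in CLUSTER_FLOORS:
        if value >= floor:
            return name
    raise ValueError("rates must be non-negative")


def cluster_counts(values):
    """Count how many readings fall into each cluster."""
    counts = {name: 0 for name, _ in CLUSTER_FLOORS}
    for value in values:
        counts[cluster_of(value)] += 1
    return counts


def percent_drop(before, after):
    """Relative decrease from `before` to `after`, in percent."""
    return 100.0 * (before - after) / before


max_min_ratio = VALUES[0] / VALUES[-1]

print(cluster_counts(VALUES))
print("drop from tool 12 to tool 13:", percent_drop(VALUES[11], VALUES[12]), "%")
print("max/min ratio:", round(max_min_ratio, 2))
```

Because all but two readings are visual estimates, the cluster boundaries are only indicative; the ratio uses the two annotated values and is the most reliable figure of the three.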

### Interpretation

This chart likely visualizes the execution speed (e.g., API calls per second, function invocations per second) of different components within a complex AI agent or multi-tool system. The data suggests a clear architectural hierarchy:

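* **Information Retrieval is Fast:** The two `Wikipedia.*` functions and `SurferTool` record the highest rates. Relatively simple, optimized network or parsing operations would explain this for `Wikipedia.get_page_content`; the name `Wikipedia.ask_LLM_which_article_to_explore` suggests an LLM call is involved, so this explanation is tentative for that function.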

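* **Reasoning and Planning are Slower:** Most functions that involve "defining" steps, solutions, or tool calls (`define_*` functions) occupy the middle tiers. These likely involve more complex logic, prompting of an LLM, or decision-making, which are computationally heavier. Two exceptions, `define_cypher_query_given_new_information` (~350 /s) and `define_math_tool_call` (~110 /s), fall in the lowest cluster.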

* **Code Execution and Specialized Tools are Slowest:** The lowest-performing cluster includes tools for fixing code and structured output (`fix_json`, `fix_cypher`, `RunPythonCodeTool._fix_code`), handling images (`ImageQuestion._run`), and the base `LLMTool._run`. This suggests that operations requiring code repair, image processing, or direct LLM inference are the main bottlenecks in this system.

The stark disparity implies that, if these components run in sequence and are invoked about equally often, overall throughput would be constrained mainly by the slowest ones (`LLMTool._run`, `define_math_tool_call`). Under that assumption, optimizing these tools, or redesigning the workflow to call them less often, would likely yield the largest overall gains. The chart thus serves as a diagnostic for identifying bottlenecks within a multi-stage AI pipeline.
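The bottleneck argument can be made concrete with a small calculation. The sketch below rests on assumptions the chart does not confirm: that "/s" means calls per second, so the mean time per call is `1 / rate`, and that a task invokes each listed component exactly once, in sequence. The four components in `RATES` are an illustrative subset, and the function names are hypothetical.

```python
def time_shares(rates):
    """Fraction of total per-task time spent in each component.

    Assumes each component is called once per task, sequentially,
    and that mean time per call is 1 / rate.
    """
    total = sum(1.0 / rate for rate in rates.values())
    return {name: (1.0 / rate) / total for name, rate in rates.items()}


def serial_throughput(rates):
    """Tasks per second for the same one-call-each, sequential model."""
    return 1.0 / sum(1.0 / rate for rate in rates.values())


# Illustrative subset of the chart's readings (in "/s").
RATES = {
    "Wikipedia.ask_LLM_which_article_to_explore": 2731.51,
    "WebSurfer.forward": 1480,
    "define_math_tool_call": 110,
    "LLMTool._run": 68.70,
}

shares = time_shares(RATES)
for name, share in sorted(shares.items(), key=lambda item: -item[1]):
    print(f"{name}: {share:.1%} of per-task time")
print(f"end-to-end: {serial_throughput(RATES):.1f} tasks/s")
```

In this model the slowest component accounts for more than half of the per-task time, and the end-to-end rate is lower than the rate of any single component. Real call frequencies and any parallelism would change these figures.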