## Diagram: Language CoT (Chain-of-Thought) Training Data Structure

### Overview

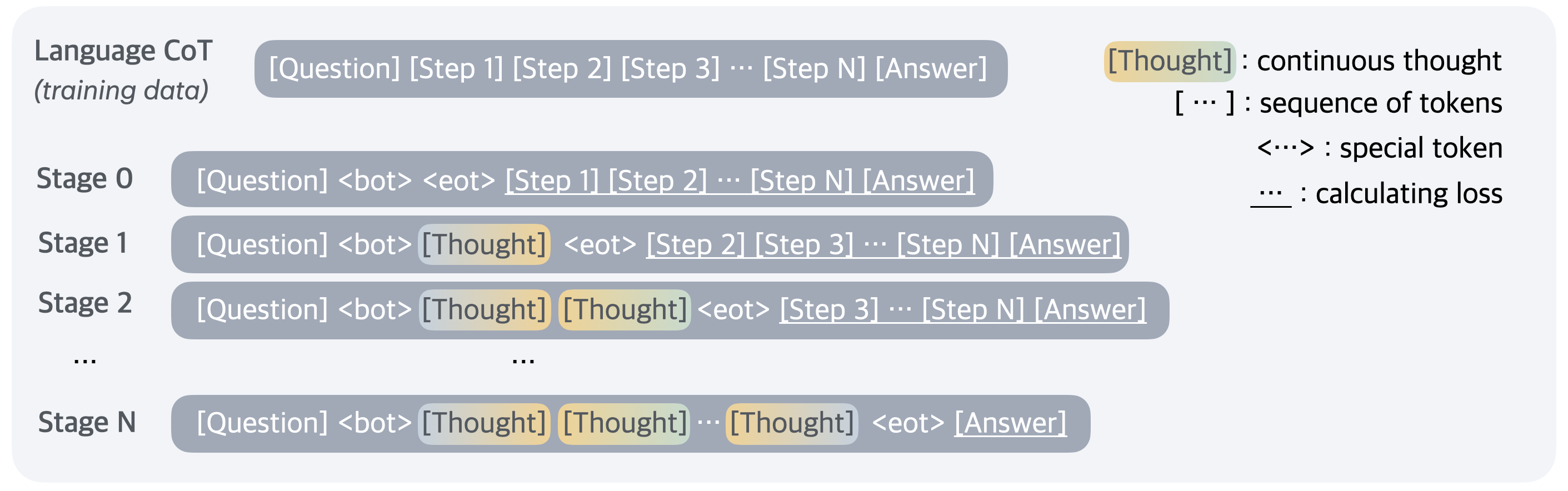

The diagram shows how training data is structured for a language model trained with a Chain-of-Thought (CoT) approach. It depicts a training curriculum that proceeds in stages, where the model learns to generate intermediate reasoning steps ("Thoughts") before producing a final answer. The main content area is on the left and the legend is on the right.

### Components

**Legend (Top-Right Corner):**

* `[Thought]` : continuous thought (represented by a rounded rectangle with a yellow-to-green gradient fill).

* `[ ... ]` : sequence of tokens (represented by text in square brackets).

* `<...>` : special token (represented by text in angle brackets).

* `___` : calculating loss (represented by an underline beneath text).

**Main Content (Left and Center):**

The diagram is organized into rows, each representing a different stage in the training process. The stages are labeled on the far left.

* **Header Row:** Labeled "Language CoT (training data)". Contains a single gray rounded rectangle with the text: `[Question] [Step 1] [Step 2] [Step 3] ... [Step N] [Answer]`.

* **Stage 0:** Labeled "Stage 0". Contains a gray rounded rectangle with the text: `[Question] <bot> <eot> [Step 1] [Step 2] ... [Step N] [Answer]`. The segments `[Step 1]`, `[Step 2]`, and `[Step N]` are underlined.

* **Stage 1:** Labeled "Stage 1". Contains a gray rounded rectangle with the text: `[Question] <bot> [Thought] <eot> [Step 2] [Step 3] ... [Step N] [Answer]`. The `[Thought]` block has the yellow-green gradient. The segments `[Step 2]`, `[Step 3]`, and `[Step N]` are underlined.

* **Stage 2:** Labeled "Stage 2". Contains a gray rounded rectangle with the text: `[Question] <bot> [Thought] [Thought] <eot> [Step 3] ... [Step N] [Answer]`. Both `[Thought]` blocks have the yellow-green gradient. The segments `[Step 3]` and `[Step N]` are underlined.

* **Ellipsis Row:** Contains a single centered ellipsis (`...`), indicating intermediate stages between Stage 2 and Stage N.

* **Stage N:** Labeled "Stage N". Contains a gray rounded rectangle with the text: `[Question] <bot> [Thought] [Thought] ... [Thought] <eot> [Answer]`. Multiple `[Thought]` blocks are shown, all with the yellow-green gradient. The final `[Answer]` segment is underlined.
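The row layouts above follow a regular pattern, which can be sketched in a few lines of Python. This is only an illustration of the diagram's symbolic layout, not a real data pipeline: the function name `build_stage_sequence` and its parameters are hypothetical, and it assumes that stage `k` replaces the first `k` written steps with `k` `[Thought]` blocks, as the rows suggest.

```python
from typing import List

BOT, EOT, THOUGHT = "<bot>", "<eot>", "[Thought]"


def build_stage_sequence(num_steps: int, stage: int) -> List[str]:
    """Return the symbolic layout of one training example at a given stage.

    Assumption: stage k swaps the first k language steps for k thought blocks.
    """
    if not 0 <= stage <= num_steps:
        raise ValueError("stage must be between 0 and num_steps")
    thoughts = [THOUGHT] * stage
    # Steps that are still written out in language at this stage.
    remaining = [f"[Step {i}]" for i in range(stage + 1, num_steps + 1)]
    return ["[Question]", BOT, *thoughts, EOT, *remaining, "[Answer]"]


if __name__ == "__main__":
    # Print every stage for an example with three written steps.
    for k in range(4):
        print(f"Stage {k}:", " ".join(build_stage_sequence(3, k)))
```

With three steps, stage 0 keeps every `[Step i]` after an empty `<bot> <eot>` pair, and stage 3 leaves only `[Answer]` after the thoughts, matching the first and last stage rows of the diagram.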

### Detailed Analysis

The diagram outlines a curriculum or progressive training schedule:

1. **Baseline (Language CoT Header):** The standard format is a linear sequence: Question -> multiple reasoning Steps -> Answer.

2. **Stage 0 (Initialization):** The model is trained with the question followed immediately by special tokens `<bot>` (beginning of thought) and `<eot>` (end of thought), with no generated thoughts in between. The loss is calculated on the explicit reasoning steps (`[Step 1]` through `[Step N]`) and the final answer.

3. **Stage 1 (Single Thought):** The model is now trained with a single continuous `[Thought]` block between `<bot>` and `<eot>`. The loss no longer covers `[Step 1]`, which the thought replaces; it starts at `[Step 2]` and runs through the answer.

4. **Stage 2 (Two Thoughts):** Two `[Thought]` blocks now sit between `<bot>` and `<eot>`, replacing `[Step 1]` and `[Step 2]`. The loss calculation shifts further, starting from `[Step 3]`.

5. **Progression to Stage N:** The pattern continues. At each subsequent stage, the model generates an additional `[Thought]` block, and the supervised loss calculation on the explicit "Step" tokens begins one step later. By **Stage N**, the model generates a sequence of thoughts that fully replaces all explicit intermediate steps (`[Step 1]` to `[Step N]`), and the loss is calculated only on the final `[Answer]`.
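The shifting loss window described in this list can be expressed as a per-segment mask. The sketch below is a hedged illustration: the helper names are hypothetical, and it follows the reading given in this section, where the loss covers the remaining written steps and the answer. It also assumes that `[Question]`, the special tokens, and the `[Thought]` blocks carry no loss, since none of them is described as underlined.

```python
from typing import List, Tuple


def stage_layout_with_mask(num_steps: int, stage: int) -> List[Tuple[str, bool]]:
    """Pair each segment with a flag: True if it contributes to the loss."""
    if not 0 <= stage <= num_steps:
        raise ValueError("stage must be between 0 and num_steps")
    # Assumption: question, delimiters, and thoughts are never supervised.
    layout = [("[Question]", False), ("<bot>", False)]
    layout += [("[Thought]", False)] * stage
    layout.append(("<eot>", False))
    # Remaining written steps and the answer are supervised.
    layout += [(f"[Step {i}]", True) for i in range(stage + 1, num_steps + 1)]
    layout.append(("[Answer]", True))
    return layout


def supervised_segments(num_steps: int, stage: int) -> List[str]:
    """List only the segments that would be underlined at this stage."""
    return [name for name, in_loss in stage_layout_with_mask(num_steps, stage) if in_loss]


if __name__ == "__main__":
    for k in range(4):
        print(f"Stage {k} loss on:", supervised_segments(3, k))
```

Each increment of `stage` removes one `[Step i]` from the supervised list, until only `[Answer]` remains at the final stage.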

### Key Observations

* **Spatial Arrangement:** The legend sits in the top-right corner. The stages are arranged in a clear top-to-bottom vertical flow, implying a sequential progression in training.

* **Visual Coding:** The `[Thought]` blocks are uniquely identified by a color gradient, distinguishing model-generated content from the static token sequences like `[Question]` and `[Step X]`.

* **Loss Calculation Migration:** The underlined segments (`___`) visually track how the objective function (loss calculation) migrates from being applied to all explicit steps to being applied only to the final answer as the model learns to internalize the reasoning process.

* **Special Token Function:** `<bot>` and `<eot>` act as delimiters, framing the section where the model is expected to generate its chain of thought.
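To make "calculating loss" on only the underlined segments concrete, here is a minimal sketch of a masked negative log-likelihood in plain Python. It is an assumption about how such a mask would typically be applied, not something the diagram specifies: `masked_nll` is a hypothetical helper, and the probabilities are made-up values standing in for the probability a model assigns to each correct token.

```python
import math
from typing import Sequence


def masked_nll(token_probs: Sequence[float], loss_mask: Sequence[bool]) -> float:
    """Mean negative log-likelihood over positions where loss_mask is True."""
    if len(token_probs) != len(loss_mask):
        raise ValueError("token_probs and loss_mask must have the same length")
    selected = [-math.log(p) for p, keep in zip(token_probs, loss_mask) if keep]
    if not selected:
        raise ValueError("loss_mask selects no positions")
    return sum(selected) / len(selected)


if __name__ == "__main__":
    # Hypothetical per-position probabilities: question, step, step, answer.
    probs = [0.9, 0.5, 0.25, 0.5]
    early_mask = [False, True, True, True]   # steps and answer supervised
    final_mask = [False, False, False, True]  # only the answer supervised
    print("early-stage loss:", masked_nll(probs, early_mask))
    print("final-stage loss:", masked_nll(probs, final_mask))
```

Positions outside the mask do not affect the result, which is how the same sequence can carry different training signals at different stages.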

### Interpretation

This diagram illustrates a **progressive internalization training protocol** for teaching a language model to perform chain-of-thought reasoning. The core idea is to shift the model gradually from reproducing explicit, written reasoning steps (`[Step 1]` through `[Step N]`) to generating its own continuous reasoning process (`[Thought]`).

* **What it demonstrates:** It's a method for moving from supervised learning on step-by-step demonstrations to a more autonomous form of reasoning. The early stages provide strong supervision on the reasoning structure, while later stages encourage the model to develop its own internal representation of the thought process.

* **Relationship between elements:** Each stage builds directly upon the previous one. The `<bot>`/`<eot>` tokens provide the structural scaffold, the `[Thought]` blocks represent the model's learned internal reasoning, and the migrating loss calculation (`___`) is the training signal that drives this internalization.

* **Key insight:** The loss function **decouples the "thought generation" task from the "answer generation" task**. By Stage N, supervision applies only to the final answer, conditioned on the model's own generated thoughts; the thoughts themselves receive no direct target. The training signal is still the supervised loss marked by the underline, not a separate reward. This suggests the goal is not just to generate plausible thoughts, but to generate thoughts that are *useful for arriving at the correct answer*.