## Diagram: Language CoT Training Data Stages

### Overview

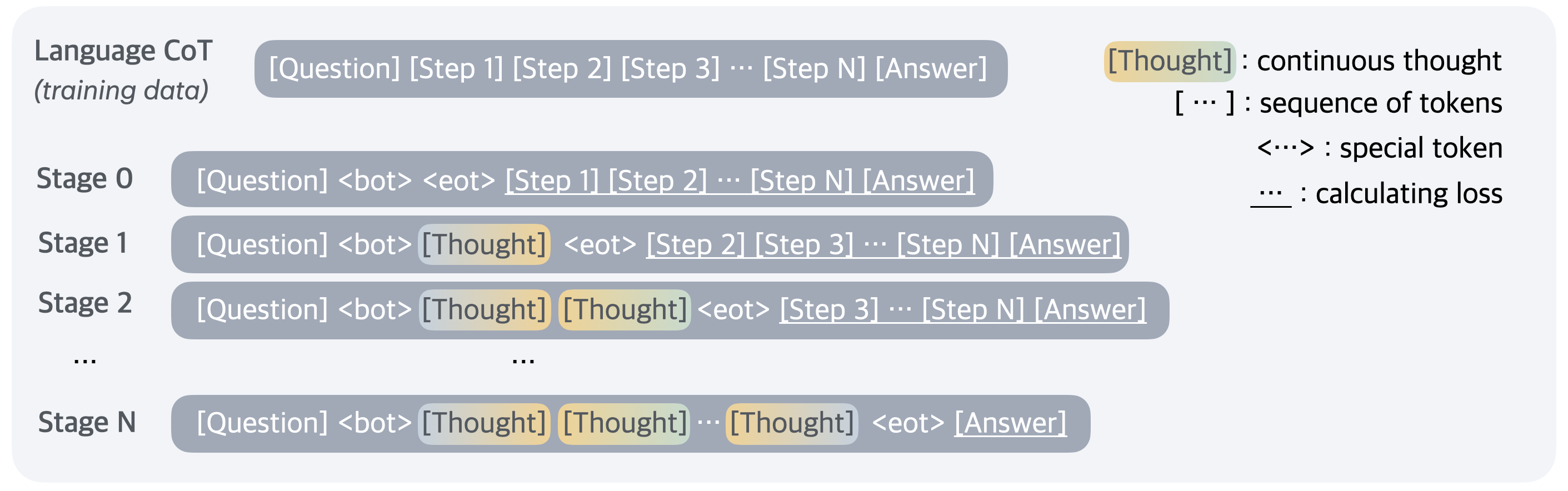

The image illustrates the process of Language Chain-of-Thought (CoT) training data across multiple stages. It shows how the model incorporates "Thought" tokens into the training sequence, progressing from no explicit thought to multiple thought tokens before the final answer.

### Components/Axes

* **Left Side**: Lists the stages of training, from Stage 0 to Stage N.

* **Center**: Shows the structure of the training data at each stage, including tokens like [Question], [Step 1], [Step 2], [Step N], [Answer], <bot>, <eot>, and [Thought].

* **Right Side**: Provides a legend explaining the meaning of specific tokens:

* `[Thought]`: continuous thought

* `[...]`: sequence of tokens

* `<...>`: special token

* `...`: calculating loss

### Detailed Analysis or Content Details

* **Language CoT (training data)**: `[Question] [Step 1] [Step 2] [Step 3] ... [Step N] [Answer]`

* This represents the initial training data format, consisting of a question, a series of steps, and the final answer.

* **Stage 0**: `[Question] <bot> <eot> [Step 1] [Step 2] ... [Step N] [Answer]`

* At Stage 0, the model receives the question, a beginning-of-turn token `<bot>`, an end-of-turn token `<eot>`, followed by the steps and the answer. No explicit "Thought" tokens are present.

* **Stage 1**: `[Question] <bot> [Thought] <eot> [Step 2] [Step 3] ... [Step N] [Answer]`

* In Stage 1, a single "Thought" token is inserted after the `<bot>` token and before the `<eot>` token, and before the remaining steps.

* **Stage 2**: `[Question] <bot> [Thought] [Thought] <eot> [Step 3] ... [Step N] [Answer]`

* In Stage 2, two "Thought" tokens are inserted after the `<bot>` token and before the `<eot>` token, and before the remaining steps.

* **Stage N**: `[Question] <bot> [Thought] [Thought] ... [Thought] <eot> [Answer]`

* In Stage N, multiple "Thought" tokens are inserted after the `<bot>` token and before the `<eot>` token, and before the answer. The number of "Thought" tokens increases as the stage progresses.

### Key Observations

* The diagram illustrates an iterative process where the model is progressively trained to incorporate its "Thoughts" into the reasoning process.

* The number of "Thought" tokens increases with each stage, suggesting a deepening or elaboration of the model's reasoning.

* The `<bot>` and `<eot>` tokens likely signify the beginning and end of a turn or a specific segment of the interaction.

### Interpretation

The diagram demonstrates a training methodology for Language CoT models, where the model is gradually exposed to its own reasoning process ("Thoughts") during training. This approach likely aims to improve the model's ability to generate coherent and well-reasoned responses by explicitly incorporating intermediate thought steps. The progression from Stage 0 to Stage N indicates an increasing emphasis on the model's internal reasoning, potentially leading to more complex and nuanced answers. The use of special tokens like `<bot>` and `<eot>` suggests a structured approach to managing the flow of information during training.