# Technical Document Extraction: FFN Architecture Diagram

## 1. Overview

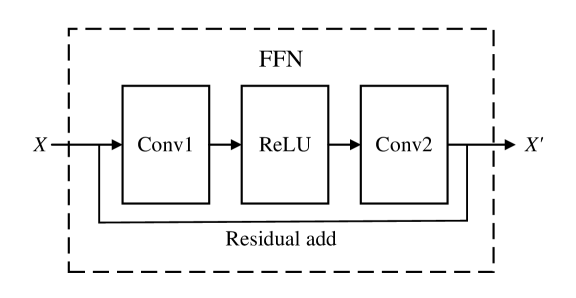

The image is a technical schematic of a **Feed-Forward Network (FFN)** block with a residual (skip) connection. The diagram uses standard deep learning notation to show how data flows through two convolutional layers separated by a ReLU activation.

## 2. Component Isolation

The diagram is contained within a dashed rectangular boundary, indicating the scope of the FFN module.

### Header/Title

* **Text:** `FFN`

* **Location:** Top center, inside the dashed boundary.

* **Meaning:** Identifies the entire block as a Feed-Forward Network.

### Main Processing Path (Sequential)

The central horizontal flow consists of three primary blocks connected by arrows:

1. **Conv1:** The first convolutional layer. It receives the input $X$.

2. **ReLU:** A Rectified Linear Unit activation function. It receives the output from `Conv1`.

3. **Conv2:** The second convolutional layer. It receives the output from the `ReLU` layer.

### Residual Connection (Skip Connection)

* **Label:** `Residual add`

* **Path:** A solid line branches off from the input $X$ before it enters `Conv1`. It travels underneath the three main blocks and merges with the output of `Conv2`.

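* **Function:** The skip path carries the original input $X$ unchanged (an identity mapping) and adds it element-wise to the output of the transformation layers, a hallmark of ResNet-style architectures. For the addition to be defined, the output of `Conv2` must have the same shape as $X$.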
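The skip connection described above can be sketched in a few lines of NumPy. This is only an illustration: the helper name `residual_add` and the shape check are assumptions made for the example and are not part of the diagram.

```python
import numpy as np

def residual_add(x, transform):
    """Return transform(x) + x (hypothetical helper, not from the diagram)."""
    y = transform(x)
    # Element-wise addition only makes sense when both paths agree in shape.
    if y.shape != x.shape:
        raise ValueError(f"shape mismatch: {y.shape} vs {x.shape}")
    return y + x

x = np.array([[1.0, -2.0], [3.0, 4.0]])

# If the transformation path outputs all zeros, the block reduces to the identity.
out = residual_add(x, lambda t: np.zeros_like(t))
```

With a zero-valued transformation path the block passes its input through unchanged, so the main path only has to model a correction to $X$ rather than the full output.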

## 3. Data Flow and Variables

* **Input ($X$):** Located on the far left. An arrow indicates the entry point into the FFN block.

* **Output ($X'$):** Located on the far right. An arrow indicates the final processed data exiting the block.

* **Directionality:** The primary data flow is from left to right.

## 4. Logical Flow Summary

The mathematical operation depicted in this diagram can be transcribed as follows:

1. **Input:** $X$

2. **Transformation Path:** $T(X) = \text{Conv2}(\text{ReLU}(\text{Conv1}(X)))$

3. **Residual Addition:** The input $X$ is added to the result of the transformation.

4. **Final Output:** $X' = T(X) + X$
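The four steps above might be written as the following NumPy sketch. The diagram does not specify kernel sizes, channel counts, or biases, so this example assumes pointwise (kernel size 1) convolutions, which reduce to a matrix multiply over the channel axis. The names `conv1x1`, `ffn_block`, and all tensor sizes are hypothetical.

```python
import numpy as np

def conv1x1(x, w, b):
    """Pointwise convolution (assumed kernel size 1): mixes channels per position.

    x: (length, c_in), w: (c_in, c_out), b: (c_out,)
    """
    return x @ w + b

def relu(x):
    """Rectified Linear Unit: clamps negative values to zero."""
    return np.maximum(x, 0.0)

def ffn_block(x, w1, b1, w2, b2):
    """Compute X' = Conv2(ReLU(Conv1(X))) + X."""
    t = conv1x1(relu(conv1x1(x, w1, b1)), w2, b2)  # transformation path T(X)
    return t + x                                   # residual addition

# Hypothetical sizes, chosen only for illustration.
rng = np.random.default_rng(0)
length, channels, hidden = 5, 4, 8
x = rng.standard_normal((length, channels))
w1, b1 = rng.standard_normal((channels, hidden)), np.zeros(hidden)
w2, b2 = rng.standard_normal((hidden, channels)), np.zeros(channels)

x_out = ffn_block(x, w1, b1, w2, b2)  # same shape as x
```

`Conv2` maps back to the input channel count here so that $T(X)$ and $X$ can be added element-wise.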

## 5. Textual Transcriptions

| Label | Type | Description |
| :--- | :--- | :--- |
| **FFN** | Title/Header | Feed-Forward Network identifier. |
| **$X$** | Variable | Input tensor/data. |
| **Conv1** | Component | First Convolutional layer. |
| **ReLU** | Component | Activation function layer. |
| **Conv2** | Component | Second Convolutional layer. |
| **Residual add** | Process | The operation of adding the identity input to the processed output. |
| **$X'$** | Variable | Final output tensor/data. |