## Line Graph: Computation Time vs. Sequence Length for Flash Attention and MoBA Algorithms

### Overview

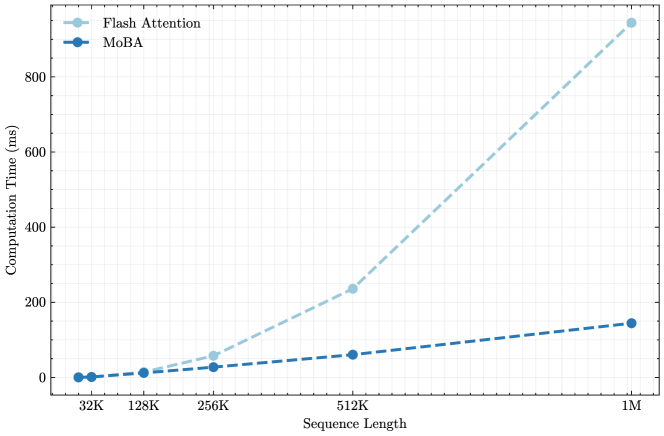

The graph compares the computation time (in milliseconds) of two attention algorithms, Flash Attention and MoBA, across sequence lengths from 32K to 1M tokens. Flash Attention's time grows steeply with sequence length, while MoBA's grows far more gradually. Both algorithms start with negligible computation time at 32K.

### Components/Axes

- **X-axis (Sequence Length)**: Labeled "Sequence Length" with markers at 32K, 128K, 256K, 512K, and 1M.

- **Y-axis (Computation Time)**: Labeled "Computation Time (ms)", with tick labels from 0 to 800 ms.

- **Legend**: Located in the top-left corner.
  - Flash Attention: Dashed light blue line with circular markers.
  - MoBA: Solid dark blue line with circular markers.

### Detailed Analysis

- **Flash Attention**:
  - 32K: ~0 ms
  - 128K: ~10 ms
  - 256K: ~50 ms
  - 512K: ~230 ms
  - 1M: ~900 ms

- **MoBA**:
  - 32K: ~0 ms
  - 128K: ~10 ms
  - 256K: ~20 ms
  - 512K: ~50 ms
  - 1M: ~140 ms
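To make the comparison concrete, the approximate chart readings can be tabulated alongside the absolute gap and MoBA's speedup over Flash Attention at each length. This is a minimal sketch: the numbers are the eyeballed values listed above, not new measurements, and the helper name `compare` is hypothetical.

```python
# Approximate values read off the chart, in milliseconds.
LABELS = ["32K", "128K", "256K", "512K", "1M"]
FLASH_MS = [0, 10, 50, 230, 900]
MOBA_MS = [0, 10, 20, 50, 140]


def compare(flash_ms, moba_ms):
    """Return (gap_ms, speedup) per point.

    speedup = Flash Attention time / MoBA time; it is None where the
    MoBA reading is ~0 ms and a ratio would be meaningless.
    """
    rows = []
    for f, m in zip(flash_ms, moba_ms):
        gap = f - m
        speedup = f / m if m > 0 else None
        rows.append((gap, speedup))
    return rows


if __name__ == "__main__":
    for label, (gap, speedup) in zip(LABELS, compare(FLASH_MS, MOBA_MS)):
        ratio = "n/a" if speedup is None else f"{speedup:.1f}x"
        print(f"{label:>5}: gap {gap:4d} ms, speedup {ratio}")
```

At 1M this gives a gap of 760 ms and a speedup of about 6.4x, while at 128K the two readings are identical.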

### Key Observations

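1. **Roughly Quadratic Growth for Flash Attention**: Computation time rises sharply at long lengths, from ~230 ms at 512K to ~900 ms at 1M. Each doubling of sequence length multiplies the time by roughly 4 to 5, which is quadratic rather than exponential growth.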

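2. **Much Slower Growth for MoBA**: Time rises gradually, reaching only ~140 ms at 1M. Each doubling of sequence length multiplies it by roughly 2 to 3 (20 → 50 → 140 ms), closer to linear than to quadratic growth.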

3. **Divergence at Scale**: The gap between the two algorithms widens rapidly, from ~180 ms at 512K to ~760 ms at 1M, where MoBA is roughly 6x faster.

4. **Baseline Consistency**: Both algorithms start at ~0 ms for 32K sequence length.
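One way to check the "quadratic vs. closer to linear" reading is to fit a power law, time ≈ c · nᵖ, by least squares on a log-log scale: p ≈ 1 means linear growth and p ≈ 2 means quadratic growth. The sketch below uses the approximate chart readings, drops the 32K point (both read ~0 ms, and log(0) is undefined), and assumes 1M = 1024K; the function name `scaling_exponent` is hypothetical.

```python
import math

# Approximate chart readings (ms), 128K through 1M.
SEQ_LENS_K = [128, 256, 512, 1024]  # assumes 1M = 1024K
FLASH_MS = [10, 50, 230, 900]
MOBA_MS = [10, 20, 50, 140]


def scaling_exponent(xs, ys):
    """Least-squares slope of log(y) against log(x).

    If y ~ c * x**p, the slope estimates p. The result does not depend
    on the log base or on the unit used for x.
    """
    lx = [math.log(x) for x in xs]
    ly = [math.log(y) for y in ys]
    mx = sum(lx) / len(lx)
    my = sum(ly) / len(ly)
    num = sum((a - mx) * (b - my) for a, b in zip(lx, ly))
    den = sum((a - mx) ** 2 for a in lx)
    return num / den


flash_p = scaling_exponent(SEQ_LENS_K, FLASH_MS)
moba_p = scaling_exponent(SEQ_LENS_K, MOBA_MS)
print(f"Flash Attention exponent ~ {flash_p:.2f}")
print(f"MoBA exponent ~ {moba_p:.2f}")
```

With these readings the fitted exponent is about 2.2 for Flash Attention and about 1.3 for MoBA. Four eyeballed points make this a rough indication, not a precise complexity measurement.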

### Interpretation

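- **Algorithmic Efficiency**: Flash Attention’s steep growth is consistent with the quadratic cost of exact attention: every query attends to every key, so doubling the sequence length roughly quadruples the work. Flash Attention reduces memory traffic, but it still performs all of those query-key interactions. MoBA’s much flatter curve is consistent with block-sparse attention, in which each query attends only to a selected subset of key blocks, which makes it better suited to very long sequences.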

- **Practical Implications**: For applications that process very long sequences (e.g., 1M tokens), MoBA is markedly faster. At short lengths (32K to 128K) the two curves are indistinguishable on this chart, so Flash Attention may still be preferable there, where its performance is on par with MoBA’s.

- **Uncertainty**: Values are approximate readings from the chart; real-world timings will vary with hardware and implementation optimizations.
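The complexity argument can be illustrated with a toy operation-count model comparing dense attention against a generic block-sparse scheme in the spirit of MoBA. Everything about the sparse side is an assumption for illustration: the block size, the top-k value, and the selection step (scoring each query against one summary vector per key block) are hypothetical, not MoBA's published configuration, and the model counts query-key pairs rather than predicting milliseconds.

```python
# Toy operation-count model (not a benchmark). Hypothetical assumptions:
# - dense attention scores every query against every key: n * n pairs;
# - block-sparse attention scores each query against one summary vector per
#   key block (n * n / block_size pairs), then attends to top_k whole blocks
#   (n * top_k * block_size pairs).
BLOCK_SIZE = 4096  # hypothetical
TOP_K = 3          # hypothetical

CHART_LENS = [128 * 1024, 256 * 1024, 512 * 1024, 1024 * 1024]  # 1M = 1024K
LABELS = ["128K", "256K", "512K", "1M"]


def dense_pairs(n: int) -> int:
    """Query-key pairs scored by full (dense) attention."""
    return n * n


def block_sparse_pairs(n: int, block_size: int = BLOCK_SIZE, top_k: int = TOP_K) -> int:
    """Query-key pairs under the hypothetical block-sparse model."""
    num_blocks = n // block_size
    selection = n * num_blocks           # score each query against each block summary
    attention = n * top_k * block_size   # attend to keys in the top_k blocks only
    return selection + attention


if __name__ == "__main__":
    for (a, la), (b, lb) in zip(zip(CHART_LENS, LABELS), zip(CHART_LENS[1:], LABELS[1:])):
        d = dense_pairs(b) / dense_pairs(a)
        s = block_sparse_pairs(b) / block_sparse_pairs(a)
        print(f"{la} -> {lb}: dense x{d:.2f}, block-sparse x{s:.2f}")
```

Under these assumptions each doubling multiplies the dense count by exactly 4 and the block-sparse count by just over 2. The selection term still grows as n²/block_size, so the model only approaches quadratic growth at lengths far beyond this chart. The measured MoBA curve grows somewhat faster than this idealized model, as expected from real kernel overheads.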

### Spatial Grounding

- Legend: Top-left corner, clearly associating colors/styles with algorithms.

- Data Points: Aligned with x-axis markers, confirming sequence length correspondence.

- Line Trends: Flash Attention’s dashed line slopes upward steeply; MoBA’s solid line has a gentle incline.