## Flowchart: Audio Signal Processing Pipeline

### Overview

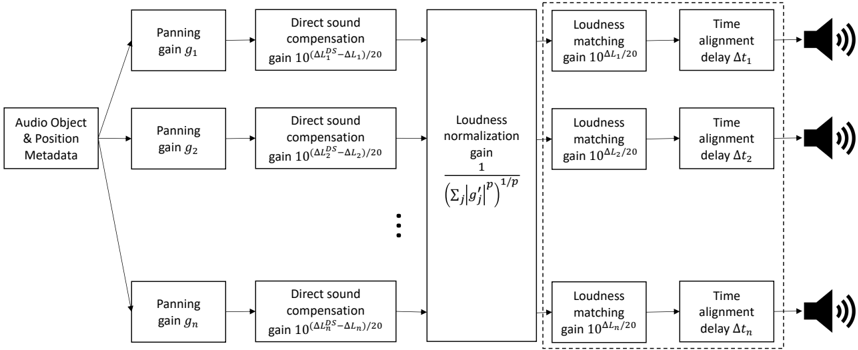

The diagram illustrates a multi-stage audio signal processing pipeline that transforms audio object metadata into spatially adjusted sound outputs. The process involves panning, direct sound compensation, loudness normalization, loudness matching, and time alignment operations. Two parallel processing paths are highlighted with dashed boxes, suggesting optional or specialized processing branches.

### Components

1. **Input**:

   - "Audio Object & Position Metadata" (leftmost starting point)

2. **Processing Stages**:

   - **Panning Gain**:

     - Multiple instances labeled `g₁, g₂, ..., gₙ` (subscript notation indicates variable quantity)

   - **Direct Sound Compensation**:

     - Formula: `gain 10^((ΔL_DS - ΔL)/20)` (applied to each panning gain)

   - **Loudness Normalization**:

     - Formula: `1 / (Σ|g'_j|^p)^(1/p)` (central processing block)

   - **Loudness Matching**:

     - Multiple instances with gains `10^(ΔL₁/20), 10^(ΔL₂/20), ..., 10^(ΔLₙ/20)`

   - **Time Alignment**:

     - Delays labeled `Δt₁, Δt₂, ..., Δtₙ` (subscript notation matches loudness matching gains)

3. **Output**:

   - Multiple speaker icons with sound waves (rightmost endpoints)
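Read together, the stages listed above can be written as one chain per output branch. The following is only a sketch: it assumes the blocks are applied in the left-to-right order described, and the symbols $x(t)$ (the audio object signal), $\hat{g}_i$ (the normalized gain), and $y_i(t)$ (the feed for loudspeaker $i$) are introduced here for illustration and do not appear in the diagram.

$$
\begin{aligned}
g'_i &= g_i \cdot 10^{(\Delta L_{DS} - \Delta L)/20} \\
\hat{g}_i &= \frac{g'_i}{\left(\sum_{j=1}^{n} |g'_j|^{p}\right)^{1/p}} \\
y_i(t) &= \hat{g}_i \cdot 10^{\Delta L_i/20} \cdot x(t - \Delta t_i)
\end{aligned}
$$

Because a gain and a pure delay are both linear and time-invariant, the order of the last two blocks does not change $y_i(t)$.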

### Detailed Analysis

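1. **Panning Gains**:

   - The gains `g₁` to `gₙ` sit on parallel branches, one per output path, which suggests that an audio object is distributed across n loudspeaker channels according to its position metadata

   - Each gain feeds into a direct sound compensation block that uses the same formula

2. **Direct Sound Compensation**:

   - The formula scales the gain by the level difference `ΔL_DS - ΔL`

   - The factor `10^(x/20)` converts a level difference of x dB into a linear amplitude gain, so the compensation is specified in decibels

3. **Loudness Normalization**:

   - The central block divides by the p-norm of the compensated gains `g'_j`: their absolute values are raised to the power p, summed, and the p-th root is taken

   - After this division the p-norm of the gains equals 1, which is presumably intended to keep the overall loudness independent of how the gains are distributed; p = 2 corresponds to constant-power and p = 1 to constant-amplitude normalization

4. **Loudness Matching**:

   - One loudness adjustment per branch, applied in parallel

   - Each branch uses its own level offset (`ΔL₁` to `ΔLₙ`)

5. **Time Alignment**:

   - Each delay (`Δt₁` to `Δtₙ`) shares its index with the loudness matching gain of the same branch

   - This suggests per-channel temporal synchronization for spatial audio rendering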
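The gain stages analysed above can be sketched in a few lines of Python. This is a minimal illustration, not the implementation behind the diagram: the function names, the parameter names, and the choice to pass the compensation offset `ΔL_DS - ΔL` as one value per channel are all assumptions. The description does not say whether `ΔL` differs between channels; if the same offset were applied to every channel, it would cancel in the normalization step.

```python
def db_to_linear(level_db):
    """Convert a level difference in dB to a linear amplitude gain."""
    return 10.0 ** (level_db / 20.0)


def channel_gains(panning_gains, compensation_db, matching_db, p=2.0):
    """Return one linear gain per output channel.

    panning_gains   -- g_1 .. g_n
    compensation_db -- (dL_DS - dL) per channel, in dB (per-channel is an assumption)
    matching_db     -- dL_1 .. dL_n, in dB
    p               -- exponent of the loudness normalization
    """
    if not (len(panning_gains) == len(compensation_db) == len(matching_db)):
        raise ValueError("all inputs must have one entry per channel")

    # Direct sound compensation: g'_i = g_i * 10^((dL_DS - dL)/20)
    compensated = [g * db_to_linear(c)
                   for g, c in zip(panning_gains, compensation_db)]

    # Loudness normalization: divide by the p-norm of the compensated gains
    norm = sum(abs(g) ** p for g in compensated) ** (1.0 / p)
    if norm == 0.0:
        return [0.0] * len(compensated)  # silent object, nothing to normalize
    normalized = [g / norm for g in compensated]

    # Loudness matching: per-channel factor 10^(dL_i/20)
    return [g * db_to_linear(m) for g, m in zip(normalized, matching_db)]
```

For example, `channel_gains([1.0, 1.0], [0.0, 0.0], [0.0, 0.0])` yields two equal gains whose squares sum to 1, which is the constant-power case p = 2.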

### Key Observations

- **Dashed Boxes**: Highlight two distinct processing branches:

  1. First branch: `g₁ → ΔL₁ → Δt₁`

  2. Last branch: `gₙ → ΔLₙ → Δtₙ`

- **Subscript Notation**: Indicates variable quantity of processing elements (n ≥ 2)

- **Formula Consistency**: All compensation/matching gains use decibel-based scaling (10^(ΔL/20))

- **Unidirectional Flow**: Metadata flows left-to-right through the processing stages
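The last two blocks of a single branch (loudness matching followed by time alignment) can be illustrated as follows. This is a hedged sketch: the function name and parameters are hypothetical, the signal is assumed to be a plain list of samples, and the delay is rounded to a whole number of samples, which the diagram does not specify.

```python
def apply_gain_and_delay(samples, gain, delay_seconds, sample_rate):
    """Scale a mono signal and delay it by prepending silence.

    The delay is rounded to the nearest whole sample (an assumption);
    fractional-sample delays would need interpolation and are not covered.
    """
    if delay_seconds < 0:
        raise ValueError("delay must be non-negative")
    delay_samples = round(delay_seconds * sample_rate)
    return [0.0] * delay_samples + [gain * s for s in samples]


# Branch i: matching offset dL_i = -6 dB, delay dt_i = 2 ms at 48 kHz
gain_i = 10.0 ** (-6.0 / 20.0)
feed_i = apply_gain_and_delay([1.0, 0.5, 0.25], gain_i, 0.002, 48000)
```

Here `feed_i` starts with 96 zero samples (2 ms at 48 kHz), followed by the three scaled input samples.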

### Interpretation

This pipeline demonstrates a sophisticated spatial audio processing system that:

1. **Spatially Positions Audio Objects** through panning gains

2. **Equalizes Loudness** via compensation and normalization

3. **Synchronizes Timing** for coherent spatial rendering

The use of identical formulas in every branch suggests an architecture that scales with the number of output channels n. The exponent p of the normalization is adjustable: p = 1 keeps the sum of the gain magnitudes constant, while p = 2 keeps the sum of their squares (the power) constant. Expressing compensation and matching as level offsets in decibels is consistent with how loudness differences are usually specified. The parallel branches may indicate per-channel adaptation or specialized handling, though the diagram alone does not confirm this.
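To make the role of the exponent p concrete, consider a worked example with two equal compensated gains, $g'_1 = g'_2 = 1$ (values chosen purely for illustration). The normalization factor $1 / \left(\sum_j |g'_j|^p\right)^{1/p}$ then evaluates to:

$$
\begin{aligned}
p = 1:&\quad \frac{1}{1 + 1} = 0.5 \quad (\approx -6.02\ \text{dB}) \\
p = 2:&\quad \frac{1}{\sqrt{1^2 + 1^2}} = \frac{1}{\sqrt{2}} \approx 0.707 \quad (\approx -3.01\ \text{dB})
\end{aligned}
$$

A larger p therefore attenuates each of the two channels less for the same pair of input gains.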