
## Diagram: LLM Response Flow

### Overview

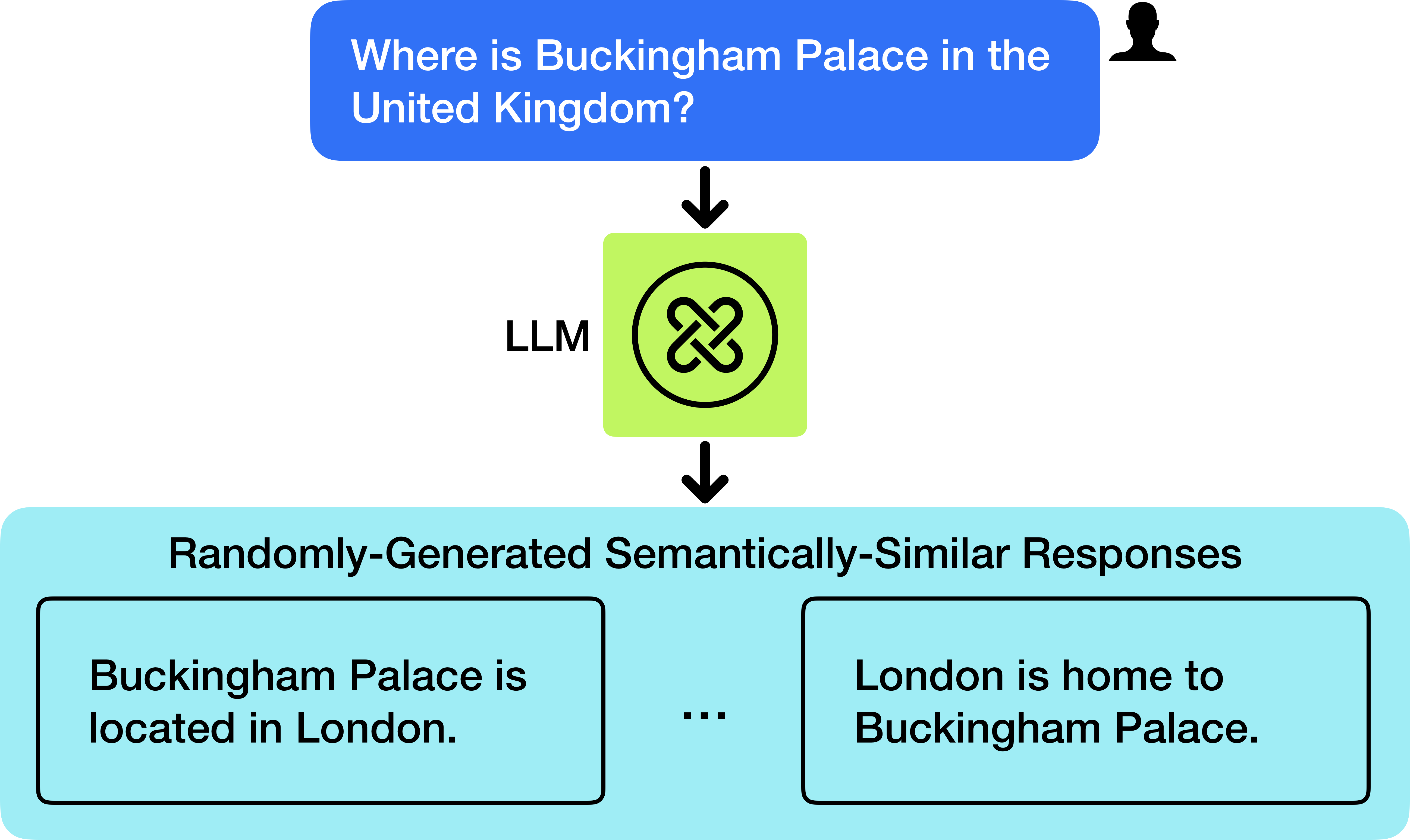

This diagram illustrates the process of a Large Language Model (LLM) responding to a user query. It depicts the input query, the LLM processing, and the generation of multiple semantically similar responses. The diagram is visually structured as a flow chart with directional arrows indicating the sequence of events.

### Components

The diagram consists of three main components:

1. **Input Query:** A light blue rounded rectangle at the top, containing the text "Where is Buckingham Palace in the United Kingdom?". A small grey silhouette of a person is positioned to the right of the query.

2. **LLM Processing:** A central circular element labeled "LLM" with a stylized green icon resembling intertwined loops.

3. **Output Responses:** A light green rounded rectangle at the bottom, labeled "Randomly-Generated Semantically-Similar Responses". This rectangle contains two example responses: "Buckingham Palace is located in London." and "London is home to Buckingham Palace." with an ellipsis ("...") indicating that more responses exist.

Arrows indicate the flow of information: from the input query to the LLM, and from the LLM to the output responses.
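The flow shown by the arrows can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the diagram names no model or API, so `toy_llm`, `sample_responses`, and the `PARAPHRASES` table are hypothetical stand-ins that pick at random between the two example responses shown in the figure.

```python
import random

# Hypothetical stand-in for the LLM: the diagram does not specify a model or API.
# The only data used here is the query and the two example responses in the figure.
PARAPHRASES = {
    "Where is Buckingham Palace in the United Kingdom?": [
        "Buckingham Palace is located in London.",
        "London is home to Buckingham Palace.",
    ],
}


def toy_llm(query: str, rng: random.Random) -> str:
    """Return one randomly chosen phrasing of the answer to `query`."""
    return rng.choice(PARAPHRASES[query])


def sample_responses(query: str, n: int, seed: int = 0) -> list[str]:
    """Query -> LLM -> n responses, mirroring the arrows in the diagram."""
    rng = random.Random(seed)  # seeded so a run can be reproduced
    return [toy_llm(query, rng) for _ in range(n)]


if __name__ == "__main__":
    query = "Where is Buckingham Palace in the United Kingdom?"
    for response in sample_responses(query, n=3):
        print(response)
```

Each call to `toy_llm` may return a different phrasing, which is the behavior the "Randomly-Generated" label points to; a real model would generate text rather than choose from a fixed table.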

### Content Details

* **Input Query:** The query is "Where is Buckingham Palace in the United Kingdom?".

* **LLM:** The LLM is represented by the label "LLM" and a green icon. The icon appears to be a stylized representation of neural network connections.

* **Output Responses:** Two example responses are provided:

  * "Buckingham Palace is located in London."

  * "London is home to Buckingham Palace."

The ellipsis ("...") suggests that the LLM can generate a variety of similar responses.

### Key Observations

The diagram highlights that an LLM can produce multiple semantically similar responses to a single input query. The wording varies from one response to the next (the label calls them "randomly generated"), while the underlying meaning stays the same: both examples place Buckingham Palace in London. The diagram does not provide any quantitative data or performance metrics.
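As a rough illustration of "different wording, same meaning", the two example responses can be compared by the content words they share. This is a sketch under loud assumptions: word overlap (Jaccard similarity) is only a crude proxy for semantic similarity, and the `STOPWORDS` list, `content_words`, and `word_overlap` are hypothetical helpers introduced here, not anything the diagram specifies.

```python
import re

# Assumption: a tiny hand-picked stopword list, chosen only for this example.
STOPWORDS = {"is", "in", "to", "the", "a", "of"}


def content_words(text: str) -> set[str]:
    """Lowercase the text and keep alphabetic words that are not stopwords."""
    return {w for w in re.findall(r"[a-z]+", text.lower()) if w not in STOPWORDS}


def word_overlap(a: str, b: str) -> float:
    """Jaccard similarity of the two texts' content-word sets (0.0 to 1.0)."""
    words_a, words_b = content_words(a), content_words(b)
    if not words_a and not words_b:
        return 1.0  # two empty texts are treated as identical
    return len(words_a & words_b) / len(words_a | words_b)


first = "Buckingham Palace is located in London."
second = "London is home to Buckingham Palace."
print(word_overlap(first, second))  # 3 shared words out of 5 distinct ones
```

The two responses share "buckingham", "palace", and "london" and differ only in "located" versus "home". A measure like this would miss paraphrases that use entirely different vocabulary, so it should be read as an illustration rather than a real semantic comparison.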

### Interpretation

The diagram demonstrates a core behavior of LLMs: a single input can yield diverse outputs. The "Randomly-Generated Semantically-Similar Responses" label suggests that the LLM does not return one deterministic answer but samples from a range of formulations that convey the same information. In the example, the LLM interprets the user's question and produces a relevant, coherent answer, and the variation between responses lies in the phrasing rather than in the *meaning*.

The diagram is a conceptual illustration. It provides no information about the LLM's architecture, training data, or performance characteristics.