## Line Chart: ARC-C Accuracy vs Training Data Percentage

### Overview

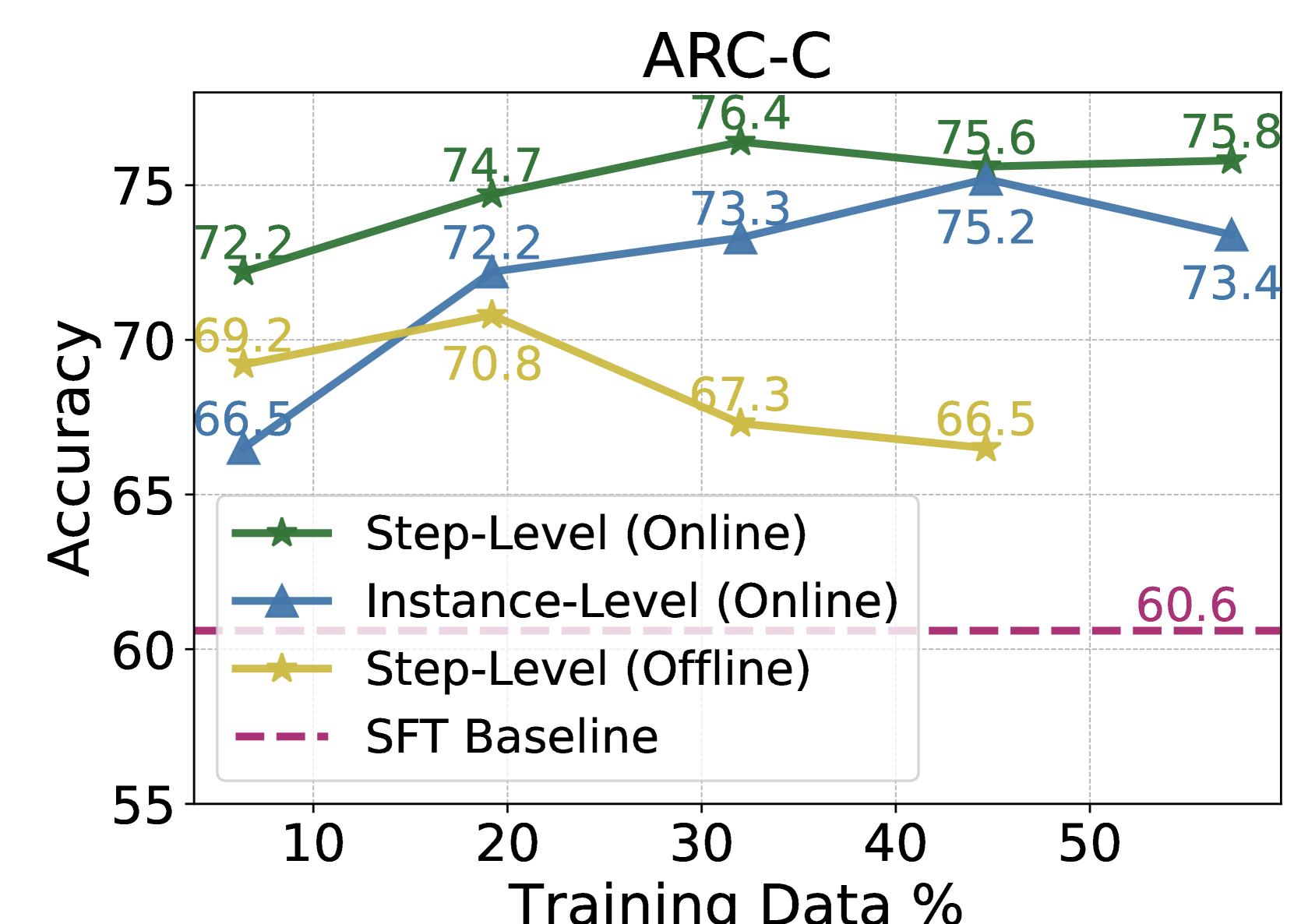

The chart compares the ARC-C accuracy of four training approaches: Step-Level (Online), Instance-Level (Online), Step-Level (Offline), and an SFT Baseline. Training data percentage (10-50%) is on the x-axis, and accuracy is on the y-axis, with ticks labeled from 55 to 75.

### Components/Axes

- **X-axis**: Training Data % (10, 20, 30, 40, 50)

- **Y-axis**: Accuracy (55-75, increments of 5)

- **Legend**: Located at bottom-left, with four entries:

  - Green line with stars: Step-Level (Online)

  - Blue line with triangles: Instance-Level (Online)

  - Yellow line with stars: Step-Level (Offline)

  - Purple dashed line: SFT Baseline

- **Title**: "ARC-C" at top-center

### Detailed Analysis

1. **Step-Level (Online)** (Green):

- Starts at 72.2% accuracy at 10% training data

- Peaks at 76.4% at 30% training data

- Slight decline to 75.8% at 50% training data

- Trend: Initial sharp increase followed by stabilization

2. **Instance-Level (Online)** (Blue):

- Begins at 66.5% at 10% training data

- Rises to 73.3% at 30% training data

- Peaks at 75.2% at 40% training data

- Drops to 73.4% at 50% training data

- Trend: Gradual improvement then decline

3. **Step-Level (Offline)** (Yellow):

- Starts at 69.2% at 10% training data

- Peaks at 70.8% at 20% training data

- Declines to 66.5% at 50% training data

- Trend: Early peak followed by steady decline

4. **SFT Baseline** (Purple dashed):

- Constant at 60.6% across all training data percentages

- Trend: Flat line with no variation

### Key Observations

- Step-Level (Online) consistently outperforms all other methods

- Instance-Level (Online) shows the largest gain: 8.7 points (66.5% to 75.2%) between 10% and 40% training data

- Step-Level (Offline) trails its online counterpart at both shared data points, by 3.0 points at 10% training data and by 9.3 points at 50%

- SFT Baseline remains the lowest-performing method throughout

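- No method improves beyond 40% training data: Step-Level (Online) peaks at 30%, Instance-Level (Online) at 40%, and Step-Level (Offline) at 20%, while the SFT Baseline is flat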
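
The improvements described above can be checked with a few lines of arithmetic. The following is a minimal sketch that uses only the values listed in the Detailed Analysis; the names `reported` and `gain_to_peak` are illustrative, and points not listed in the text are left out rather than interpolated.

```python
# Accuracy (%) by training data %, copied from the Detailed Analysis.
# Only listed points are included; nothing is interpolated.
reported = {
    "Step-Level (Online)": {10: 72.2, 30: 76.4, 50: 75.8},
    "Instance-Level (Online)": {10: 66.5, 30: 73.3, 40: 75.2, 50: 73.4},
    "Step-Level (Offline)": {10: 69.2, 20: 70.8, 50: 66.5},
}


def gain_to_peak(points):
    """Return (peak training data %, gain in points from the 10% value)."""
    peak_pct = max(points, key=points.get)
    return peak_pct, round(points[peak_pct] - points[10], 1)


for method, points in reported.items():
    peak_pct, gain = gain_to_peak(points)
    print(f"{method}: +{gain} points, peak at {peak_pct}% training data")
```

With these values, Instance-Level (Online) has the largest gain (8.7 points), followed by Step-Level (Online) (4.2 points) and Step-Level (Offline) (1.6 points).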

### Interpretation

The data suggests that step-level online training is the strongest approach: it leads at every listed training data percentage and reaches the highest accuracy (76.4%) at 30% training data. Online training does not dominate uniformly, however. At 10% training data, Instance-Level (Online) (66.5%) trails Step-Level (Offline) (69.2%), and it overtakes the offline method only as more data is added. Instance-Level (Online) shows the largest gain (from 66.5% to 75.2% between 10% and 40%) but drops to 73.4% at 50%, so its improvement does not continue across the full range. Step-Level (Offline) declines after its 20% peak and ends at 66.5%, below its starting point, which points to the importance of online training dynamics. The SFT Baseline is flat at 60.6% and serves as a reference level that every other method exceeds at all listed points. Because no method improves beyond 40% training data, the additional data in this range yields no accuracy gain.
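
To compare the methods on equal footing, one option is to rank them at the training data percentages where all three trained curves have a listed value and to report each margin over the SFT Baseline. This is a minimal sketch under that assumption; `reported`, `SFT_BASELINE`, and `ranking_at` are illustrative names, and the values come from the Detailed Analysis.

```python
# Accuracy (%) by training data %, copied from the Detailed Analysis.
reported = {
    "Step-Level (Online)": {10: 72.2, 30: 76.4, 50: 75.8},
    "Instance-Level (Online)": {10: 66.5, 30: 73.3, 40: 75.2, 50: 73.4},
    "Step-Level (Offline)": {10: 69.2, 20: 70.8, 50: 66.5},
}
SFT_BASELINE = 60.6  # constant across all training data percentages


def ranking_at(pct):
    """Return [(method, margin over baseline in points)] at pct, best first."""
    rows = [
        (method, round(points[pct] - SFT_BASELINE, 1))
        for method, points in reported.items()
        if pct in points
    ]
    return sorted(rows, key=lambda row: row[1], reverse=True)


# Percentages for which every trained method has a listed value.
shared = sorted(set.intersection(*(set(p) for p in reported.values())))

for pct in shared:
    print(f"{pct}% training data:")
    for method, margin in ranking_at(pct):
        print(f"  {method}: +{margin} points over SFT Baseline")
```

The shared percentages are 10% and 50%. At 10% the order is Step-Level (Online), Step-Level (Offline), Instance-Level (Online); at 50% the two online methods take the top two places.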