## Flowchart: Technical Process Architecture

### Overview

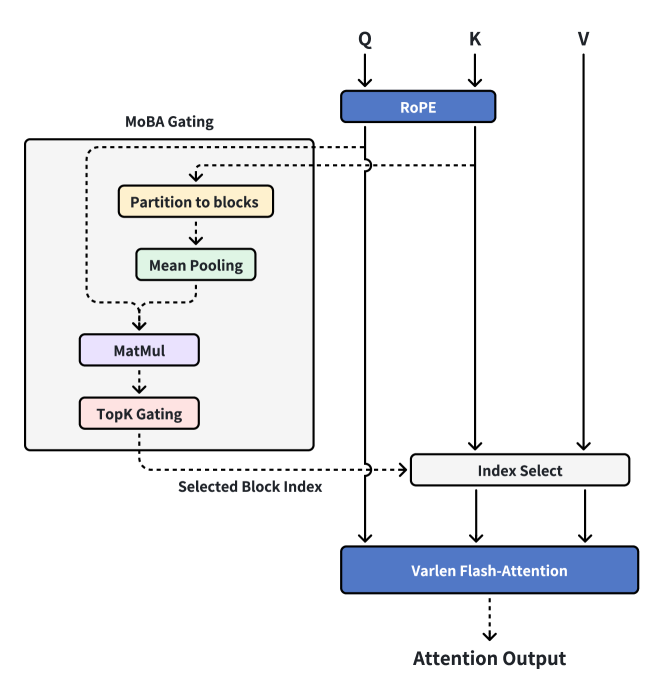

The diagram shows a multi-stage attention pipeline: a MoBA Gating stage picks blocks, an Index Select stage gathers them, and a Flash-Attention stage computes the output. The flow runs left to right and top to bottom, with arrows connecting the components.

### Components/Axes

1. **Main Blocks**:

- **MoBA Gating** (leftmost block, light gray background)

- **RoPE** (top-center block, blue background)

- **Index Select** (center-right block, light gray background)

- **Varlen Flash-Attention** (bottom-right block, dark blue background)

- **Attention Output** (final output, dark blue arrow)

2. **Sub-Components within MoBA Gating**:

- **Partition to blocks** (yellow block)

- **Mean Pooling** (green block)

- **MatMul** (purple block)

- **Top Gating** (pink block)

3. **Arrows and Labels**:

- Dashed arrows between MoBA Gating sub-components

- Solid arrows connecting main blocks

- Explicit labels: "Selected Block Index" (between MoBA Gating and Index Select), "Attention Output" (final arrow)

### Detailed Analysis

1. **MoBA Gating Process**:

- Input is partitioned into blocks (yellow)

- Mean pooling aggregates block representations (green)

- Matrix multiplication (MatMul, purple) is applied to the pooled block representations, presumably to score each block

- Top Gating (pink) turns those scores into a selection of blocks

- Output: **Selected Block Index** (directed to Index Select)

2. **Index Selection**:

- Receives Selected Block Index from MoBA Gating

- Feeds into **Varlen Flash-Attention** (dark blue)

3. **Attention Mechanism**:

- **Varlen Flash-Attention** takes Query (Q), Key (K), and Value (V) inputs arriving via the top-center RoPE block

- Produces **Attention Output** (dark blue arrow)
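The gating steps listed above (partition, mean pooling, MatMul, Top Gating) can be sketched in plain Python. This is a minimal illustration, not the diagram's actual implementation: the diagram does not say which tensor is pooled or what the MatMul multiplies, so pooling the keys and scoring them against a single query are assumptions here, and the names `mean_pool_blocks`, `select_blocks`, `block_size`, and `top_k` are hypothetical.

```python
def mean_pool_blocks(keys, block_size):
    """Partition keys into consecutive blocks and average each block."""
    pooled = []
    for start in range(0, len(keys), block_size):
        block = keys[start:start + block_size]
        dim = len(block[0])
        pooled.append([sum(vec[d] for vec in block) / len(block) for d in range(dim)])
    return pooled


def select_blocks(query, keys, block_size, top_k):
    """Score each pooled block against the query and return the top_k block indices."""
    pooled = mean_pool_blocks(keys, block_size)
    # "MatMul" step (assumed): dot product between the query and each block mean.
    scores = [sum(q * p for q, p in zip(query, centroid)) for centroid in pooled]
    # "Top Gating" step (assumed): keep the indices of the highest-scoring blocks.
    ranked = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    return sorted(ranked[:top_k])


# Six 2-d keys forming three blocks of two keys each (toy data).
keys = [[1.0, 0.0], [1.0, 0.0], [0.0, 1.0], [0.0, 1.0], [-1.0, 0.0], [-1.0, 0.0]]
query = [1.0, 0.5]
selected = select_blocks(query, keys, block_size=2, top_k=2)
print(selected)
```

The returned list plays the role of the "Selected Block Index" label in the diagram. Details such as causal masking or per-head gating are left out of this sketch.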

### Key Observations

1. **Hierarchical Flow**:

- MoBA Gating → Index Select → Varlen Flash-Attention → Attention Output

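- The RoPE block applies rotary position embeddings to the attention inputs; RoPE conventionally rotates only queries and keys, so the V path presumably passes through unchanged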

2. **Color Coding**:

- Gating sub-components each use a distinct color (yellow, green, purple, pink)

- Attention components use cool colors (blue/dark blue)

- No explicit legend, but color coding suggests functional grouping

3. **Critical Nodes**:

- **Selected Block Index**: Acts as the hand-off between MoBA Gating and the attention stage, determining which blocks attention operates on

- **RoPE**: Positional encoding integrated early in the pipeline
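To make the role of RoPE concrete, here is a small sketch of rotary position embedding in its common interleaved-pair form. The diagram gives no details about its RoPE variant, so the pairing scheme, the `base` of 10000, and the helper names `apply_rope` and `dot` are assumptions for illustration only.

```python
import math


def apply_rope(vec, position, base=10000.0):
    """Rotate each pair (vec[i], vec[i+1]) by the angle position * base**(-i/dim)."""
    dim = len(vec)
    assert dim % 2 == 0, "RoPE needs an even dimension"
    out = []
    for i in range(0, dim, 2):
        theta = position * base ** (-i / dim)
        cos_t, sin_t = math.cos(theta), math.sin(theta)
        x, y = vec[i], vec[i + 1]
        out.extend([x * cos_t - y * sin_t, x * sin_t + y * cos_t])
    return out


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


q = [1.0, 2.0, 3.0, 4.0]
k = [0.5, -1.0, 2.0, 0.0]

# The same relative offset (3) at different absolute positions gives the same score.
score_a = dot(apply_rope(q, 5), apply_rope(k, 2))
score_b = dot(apply_rope(q, 13), apply_rope(k, 10))
print(abs(score_a - score_b) < 1e-9)
```

Because the rotation only changes the angle of each pair, the query-key score depends on the relative distance between positions rather than on their absolute values.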

### Interpretation

This architecture combines gating mechanisms with attention operations in a transformer-like framework. The MoBA Gating system appears to:

1. Process input through multiple stages (partitioning → pooling → matrix ops → gating)

2. Selectively route information via block indexing

3. Feed selected data into optimized attention (Varlen Flash-Attention)

The integration of RoPE suggests positional information is injected before attention is computed. The Flash-Attention component points to a memory-efficient attention kernel: Flash-Attention computes exact attention tile by tile, which avoids materializing the full attention score matrix and so reduces memory traffic without changing the result.
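The memory saving behind Flash-Attention rests on a streaming ("online") softmax. The sketch below shows that idea for a single query in plain Python; it is a conceptual illustration, not the fused GPU kernel, and the function names and the `tile` parameter are hypothetical.

```python
import math


def attention_reference(query, keys, values):
    """Standard softmax attention for one query: computes all scores up front."""
    scores = [sum(q * k for q, k in zip(query, key)) for key in keys]
    peak = max(scores)
    weights = [math.exp(s - peak) for s in scores]
    total = sum(weights)
    dim = len(values[0])
    return [sum(w * v[d] for w, v in zip(weights, values)) / total for d in range(dim)]


def attention_streaming(query, keys, values, tile=2):
    """Same result, visiting keys/values tile by tile with a running max and sum."""
    acc = [0.0] * len(values[0])
    running_max = float("-inf")
    running_sum = 0.0
    for start in range(0, len(keys), tile):
        tile_keys = keys[start:start + tile]
        tile_values = values[start:start + tile]
        scores = [sum(q * k for q, k in zip(query, key)) for key in tile_keys]
        new_max = max(running_max, max(scores))
        # Rescale everything accumulated under the previous running max.
        scale = math.exp(running_max - new_max)
        running_sum *= scale
        acc = [a * scale for a in acc]
        for s, v in zip(scores, tile_values):
            w = math.exp(s - new_max)
            running_sum += w
            acc = [a + w * x for a, x in zip(acc, v)]
        running_max = new_max
    return [a / running_sum for a in acc]


keys = [[1.0, 0.0], [0.0, 1.0], [2.0, -1.0], [0.5, 0.5], [-1.0, 3.0]]
values = [[1.0, 0.0], [0.0, 1.0], [2.0, 2.0], [-1.0, 4.0], [3.0, 1.0]]
query = [0.3, -0.7]
ref = attention_reference(query, keys, values)
out = attention_streaming(query, keys, values, tile=2)
print(all(abs(a - b) < 1e-9 for a, b in zip(ref, out)))
```

Only one tile of scores is held at a time, yet the output matches the reference up to floating-point rounding.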

The diagram demonstrates a multi-stage approach where:

- Early stages (MoBA Gating) focus on feature selection

- Later stages (attention) focus on contextual integration

- Positional encoding (RoPE) is preserved across stages
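The hand-off from selection to attention can be sketched as a toy example: given block indices from the gating stage, gather the matching key and value rows and run ordinary softmax attention over just those rows. The `selected` list, the block size, and the helper names are hypothetical, and the plain softmax here stands in for the optimized attention kernel named in the diagram.

```python
import math


def gather_blocks(rows, block_ids, block_size):
    """Index Select: keep only the rows that belong to the chosen blocks."""
    picked = []
    for b in block_ids:
        picked.extend(rows[b * block_size:(b + 1) * block_size])
    return picked


def softmax_attention(query, keys, values):
    """Plain softmax attention for a single query vector."""
    scores = [sum(q * k for q, k in zip(query, key)) for key in keys]
    peak = max(scores)
    weights = [math.exp(s - peak) for s in scores]
    total = sum(weights)
    return [sum(w * v[d] for w, v in zip(weights, values)) / total
            for d in range(len(values[0]))]


# Three blocks of two rows each (toy data).
keys = [[1.0, 0.0], [1.0, 0.0], [0.0, 1.0], [0.0, 1.0], [-1.0, 0.0], [-1.0, 0.0]]
values = [[10.0], [10.0], [20.0], [20.0], [30.0], [30.0]]
query = [1.0, 0.5]
selected = [0, 1]  # hypothetical output of the gating stage

sel_k = gather_blocks(keys, selected, block_size=2)
sel_v = gather_blocks(values, selected, block_size=2)
output = softmax_attention(query, sel_k, sel_v)
print(len(sel_k), output)
```

The third block never enters the attention computation, which is where the cost saving on long sequences would come from.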

This structure could represent a specialized transformer variant for tasks requiring both gating mechanisms and efficient attention computation, such as long-sequence processing or resource-constrained environments.