## Line Chart: Accuracy vs. Thinking Compute

### Overview

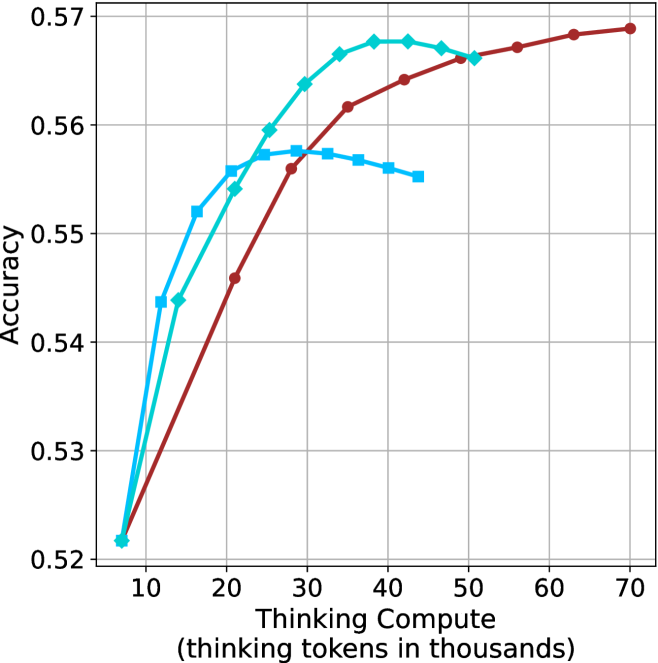

The image is a line chart plotting model accuracy against the amount of "thinking compute" allocated, measured in thousands of thinking tokens. It shows three data series, each drawn in its own color with its own marker shape, and compares how accuracy changes as each approach or model is given more thinking tokens.

### Components/Axes

* **X-Axis (Horizontal):**
    * **Label:** "Thinking Compute (thinking tokens in thousands)"
    * **Scale:** Linear, with major gridlines and labels at intervals of 10 from 10 to 70 (10, 20, 30, 40, 50, 60, 70). The leftmost data points (~7k tokens) fall slightly to the left of the first labeled tick.

* **Y-Axis (Vertical):**
    * **Label:** "Accuracy"
    * **Scale:** Linear scale from 0.52 to 0.57, with major gridlines and labels at intervals of 0.01 (0.52, 0.53, 0.54, 0.55, 0.56, 0.57).

* **Data Series (Lines):**
    * **Series 1 (Cyan Line with Diamond Markers):** Starts at the lowest compute point, rises steeply, and peaks near 40k tokens before dipping slightly.
    * **Series 2 (Blue Line with Square Markers):** Starts at the same point and rises about as quickly at first, but plateaus earlier than the others.
    * **Series 3 (Red/Brown Line with Circle Markers):** Starts at the same low point but follows a steadier, more gradual upward trajectory that extends to the highest compute shown.

* **Legend:** There is no explicit legend box within the chart area. The series are differentiated solely by line color and marker shape.

### Detailed Analysis

**Data Series 1: Cyan Line (Diamond Markers)**

* **Trend:** Rises rapidly at low compute with a gradually flattening slope, peaks at about 40k tokens, then declines slightly over the last two points shown for this series.

* **Approximate Data Points:**
    * (7k tokens, ~0.522 accuracy)
    * (12k tokens, ~0.544)
    * (18k tokens, ~0.554)
    * (25k tokens, ~0.560)
    * (30k tokens, ~0.564)
    * (35k tokens, ~0.567)
    * (40k tokens, ~0.568) **[Peak]**
    * (45k tokens, ~0.567)
    * (50k tokens, ~0.566)

**Data Series 2: Blue Line (Square Markers)**

* **Trend:** Rises very steeply at the lowest compute levels, flattens into a plateau from about 20k tokens, peaks near 30k, and then drifts down slightly as compute increases further.

* **Approximate Data Points:**
    * (7k tokens, ~0.522 accuracy)
    * (12k tokens, ~0.544)
    * (16k tokens, ~0.552)
    * (20k tokens, ~0.556) **[Plateau Start]**
    * (25k tokens, ~0.557)
    * (30k tokens, ~0.558) **[Peak]**
    * (35k tokens, ~0.557)
    * (40k tokens, ~0.556)
    * (45k tokens, ~0.555)

**Data Series 3: Red/Brown Line (Circle Markers)**

* **Trend:** Increases monotonically across the entire range of compute. Its growth is less steep initially but sustains longer: it overtakes the blue series between 30k and 35k tokens, matches the cyan series at about 50k, and ends as the highest point on the chart.

* **Approximate Data Points:**
    * (7k tokens, ~0.522 accuracy)
    * (20k tokens, ~0.546)
    * (28k tokens, ~0.556)
    * (35k tokens, ~0.561)
    * (42k tokens, ~0.564)
    * (50k tokens, ~0.566)
    * (58k tokens, ~0.567)
    * (65k tokens, ~0.568)
    * (70k tokens, ~0.569) **[Highest Point on Chart]**
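The readings above can be captured as plain data to check the stated peaks and trends. The following is a minimal sketch that takes the approximate values at face value; the names `SERIES`, `peak`, and `segment_slopes` are illustrative and do not come from the chart.

```python
# Approximate (thinking tokens in thousands, accuracy) readings transcribed
# from the lists above. They are visual estimates, not exact measurements.
SERIES = {
    "cyan": [(7, 0.522), (12, 0.544), (18, 0.554), (25, 0.560), (30, 0.564),
             (35, 0.567), (40, 0.568), (45, 0.567), (50, 0.566)],
    "blue": [(7, 0.522), (12, 0.544), (16, 0.552), (20, 0.556), (25, 0.557),
             (30, 0.558), (35, 0.557), (40, 0.556), (45, 0.555)],
    "red": [(7, 0.522), (20, 0.546), (28, 0.556), (35, 0.561), (42, 0.564),
            (50, 0.566), (58, 0.567), (65, 0.568), (70, 0.569)],
}


def peak(points):
    """Return the (tokens_k, accuracy) pair with the highest accuracy."""
    return max(points, key=lambda p: p[1])


def segment_slopes(points):
    """Accuracy gained per extra 1k thinking tokens on each line segment."""
    return [(y1 - y0) / (x1 - x0)
            for (x0, y0), (x1, y1) in zip(points, points[1:])]


for name, pts in SERIES.items():
    tokens_k, acc = peak(pts)
    slopes = segment_slopes(pts)
    print(f"{name}: peak {acc:.3f} at {tokens_k}k tokens; "
          f"first slope {slopes[0]:+.4f}/k, last slope {slopes[-1]:+.4f}/k")
```

A negative final slope (cyan, blue) corresponds to the slight decline described in the trends, while the red series keeps a small positive slope on every segment.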

### Key Observations

1. **Shared Starting Point:** All three methods start at nearly the same accuracy (~0.522) at the lowest compute shown (~7k tokens).

2. **Diverging Scaling Behavior:** The methods scale differently. The cyan and blue methods show strong early returns, then peak (blue near 30k tokens, cyan near 40k) and decline slightly. The red method rises more slowly but keeps improving through 70k tokens.

3. **Crossover Points:** The red line overtakes the blue line between 30k and 35k thinking tokens. It trails the cyan line until approximately 50k tokens, where the two meet (~0.566) and the cyan series ends. The red line then continues to rise, reaching the highest accuracy on the chart (~0.569) at 70k tokens.

4. **Performance Ceiling:** The cyan line suggests a performance ceiling near ~0.568, with slightly negative returns beyond ~40k tokens for that method. The blue line hits a lower ceiling (~0.558) earlier, around 30k tokens.
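The crossover observations can be checked by linearly interpolating between the approximate data points. This is a rough sketch only: the chart does not state that the lines are piecewise linear, and the helper names `interpolate` and `first_lead`, the 0.5k step, and the `1e-9` tolerance are all assumptions made for illustration.

```python
# Approximate readings from the Detailed Analysis section (tokens in thousands).
CYAN = [(7, 0.522), (12, 0.544), (18, 0.554), (25, 0.560), (30, 0.564),
        (35, 0.567), (40, 0.568), (45, 0.567), (50, 0.566)]
BLUE = [(7, 0.522), (12, 0.544), (16, 0.552), (20, 0.556), (25, 0.557),
        (30, 0.558), (35, 0.557), (40, 0.556), (45, 0.555)]
RED = [(7, 0.522), (20, 0.546), (28, 0.556), (35, 0.561), (42, 0.564),
       (50, 0.566), (58, 0.567), (65, 0.568), (70, 0.569)]


def interpolate(points, x):
    """Linearly interpolate accuracy at x; return None outside the series."""
    for (x0, y0), (x1, y1) in zip(points, points[1:]):
        if x0 <= x <= x1:
            return y0 + (y1 - y0) * (x - x0) / (x1 - x0)
    return None


def first_lead(challenger, incumbent, start, stop, step=0.5, tol=1e-9):
    """First x in [start, stop] where challenger exceeds incumbent by > tol."""
    x = start
    while x <= stop:
        a, b = interpolate(challenger, x), interpolate(incumbent, x)
        if a is not None and b is not None and a > b + tol:
            return x
        x += step
    return None


print("red leads blue from (k tokens):", first_lead(RED, BLUE, 7, 45))
print("red leads cyan from (k tokens):", first_lead(RED, CYAN, 7, 50))
print("gap at 50k (red - cyan):", interpolate(RED, 50) - interpolate(CYAN, 50))
```

Under these assumptions, red pulls ahead of blue at roughly 31k tokens, while red never strictly leads cyan inside their shared range: the two only tie at 50k, the last cyan point.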

### Interpretation

This chart shows how accuracy responds to additional thinking compute (thinking tokens). The data suggests that the approaches behind the three lines (the chart does not say whether they are model architectures, training methods, or prompting strategies) have different accuracy-versus-compute profiles, so the best choice depends on the compute budget. The differences are modest in absolute terms: every reading falls between ~0.52 and ~0.57.

* The **blue method** gains accuracy quickly at low compute but cannot use additional tokens effectively. Up to roughly 20k tokens it is about level with the cyan method, so it suits strict compute budgets, though these readings do not make it a clear winner there.

* The **cyan method** offers the best accuracy for a mid-range compute budget (roughly 25k-45k tokens), peaking near 40k. The slight decline beyond the peak suggests that extra thinking tokens stop helping or begin to hurt this method, although the chart alone cannot establish the cause.

* The **red method** shows the most sustained scaling. It is the least accurate of the three below about 30k tokens, but it keeps improving through 70k, where it reaches the highest accuracy on the chart (~0.569). That is only about 0.001 above the cyan peak at 40k, so the red method is the better choice only when the last increment of accuracy matters more than compute cost.

The chart provides a visual argument for investigating why certain approaches plateau while others scale. It implies that simply adding more compute is not a universal solution; the underlying method must be capable of using that compute productively. The crossover points matter for decision-making because they indicate where the more scalable (red) method starts to pay off: it passes blue between 30k and 35k tokens, but it exceeds the cyan peak (~0.568 at 40k) only at about 70k tokens.
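The budget-dependent choice described above can be made concrete with a small lookup over the approximate readings. This is a sketch under stated assumptions: it considers only the listed data points (no interpolation), treats the visual estimates as exact, and breaks accuracy ties in favor of fewer tokens; `best_under_budget` is a hypothetical helper name.

```python
# Approximate (thinking tokens in thousands, accuracy) readings from the chart.
SERIES = {
    "cyan": [(7, 0.522), (12, 0.544), (18, 0.554), (25, 0.560), (30, 0.564),
             (35, 0.567), (40, 0.568), (45, 0.567), (50, 0.566)],
    "blue": [(7, 0.522), (12, 0.544), (16, 0.552), (20, 0.556), (25, 0.557),
             (30, 0.558), (35, 0.557), (40, 0.556), (45, 0.555)],
    "red": [(7, 0.522), (20, 0.546), (28, 0.556), (35, 0.561), (42, 0.564),
            (50, 0.566), (58, 0.567), (65, 0.568), (70, 0.569)],
}


def best_under_budget(series, budget_k):
    """Pick the listed point with the highest accuracy at or below budget_k.

    Returns (series_name, tokens_k, accuracy), or None if no point fits.
    Accuracy ties go to the point that uses fewer tokens.
    """
    candidates = [
        (acc, -tokens, name)
        for name, points in series.items()
        for tokens, acc in points
        if tokens <= budget_k
    ]
    if not candidates:
        return None
    acc, neg_tokens, name = max(candidates)
    return name, -neg_tokens, acc


for budget in (20, 40, 65, 70):
    print(budget, best_under_budget(SERIES, budget))
```

With these readings the lookup selects blue at a 20k budget, cyan at 40k and still at 65k (its 40k point ties red's 65k point while using fewer tokens), and red only once the full 70k is available.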