
## Diagram: Attacks on Federated Learning and Transfer Learning

### Overview

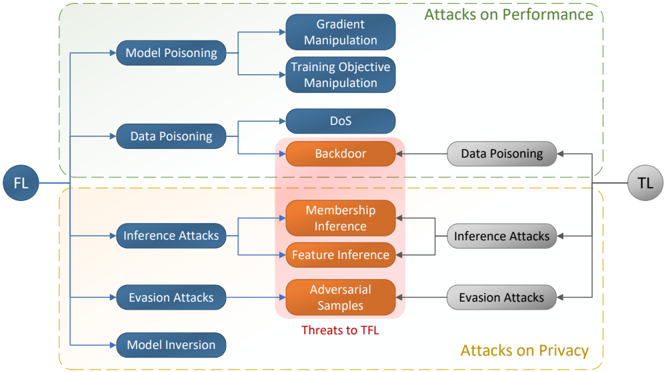

This diagram illustrates attack vectors targeting Federated Learning (FL) and Transfer Learning (TL) systems. "FL" is placed on the left and "TL" on the right, with potential attacks flowing between them. Attacks are categorized into three main groups: Attacks on Performance (top), Attacks on Privacy (bottom), and Threats to TFL (center). The diagram uses a flowchart-like structure with boxes representing attack types and arrows indicating potential attack paths.

### Components/Axes

The diagram consists of the following components:

* **FL:** Located in the top-left corner, representing Federated Learning.

* **TL:** Located in the top-right corner, representing Transfer Learning.

* **Attacks on Performance:** A header at the top, indicating the category of attacks affecting model performance.

* **Attacks on Privacy:** A header at the bottom, indicating the category of attacks affecting data privacy.

* **Threats to TFL:** A header in the center, indicating the category of attacks specific to Transfer Federated Learning.

* **Attack Boxes:** Rectangular boxes containing the names of specific attack types. These are color-coded: blue for attacks on performance and privacy, orange for threats to TFL, and pink for attacks that bridge both performance and privacy.

* **Arrows:** Lines connecting the attack boxes, indicating the flow or relationship between attacks.

### Detailed Analysis

The diagram details the following attacks:

**Attacks on Performance (Top)**

* **Model Poisoning:** Connected to Gradient Manipulation and Training Objective Manipulation.

* **Gradient Manipulation:** Receives input from Model Poisoning.

* **Training Objective Manipulation:** Receives input from Model Poisoning.

* **Data Poisoning:** Connected to DoS and Backdoor.

* **DoS (Denial of Service):** Receives input from Data Poisoning.

* **Backdoor:** Receives input from Data Poisoning, and also connects to Data Poisoning on the TL side.

**Attacks on Privacy (Bottom)**

* **Inference Attacks:** Connected to Membership Inference and Feature Inference.

* **Membership Inference:** Receives input from Inference Attacks, and also connects to Feature Inference.

* **Feature Inference:** Receives input from Inference Attacks and Membership Inference.

* **Evasion Attacks:** Connected to Adversarial Samples.

* **Adversarial Samples:** Receives input from Evasion Attacks, and also connects to Evasion Attacks on the TL side.

* **Model Inversion:** No direct connections shown.

**Threats to TFL (Center)**

* **Membership Inference:** Bridging attacks on performance and privacy.

* **Feature Inference:** Bridging attacks on performance and privacy.

* **Adversarial Samples:** Bridging attacks on performance and privacy.

The diagram shows a flow from FL to TL, with attacks originating on the FL side potentially impacting the TL side. Data Poisoning and Evasion Attacks on the FL side reach their TL-side counterparts through Backdoor and Adversarial Samples, respectively. The pink boxes (Membership Inference, Feature Inference, and Adversarial Samples) represent attacks that simultaneously threaten both performance and privacy.
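The connections listed above can be written down as a small directed graph, which makes it easier to check which attacks follow from a given starting point. The sketch below is only an illustration of the relationships as described in this section, not something taken from the diagram's source. The names `EDGES` and `reachable` and the `(TL)` suffix for TL-side counterparts are assumptions introduced here.

```python
from collections import deque

# Directed edges as listed in the description above.
# Assumption: the "(TL)" suffix is a naming convention invented for this
# sketch to mark the TL-side counterpart of a box.
EDGES = {
    "Model Poisoning": ["Gradient Manipulation", "Training Objective Manipulation"],
    "Data Poisoning": ["DoS", "Backdoor"],
    "Backdoor": ["Data Poisoning (TL)"],
    "Inference Attacks": ["Membership Inference", "Feature Inference"],
    "Membership Inference": ["Feature Inference"],
    "Evasion Attacks": ["Adversarial Samples"],
    "Adversarial Samples": ["Evasion Attacks (TL)"],
    "Model Inversion": [],  # no connections shown
}


def reachable(start, edges=EDGES):
    """Return every box reachable from `start` by following arrows (breadth-first)."""
    seen = set()
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for nxt in edges.get(node, []):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return seen
```

Under these assumptions, `reachable("Data Poisoning")` includes the TL-side Data Poisoning box via Backdoor, while `reachable("Model Inversion")` is empty, matching the description that it has no connections.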

### Key Observations

* The diagram highlights the interconnectedness of different attack types. For example, Model Poisoning can lead to both Gradient Manipulation and Training Objective Manipulation.

* The central "Threats to TFL" section emphasizes the unique vulnerabilities introduced when combining Federated Learning and Transfer Learning.

* The color-coding effectively distinguishes between different categories of attacks.

* The diagram does not provide any quantitative data or specific metrics related to the severity or likelihood of these attacks.

### Interpretation

The diagram maps the security landscape of Federated Learning and Transfer Learning, showing that these systems face attacks on both performance and privacy. The flow from FL to TL suggests that vulnerabilities in the federated learning process can propagate to the transfer learning stage, and the "Threats to TFL" category points to the need for specialized security measures when the two techniques are combined. The diagram is a conceptual, high-level view of the attack surface: it does not describe the mechanisms or implementations of the individual attacks, and is best read as a starting point for researchers and practitioners developing mitigation strategies.