## Diagram: Transformer Circuits Knowledge Decomposition

### Overview

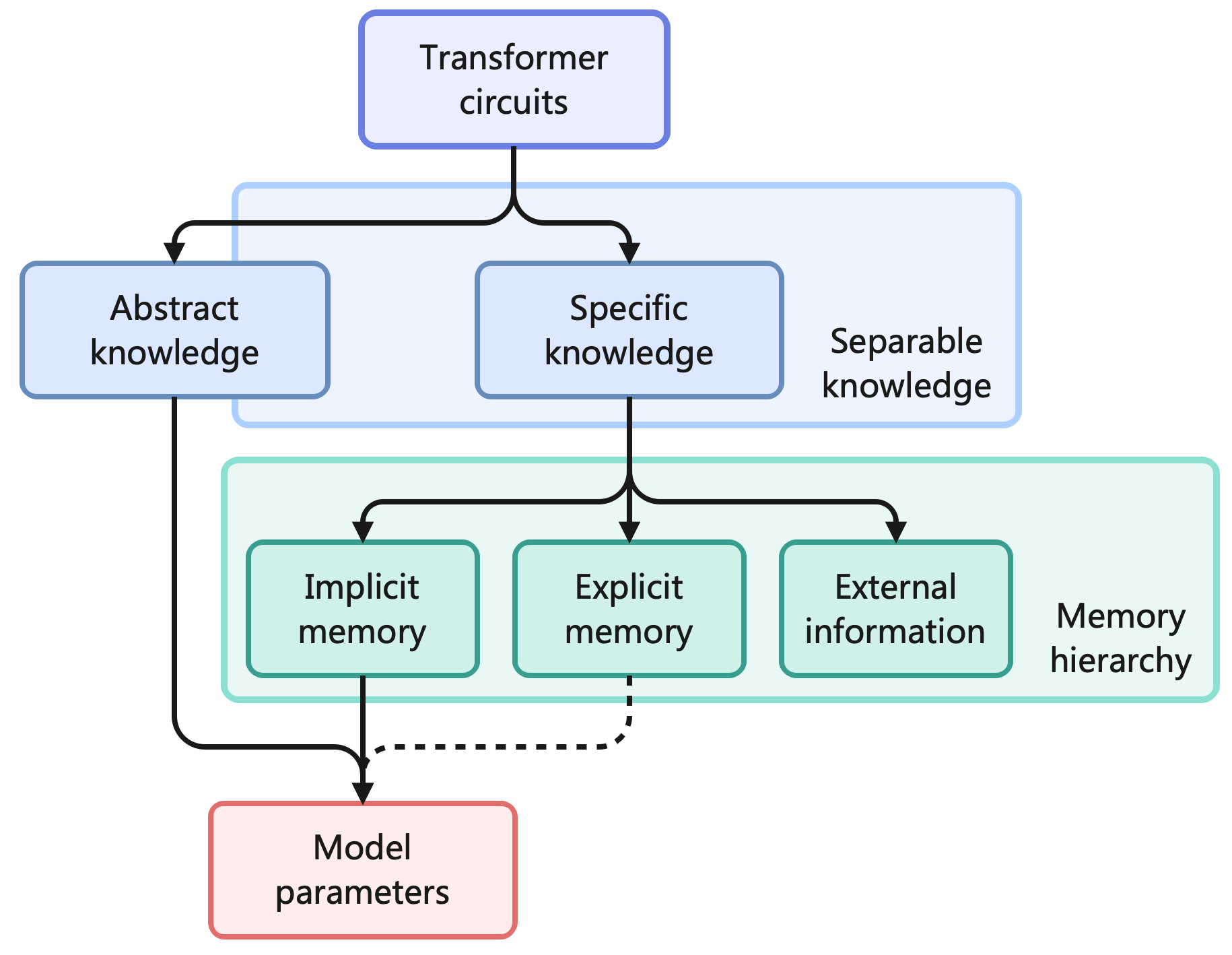

This image is a hierarchical flowchart illustrating the decomposition of knowledge within "Transformer circuits." It maps how different types of knowledge and memory relate to the foundational "Model parameters." The diagram uses color-coded boxes and directional arrows to show relationships and flow.

### Components/Axes

The diagram is structured as a top-down flowchart with the following labeled components, grouped by colored containers:

1. **Top-Level Component (Blue Box, Top Center):**

   * Label: `Transformer circuits`

2. **First-Level Decomposition (Light Blue Container, Middle):**

   * This container is labeled on its right side as `Separable knowledge`.

   * It contains two primary boxes:

     * Left Box: `Abstract knowledge`

     * Right Box: `Specific knowledge`

3. **Second-Level Decomposition (Light Green Container, Lower Middle):**

   * This container is labeled on its right side as `Memory hierarchy`.

   * It contains three boxes, all stemming from `Specific knowledge`:

     * Left Box: `Implicit memory`

     * Center Box: `Explicit memory`

     * Right Box: `External information`

4. **Foundational Component (Red Box, Bottom Center):**

   * Label: `Model parameters`

### Detailed Analysis

**Flow and Connections (Arrows):**

* A solid black arrow originates from `Transformer circuits` and splits, pointing to both `Abstract knowledge` and `Specific knowledge`.

* A solid black arrow flows directly from `Abstract knowledge` down to `Model parameters`.

* A solid black arrow flows from `Specific knowledge` down to the `Memory hierarchy` container, where it splits into three arrows pointing to `Implicit memory`, `Explicit memory`, and `External information`.

* A solid black arrow flows from `Implicit memory` down to `Model parameters`.

* A **dashed** black arrow flows from `Explicit memory` down to `Model parameters`. This dashed line likely indicates a different, weaker, or more indirect relationship compared to the solid lines.
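The arrow list above can be written down as a small directed graph, which makes it easy to check which boxes feed into others. The following is a minimal sketch, not part of the original figure: the names `EDGES`, `build_adjacency`, and `reachable_from` are hypothetical, and it assumes that the arrow entering the `Memory hierarchy` container can be modeled as three separate edges from `Specific knowledge`.

```python
from collections import deque

# Edges transcribed from the arrow list: (source, target, line style).
# Assumption: the arrow into the "Memory hierarchy" container is modeled
# as three edges from "Specific knowledge", one per memory box.
EDGES = [
    ("Transformer circuits", "Abstract knowledge", "solid"),
    ("Transformer circuits", "Specific knowledge", "solid"),
    ("Abstract knowledge", "Model parameters", "solid"),
    ("Specific knowledge", "Implicit memory", "solid"),
    ("Specific knowledge", "Explicit memory", "solid"),
    ("Specific knowledge", "External information", "solid"),
    ("Implicit memory", "Model parameters", "solid"),
    ("Explicit memory", "Model parameters", "dashed"),
]


def build_adjacency(edges):
    """Map every node to the list of nodes its outgoing arrows point to."""
    adjacency = {}
    for source, target, _style in edges:
        adjacency.setdefault(source, []).append(target)
        adjacency.setdefault(target, [])  # make sure sinks appear as keys
    return adjacency


def reachable_from(adjacency, start):
    """Breadth-first search: all nodes reachable from `start`, inclusive."""
    seen = {start}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for neighbour in adjacency[node]:
            if neighbour not in seen:
                seen.add(neighbour)
                queue.append(neighbour)
    return seen


ADJACENCY = build_adjacency(EDGES)

# Nodes with no outgoing arrows are the terminal points of the diagram.
SINKS = sorted(node for node, targets in ADJACENCY.items() if not targets)
print(SINKS)
```

Under these assumptions the graph has two terminal nodes, `External information` and `Model parameters`, because the arrow list gives no outgoing arrow for `External information`.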

**Spatial Grounding & Color Coding:**

* The `Separable knowledge` container (light blue) is positioned in the upper-middle section, encompassing the two knowledge types.

* The `Memory hierarchy` container (light green) is positioned directly below the `Specific knowledge` box, in the lower-middle section.

* The `Model parameters` box (light red/pink) is the terminal point at the bottom center of the diagram.

* The boxes inside the two containers (`Abstract knowledge`, `Specific knowledge`, `Implicit memory`, `Explicit memory`, `External information`) have a darker blue or green border matching their container's theme. `Model parameters` instead has a red border.

### Key Observations

1. **Hierarchical Decomposition:** The diagram presents a clear hierarchy: Transformer circuits are broken down into separable knowledge types, one of which (Specific knowledge) is further broken down into a memory hierarchy.

2. **Direct vs. Indirect Pathways:** `Abstract knowledge` has a direct, single-step connection to `Model parameters`. In contrast, `Specific knowledge` reaches `Model parameters` only indirectly, through `Implicit memory` (solid arrow) and `Explicit memory` (dashed arrow). The arrow list shows no connection from `External information` to `Model parameters`.

3. **Dashed Line Significance:** The dashed arrow from `Explicit memory` to `Model parameters` is a notable visual distinction, suggesting this pathway may be less permanent, more computational, or functionally different from the solid-line pathways (e.g., from `Implicit memory`).

4. **Grouping Semantics:** The labels `Separable knowledge` and `Memory hierarchy` are not boxes themselves but annotations that define the conceptual grouping of the components they are placed next to.
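The direct and indirect pathways noted above can be enumerated mechanically. The sketch below is illustrative only: `EDGE_STYLES`, `all_paths`, and `uses_dashed_edge` are hypothetical names, and the edge set is transcribed from the arrow list in the Detailed Analysis section, again assuming the container arrow fans out into three edges from `Specific knowledge`.

```python
# Edge styles keyed by (source, target), transcribed from the arrow list.
# Assumption: the arrow into the "Memory hierarchy" container is modeled
# as three edges from "Specific knowledge".
EDGE_STYLES = {
    ("Transformer circuits", "Abstract knowledge"): "solid",
    ("Transformer circuits", "Specific knowledge"): "solid",
    ("Abstract knowledge", "Model parameters"): "solid",
    ("Specific knowledge", "Implicit memory"): "solid",
    ("Specific knowledge", "Explicit memory"): "solid",
    ("Specific knowledge", "External information"): "solid",
    ("Implicit memory", "Model parameters"): "solid",
    ("Explicit memory", "Model parameters"): "dashed",
}


def all_paths(edge_styles, start, goal):
    """Enumerate every simple path from `start` to `goal` depth-first."""
    paths = []

    def walk(node, path):
        if node == goal:
            paths.append(path)
            return
        for source, target in edge_styles:
            if source == node and target not in path:
                walk(target, path + [target])

    walk(start, [start])
    return paths


def uses_dashed_edge(edge_styles, path):
    """True if any consecutive pair of nodes in `path` is a dashed arrow."""
    return any(edge_styles[(a, b)] == "dashed" for a, b in zip(path, path[1:]))


PATHS = all_paths(EDGE_STYLES, "Transformer circuits", "Model parameters")
for path in PATHS:
    style = "dashed" if uses_dashed_edge(EDGE_STYLES, path) else "solid"
    print(f"{len(path) - 1} arrows ({style}): " + " -> ".join(path))
```

With this edge set there are three paths from `Transformer circuits` to `Model parameters`: a two-arrow path through `Abstract knowledge` and two three-arrow paths through `Implicit memory` and `Explicit memory`, of which only the last includes the dashed arrow.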

### Interpretation

This diagram provides a conceptual model for understanding how a transformer-based AI system might organize information. It suggests that:

* **Knowledge is not monolithic.** It can be separated into abstract rules/patterns (`Abstract knowledge`) and concrete facts/data (`Specific knowledge`).

* **Specific knowledge is managed through a memory system.** This system includes `Implicit memory` (likely encoded in weights), `Explicit memory` (perhaps like key-value caches or retrieval), and access to `External information` (like tools or databases).

* **Most pathways ultimately reach the model's core.** Abstract understanding and specific memories, whether implicit or explicit, converge on the foundational `Model parameters`, either influencing them or being stored within them. `External information` is the exception: no arrow from it to `Model parameters` is described, which would be consistent with information that stays outside the model. The dashed line for `Explicit memory` might imply that this type of memory is more transient or requires different processing to become a permanent part of the model's parameters.

A Peircean reading treats the boxes and arrows as **signs** from which to infer the **system's logic**. The diagram acts as an *icon* (a likeness of the system's structure) and as a *symbol* (it uses conventional arrows to represent flow or influence). The key insight is the proposed architecture: a transformer's "circuits" implement a separable knowledge system in which specific facts are managed by a distinct memory hierarchy before they affect the core model. This has implications for interpretability (the components can be studied separately) and for capability (understanding how abstract and specific knowledge are each leveraged).