## Diagram: Kimi-VL-Thinking Process

### Overview

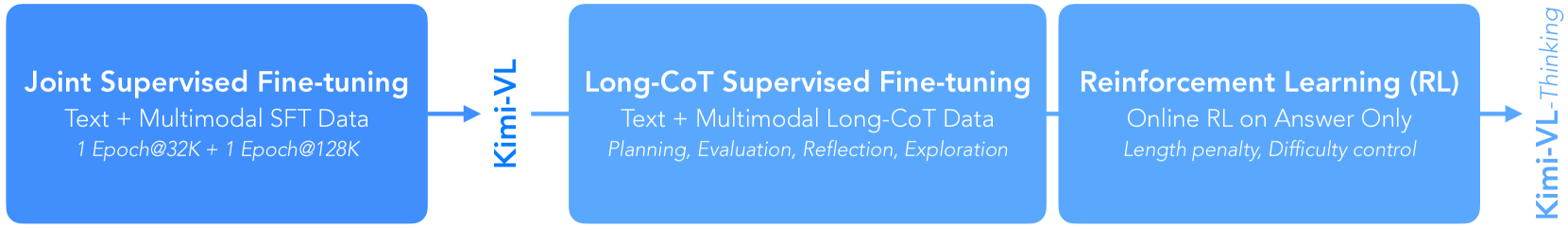

The diagram shows the training pipeline that produces Kimi-VL-Thinking. It has three stages, each drawn as a blue rounded rectangle and connected by arrows in training order: Joint Supervised Fine-tuning, Long-CoT Supervised Fine-tuning, and Reinforcement Learning (RL). Two model names are labeled along the flow: "Kimi-VL" between the first and second stages, and "Kimi-VL-Thinking" at the end.

### Components

* **Stages:**

    * Joint Supervised Fine-tuning

    * Long-CoT Supervised Fine-tuning

    * Reinforcement Learning (RL)

* **Models:** Kimi-VL, Kimi-VL-Thinking

* **Data Types:** Text, Multimodal SFT Data, Multimodal Long-CoT Data

* **Processes:** Planning, Evaluation, Reflection, Exploration, Online RL on Answer Only

* **Parameters:** Epoch, Length penalty, Difficulty control

### Detailed Analysis

1. **Joint Supervised Fine-tuning:**

    * Text: "Joint Supervised Fine-tuning"

    * Data: "Text + Multimodal SFT Data"

    * Epochs: "1 Epoch@32K + 1 Epoch@128K"

    * Position: Located on the left side of the diagram.

2. **Kimi-VL (First Instance):**

    * Text: "Kimi-VL"

    * Position: Located between the "Joint Supervised Fine-tuning" and "Long-CoT Supervised Fine-tuning" stages, with an arrow pointing from the first stage to "Kimi-VL" and another arrow pointing from "Kimi-VL" to the second stage.

3. **Long-CoT Supervised Fine-tuning:**

    * Text: "Long-CoT Supervised Fine-tuning"

    * Data: "Text + Multimodal Long-CoT Data"

    * Processes: "Planning, Evaluation, Reflection, Exploration"

    * Position: Located in the center of the diagram.

4. **Reinforcement Learning (RL):**

    * Text: "Reinforcement Learning (RL)"

    * Process: "Online RL on Answer Only"

    * Parameters: "Length penalty, Difficulty control"

    * Position: Located on the right side of the diagram.

5. **Kimi-VL-Thinking:**

    * Text: "Kimi-VL-Thinking"

    * Position: Located on the far right side of the diagram, with an arrow pointing from the "Reinforcement Learning (RL)" stage to "Kimi-VL-Thinking".
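The flow described in the list above can also be written down as a small data structure. The following is a minimal sketch, not an official configuration format: the `Stage` class, its field names, and the `describe` helper are hypothetical and chosen only for illustration, while the string values are copied verbatim from the diagram labels.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass(frozen=True)
class Stage:
    """One blue box in the diagram (hypothetical structure, for illustration)."""
    name: str                      # title shown in the box
    detail: str                    # second line of text in the box
    notes: Tuple[str, ...] = ()    # remaining labels in the box
    output: Optional[str] = None   # model name the outgoing arrow points to, if any


# Values are the labels as they appear in the diagram, left to right.
PIPELINE = (
    Stage(
        name="Joint Supervised Fine-tuning",
        detail="Text + Multimodal SFT Data",
        notes=("1 Epoch@32K + 1 Epoch@128K",),
        output="Kimi-VL",
    ),
    Stage(
        name="Long-CoT Supervised Fine-tuning",
        detail="Text + Multimodal Long-CoT Data",
        notes=("Planning", "Evaluation", "Reflection", "Exploration"),
    ),
    Stage(
        name="Reinforcement Learning (RL)",
        detail="Online RL on Answer Only",
        notes=("Length penalty", "Difficulty control"),
        output="Kimi-VL-Thinking",
    ),
)


def describe(pipeline):
    """Return the left-to-right flow, including the named models."""
    parts = []
    for stage in pipeline:
        parts.append(stage.name)
        if stage.output is not None:
            parts.append(stage.output)
    return " -> ".join(parts)


print(describe(PIPELINE))
```

Keeping the model names as an optional `output` on each stage mirrors the diagram, where "Kimi-VL" and "Kimi-VL-Thinking" sit on the arrows leaving a stage rather than inside a box.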

### Key Observations

* The diagram illustrates a sequential process, starting with Joint Supervised Fine-tuning, progressing through Long-CoT Supervised Fine-tuning, and ending with Reinforcement Learning (RL).

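* "Kimi-VL" appears as the output of Joint Supervised Fine-tuning and the input to Long-CoT Supervised Fine-tuning; "Kimi-VL-Thinking" is the final output, produced after the RL stage.

* Each stage box lists its own details: the two supervised stages name their training data, the first also gives an epoch schedule, the second lists four reasoning behaviors, and the RL stage names its training mode and two controls.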

### Interpretation

The diagram outlines how Kimi-VL is trained and then extended into Kimi-VL-Thinking, the variant aimed at long-form reasoning. Training starts with broad joint supervised fine-tuning on text and multimodal data, which yields Kimi-VL. That model is then fine-tuned on long chain-of-thought (Long-CoT) data covering planning, evaluation, reflection, and exploration. Finally, it is optimized with online reinforcement learning in which, as the "Answer Only" label suggests, the reward depends on the final answer rather than on the intermediate reasoning.

The progression is staged rather than iterative: each stage builds on the output of the previous one. Both supervised stages combine text with multimodal data, which indicates that the model is meant to handle more than text input; the diagram does not state what data the RL stage uses. The "1 Epoch@32K + 1 Epoch@128K" label most likely denotes one epoch at a 32K-token context length followed by one at 128K, though the diagram does not spell out the unit. In the RL stage, "Length penalty" suggests that overly long responses are discouraged, and "Difficulty control" suggests that the difficulty of the training problems is managed in some way; the diagram gives no further detail on either.
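To make the "Online RL on Answer Only" and "Length penalty" labels concrete, here is one possible shape such a reward could take. This is an illustrative sketch only: the diagram gives no formula, so the function name `answer_only_reward`, the exact-match check, the `max_tokens` budget, and the `penalty_weight` value are all assumptions and should not be read as the method used to train Kimi-VL-Thinking.

```python
def answer_only_reward(predicted_answer, reference_answer, num_tokens,
                       max_tokens=4096, penalty_weight=0.5):
    """Hypothetical reward: score the final answer only, minus a length penalty.

    All parameters and default values are illustrative assumptions.
    """
    # "Answer only": the reasoning text is never inspected, just the final answer.
    correct = 1.0 if predicted_answer.strip() == reference_answer.strip() else 0.0

    # Length penalty: grows linearly once the response exceeds the token budget,
    # capped so that the penalty never exceeds penalty_weight.
    overflow = max(0, num_tokens - max_tokens)
    penalty = penalty_weight * min(1.0, overflow / max_tokens)

    return correct - penalty


# A correct answer within budget, then the same answer at twice the budget.
print(answer_only_reward("42", "42", num_tokens=1000))
print(answer_only_reward("42", "42", num_tokens=8192))
```

Under these assumptions, a correct but very long response still scores higher than an incorrect one, while shorter correct responses are preferred over longer ones.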