## Diagram: Kimi-VL Training Pipeline

### Overview

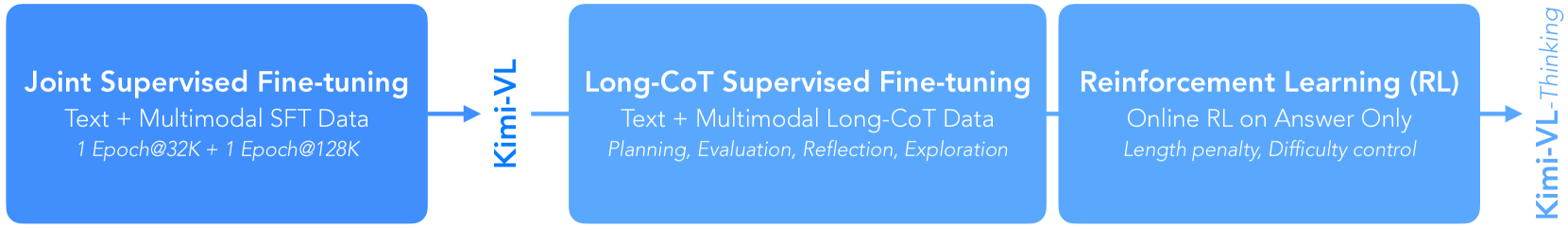

The image is a horizontal flowchart of a three-stage training pipeline. The first stage produces the model "Kimi-VL," and the two subsequent stages produce a variant called "Kimi-VL-Thinking." The stages are drawn as blue rectangular boxes arranged left to right, connected by arrows that indicate the training sequence.

### Components

The diagram consists of three primary blue boxes arranged horizontally, connected by arrows. Each box contains a title and two lines of subtext. Two of the three arrows carry a vertical text label; the arrow between the second and third boxes is unlabeled.

1. **Leftmost Box (Stage 1):**
   * **Position:** Far left.
   * **Primary Label:** "Joint Supervised Fine-tuning"
   * **Subtext (Line 1):** "Text + Multimodal SFT Data"
   * **Subtext (Line 2):** "1 Epoch@32K + 1 Epoch@128K"

2. **First Connecting Arrow:**
   * **Position:** Between the first and second boxes.
   * **Label (Vertical Text):** "Kimi-VL"

3. **Middle Box (Stage 2):**
   * **Position:** Center.
   * **Primary Label:** "Long-CoT Supervised Fine-tuning"
   * **Subtext (Line 1):** "Text + Multimodal Long-CoT Data"
   * **Subtext (Line 2):** "Planning, Evaluation, Reflection, Exploration"

4. **Second Connecting Arrow:**
   * **Position:** Between the second and third boxes.
   * **Label:** No text on this arrow.

5. **Rightmost Box (Stage 3):**
   * **Position:** Far right.
   * **Primary Label:** "Reinforcement Learning (RL)"
   * **Subtext (Line 1):** "Online RL on Answer Only"
   * **Subtext (Line 2):** "Length penalty, Difficulty control"

6. **Final Output Arrow:**
   * **Position:** Extending from the right side of the third box.
   * **Label (Vertical Text):** "Kimi-VL-Thinking"
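The components listed above can be captured as plain data, which makes the box-and-arrow structure easier to check at a glance. The following is only a transcription sketch: the `Stage` class, its field names, and the `describe` helper are hypothetical names introduced here, and the strings are copied verbatim from the diagram labels.

```python
from dataclasses import dataclass
from typing import List, Optional, Sequence


@dataclass(frozen=True)
class Stage:
    """One blue box in the diagram (hypothetical structure, not from the source)."""
    title: str
    subtext_1: str
    subtext_2: str
    # Label on the arrow leaving this box; None for the unlabeled arrow.
    output_label: Optional[str] = None


# Strings are transcribed from the diagram; nothing else is assumed.
PIPELINE = (
    Stage("Joint Supervised Fine-tuning",
          "Text + Multimodal SFT Data",
          "1 Epoch@32K + 1 Epoch@128K",
          output_label="Kimi-VL"),
    Stage("Long-CoT Supervised Fine-tuning",
          "Text + Multimodal Long-CoT Data",
          "Planning, Evaluation, Reflection, Exploration"),
    Stage("Reinforcement Learning (RL)",
          "Online RL on Answer Only",
          "Length penalty, Difficulty control",
          output_label="Kimi-VL-Thinking"),
)


def describe(pipeline: Sequence[Stage]) -> List[str]:
    """Render one summary line per stage, left to right."""
    lines = []
    for index, stage in enumerate(pipeline, start=1):
        arrow = f" -> {stage.output_label}" if stage.output_label else ""
        lines.append(f"Stage {index}: {stage.title}{arrow}")
    return lines


if __name__ == "__main__":
    print("\n".join(describe(PIPELINE)))
```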

### Detailed Analysis

The diagram outlines a sequential, multi-stage training methodology:

* **Stage 1 - Joint Supervised Fine-tuning:** This initial phase uses a combined dataset of text and multimodal data for supervised fine-tuning (SFT). The training schedule is given as "1 Epoch@32K + 1 Epoch@128K," that is, one epoch at a 32K context length followed by one epoch at a 128K context length (presumably measured in tokens; the diagram does not state the unit).

* **Stage 2 - Long-CoT Supervised Fine-tuning:** The model from Stage 1 ("Kimi-VL") undergoes a second supervised fine-tuning phase. This stage uses "Long-CoT" (Long Chain-of-Thought) data, which includes both text and multimodal examples. The training focuses on instilling reasoning capabilities described as "Planning, Evaluation, Reflection, Exploration."

* **Stage 3 - Reinforcement Learning (RL):** The model from Stage 2 is further refined using reinforcement learning, described in the box as "Online RL on Answer Only." The diagram defines neither term. In common usage, online RL means the policy is updated on responses it samples itself during training, and "on Answer Only" suggests that the reward is computed from the final answer rather than from the intermediate reasoning. The box also lists "Length penalty" and "Difficulty control" without further detail.

* **Final Output:** The result of this three-stage pipeline is a model designated "Kimi-VL-Thinking."
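To make the Stage 3 labels concrete, here is a minimal sketch of what an answer-only reward with a length penalty could look like. The diagram gives no formula, so everything here is an assumption made for illustration: the `Answer:` marker, the exact-match check, the `token_budget` and `penalty_weight` parameters, and the linear penalty shape are all hypothetical and are not taken from the Kimi-VL pipeline.

```python
def extract_final_answer(response: str, marker: str = "Answer:") -> str:
    """Return the text after the last marker; everything before it is ignored.

    The marker convention is an assumption made for this sketch.
    """
    _, found, tail = response.rpartition(marker)
    return tail.strip() if found else ""


def answer_only_reward(
    response: str,
    reference: str,
    num_tokens: int,
    token_budget: int = 1024,     # hypothetical length budget
    penalty_weight: float = 0.5,  # hypothetical penalty strength
) -> float:
    """Score only the final answer, then subtract a penalty for excess length."""
    # "Answer only": the reasoning text never enters the correctness check.
    correct = 1.0 if extract_final_answer(response) == reference.strip() else 0.0
    # Length penalty: grows linearly once the budget is exceeded, capped at 1.
    overflow = max(0, num_tokens - token_budget) / token_budget
    return correct - penalty_weight * min(1.0, overflow)
```

With these assumed defaults, a correct response within the budget scores 1.0, and the same response at 1.5 times the budget scores 0.75. The point is only that correctness and length enter the reward as separate terms.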

### Key Observations

* The pipeline shows a clear progression from general supervised learning to specialized reasoning-focused training, and finally to optimization via reinforcement learning.

* The transition from "SFT Data" to "Long-CoT Data" indicates a deliberate shift in training data composition to foster complex reasoning.

* The RL stage's constraints ("Length penalty, Difficulty control") suggest an effort to balance answer quality with efficiency and to manage the complexity of training examples.

* The naming convention implies that "Kimi-VL-Thinking" is an enhanced version of the base "Kimi-VL" model, specifically endowed with advanced reasoning ("Thinking") capabilities through this pipeline.
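The diagram names "Difficulty control" without saying how it is done. One common way to manage example difficulty in RL training, shown here purely as an assumed illustration, is to estimate each prompt's pass rate from sampled attempts and keep only prompts that are neither trivially easy nor apparently unsolvable. The function names and the `low`/`high` thresholds below are hypothetical.

```python
from typing import Dict, List


def pass_rate(outcomes: List[bool]) -> float:
    """Fraction of sampled attempts that were correct."""
    return sum(outcomes) / len(outcomes)


def select_by_difficulty(
    results: Dict[str, List[bool]],
    low: float = 0.2,   # hypothetical lower bound on pass rate
    high: float = 0.8,  # hypothetical upper bound on pass rate
) -> List[str]:
    """Keep prompt ids whose empirical pass rate lies within [low, high]."""
    return [
        prompt_id
        for prompt_id, outcomes in results.items()
        if outcomes and low <= pass_rate(outcomes) <= high
    ]


if __name__ == "__main__":
    sampled = {
        "easy": [True, True, True, True],
        "hard": [False, False, False, False],
        "medium": [True, False, True, False],
    }
    print(select_by_difficulty(sampled))
```

Prompts that the current policy always or never solves give little learning signal under an answer-correctness reward, which is the usual motivation for this kind of filter.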

### Interpretation

This diagram shows a staged approach to training a multimodal reasoning model, in which each stage starts from the model produced by the previous one:

1. **Foundation:** Stage 1 establishes a broad base of knowledge and alignment using standard supervised fine-tuning on diverse data.

2. **Reasoning Specialization:** Stage 2 targets long chain-of-thought reasoning. The listed components (Planning, Evaluation, Reflection, Exploration) name the reasoning behaviors that the Long-CoT data is meant to teach.

3. **Refinement and Optimization:** Stage 3 uses reinforcement learning to adjust the model's outputs based on reward signals. The diagram does not specify the reward, but "on Answer Only" points to final-answer correctness, the length penalty to discouraging overly long responses, and difficulty control to managing how hard the training problems are.

The overall flow suggests that achieving a model capable of sophisticated "Thinking" requires more than just feeding it data; it requires a structured curriculum that first teaches it *what* to know, then *how* to reason, and finally *how to optimize* its reasoning for specific goals. The explicit mention of multimodal data at each supervised stage underscores that this reasoning capability is intended to operate across text and visual inputs.