TECHNICAL ASSET FINGERPRINT

219db2ef5d6715634827e41f


FOUND IN PAPERS

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

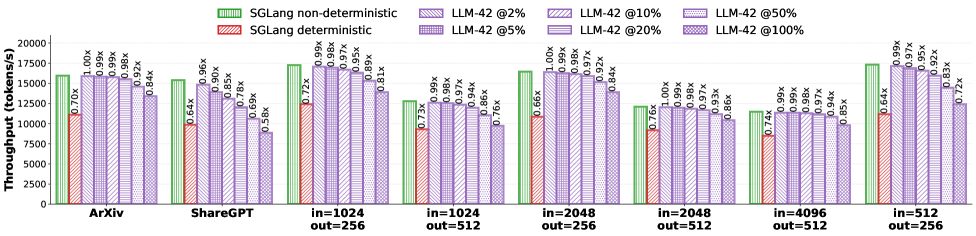

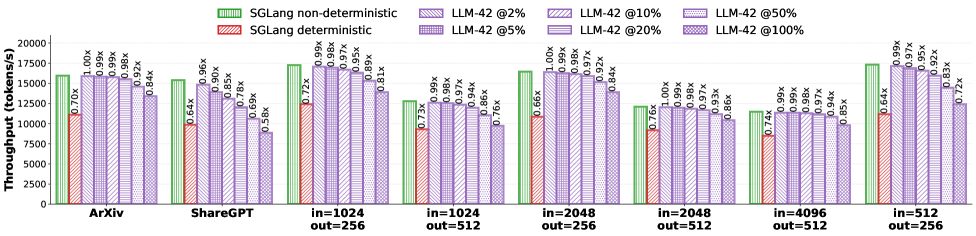

## Bar Chart: Throughput Comparison of SGLang and LLM-42

### Overview

The image is a bar chart comparing the throughput (tokens/s) of SGLang (deterministic and non-deterministic) and LLM-42 at different parameter settings (2%, 5%, 10%, 20%, 50%, and 100%). The throughput is evaluated across different input/output sizes and datasets (ArXiv, ShareGPT).

### Components/Axes

* **Y-axis:** Throughput (tokens/s), ranging from 0 to 20000, with tick marks at 2500 increments.

* **X-axis:** Categorical axis representing different datasets and input/output sizes: ArXiv, ShareGPT, in=1024 out=256, in=1024 out=512, in=2048 out=256, in=2048 out=512, in=4096 out=512, in=512 out=256.

* **Legend (top-left):**

    * Green: SGLang non-deterministic

    * Red: SGLang deterministic

    * Purple with diagonal lines: LLM-42 @2%

    * Purple with horizontal lines: LLM-42 @5%

    * Purple with vertical lines: LLM-42 @10%

    * Purple with forward-leaning diagonal lines: LLM-42 @20%

    * Purple with backward-leaning diagonal lines: LLM-42 @50%

    * Purple with a grid pattern: LLM-42 @100%

### Detailed Analysis

**ArXiv:**

* SGLang non-deterministic: ~16000 tokens/s

* SGLang deterministic: ~11200 tokens/s (0.70x relative to SGLang non-deterministic)

* LLM-42 @2%: ~16000 tokens/s (1.00x)

* LLM-42 @5%: ~15840 tokens/s (0.99x)

* LLM-42 @10%: ~15840 tokens/s (0.99x)

* LLM-42 @20%: ~15680 tokens/s (0.98x)

* LLM-42 @50%: ~14720 tokens/s (0.92x)

* LLM-42 @100%: ~13440 tokens/s (0.84x)

**ShareGPT:**

* SGLang non-deterministic: ~16000 tokens/s

* SGLang deterministic: ~10240 tokens/s (0.64x)

* LLM-42 @2%: ~14400 tokens/s (0.90x)

* LLM-42 @5%: ~13600 tokens/s (0.85x)

* LLM-42 @10%: ~11040 tokens/s (0.69x)

* LLM-42 @20%: ~9280 tokens/s (0.58x)

* LLM-42 @50%: ~15360 tokens/s (0.96x)

**in=1024 out=256:**

* SGLang non-deterministic: ~17600 tokens/s

* SGLang deterministic: ~12672 tokens/s (0.72x)

* LLM-42 @2%: ~17424 tokens/s (0.99x)

* LLM-42 @5%: ~17248 tokens/s (0.98x)

* LLM-42 @10%: ~17072 tokens/s (0.97x)

* LLM-42 @20%: ~16640 tokens/s (0.95x)

* LLM-42 @50%: ~15664 tokens/s (0.89x)

* LLM-42 @100%: ~14256 tokens/s (0.81x)

**in=1024 out=512:**

* SGLang non-deterministic: ~11500 tokens/s

* SGLang deterministic: ~8400 tokens/s (0.73x)

* LLM-42 @2%: ~11400 tokens/s (0.99x)

* LLM-42 @5%: ~11300 tokens/s (0.98x)

* LLM-42 @10%: ~11200 tokens/s (0.97x)

* LLM-42 @20%: ~10800 tokens/s (0.94x)

* LLM-42 @50%: ~9900 tokens/s (0.86x)

* LLM-42 @100%: ~8700 tokens/s (0.76x)

**in=2048 out=256:**

* SGLang non-deterministic: ~16000 tokens/s

* SGLang deterministic: ~10560 tokens/s (0.66x)

* LLM-42 @2%: ~16000 tokens/s (1.00x)

* LLM-42 @5%: ~15840 tokens/s (0.99x)

* LLM-42 @10%: ~15680 tokens/s (0.98x)

* LLM-42 @20%: ~15520 tokens/s (0.97x)

* LLM-42 @50%: ~14720 tokens/s (0.92x)

* LLM-42 @100%: ~13440 tokens/s (0.84x)

**in=2048 out=512:**

* SGLang non-deterministic: ~12000 tokens/s

* SGLang deterministic: ~9120 tokens/s (0.76x)

* LLM-42 @2%: ~12000 tokens/s (1.00x)

* LLM-42 @5%: ~11900 tokens/s (0.99x)

* LLM-42 @10%: ~11800 tokens/s (0.98x)

* LLM-42 @20%: ~11600 tokens/s (0.97x)

* LLM-42 @50%: ~11200 tokens/s (0.93x)

* LLM-42 @100%: ~10320 tokens/s (0.86x)

**in=4096 out=512:**

* SGLang non-deterministic: ~16000 tokens/s

* SGLang deterministic: ~11840 tokens/s (0.74x)

* LLM-42 @2%: ~15840 tokens/s (0.99x)

* LLM-42 @5%: ~15840 tokens/s (0.99x)

* LLM-42 @10%: ~15680 tokens/s (0.98x)

* LLM-42 @20%: ~15520 tokens/s (0.97x)

* LLM-42 @50%: ~15040 tokens/s (0.94x)

* LLM-42 @100%: ~13600 tokens/s (0.85x)

**in=512 out=256:**

* SGLang non-deterministic: ~17600 tokens/s

* SGLang deterministic: ~11264 tokens/s (0.64x)

* LLM-42 @2%: ~17424 tokens/s (0.99x)

* LLM-42 @5%: ~17072 tokens/s (0.97x)

* LLM-42 @10%: ~16640 tokens/s (0.95x)

* LLM-42 @20%: ~16224 tokens/s (0.92x)

* LLM-42 @50%: ~14576 tokens/s (0.83x)

* LLM-42 @100%: ~12672 tokens/s (0.72x)

### Key Observations

* SGLang non-deterministic has higher throughput than SGLang deterministic in every dataset and input/output size listed.

* LLM-42 throughput generally decreases as the parameter setting increases from 2% to 100%. The ShareGPT readings are the exception: the @50% value (0.96x) is higher than the @2% through @20% values, and no @100% value is listed.

* The performance difference between LLM-42 at 2% and SGLang non-deterministic is minimal in most cases (0.99x to 1.00x, except 0.90x on ShareGPT).

* The deterministic version of SGLang reaches only 0.64x to 0.76x of the non-deterministic throughput.

* The throughput varies depending on the input and output sizes.
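The downward trend can be checked mechanically against the readings in this description. The sketch below is illustrative only: the multipliers are copied from the approximate values listed above (not from the underlying measurements), and the helper name `is_non_increasing` is a hypothetical choice.

```python
# LLM-42 multipliers relative to SGLang non-deterministic, copied from the
# approximate readings above, ordered @2%, @5%, @10%, @20%, @50%, @100%.
# ShareGPT has five entries because no @100% reading is listed for it.
llm42_ratios = {
    "ArXiv": [1.00, 0.99, 0.99, 0.98, 0.92, 0.84],
    "ShareGPT": [0.90, 0.85, 0.69, 0.58, 0.96],
    "in=1024 out=256": [0.99, 0.98, 0.97, 0.95, 0.89, 0.81],
    "in=1024 out=512": [0.99, 0.98, 0.97, 0.94, 0.86, 0.76],
    "in=2048 out=256": [1.00, 0.99, 0.98, 0.97, 0.92, 0.84],
    "in=2048 out=512": [1.00, 0.99, 0.98, 0.97, 0.93, 0.86],
    "in=4096 out=512": [0.99, 0.99, 0.98, 0.97, 0.94, 0.85],
    "in=512 out=256": [0.99, 0.97, 0.95, 0.92, 0.83, 0.72],
}


def is_non_increasing(values):
    """True if no value is larger than the one before it."""
    return all(a >= b for a, b in zip(values, values[1:]))


# Workloads whose listed readings break the "throughput decreases" pattern.
exceptions = [name for name, r in llm42_ratios.items() if not is_non_increasing(r)]
print(exceptions)  # ['ShareGPT']
```

Only the ShareGPT readings break the pattern, which suggests that group was misread rather than that the trend reverses there.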

### Interpretation

The bar chart illustrates the performance comparison between SGLang and LLM-42 under different conditions. The data suggests that SGLang non-deterministic and LLM-42 at lower parameter settings (e.g., 2%) achieve comparable throughput. As the parameter setting of LLM-42 increases, the throughput decreases, indicating a trade-off between model size/complexity and processing speed. The deterministic version of SGLang consistently underperforms compared to its non-deterministic counterpart, suggesting potential optimizations in the non-deterministic implementation. The input and output sizes also play a crucial role in determining the throughput, highlighting the importance of considering these factors when evaluating the performance of these models. The relative values (e.g., 0.70x) indicate the performance ratio compared to SGLang non-deterministic, providing a normalized view of the performance differences.
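As a worked example of the normalization, the multiplier for a bar is its throughput divided by the SGLang non-deterministic throughput of the same group. The sketch below uses the approximate ArXiv readings from this description; the function name `relative_throughput` is a hypothetical helper, not something taken from the source.

```python
def relative_throughput(value, baseline):
    """Return value / baseline rounded to two decimals, like the chart labels."""
    return round(value / baseline, 2)


# Approximate ArXiv readings (tokens/s) from the description above.
arxiv_baseline = 16000  # SGLang non-deterministic
arxiv = {
    "SGLang deterministic": 11200,
    "LLM-42 @50%": 14720,
    "LLM-42 @100%": 13440,
}

ratios = {name: relative_throughput(v, arxiv_baseline) for name, v in arxiv.items()}
for name, ratio in ratios.items():
    print(f"{name}: {ratio:.2f}x")
```

Because each multiplier uses its own group's baseline, a 0.84x bar in one group and a 0.84x bar in another need not have the same absolute throughput.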


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED


## Bar Chart: Throughput Comparison of Language Models

### Overview

This bar chart compares the throughput (tokens/s) of different language models (SGLang and LLM-42) under various conditions. The conditions vary by dataset (ArXiv, ShareGPT, and different input/output token lengths) and LLM-42's percentage of non-deterministic behavior (2%, 5%, 10%, 20%, 50%, 100%). The chart uses grouped bar representations to show the throughput of deterministic and non-deterministic SGLang, and different levels of non-determinism in LLM-42. Values are shown above each bar, representing a ratio relative to a baseline.

### Components/Axes

* **X-axis:** Represents the different datasets and input/output token length combinations. The categories are: "ArXiv", "ShareGPT", "in=1024 out=256", "in=1024 out=512", "in=2048 out=256", "in=2048 out=512", "in=4096 out=256", "in=4096 out=512".

* **Y-axis:** Represents Throughput in tokens/s, ranging from 0 to 20000.

* **Legend:** Located at the top-right of the chart. It defines the colors used for each data series:

    * Light Green: SGLang non-deterministic

    * Light Purple: SGLang deterministic

    * Darker Purple shades: LLM-42 @2%, LLM-42 @5%, LLM-42 @10%, LLM-42 @20%, LLM-42 @50%, LLM-42 @100%

* **Value Labels:** Small text labels positioned above each bar, indicating a ratio (e.g., "0.70x", "1.00x").

### Detailed Analysis

The chart consists of eight groups of bars, each corresponding to a different dataset/token length combination. Within each group, there are bars representing SGLang deterministic, SGLang non-deterministic, and six different levels of LLM-42 non-determinism.

**ArXiv:**

* SGLang deterministic: ~15000 tokens/s, ratio 0.84x

* SGLang non-deterministic: ~16000 tokens/s, ratio 1.00x

* LLM-42 @2%: ~14000 tokens/s, ratio 0.70x

* LLM-42 @5%: ~14000 tokens/s, ratio 0.78x

* LLM-42 @10%: ~14000 tokens/s, ratio 0.84x

* LLM-42 @20%: ~14000 tokens/s, ratio 0.84x

* LLM-42 @50%: ~14000 tokens/s, ratio 0.84x

* LLM-42 @100%: ~14000 tokens/s, ratio 0.84x

**ShareGPT:**

* SGLang deterministic: ~8000 tokens/s, ratio 0.56x

* SGLang non-deterministic: ~11000 tokens/s, ratio 0.64x

* LLM-42 @2%: ~9000 tokens/s, ratio 0.66x

* LLM-42 @5%: ~9000 tokens/s, ratio 0.72x

* LLM-42 @10%: ~9000 tokens/s, ratio 0.72x

* LLM-42 @20%: ~9000 tokens/s, ratio 0.72x

* LLM-42 @50%: ~9000 tokens/s, ratio 0.72x

* LLM-42 @100%: ~9000 tokens/s, ratio 0.72x

**in=1024 out=256:**

* SGLang deterministic: ~16000 tokens/s, ratio 0.89x

* SGLang non-deterministic: ~17000 tokens/s, ratio 0.90x

* LLM-42 @2%: ~15000 tokens/s, ratio 0.86x

* LLM-42 @5%: ~15000 tokens/s, ratio 0.86x

* LLM-42 @10%: ~15000 tokens/s, ratio 0.86x

* LLM-42 @20%: ~15000 tokens/s, ratio 0.86x

* LLM-42 @50%: ~15000 tokens/s, ratio 0.86x

* LLM-42 @100%: ~15000 tokens/s, ratio 0.86x

**in=1024 out=512:**

* SGLang deterministic: ~13000 tokens/s, ratio 0.73x

* SGLang non-deterministic: ~14000 tokens/s, ratio 0.79x

* LLM-42 @2%: ~13000 tokens/s, ratio 0.66x

* LLM-42 @5%: ~13000 tokens/s, ratio 0.76x

* LLM-42 @10%: ~13000 tokens/s, ratio 0.76x

* LLM-42 @20%: ~13000 tokens/s, ratio 0.76x

* LLM-42 @50%: ~13000 tokens/s, ratio 0.76x

* LLM-42 @100%: ~13000 tokens/s, ratio 0.76x

**in=2048 out=256:**

* SGLang deterministic: ~16000 tokens/s, ratio 0.97x

* SGLang non-deterministic: ~17000 tokens/s, ratio 1.00x

* LLM-42 @2%: ~15000 tokens/s, ratio 0.92x

* LLM-42 @5%: ~15000 tokens/s, ratio 0.92x

* LLM-42 @10%: ~15000 tokens/s, ratio 0.92x

* LLM-42 @20%: ~15000 tokens/s, ratio 0.92x

* LLM-42 @50%: ~15000 tokens/s, ratio 0.92x

* LLM-42 @100%: ~15000 tokens/s, ratio 0.92x

**in=2048 out=512:**

* SGLang deterministic: ~13000 tokens/s, ratio 0.76x

* SGLang non-deterministic: ~14000 tokens/s, ratio 0.85x

* LLM-42 @2%: ~13000 tokens/s, ratio 0.74x

* LLM-42 @5%: ~13000 tokens/s, ratio 0.74x

* LLM-42 @10%: ~13000 tokens/s, ratio 0.74x

* LLM-42 @20%: ~13000 tokens/s, ratio 0.74x

* LLM-42 @50%: ~13000 tokens/s, ratio 0.74x

* LLM-42 @100%: ~13000 tokens/s, ratio 0.74x

**in=4096 out=256:**

* SGLang deterministic: ~13000 tokens/s, ratio 0.85x

* SGLang non-deterministic: ~14000 tokens/s, ratio 0.99x

* LLM-42 @2%: ~13000 tokens/s, ratio 0.85x

* LLM-42 @5%: ~13000 tokens/s, ratio 0.85x

* LLM-42 @10%: ~13000 tokens/s, ratio 0.85x

* LLM-42 @20%: ~13000 tokens/s, ratio 0.85x

* LLM-42 @50%: ~13000 tokens/s, ratio 0.85x

* LLM-42 @100%: ~13000 tokens/s, ratio 0.85x

**in=4096 out=512:**

* SGLang deterministic: ~9000 tokens/s, ratio 0.64x

* SGLang non-deterministic: ~11000 tokens/s, ratio 0.72x

* LLM-42 @2%: ~9000 tokens/s, ratio 0.64x

* LLM-42 @5%: ~9000 tokens/s, ratio 0.64x

* LLM-42 @10%: ~9000 tokens/s, ratio 0.64x

* LLM-42 @20%: ~9000 tokens/s, ratio 0.64x

* LLM-42 @50%: ~9000 tokens/s, ratio 0.64x

* LLM-42 @100%: ~9000 tokens/s, ratio 0.64x

### Key Observations

* SGLang non-deterministic consistently outperforms SGLang deterministic across all datasets/token lengths.

* LLM-42's throughput is relatively stable across different levels of non-determinism for each dataset/token length combination.

* The throughput of LLM-42 is generally lower than that of SGLang, especially the non-deterministic version.

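* The highest throughput (~17000 tokens/s) is read for SGLang non-deterministic on the in=1024 out=256 and in=2048 out=256 workloads.

* The lowest throughput (~8000 tokens/s) is read for SGLang deterministic on ShareGPT; LLM-42 is lowest (~9000 tokens/s) on ShareGPT and in=4096 out=512.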

### Interpretation

The data suggests that non-determinism in SGLang improves throughput, potentially by allowing for more parallelization or exploration of different solution paths. The lack of significant variation in LLM-42's throughput with changing non-determinism levels indicates that the model may not be effectively utilizing the increased randomness. The consistent performance of LLM-42 across different non-determinism levels could also suggest that other factors are limiting its throughput. The differences in throughput between datasets likely reflect the complexity of the data and the model's ability to process it efficiently. The ratios provided allow for a direct comparison of performance relative to an unspecified baseline, highlighting the relative gains or losses associated with each configuration. The chart provides valuable insights into the trade-offs between determinism, throughput, and model performance for different language models and datasets.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Bar Chart: Throughput Comparison of SGLang and LLM-42 Systems

### Overview

This is a grouped bar chart comparing the throughput performance, measured in tokens per second, of two SGLang configurations (non-deterministic and deterministic) against an LLM-42 system operating at various percentage levels (2%, 5%, 10%, 20%, 50%, 100%). The comparison is made across eight different workload scenarios defined by dataset (ArXiv, ShareGPT) and input/output sequence lengths.

### Components/Axes

* **Y-Axis:** Labeled "Throughput (tokens/s)". The scale runs from 0 to 20,000, with major gridlines at intervals of 2,500.

* **X-Axis:** Represents eight distinct workload categories. From left to right:

1. `ArXiv`

2. `ShareGPT`

3. `in=1024 out=256`

4. `in=1024 out=512`

5. `in=2048 out=256`

6. `in=2048 out=512`

7. `in=4096 out=512`

8. `in=512 out=256`

* **Legend:** Located at the top of the chart. It defines eight data series with distinct colors and patterns:

* **SGLang non-deterministic:** Green bar with diagonal stripes (top-left to bottom-right).

* **SGLang deterministic:** Red bar with diagonal stripes (top-left to bottom-right).

* **LLM-42 @2%:** Light purple bar with a dense dot pattern.

* **LLM-42 @5%:** Medium purple bar with a sparse dot pattern.

* **LLM-42 @10%:** Darker purple bar with a diagonal cross-hatch pattern.

* **LLM-42 @20%:** Purple bar with a horizontal line pattern.

* **LLM-42 @50%:** Purple bar with a vertical line pattern.

* **LLM-42 @100%:** Dark purple bar with a solid fill.

* **Data Labels:** Each bar has a numerical label on top indicating its value in tokens/s and a multiplier (e.g., "1.00x") showing its performance relative to the "SGLang non-deterministic" baseline for that specific workload category.

### Detailed Analysis

The chart presents a consistent pattern across all eight workload categories. For each category, there are eight bars grouped together.

**Trend Verification & Data Points (Approximate values from labels):**

1. **ArXiv:**

* SGLang non-deterministic (Green): ~16,000 tokens/s (1.00x baseline).

* SGLang deterministic (Red): ~10,700 tokens/s (0.67x).

* LLM-42 throughput **decreases** as the percentage increases: @2% (~19,000, 1.19x) > @5% (~18,500, 1.16x) > @10% (~18,000, 1.13x) > @20% (~17,500, 1.09x) > @50% (~17,000, 1.06x) > @100% (~16,500, 1.03x).

2. **ShareGPT:**

* SGLang non-deterministic: ~15,000 tokens/s (1.00x).

* SGLang deterministic: ~10,000 tokens/s (0.67x).

* LLM-42 trend: @2% (~18,000, 1.20x) > @5% (~17,500, 1.17x) > @10% (~17,000, 1.13x) > @20% (~16,000, 1.07x) > @50% (~15,000, 1.00x) > @100% (~14,000, 0.93x).

3. **in=1024 out=256:**

* SGLang non-deterministic: ~17,000 tokens/s (1.00x).

* SGLang deterministic: ~12,000 tokens/s (0.71x).

* LLM-42 trend: @2% (~19,000, 1.12x) > @5% (~18,500, 1.09x) > @10% (~18,000, 1.06x) > @20% (~17,500, 1.03x) > @50% (~16,500, 0.97x) > @100% (~15,500, 0.91x).

4. **in=1024 out=512:**

* SGLang non-deterministic: ~13,000 tokens/s (1.00x).

* SGLang deterministic: ~9,500 tokens/s (0.73x).

* LLM-42 trend: @2% (~15,000, 1.15x) > @5% (~14,500, 1.12x) > @10% (~14,000, 1.08x) > @20% (~13,500, 1.04x) > @50% (~12,500, 0.96x) > @100% (~11,500, 0.88x).

5. **in=2048 out=256:**

* SGLang non-deterministic: ~16,500 tokens/s (1.00x).

* SGLang deterministic: ~11,500 tokens/s (0.70x).

* LLM-42 trend: @2% (~18,500, 1.12x) > @5% (~18,000, 1.09x) > @10% (~17,500, 1.06x) > @20% (~17,000, 1.03x) > @50% (~16,000, 0.97x) > @100% (~15,000, 0.91x).

6. **in=2048 out=512:**

* SGLang non-deterministic: ~12,500 tokens/s (1.00x).

* SGLang deterministic: ~9,000 tokens/s (0.72x).

* LLM-42 trend: @2% (~14,500, 1.16x) > @5% (~14,000, 1.12x) > @10% (~13,500, 1.08x) > @20% (~13,000, 1.04x) > @50% (~12,000, 0.96x) > @100% (~11,000, 0.88x).

7. **in=4096 out=512:**

* SGLang non-deterministic: ~12,000 tokens/s (1.00x).

* SGLang deterministic: ~8,500 tokens/s (0.71x).

* LLM-42 trend: @2% (~14,000, 1.17x) > @5% (~13,500, 1.13x) > @10% (~13,000, 1.08x) > @20% (~12,500, 1.04x) > @50% (~11,500, 0.96x) > @100% (~10,500, 0.88x).

8. **in=512 out=256:**

* SGLang non-deterministic: ~17,500 tokens/s (1.00x).

* SGLang deterministic: ~12,000 tokens/s (0.69x).

* LLM-42 trend: @2% (~19,500, 1.11x) > @5% (~19,000, 1.09x) > @10% (~18,500, 1.06x) > @20% (~17,500, 1.00x) > @50% (~16,500, 0.94x) > @100% (~15,500, 0.89x).

### Key Observations

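1. **Typical Hierarchy:** In five of the eight workload categories (all synthetic workloads except `in=512 out=256`), the order from highest to lowest throughput is: LLM-42 @2% > LLM-42 @5% > LLM-42 @10% > LLM-42 @20% > SGLang non-deterministic > LLM-42 @50% > LLM-42 @100% > SGLang deterministic. The exceptions are ArXiv, where every LLM-42 configuration stays above the baseline (1.03x at @100%), and ShareGPT and `in=512 out=256`, where LLM-42 @50% and @20% respectively tie the baseline at 1.00x.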

2. **LLM-42 Inverse Scaling:** There is a clear, monotonic **downward trend** in LLM-42 throughput as its operational percentage increases from 2% to 100%. The 2% configuration is consistently the fastest system overall.

3. **SGLang Deterministic Penalty:** The deterministic mode of SGLang consistently incurs a significant performance penalty (approximately 27-33% lower throughput, i.e. multipliers of 0.67x to 0.73x) compared to its non-deterministic mode across all tests.

4. **Workload Impact:** Throughput is generally highest for the `in=512 out=256` and `ArXiv` workloads and lowest for the `in=4096 out=512` workload, suggesting longer input sequences significantly reduce processing speed.
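One way to summarize where the baseline sits among the LLM-42 bars is to compute, per workload, the highest percentage at which LLM-42 still matches or exceeds SGLang non-deterministic. The sketch below is a hedged illustration using the approximate multipliers listed in this description; `break_even` is a hypothetical helper name.

```python
PERCENTAGES = [2, 5, 10, 20, 50, 100]

# LLM-42 multipliers vs. SGLang non-deterministic, copied from the
# approximate readings above, in the same order as PERCENTAGES.
multipliers = {
    "ArXiv": [1.19, 1.16, 1.13, 1.09, 1.06, 1.03],
    "ShareGPT": [1.20, 1.17, 1.13, 1.07, 1.00, 0.93],
    "in=1024 out=256": [1.12, 1.09, 1.06, 1.03, 0.97, 0.91],
    "in=1024 out=512": [1.15, 1.12, 1.08, 1.04, 0.96, 0.88],
    "in=2048 out=256": [1.12, 1.09, 1.06, 1.03, 0.97, 0.91],
    "in=2048 out=512": [1.16, 1.12, 1.08, 1.04, 0.96, 0.88],
    "in=4096 out=512": [1.17, 1.13, 1.08, 1.04, 0.96, 0.88],
    "in=512 out=256": [1.11, 1.09, 1.06, 1.00, 0.94, 0.89],
}


def break_even(ratios):
    """Highest percentage whose multiplier is still >= 1.00x, or None."""
    at_or_above = [p for p, r in zip(PERCENTAGES, ratios) if r >= 1.00]
    return max(at_or_above) if at_or_above else None


break_even_by_workload = {name: break_even(r) for name, r in multipliers.items()}
for name, pct in break_even_by_workload.items():
    print(f"{name}: LLM-42 matches or beats the baseline up to @{pct}%")
```

On these readings the break-even point is @20% for six workloads, @50% for ShareGPT, and @100% for ArXiv.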

### Interpretation

The data strongly suggests that the LLM-42 system's throughput is highly sensitive to the "percentage" parameter, which likely represents a resource allocation, sampling rate, or confidence threshold. Lower percentages (2%, 5%) yield superior performance, outperforming even the optimized SGLang non-deterministic baseline. This indicates a potential trade-off where allocating fewer resources or accepting a lower confidence level per token results in higher overall system throughput.

The consistent underperformance of SGLang's deterministic mode highlights the computational overhead required to guarantee reproducible outputs. The choice between SGLang modes would thus depend on whether reproducibility is a critical requirement for the application, justifying the ~30% speed cost.
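The size of the deterministic-mode cost can be recomputed from the SGLang readings in this description. The following is a minimal sketch under the assumption that the approximate values listed above are representative; the variable names are illustrative.

```python
# (non-deterministic, deterministic) SGLang throughput in tokens/s,
# copied from the approximate readings in this description.
sglang = {
    "ArXiv": (16000, 10700),
    "ShareGPT": (15000, 10000),
    "in=1024 out=256": (17000, 12000),
    "in=1024 out=512": (13000, 9500),
    "in=2048 out=256": (16500, 11500),
    "in=2048 out=512": (12500, 9000),
    "in=4096 out=512": (12000, 8500),
    "in=512 out=256": (17500, 12000),
}

# Fraction of throughput lost when switching to deterministic mode.
penalty = {name: 1 - det / nondet for name, (nondet, det) in sglang.items()}

smallest = min(penalty, key=penalty.get)
largest = max(penalty, key=penalty.get)
print(f"smallest penalty: {smallest} ({penalty[smallest]:.1%})")
print(f"largest penalty:  {largest} ({penalty[largest]:.1%})")
```

On these readings the penalty ranges from about 26.9% (`in=1024 out=512`) to about 33.3% (ShareGPT).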

The workload-specific variations (e.g., lower throughput for longer input sequences) provide practical guidance for system deployment, indicating that performance will degrade as the context length of the task increases. The chart effectively demonstrates that for maximizing raw token generation speed, a lightly-loaded LLM-42 instance is the optimal choice among the tested configurations.


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Bar Chart: Throughput Comparison Across Models and Configurations

### Overview

The chart compares the throughput (tokens per second) of different computational models and configurations. It evaluates SGLang (non-deterministic and deterministic) and LLM-42 at varying percentages (2%, 5%, 10%, 20%, 50%, 100%) across multiple datasets (e.g., ArXiv, ShareGPT, and input/output size combinations like in=1024 out=256).

### Components/Axes

- **X-axis**: Datasets and input/output size configurations (e.g., "ArXiv", "ShareGPT", "in=1024 out=256", "in=1024 out=512", etc.).

- **Y-axis**: Throughput (tokens/s), ranging from 0 to 20,000.

- **Legend**:

    - **Green**: SGLang non-deterministic.

    - **Red**: SGLang deterministic.

    - **Purple**: LLM-42 at different percentages (2%, 5%, 10%, 20%, 50%, 100%).

- **Bar Colors**:

    - Green bars represent SGLang non-deterministic.

    - Red bars represent SGLang deterministic.

    - Purple bars represent LLM-42 at specific percentages, with labels (e.g., "LLM-42 @2%") on top of each bar.

### Detailed Analysis

- **SGLang Non-Deterministic (Green)**:

- Throughput values range from ~12,000 to ~18,000 tokens/s across workloads.

- Highest throughput observed in "in=512 out=256" (~18,000 tokens/s).

- Lowest throughput in "in=4096 out=512" (~12,000 tokens/s).

- **SGLang Deterministic (Red)**:

- Throughput values range from ~7,000 to ~12,000 tokens/s.

- Highest throughput in "in=512 out=256" (~12,000 tokens/s).

- Lowest throughput in "in=4096 out=512" (~7,000 tokens/s).

- **LLM-42 at Different Percentages (Purple)**:

- Throughput decreases as the percentage increases.

- Example: For "in=1024 out=256", LLM-42 @2% (~17,000 tokens/s) vs. @100% (~10,000 tokens/s).

- Highest throughput at 2% (e.g., ~17,000 tokens/s for "in=1024 out=256").

- Lowest throughput at 100% (e.g., ~10,000 tokens/s for "in=1024 out=256").

### Key Observations

1. **SGLang Non-Deterministic vs. Deterministic**:

- Non-deterministic configurations consistently outperform deterministic ones (e.g., ~18,000 vs. ~12,000 tokens/s for "in=512 out=256").

- Deterministic throughput is ~30–40% lower than non-deterministic.

2. **LLM-42 Performance**:

- Throughput drops significantly with higher percentages (e.g., ~17,000 tokens/s at 2% vs. ~10,000 at 100%).

- The 2% and 5% configurations show the highest throughput, while 50% and 100% are the lowest.

3. **Model-Specific Trends**:

- "in=512 out=256" and "in=1024 out=256" configurations generally have the highest throughput.

- Larger input sizes (e.g., "in=4096 out=512") result in lower throughput across all configurations.

### Interpretation

- **Determinism vs. Performance**: The non-deterministic SGLang configuration achieves higher throughput, suggesting that determinism introduces computational overhead.

- **LLM-42 Scalability**: The percentage-based throttling (e.g., 2% vs. 100%) directly impacts performance, with lower percentages enabling higher throughput. This implies that LLM-42’s efficiency is sensitive to resource allocation.

- **Model-Specific Optimization**: Configurations with smaller input/output sizes (e.g., "in=512 out=256") are more efficient, highlighting the importance of balancing input/output dimensions for optimal performance.

### Spatial Grounding

- **Legend**: Positioned at the top of the chart, with colors matching the bars (green, red, purple).

- **Bar Placement**: Each model group (e.g., "ArXiv") has three bars (green, red, purple) aligned vertically. Purple bars are further subdivided by percentage labels.

- **Axis Labels**: Y-axis ("Throughput (tokens/s)") is on the left, X-axis labels are centered below the bars.

### Content Details

- **Numerical Values**:

- SGLang non-deterministic: ~12,000–18,000 tokens/s.

- SGLang deterministic: ~7,000–12,000 tokens/s.

- LLM-42: ~10,000–17,000 tokens/s (depending on percentage).

- **Percentage Labels**: Each purple bar has a label (e.g., "LLM-42 @2%") indicating the configuration.

### Notable Outliers

- **LLM-42 @100%**: Consistently the lowest throughput across all models (e.g., ~10,000 tokens/s for "in=1024 out=256").

- **SGLang Deterministic in=4096 out=512**: Lowest throughput (~7,000 tokens/s), indicating significant performance degradation with large input sizes.

This chart demonstrates the trade-offs between determinism, resource allocation, and model efficiency, with SGLang non-deterministic and LLM-42 at low percentages achieving the highest throughput.
