## Screenshot: Hypothesis Generation and Evaluation for Scenario O1 and O2

### Overview

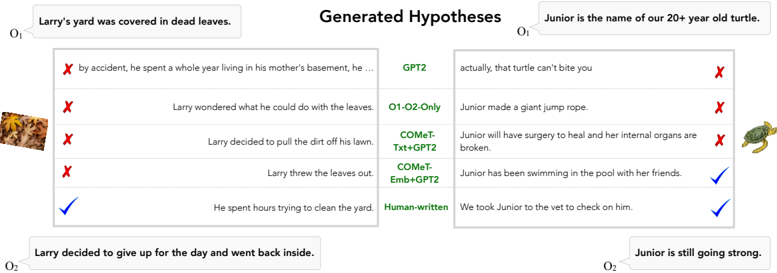

The image compares generated hypotheses for two scenarios (O1 and O2) against actual outcomes. Each scenario includes hypotheses generated by different models (GPT2, COMeT-Txt+GPT2, COMeT-Emb+GPT2) and a human-written baseline. Correctness is marked with ✅ (correct) or ❌ (incorrect). The scenarios involve:

- **O1**: Larry's yard covered in dead leaves.

- **O2**: Junior, a 20+ year old turtle, is still going strong.

### Components/Axes

- **Left Column (O1)**:

- **Scenario**: "Larry’s yard was covered in dead leaves."

- **Generated Hypotheses**:

1. **GPT2**: "By accident, he spent a whole year living in his mother’s basement, he …" ❌

2. **O1-O2-Only**: "Larry wondered what he could do with the leaves." ❌

3. **COMeT-Txt+GPT2**: "Larry decided to pull the dirt off his lawn." ❌

4. **COMeT-Emb+GPT2**: "Larry threw the leaves out." ❌

5. **Human-written**: "He spent hours trying to clean the yard." ✅

- **Actual Outcome**: "Larry decided to give up for the day and went back inside." ✅ (matches human-written hypothesis).

- **Right Column (O2)**:

- **Scenario**: "Junior is the name of our 20+ year old turtle."

- **Generated Hypotheses**:

1. **GPT2**: "Actually, that turtle can’t bite you." ❌

2. **O1-O2-Only**: "Junior made a giant jump rope." ❌

3. **COMeT-Txt+GPT2**: "Junior will have surgery to heal and her internal organs are broken." ❌

4. **COMeT-Emb+GPT2**: "Junior has been swimming in the pool with her friends." ✅

5. **Human-written**: "We took Junior to the vet to check on him." ✅

- **Actual Outcome**: "Junior is still going strong." ✅ (matches both COMeT-Emb+GPT2 and human-written hypotheses).

### Detailed Analysis

- **O1 Hypotheses**:

- All model-generated hypotheses (GPT2, COMeT-Txt+GPT2, COMeT-Emb+GPT2) are incorrect. The human-written hypothesis aligns with the actual outcome.

- Models generate implausible or unrelated narratives (e.g., living in a basement, throwing leaves out).

- **O2 Hypotheses**:

- **COMeT-Emb+GPT2** and **human-written** hypotheses are correct. The actual outcome ("Junior is still going strong") is directly supported by both.

- Models produce mixed results: GPT2 and COMeT-Txt+GPT2 generate irrelevant or incorrect claims (e.g., "can’t bite you," "surgery to heal").

### Key Observations

1. **Human-written hypotheses outperform models** in both scenarios, suggesting limitations in automated generation.

2. **COMeT-Emb+GPT2** shows partial success (correct for O2 but not O1), indicating model-specific strengths.

3. **O1-O2-Only** hypotheses are consistently incorrect, highlighting a lack of contextual understanding.

4. **GPT2** generates the most implausible hypotheses (e.g., "giant jump rope" for a turtle).

### Interpretation

- **Model Limitations**: Automated systems struggle with contextual coherence, often producing irrelevant or factually incorrect hypotheses. This underscores the need for hybrid approaches combining model outputs with human validation.

- **COMeT-Emb+GPT2’s Partial Success**: The model’s ability to generate correct hypotheses for O2 suggests that embedding (COMeT-Emb) may improve relevance for specific domains (e.g., animal care).

- **Human Role**: Human-written hypotheses directly reflect real-world outcomes, emphasizing the irreplaceable value of human judgment in complex reasoning tasks.

- **Scenario Dependency**: Model performance varies by context (e.g., O1 vs. O2), indicating the need for scenario-specific fine-tuning or prompt engineering.

### Technical Notes

- **Color Coding**: Not applicable (text-based screenshot).

- **Spatial Layout**: Two-column structure separates O1 (left) and O2 (right), with hypotheses listed vertically under each scenario.

- **Text Extraction**: All labels, hypotheses, and outcomes are transcribed verbatim. No non-English text present.