## Line Chart: Spearman Correlation vs. Model Size

### Overview

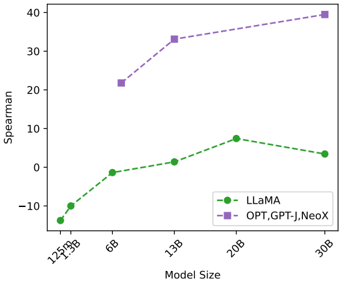

This line chart plots the Spearman correlation coefficient against model size for two series: LLaMA, and a combined group of OPT, GPT-J, and NeoX models. It shows how the correlation changes as model size increases.

### Components/Axes

* **X-axis:** Model Size (labeled at 125MB, 3B, 6B, 13B, 20B, 30B)

* **Y-axis:** Spearman (ranging from approximately -15 to 40; since a Spearman coefficient lies in [-1, 1], the plotted values are presumably scaled by 100)

* **Data Series 1:** LLaMA (represented by green circles connected by a dashed line)

* **Data Series 2:** OPT, GPT-J, NeoX (represented by purple squares connected by a dashed line)

* **Legend:** Located in the bottom-right corner, clearly labeling each data series with its corresponding color and name.

### Detailed Analysis

**LLaMA (Green Line):**

The LLaMA line starts at approximately -14 at 125MB, rises steeply to approximately -2 at 3B, climbs gradually through approximately 0 at 6B and 2 at 13B, peaks at approximately 8 at 20B, and then declines to approximately 5 at 30B.

* 125MB: Spearman ≈ -14

* 3B: Spearman ≈ -2

* 6B: Spearman ≈ 0

* 13B: Spearman ≈ 2

* 20B: Spearman ≈ 8

* 30B: Spearman ≈ 5

**OPT, GPT-J, NeoX (Purple Line):**

The OPT, GPT-J, NeoX line begins at approximately 22 at 125MB, stays near 24 at 3B and 6B, jumps to approximately 34 at 13B, and then rises more slowly to approximately 36 at 20B and 38 at 30B.

* 125MB: Spearman ≈ 22

* 3B: Spearman ≈ 24

* 6B: Spearman ≈ 24

* 13B: Spearman ≈ 34

* 20B: Spearman ≈ 36

* 30B: Spearman ≈ 38

### Key Observations

* The OPT, GPT-J, NeoX models exhibit a higher Spearman correlation than the LLaMA models at every plotted model size, by roughly 24 to 36 points.

* The Spearman correlation for LLaMA increases with model size up to 20B, then decreases slightly at 30B.

* The Spearman correlation for OPT, GPT-J, NeoX is nearly flat from 125MB to 6B (≈ 22 to 24), jumps by about 10 points at 13B, then gains only about 2 points per step up to 30B.

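* The LLaMA line is the only non-monotonic series. It spans a wider range (about 22 points, from -14 to 8, versus about 16 points for OPT, GPT-J, NeoX) and moves from negative correlation to roughly zero at 6B before turning positive.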
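The observations above can be checked directly against the approximate readings listed in the Detailed Analysis. The following is a minimal sketch, not the source of the chart: the variable names are illustrative, and the numbers are the approximate values read off the plot rather than exact data.

```python
# Approximate values read off the chart (assumed, not exact data).
sizes = ["125MB", "3B", "6B", "13B", "20B", "30B"]
llama = [-14, -2, 0, 2, 8, 5]
opt_family = [22, 24, 24, 34, 36, 38]  # OPT, GPT-J, NeoX series

# Gap between the two series at each model size.
gaps = [o - l for o, l in zip(opt_family, llama)]
always_higher = all(g > 0 for g in gaps)

# Model size at which the LLaMA series peaks.
llama_peak = sizes[llama.index(max(llama))]

# Change between consecutive model sizes for each series.
llama_steps = [b - a for a, b in zip(llama, llama[1:])]
opt_steps = [b - a for a, b in zip(opt_family, opt_family[1:])]

print("OPT/GPT-J/NeoX higher everywhere:", always_higher)
print("Gap range:", min(gaps), "to", max(gaps))
print("LLaMA peak at:", llama_peak)
print("LLaMA steps:", llama_steps)
print("OPT/GPT-J/NeoX steps:", opt_steps)
```

The step lists make the shape of each line explicit: the only negative step is LLaMA's last one (20B to 30B), and the only large step in the OPT, GPT-J, NeoX series is the one from 6B to 13B.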

### Interpretation

The chart suggests that larger models generally achieve a higher Spearman correlation. Spearman correlation measures rank agreement, so a higher value means the model's scores better preserve the relative ordering of the reference values they are compared against; the chart itself does not state what those reference values are. For the OPT, GPT-J, NeoX series, the gains shrink after 13B. The LLaMA series follows a different pattern, peaking at 20B and dipping slightly at 30B, which could indicate overfitting or a change in behavior at larger scales, although a difference this small in approximate readings may also be noise. The large gap between the two series suggests that the families differ in ways beyond parameter count, such as architecture or training data, but the chart alone cannot identify the cause.
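To make the notion of rank correlation concrete, here is a minimal pure-Python sketch of Spearman's coefficient, computed as the Pearson correlation of the ranks with tied values sharing their average rank. The function names and the example scores are hypothetical and are not taken from the chart or its underlying experiment.

```python
def average_ranks(values):
    """Return 1-based ranks; tied values share the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        # Extend j to cover the run of values tied with position i.
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def pearson(x, y):
    """Pearson correlation of two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    norm_x = sum((a - mean_x) ** 2 for a in x) ** 0.5
    norm_y = sum((b - mean_y) ** 2 for b in y) ** 0.5
    return cov / (norm_x * norm_y)


def spearman(x, y):
    """Spearman rank correlation: Pearson correlation of the ranks."""
    return pearson(average_ranks(x), average_ranks(y))


# Hypothetical example: reference scores vs. model scores for five items.
reference = [1, 2, 3, 4, 5]
model_scores = [10, 20, 25, 400, 300]  # last two items are swapped in rank

rho = spearman(reference, model_scores)
print(round(rho, 3))        # 0.9
print(round(rho * 100, 1))  # 90.0, the scale the chart's y-axis appears to use
```

Only the ordering of the scores matters: the large numeric jump from 25 to 400 has no effect on the result, while the single swapped pair lowers the coefficient from 1.0 to 0.9.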