## Line Chart: Loss Comparison Between MoBA and Full Attention Baseline

### Overview

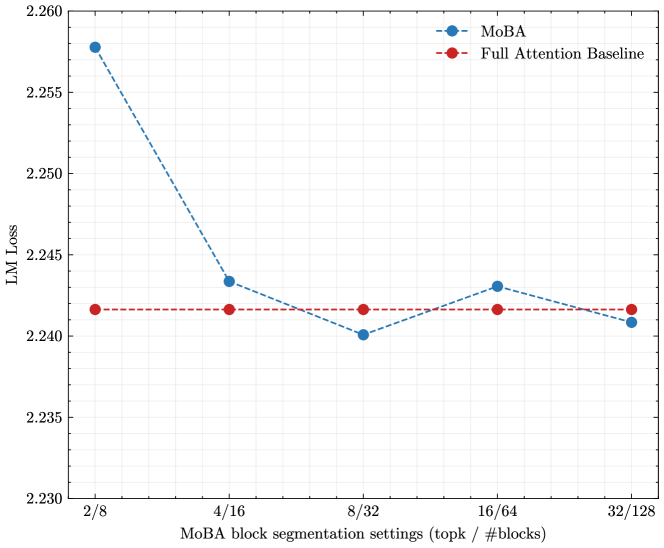

The chart compares the Language Model (LM) Loss of two models: MoBA (blue dashed line) and Full Attention Baseline (red dashed line) across different block segmentation settings. The x-axis represents MoBA block segmentation configurations (topk / #blocks), while the y-axis shows LM Loss values ranging from 2.230 to 2.260.

### Components/Axes

- **X-axis**: MoBA block segmentation settings (topk / #blocks)
  - Labels: `2/8`, `4/16`, `8/32`, `16/64`, `32/128`
- **Y-axis**: LM Loss (range: 2.230–2.260)
- **Legend**:
  - Blue dashed line: MoBA
  - Red dashed line: Full Attention Baseline
- **Legend Position**: Top-right corner
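All five x-axis labels have the same topk-to-#blocks ratio. The minimal Python sketch below checks this. It assumes each query attends to exactly `topk` of `#blocks` key/value blocks and ignores causal masking and any special handling of the query's own block. Under that assumption, the fraction of blocks selected stays at 1/4, so the axis varies block granularity rather than overall sparsity. The helper names `parse_setting` and `selected_fraction` are hypothetical.

```python
from fractions import Fraction

# x-axis labels from the chart, each written as "topk/#blocks"
LABELS = ["2/8", "4/16", "8/32", "16/64", "32/128"]


def parse_setting(label: str) -> tuple[int, int]:
    """Split a 'topk/#blocks' label into (topk, num_blocks)."""
    topk, num_blocks = (int(part) for part in label.split("/"))
    return topk, num_blocks


def selected_fraction(label: str) -> Fraction:
    """Fraction of blocks a query selects.

    Assumption: each query attends to exactly `topk` of `num_blocks` blocks;
    causal masking and any special handling of the query's own block are ignored.
    """
    topk, num_blocks = parse_setting(label)
    return Fraction(topk, num_blocks)


if __name__ == "__main__":
    for label in LABELS:
        print(label, selected_fraction(label))  # every setting prints 1/4
```

`Fraction` keeps the ratio exact, so the comparison across settings is not affected by floating-point rounding.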

### Detailed Analysis

#### MoBA (Blue Dashed Line)

- **2/8**: 2.258 (highest loss)

- **4/16**: 2.243 (sharp drop)

- **8/32**: 2.240 (lowest loss)

- **16/64**: 2.242 (slight increase)

- **32/128**: 2.241 (minor decrease)
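The step-to-step changes in MoBA's loss listed above can be reproduced with a short Python sketch. The values are approximate readings from the chart, not published numbers. The names `MOBA_LOSS` and `step_changes` are illustrative.

```python
# MoBA LM loss per setting, as read off the chart (approximate values)
MOBA_LOSS = {
    "2/8": 2.258,
    "4/16": 2.243,
    "8/32": 2.240,
    "16/64": 2.242,
    "32/128": 2.241,
}


def step_changes(losses: dict[str, float]) -> list[tuple[str, str, float]]:
    """Return (from, to, delta) for each consecutive pair; delta < 0 means loss fell."""
    settings = list(losses)  # dicts preserve insertion order (Python 3.7+)
    return [
        # Round to the chart's reading precision to hide float noise
        (a, b, round(losses[b] - losses[a], 3))
        for a, b in zip(settings, settings[1:])
    ]


if __name__ == "__main__":
    for a, b, delta in step_changes(MOBA_LOSS):
        print(f"{a} -> {b}: {delta:+.3f}")
```

The largest move is the 0.015 drop from `2/8` to `4/16`. Every later step is at most 0.003 in magnitude.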

#### Full Attention Baseline (Red Dashed Line)

- **All settings**: Stable at ~2.242 (flat line)
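A minimal Python sketch can compare MoBA with the baseline at each setting. It uses approximate chart readings and treats the baseline as a constant 2.242. The 0.002 tolerance in `settings_at_parity` is an arbitrary threshold chosen for illustration, not a significance test. Both helper names are hypothetical.

```python
# Approximate chart readings (not published numbers)
MOBA_LOSS = {"2/8": 2.258, "4/16": 2.243, "8/32": 2.240, "16/64": 2.242, "32/128": 2.241}
BASELINE_LOSS = 2.242  # Full Attention Baseline, flat across all settings


def gap_to_baseline(losses: dict[str, float], baseline: float) -> dict[str, float]:
    """MoBA loss minus baseline loss per setting; negative means MoBA is lower."""
    # Round to the chart's reading precision to hide float noise such as 0.0009999...
    return {setting: round(loss - baseline, 3) for setting, loss in losses.items()}


def settings_at_parity(losses: dict[str, float], baseline: float, tol: float = 0.002) -> list[str]:
    """Settings whose rounded gap to the baseline is within `tol` (illustrative threshold)."""
    gaps = gap_to_baseline(losses, baseline)
    return [setting for setting, gap in gaps.items() if abs(gap) <= tol]


if __name__ == "__main__":
    for setting, gap in gap_to_baseline(MOBA_LOSS, BASELINE_LOSS).items():
        print(f"{setting}: {gap:+.3f}")
    print("within tolerance:", settings_at_parity(MOBA_LOSS, BASELINE_LOSS))
```

Only `2/8` falls outside the band, with a gap of about +0.016. The gap is negative, meaning MoBA is slightly lower, at `8/32` and `32/128`.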

### Key Observations

1. **MoBA** shows a sharp reduction in LM Loss of about 0.015 from `2/8` (2.258) to `4/16` (2.243), then stays between 2.240 and 2.243 for the remaining settings.

2. **Full Attention Baseline** maintains a consistent loss (~2.242) across all segmentation settings.

3. From `4/16` onward, MoBA's loss stays within about 0.002 of the baseline's value (2.240–2.243 vs. ~2.242) and dips slightly below it at `8/32` and `32/128`.

### Interpretation

The data suggest that MoBA's LM Loss depends strongly on block granularity. At the coarsest setting (`2/8`), MoBA trails the Full Attention Baseline by about 0.016. Moving to `4/16` closes most of that gap, and from `8/32` onward MoBA matches the baseline to within about 0.002. Every setting has the same topk-to-#blocks ratio (1/4), so the fraction of blocks each query attends to is held fixed. The improvement therefore appears to come from finer segmentation rather than from attending to more blocks. Gains flatten after `8/32`, which implies diminishing returns from further granularity. The baseline's flat line is expected, since full attention does not use block segmentation. It serves as a fixed reference showing that MoBA, with sufficiently fine blocks, reaches comparable loss.
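To illustrate why finer blocks could help at a fixed selection ratio, here is a toy top-k block-gating sketch in plain Python. It assumes that gating scores each block by the dot product of the query with the mean of that block's keys and keeps the `topk` highest-scoring blocks. This is an assumption for illustration. The sketch omits causal masking and any special handling of the query's own block, and it is not the authors' implementation. The function names and the toy data are hypothetical.

```python
def mean_pool(keys: list[list[float]]) -> list[float]:
    """Element-wise average of a list of key vectors."""
    dim = len(keys[0])
    return [sum(k[d] for k in keys) / len(keys) for d in range(dim)]


def select_blocks(query: list[float], keys: list[list[float]], num_blocks: int, topk: int) -> list[int]:
    """Indices (ascending) of the `topk` blocks whose mean-pooled key best matches `query`.

    Assumes len(keys) is divisible by num_blocks; no causal masking is applied.
    """
    if len(keys) % num_blocks != 0:
        raise ValueError("sequence length must be divisible by num_blocks")
    block_size = len(keys) // num_blocks
    scores = []
    for b in range(num_blocks):
        block = keys[b * block_size:(b + 1) * block_size]
        centroid = mean_pool(block)
        scores.append(sum(q * c for q, c in zip(query, centroid)))
    # Rank blocks by score (highest first) and keep the top k
    ranked = sorted(range(num_blocks), key=lambda b: scores[b], reverse=True)
    return sorted(ranked[:topk])


if __name__ == "__main__":
    # Toy 16-token sequence with two relevant tokens, at positions 1 and 14
    keys = [[0.0] for _ in range(16)]
    keys[1] = [10.0]
    keys[14] = [9.0]
    print(select_blocks([1.0], keys, num_blocks=4, topk=1))  # [0]: covers token 1 only
    print(select_blocks([1.0], keys, num_blocks=8, topk=2))  # [0, 7]: covers tokens 1 and 14
```

Both calls select 1/4 of the 16 tokens. With 8 smaller blocks, however, the budget can be split across two distant regions, while a single large block cannot reach both relevant tokens.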

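**Notable Anomaly**: MoBA's loss rises slightly at `16/64` (2.242, up from 2.240 at `8/32`) before easing to 2.241 at `32/128`. The change is only 0.002, far smaller than the 0.015 drop between `2/8` and `4/16`, and may simply reflect run-to-run noise. The chart alone cannot establish whether overfitting or computational trade-offs play a role.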