## Diagram: Transformer Architectures Comparison

### Overview

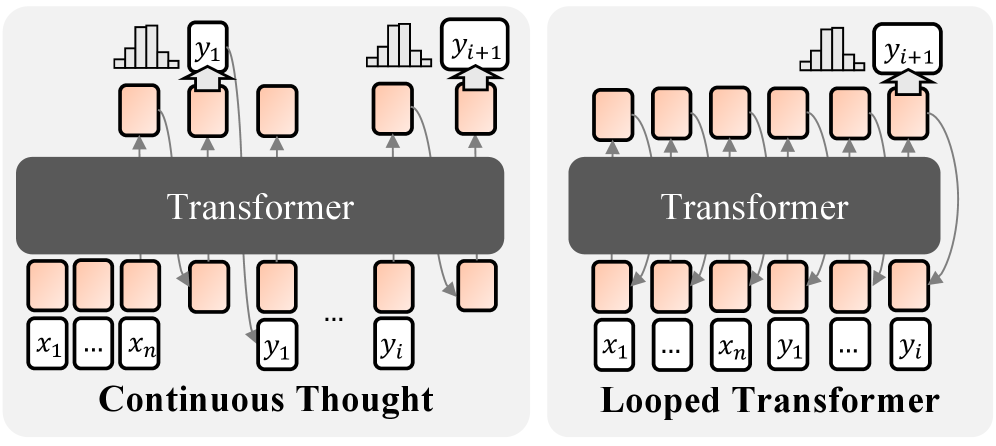

The image compares two Transformer-based architectures: "Continuous Thought" (left) and "Looped Transformer" (right). Both diagrams illustrate input-output relationships and processing flows within a Transformer model.

### Components/Axes

- **Central Block**: Labeled "Transformer" in both diagrams, representing the core processing unit.

- **Input Sequence**:
  - Left diagram: Labeled `x₁ ... xₙ` (input tokens).
  - Right diagram: The same input sequence, with the outputs (`y₁ ... yᵢ`) also fed back into the input.

- **Output Sequence**:
  - Left diagram: Labeled `y₁ ... yᵢ` (output tokens).
  - Right diagram: Outputs `y₁ ... yᵢ`, with a feedback loop connecting `yᵢ₊₁` back to the input.

- **Thought Process**:
  - Left diagram: Labeled "Continuous Thought"; shows sequential processing without feedback.
  - Right diagram: Labeled "Looped Transformer"; shows a circular feedback loop from `yᵢ₊₁` to `x₁`.

### Detailed Analysis

- **Continuous Thought**:
  - Inputs (`x₁ ... xₙ`) flow unidirectionally through the Transformer to produce outputs (`y₁ ... yᵢ`).
  - No feedback mechanism; processing stops after the final output.

- **Looped Transformer**:
  - Outputs (`y₁ ... yᵢ`) are fed back into the input sequence via a looped connection (`yᵢ₊₁ → x₁`).
  - This enables iterative processing, in which outputs influence subsequent inputs.
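The two flows described above can be sketched in a few lines of Python. This is a toy illustration under stated assumptions, not the implementation of either architecture: `toy_transformer` is a hypothetical stand-in for the Transformer block (a fixed mixing rule, not a real model), and `one_pass`, `looped`, and `num_loops` are illustrative names introduced here.

```python
from typing import Callable, List

Tokens = List[float]  # toy stand-in for a sequence of token representations


def toy_transformer(tokens: Tokens) -> Tokens:
    """Hypothetical stand-in for the Transformer block (not a real model).

    Maps a non-empty sequence to a sequence of the same length: each output
    mixes its own token with the sequence mean, a crude proxy for attention.
    """
    mean = sum(tokens) / len(tokens)
    return [0.5 * t + 0.5 * mean for t in tokens]


def one_pass(block: Callable[[Tokens], Tokens], x: Tokens) -> Tokens:
    """Flow described for the left diagram: the inputs go through the block once."""
    return block(x)


def looped(block: Callable[[Tokens], Tokens], x: Tokens, num_loops: int) -> Tokens:
    """Flow described for the right diagram: each output is fed back as the next input."""
    y = x
    for _ in range(num_loops):
        y = block(y)  # the previous pass's output becomes this pass's input
    return y


x = [1.0, 3.0]
print(one_pass(toy_transformer, x))   # [1.5, 2.5]
print(looped(toy_transformer, x, 3))  # [1.875, 2.125]
```

With `num_loops=1`, `looped` returns exactly what `one_pass` returns, which mirrors the observation that the looped design generalizes a single pass; each further iteration refines the previous result (here, pulling the toy values closer to their mean).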

### Key Observations

1. **Feedback Loop**: The Looped Transformer introduces a circular dependency between outputs and inputs, absent in the Continuous Thought model.

2. **Sequential vs. Iterative**: The left diagram represents a one-pass Transformer, while the right diagram supports multi-pass processing.

3. **Token Flow**: Both diagrams use identical input/output token labels (`x₁ ... xₙ`, `y₁ ... yᵢ`), but the looped architecture routes outputs back into the input instead of ending after one pass.

### Interpretation

The diagrams highlight a critical architectural difference:

- **Continuous Thought**, as drawn, follows standard Transformer behavior: inputs are processed once to generate outputs.

- **Looped Transformer** introduces a feedback mechanism that supports iterative reasoning: each pass can refine the output of the previous one, loosely analogous to step-by-step "chain-of-thought" reasoning.

The looped architecture may suit tasks that require repeated context updates, such as real-time decision-making or self-correcting language models. The trade-off is cost: every additional loop is another pass through the Transformer, so compute grows with the number of iterations, whereas the Continuous Thought design as depicted makes a single pass.
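To make the overhead point concrete, the sketch below counts how many times a block is applied when its output is fed back `num_loops` times. It is a minimal illustration under assumptions: `CountingBlock`, `identity_block`, and `run_looped` are hypothetical helpers, and counting calls ignores the other factors (sequence length, model size) that determine real cost.

```python
from typing import Callable, List

Tokens = List[float]


class CountingBlock:
    """Hypothetical wrapper that counts how many times a block is applied."""

    def __init__(self, block: Callable[[Tokens], Tokens]) -> None:
        self.block = block
        self.calls = 0

    def __call__(self, tokens: Tokens) -> Tokens:
        self.calls += 1
        return self.block(tokens)


def identity_block(tokens: Tokens) -> Tokens:
    # Placeholder computation; only the number of calls matters here.
    return list(tokens)


def run_looped(block: Callable[[Tokens], Tokens], x: Tokens, num_loops: int) -> Tokens:
    # Feed each output back in as the next input, num_loops times.
    y = x
    for _ in range(num_loops):
        y = block(y)
    return y


for k in (1, 4, 8):
    counter = CountingBlock(identity_block)
    run_looped(counter, [0.0, 1.0], num_loops=k)
    print(k, counter.calls)  # prints 1 1, then 4 4, then 8 8
```

The number of block applications equals the loop count, so under this simple model the looped design costs `num_loops` times as much as a single pass.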