## Line Chart: Differentiable Parameter Learning with 1 label

### Overview

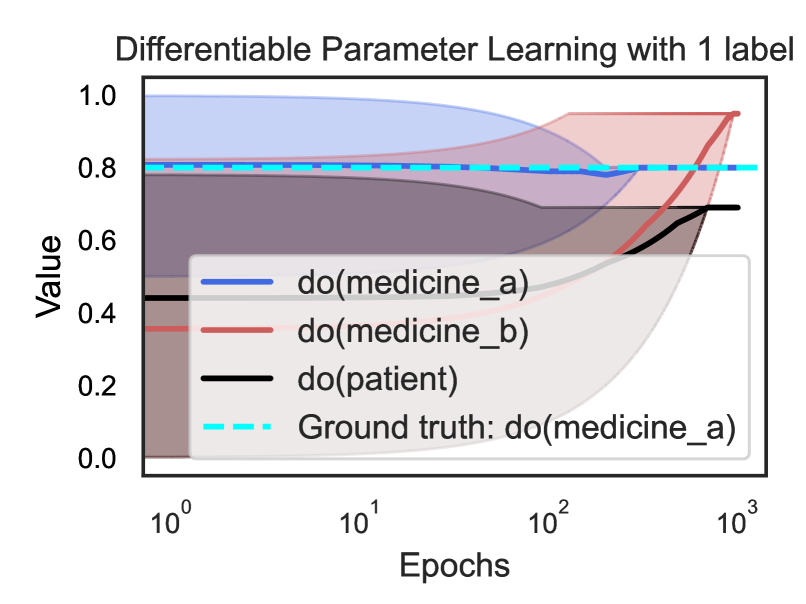

The chart shows how the learned values for three interventions (`do(medicine_a)`, `do(medicine_b)`, `do(patient)`) evolve over training epochs 1 to 1000. A dashed cyan line marks the ground truth for `do(medicine_a)`. The x-axis (epochs) is logarithmic and the y-axis (value, 0.0 to 1.0) is linear.

---

### Components/Axes

- **X-axis**: "Epochs" (logarithmic scale: 10⁰ to 10³)

- **Y-axis**: "Value" (linear scale: 0.0 to 1.0)

- **Legend**:

  - Blue solid line: `do(medicine_a)`

  - Red solid line: `do(medicine_b)`

  - Black solid line: `do(patient)`

  - Dashed cyan line: Ground truth (`do(medicine_a)`)

---

### Detailed Analysis

1. **`do(medicine_a)` (Blue)**:

   - Starts at **~0.8** (epoch 10⁰).

   - Dips slightly to **~0.75** at 10¹ epochs.

   - Stabilizes near **~0.8** by 10² epochs.

   - Remains flat at **~0.8** through 10³ epochs.

2. **`do(medicine_b)` (Red)**:

   - Begins at **~0.4** (epoch 10⁰).

   - Rises steadily to **~0.8** by 10² epochs.

   - Continues increasing to **~0.9** at 10³ epochs.

3. **`do(patient)` (Black)**:

   - Starts at **~0.2** (epoch 10⁰).

   - Gradually increases to **~0.6** by 10² epochs.

   - Reaches **~0.7** at 10³ epochs.

4. **Ground Truth** (Dashed Cyan):

   - Horizontal line at **0.8** across all epochs.
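The readings above can be turned into signed gaps from the dashed line. The sketch below is illustrative only: the `readings` dictionary holds the approximate values listed above (visual estimates, not the underlying data), and the names `readings`, `GROUND_TRUTH`, and `gaps` are made up for this example.

```python
# Approximate values read off the chart (epoch -> value).
# These are visual estimates from the list above, not the plotted data.
readings = {
    "do(medicine_a)": {1: 0.8, 10: 0.75, 100: 0.8, 1000: 0.8},
    "do(medicine_b)": {1: 0.4, 100: 0.8, 1000: 0.9},
    "do(patient)": {1: 0.2, 100: 0.6, 1000: 0.7},
}

# Dashed cyan line; the legend labels it as the ground truth for do(medicine_a).
GROUND_TRUTH = 0.8


def gaps(series, target):
    """Return {name: {epoch: value - target}}, rounded to two decimals."""
    return {
        name: {epoch: round(value - target, 2) for epoch, value in points.items()}
        for name, points in series.items()
    }


result = gaps(readings, GROUND_TRUTH)
for name, by_epoch in result.items():
    print(name, by_epoch)
```

A positive gap means the curve sits above the dashed line and a negative gap means it sits below. Since the legend ties the ground truth to `do(medicine_a)`, the gaps for the other two series describe only their position relative to that line, not an error against their own targets.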

---

### Key Observations

- **`do(medicine_a)`** starts at the ground truth (~0.8), dips to ~0.75 around 10¹ epochs, and returns to ~0.8 by 10² epochs.

- **`do(medicine_b)`** stays above `do(patient)` throughout, reaches the ground-truth level (~0.8) by 10² epochs, and overshoots it to ~0.9 by 10³.

- **`do(patient)`** stays lowest throughout and ends at ~0.7, about 0.1 below the ground truth value.

- The ground truth remains constant, serving as a benchmark for comparison.

---

### Interpretation

The chart shows that the learning trajectories differ across interventions. `do(medicine_a)` (blue) stays at or near the ground truth throughout, with a brief dip of about 0.05 around 10¹ epochs; the chart alone does not show what causes this dip. `do(medicine_b)` (red) rises from ~0.4 to the ground-truth level (~0.8) by 10² epochs and then overshoots it to ~0.9 by 10³. `do(patient)` (black) stays lowest throughout and ends at ~0.7. Because the legend labels the ground truth as belonging to `do(medicine_a)`, only the blue curve can be compared to it directly; the chart shows no separate targets for the other two interventions. The logarithmic epoch scale gives each decade of training equal width, which makes early-epoch changes easier to see, and the linear value axis lets vertical distances be read directly as differences in value. At 10³ epochs `do(medicine_a)` is closest to the constant ground truth (~0.8), while `do(medicine_b)` and `do(patient)` each end about 0.1 away, on opposite sides.
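To make the effect of the logarithmic epoch axis concrete, the short sketch below compares how much of the axis width the first ten epochs occupy on a log scale versus a linear one. The helper name `axis_share` and its parameters are hypothetical, and the axis limits (1 to 1000) are taken from the description above.

```python
import math


def axis_share(lo, hi, axis_min=1, axis_max=1000, log=True):
    """Fraction of the axis width occupied by the interval [lo, hi]."""
    # On a log axis, positions are proportional to log10(x); on a linear axis, to x.
    scale = math.log10 if log else float
    return (scale(hi) - scale(lo)) / (scale(axis_max) - scale(axis_min))


log_share = axis_share(1, 10, log=True)       # one of three decades
linear_share = axis_share(1, 10, log=False)   # 9 out of 999 units

print(f"log axis:    {log_share:.1%}")
print(f"linear axis: {linear_share:.1%}")
```

Under these assumptions, epochs 1 to 10 take up a third of a logarithmic axis but under 1% of a linear one, which is why the early dip in `do(medicine_a)` is visible at all.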