
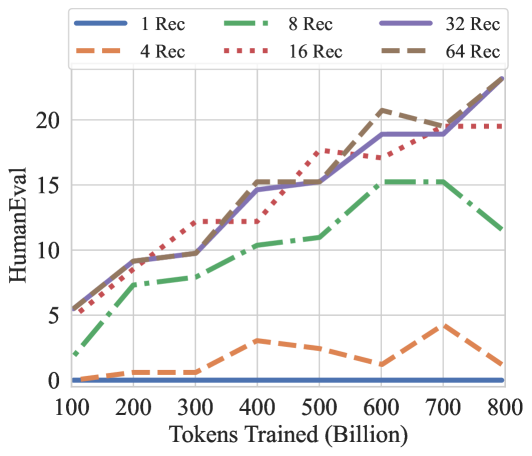

## Line Chart: HumanEval Performance vs. Tokens Trained

### Overview

This line chart plots the HumanEval score against the number of tokens trained (in billions) for six settings labeled "Rec", read here as the number of retrieval augmentations. It shows how benchmark performance evolves over training at each level of retrieval context.

### Components/Axes

* **X-axis:** "Tokens Trained (Billion)" - Ranges from approximately 100 to 800 billion tokens.

* **Y-axis:** "HumanEval" - Ranges from 0 to 22.

* **Legend:** Located at the top of the chart. It identifies the six lines by number of retrieval augmentations:
  * Blue solid line: "1 Rec"
  * Orange dashed line: "4 Rec"
  * Green dash-dotted line: "8 Rec"
  * Red dotted line: "16 Rec"
  * Purple solid line: "32 Rec"
  * Gray dashed line: "64 Rec"

### Detailed Analysis

The chart displays six lines, each representing a different number of retrieval augmentations.

* **1 Rec (Blue Solid):** The line slopes upward consistently from approximately 6 at 100 billion tokens to approximately 21 at 800 billion tokens. Specific data points (approximate): (100, 6), (200, 8), (300, 11), (400, 15), (500, 17), (600, 19), (700, 20), (800, 21).

* **4 Rec (Orange Dashed):** The line starts at approximately 0 at 100 billion tokens. It rises unevenly, dipping to about 3 at 600 billion tokens, reaches a peak of approximately 5 at 700 billion tokens, and then declines to approximately 2 at 800 billion tokens. Specific data points (approximate): (100, 0), (200, 1), (300, 2), (400, 3), (500, 4), (600, 3), (700, 5), (800, 2).

* **8 Rec (Green Dashed-Dotted):** The line starts at approximately 2 at 100 billion tokens, rises to approximately 15 at 600 billion tokens, and then declines to approximately 13 at 800 billion tokens. Specific data points (approximate): (100, 2), (200, 5), (300, 8), (400, 11), (500, 13), (600, 15), (700, 14), (800, 13).

* **16 Rec (Red Dotted):** The line starts at approximately 4 at 100 billion tokens, rises to approximately 18 at 600 billion tokens, and then plateaus around 18-19. Specific data points (approximate): (100, 4), (200, 7), (300, 10), (400, 14), (500, 16), (600, 18), (700, 19), (800, 19).

* **32 Rec (Purple Solid):** The line starts at approximately 5 at 100 billion tokens and rises steadily to approximately 20 by 700 billion tokens, where it stays through 800 billion tokens. Specific data points (approximate): (100, 5), (200, 8), (300, 12), (400, 16), (500, 18), (600, 19), (700, 20), (800, 20).

* **64 Rec (Gray Dashed):** The line starts at approximately 1 at 100 billion tokens, rises to a peak of approximately 14 at 600 billion tokens, and then declines to approximately 11 at 800 billion tokens. Specific data points (approximate): (100, 1), (200, 2), (300, 5), (400, 10), (500, 13), (600, 14), (700, 13), (800, 11).
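The per-line trends are easier to check side by side. The sketch below transcribes the approximate data points listed above into Python. For each line, it computes the peak, the first token count at which the peak is reached, the final value, and the drop from peak to final. The readings are eyeballed from the plot and are not the underlying data. The names `TOKENS`, `SCORES`, and `summarize` are hypothetical and are used only for illustration.

```python
# Approximate HumanEval readings transcribed from the bullets above.
# These are eyeballed plot values, not the underlying data (hypothetical names).
TOKENS = [100, 200, 300, 400, 500, 600, 700, 800]  # billions of tokens trained
SCORES = {
    "1 Rec": [6, 8, 11, 15, 17, 19, 20, 21],
    "4 Rec": [0, 1, 2, 3, 4, 3, 5, 2],
    "8 Rec": [2, 5, 8, 11, 13, 15, 14, 13],
    "16 Rec": [4, 7, 10, 14, 16, 18, 19, 19],
    "32 Rec": [5, 8, 12, 16, 18, 19, 20, 20],
    "64 Rec": [1, 2, 5, 10, 13, 14, 13, 11],
}


def summarize(scores):
    """Return (peak_score, tokens_at_peak, final_score, drop_from_peak)."""
    peak = max(scores)
    tokens_at_peak = TOKENS[scores.index(peak)]  # first checkpoint reaching the peak
    final = scores[-1]
    return peak, tokens_at_peak, final, peak - final


for label, scores in SCORES.items():
    peak, at, final, drop = summarize(scores)
    print(f"{label:>6}: peak {peak:>2} at {at}B, final {final:>2}, drop {drop}")
```

With these readings, three lines end below their peaks: "4 Rec" (5 to 2), "8 Rec" (15 to 13), and "64 Rec" (14 at 600 billion tokens to 11).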

### Key Observations

* More training tokens generally raise HumanEval scores at every retrieval augmentation level. However, three lines ("4 Rec", "8 Rec", and "64 Rec") fall back from their peaks by 800 billion tokens.

* The "1 Rec" and "32 Rec" lines are the top two at every checkpoint, ending at approximately 21 and 20.

* The "4 Rec", "8 Rec", and "64 Rec" lines peak before 800 billion tokens (at about 700, 600, and 600 billion tokens, respectively) and then decline. Because "4 Rec" also stays the lowest line throughout (never above about 5), the decline is not simply a matter of too much retrieval context.

* The "16 Rec" line rises and then plateaus near 19, just below "1 Rec" and "32 Rec". The "8 Rec" line climbs more slowly, peaks at about 15, and ends near 13.
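The ranking and monotonicity claims can be checked the same way. This sketch reuses the approximate readings from the Detailed Analysis, which are again eyeballed values and not the underlying data. The names `SCORES`, `top_k`, and `rec_count` are hypothetical. The sketch lists the two highest-scoring lines at each checkpoint and tests whether the final score rises or falls monotonically with the Rec count.

```python
# Approximate HumanEval readings transcribed from the Detailed Analysis
# (eyeballed from the plot; all names here are hypothetical).
TOKENS = [100, 200, 300, 400, 500, 600, 700, 800]  # billions of tokens trained
SCORES = {
    "1 Rec": [6, 8, 11, 15, 17, 19, 20, 21],
    "4 Rec": [0, 1, 2, 3, 4, 3, 5, 2],
    "8 Rec": [2, 5, 8, 11, 13, 15, 14, 13],
    "16 Rec": [4, 7, 10, 14, 16, 18, 19, 19],
    "32 Rec": [5, 8, 12, 16, 18, 19, 20, 20],
    "64 Rec": [1, 2, 5, 10, 13, 14, 13, 11],
}


def top_k(checkpoint_index, k=2):
    """Return the k highest-scoring labels at one checkpoint.

    sorted() stays stable with reverse=True, so ties keep legend order.
    """
    ranked = sorted(SCORES, key=lambda label: SCORES[label][checkpoint_index], reverse=True)
    return ranked[:k]


def rec_count(label):
    """Parse the numeric prefix of a legend label such as '16 Rec'."""
    return int(label.split()[0])


# Leaders at every checkpoint.
for i, tokens in enumerate(TOKENS):
    print(f"{tokens}B: {top_k(i)}")

# Final (800B) score ordered by Rec count, plus a monotonicity check.
final_by_rec = sorted((rec_count(label), scores[-1]) for label, scores in SCORES.items())
finals = [score for _, score in final_by_rec]
nondecreasing = all(a <= b for a, b in zip(finals, finals[1:]))
nonincreasing = all(a >= b for a, b in zip(finals, finals[1:]))
print("final scores by Rec count:", final_by_rec)
print("monotonic in Rec count:", nondecreasing or nonincreasing)
```

With these readings, "1 Rec" and "32 Rec" are the top two at all eight checkpoints, tying at 200, 600, and 700 billion tokens. The final scores are not monotonic in the Rec count.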

### Interpretation

The data suggests that the number of retrieval augmentations affects HumanEval performance in a non-monotonic way, so the setting has to be tuned rather than simply maximized. At 800 billion tokens, the approximate final scores are 21 (1 Rec), 2 (4 Rec), 13 (8 Rec), 19 (16 Rec), 20 (32 Rec), and 11 (64 Rec). The two best settings are therefore 1 Rec and 32 Rec, with 16 Rec close behind, while 4 Rec is the lowest and 64 Rec falls well below 32 Rec. The late-training declines for 4, 8, and 64 Rec could reflect the model being distracted by irrelevant retrieved information or overfitting to the retrieval data, but the chart alone cannot confirm either explanation. The lines remain well separated at 800 billion tokens rather than converging, so the choice of retrieval setting still matters late in training. This single chart cannot show whether the best setting carries over to other model sizes or training data.