## Heatmap: Layer Activation vs. Training Steps

### Overview

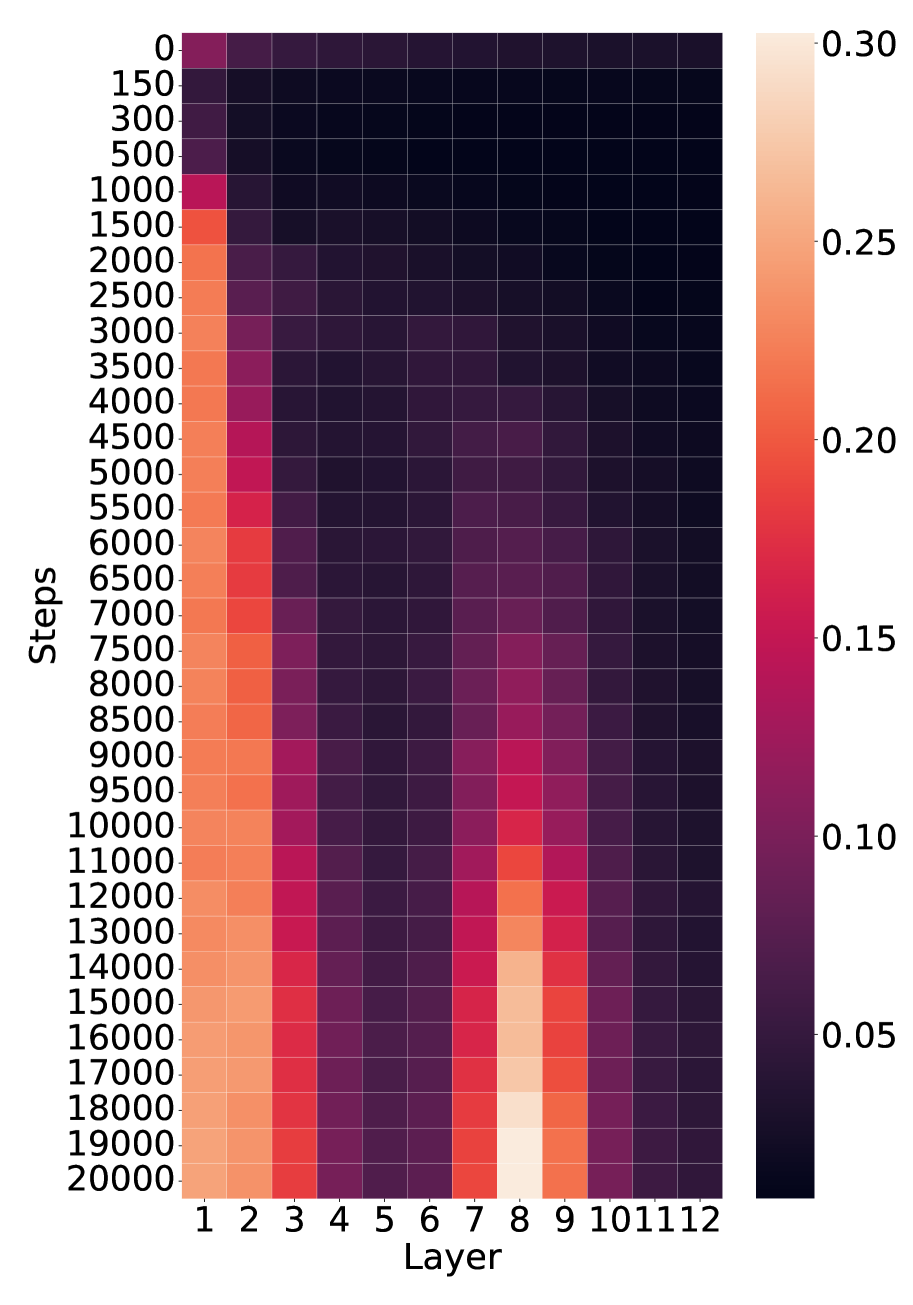

The image is a heatmap visualizing the activation levels of different layers in a neural network during training. The x-axis represents the layer number (1 to 12), and the y-axis represents the training steps (0 to 20000). The color intensity indicates the activation level, ranging from dark purple (low activation) to light orange (high activation).

### Components/Axes

* **X-axis:** "Layer" - Represents the layer number in the neural network, ranging from 1 to 12.

* **Y-axis:** "Steps" - Represents the training steps, ranging from 0 to 20000. The labeled steps are unevenly spaced: 150, 300, 500, then every 500 steps from 1000 to 10000, then every 1000 steps from 11000 to 20000.

* **Colorbar (Legend):** Located on the right side of the heatmap. It maps color intensity to activation levels, ranging from 0.05 (dark purple) to 0.30 (light orange), with increments of 0.05.
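Because the labeled steps are not evenly spaced, rows of equal height in the heatmap cover different amounts of training. The following minimal Python sketch rebuilds the step labels listed for the y-axis and computes the gaps between them. Including 0 as a labeled row is an assumption based on the stated axis range, and the variable names are illustrative only.

```python
# Step labels as listed for the y-axis.
# Assumption: the origin (0) is also a labeled row, since the axis is said to start at 0.
steps = [0, 150, 300, 500]
steps += list(range(1000, 10001, 500))    # every 500 steps from 1000 to 10000
steps += list(range(11000, 20001, 1000))  # every 1000 steps from 11000 to 20000

# Gap between each consecutive pair of labeled steps.
gaps = [later - earlier for earlier, later in zip(steps, steps[1:])]

# Distinct gap sizes, smallest first.
distinct_gaps = sorted(set(gaps))

print(len(steps))      # number of labeled steps, including the assumed 0
print(distinct_gaps)   # the different spacings that appear on the axis
```

Under these assumptions the early part of training is sampled more densely than the later part, so vertical distance in the heatmap is not proportional to the number of training steps.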

### Detailed Analysis

The heatmap shows how the activation levels of each layer change as the training progresses.

* **Layer 1:** Shows high activation (light orange) from the beginning (0 steps) until the end (20000 steps). The activation level appears relatively constant throughout the training process.

* **Layer 2:** Similar to Layer 1, it exhibits high activation levels throughout the training.

* **Layer 3:** Starts with low activation (dark purple) and gradually increases to a moderate level (pink) as training progresses.

* **Layers 4-7:** These layers show consistently low activation levels (dark purple) throughout the entire training process.

* **Layer 8:** Starts with low activation and increases as training progresses, following the same upward trend as Layer 3 but reaching a higher level (orange) by the end of training.

* **Layer 9:** Shows a pattern similar to Layer 8, with activation increasing as training progresses.

* **Layers 10-12:** These layers show consistently low activation levels (dark purple) throughout the entire training process.

**Specific Data Points (Approximate):**

* At 0 steps, Layer 1 has an activation level of approximately 0.30 (light orange).

* At 20000 steps, Layer 1 has an activation level of approximately 0.30 (light orange).

* At 0 steps, Layer 3 has an activation level of approximately 0.05 (dark purple).

* At 20000 steps, Layer 3 has an activation level of approximately 0.15 (pink).

* At 0 steps, Layer 5 has an activation level of approximately 0.05 (dark purple).

* At 20000 steps, Layer 5 has an activation level of approximately 0.05 (dark purple).

* At 10000 steps, Layer 8 has an activation level of approximately 0.10 (purple-pink).

* At 20000 steps, Layer 8 has an activation level of approximately 0.25 (orange).

### Key Observations

* Layers 1 and 2 consistently exhibit high activation levels throughout the training process.

* Layers 3, 8, and 9 show an increase in activation levels as training progresses.

* Layers 4-7 and 10-12 consistently exhibit low activation levels throughout the training process.

* The largest changes in activation occur in Layers 3, 8, and 9; the other layers stay roughly constant.
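The grouping in these observations can be sketched as a simple rule applied to the approximate readings listed under "Specific Data Points". This is a hedged illustration, not the method used to produce the figure: the cutoffs `HIGH` and `MIN_CHANGE` and the function `classify` are hypothetical, and Layer 8 uses its 10000-step reading as the starting value because no 0-step value is given for it.

```python
# Approximate (first, last) activation readings from the listed data points.
# Layer 8 has no 0-step reading in the text, so its 10000-step value stands in.
readings = {
    1: (0.30, 0.30),
    3: (0.05, 0.15),
    5: (0.05, 0.05),
    8: (0.10, 0.25),
}

HIGH = 0.25        # hypothetical cutoff for "high" activation
MIN_CHANGE = 0.05  # hypothetical minimum change that counts as a trend


def classify(first, last):
    """Label a layer by comparing its first and last activation readings."""
    if last - first >= MIN_CHANGE:
        return "increasing"
    if first - last >= MIN_CHANGE:
        return "decreasing"
    # No clear trend: decide by the average level of the two readings.
    return "stable high" if (first + last) / 2 >= HIGH else "stable low"


labels = {layer: classify(first, last) for layer, (first, last) in readings.items()}

for layer, label in labels.items():
    print(f"Layer {layer}: {label}")
```

With only two readings per layer, this rule cannot distinguish a gradual rise from a sudden jump; a fuller analysis would use every row of the heatmap.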

### Interpretation

The heatmap suggests that Layers 1 and 2 are highly active from the beginning of training, which may indicate that they learn useful features quickly. Layers 3, 8, and 9 increase their activation gradually, which may indicate that they learn more complex features as training progresses. Layers 4-7 and 10-12 remain relatively inactive, which could mean that they are redundant or contribute little to learning on this task.

These patterns could inform changes to the network architecture, for example removing or modifying the less active layers. The constant high activation of the first two layers might also be a sign of overfitting to the initial training data, although activation level alone cannot confirm this. If overfitting is verified by other means, regularization techniques could address it.