TECHNICAL ASSET FINGERPRINT

2a40694c092a121c95237534

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

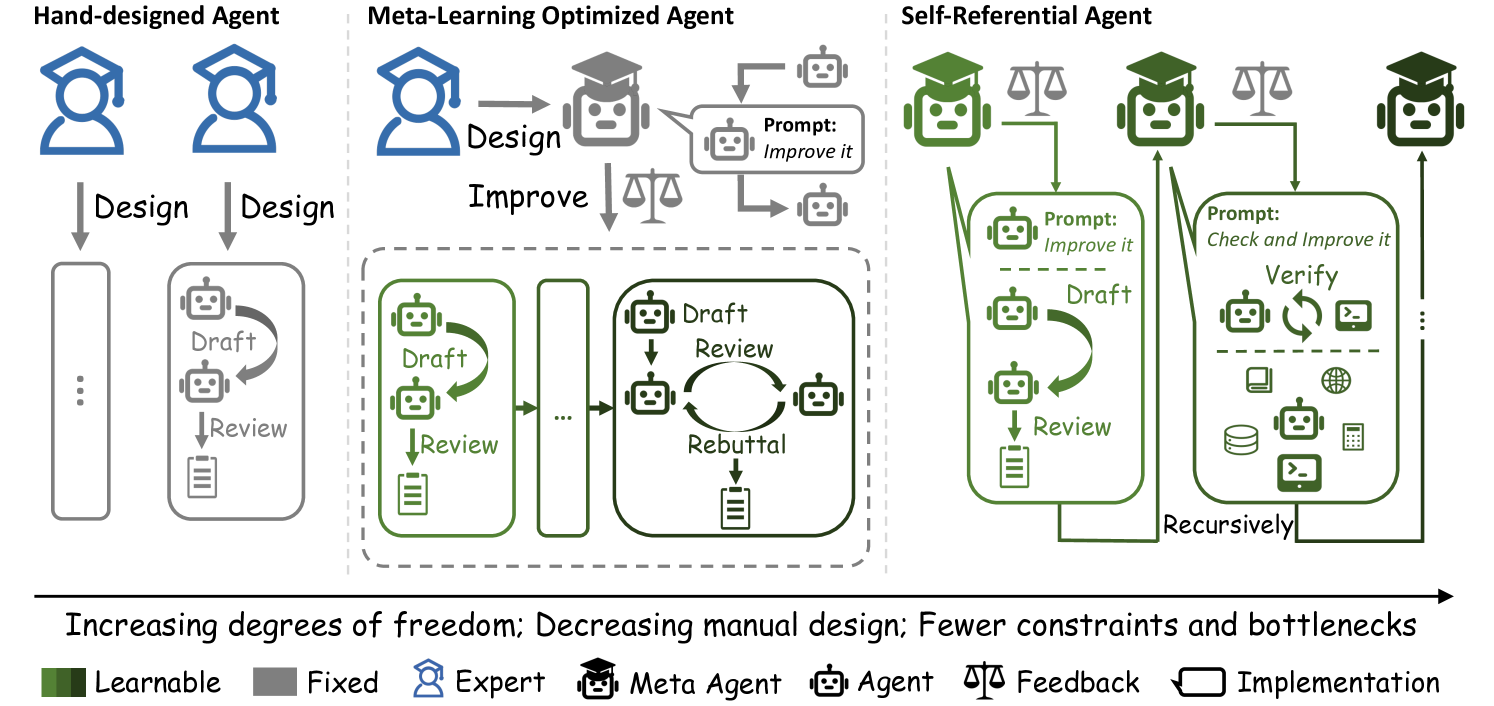

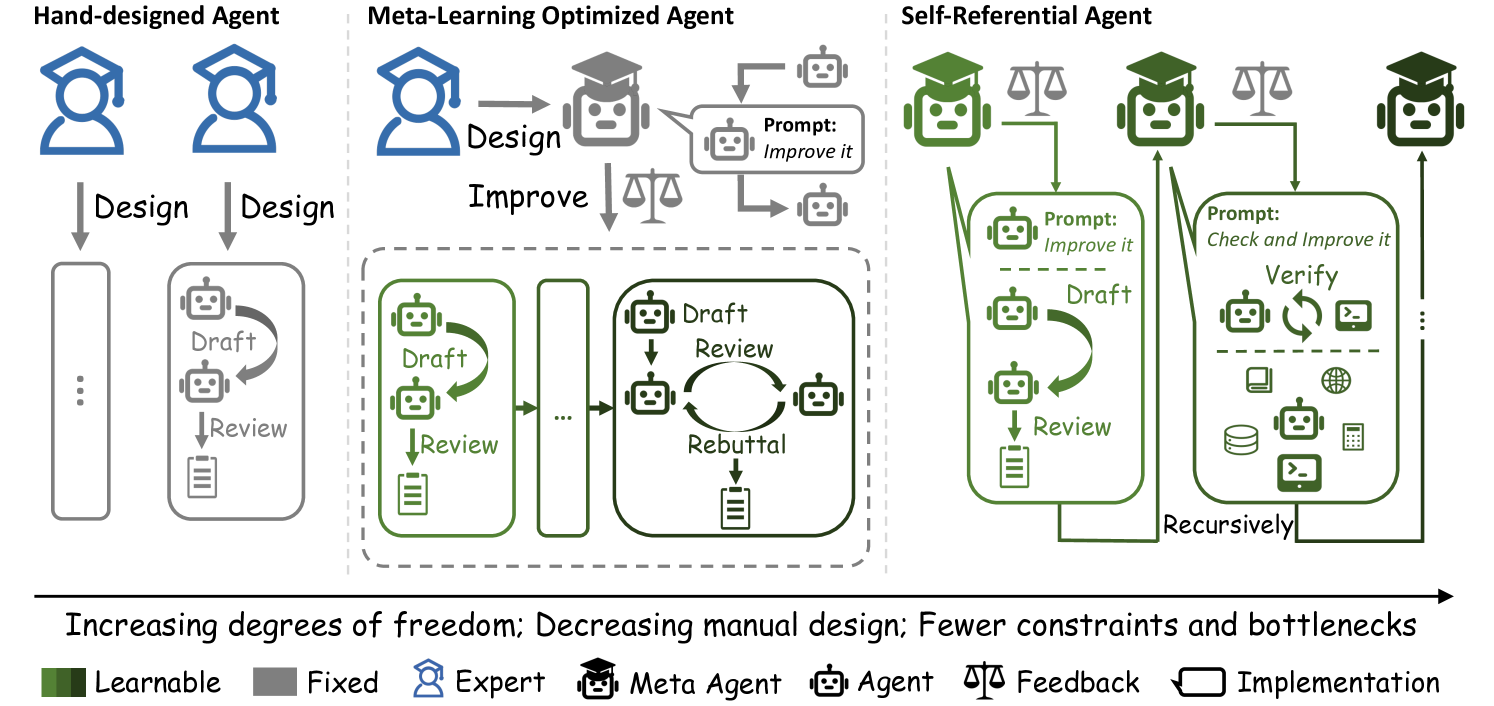

## Diagram: Agent Design Paradigms

### Overview

The image presents a diagram comparing three agent design paradigms: Hand-designed Agent, Meta-Learning Optimized Agent, and Self-Referential Agent. The diagram illustrates the design and implementation processes for each type, highlighting the increasing degrees of freedom and decreasing manual design as one moves from left to right.

### Components/Axes

* **Titles:**
  * Hand-designed Agent (top-left)
  * Meta-Learning Optimized Agent (top-center)
  * Self-Referential Agent (top-right)
* **Legend:** (bottom)
  * Learnable (dark green square)
  * Fixed (gray square)
  * Expert (blue person icon with graduation cap)
  * Meta Agent (black robot icon with graduation cap)
  * Agent (gray robot icon)
  * Feedback (scales icon)
  * Implementation (rectangle icon)
* **Process Steps:** Design, Draft, Review, Rebuttal, Verify
* **Agents:** Represented by robot icons, colored gray or green.
* **Directional Arrows:** Indicate the flow of processes.
* **Prompts:** Text boxes indicating instructions given to the agents.
* **Icons within "Verify" box:** Represent various data types and operations (e.g., database, globe, calculator, code).
* **Horizontal Axis:** "Increasing degrees of freedom; Decreasing manual design; Fewer constraints and bottlenecks" (bottom)

### Detailed Analysis

**1. Hand-designed Agent (Left)**

* **Design:** Two blue "Expert" icons (person with graduation cap) are at the top, each with a "Design" arrow pointing downwards.

* **Implementation:** A gray vertical rectangle with three dots inside represents the implementation phase. A gray "Agent" drafts and reviews.

* **Process:** The "Draft" step has a gray robot icon, and the "Review" step has a gray robot icon and a document icon.

**2. Meta-Learning Optimized Agent (Center)**

* **Design & Improve:** A blue "Expert" icon designs, which leads to a gray "Meta Agent" (robot with graduation cap). The Meta Agent is prompted to "Improve it" by two gray "Agent" icons. Feedback (scales icon) is provided.

* **Implementation:** A dashed gray rectangle surrounds the implementation phase.

* **Process:** The "Draft" and "Review" steps are performed by green "Agent" icons. A "Rebuttal" loop connects the "Draft" and "Review" steps. A gray vertical rectangle with three dots inside represents the implementation phase.

**3. Self-Referential Agent (Right)**

* **Design & Improve:** A green "Meta Agent" (robot with graduation cap) is at the top. Feedback (scales icon) is provided.

* **Implementation:** A dashed green rectangle surrounds the implementation phase.

* **Process:** The "Draft" and "Review" steps are performed by green "Agent" icons. A "Rebuttal" loop connects the "Draft" and "Review" steps. A "Verify" step, prompted by "Check and Improve it", contains icons representing data and operations. The entire process is labeled "Recursively". A vertical line with three dots inside represents the implementation phase.

### Key Observations

* The diagram illustrates a progression from manual design (Hand-designed Agent) to automated design and improvement (Meta-Learning Optimized Agent and Self-Referential Agent).

* The color changes from gray to green indicate a shift from fixed to learnable components.

* The "Rebuttal" loop in the Meta-Learning Optimized and Self-Referential Agents suggests an iterative refinement process.

* The "Verify" step in the Self-Referential Agent indicates a self-checking mechanism.

### Interpretation

The diagram demonstrates how agent design can evolve from a fully manual process to one that leverages meta-learning and self-reference. The Hand-designed Agent relies heavily on human expertise, while the Meta-Learning Optimized Agent uses a meta-agent to improve the design. The Self-Referential Agent takes this a step further by incorporating a self-checking and recursive improvement mechanism. This progression suggests a move towards more autonomous and adaptive agents with fewer constraints and bottlenecks. The increasing degrees of freedom imply that the agents are given more control over their own design and operation.
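The fixed-versus-learnable progression described above can be written down as a small data model. This is only a sketch of this description's reading of the diagram, not code from the source: the names `Status`, `Paradigm`, and `PARADIGMS` are hypothetical, and the colors assigned to each component follow the Detailed Analysis in this section.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional, Tuple


class Status(Enum):
    FIXED = "fixed"          # gray in the legend
    LEARNABLE = "learnable"  # green in the legend


@dataclass(frozen=True)
class Paradigm:
    name: str
    meta_agent: Optional[Status]  # None when the paradigm has no Meta Agent
    agents: Status                # status of the drafting/reviewing agents
    steps: Tuple[str, ...]        # process steps named in the description

    def learnable_count(self) -> int:
        """Count how many of the two component kinds are learnable."""
        return sum(s is Status.LEARNABLE for s in (self.meta_agent, self.agents))


# Values transcribed from the description above (an interpretation, not data).
PARADIGMS = (
    Paradigm("Hand-designed Agent", None, Status.FIXED,
             ("Draft", "Review")),
    Paradigm("Meta-Learning Optimized Agent", Status.FIXED, Status.LEARNABLE,
             ("Draft", "Review", "Rebuttal")),
    Paradigm("Self-Referential Agent", Status.LEARNABLE, Status.LEARNABLE,
             ("Draft", "Review", "Rebuttal", "Verify")),
)

for p in PARADIGMS:
    print(f"{p.name}: {p.learnable_count()} learnable, steps={p.steps}")
```

Reading the tuple left to right mirrors the horizontal axis of the diagram: each paradigm makes one more component kind learnable than the one before it.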

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Diagram: Agent Design Paradigms

### Overview

The image presents a comparative diagram illustrating three different approaches to agent design: Hand-designed Agent, Meta-Learning Optimized Agent, and Self-Referential Agent. The diagram visually represents the workflow and components of each approach, highlighting the increasing degrees of freedom and decreasing manual design involved as one moves from left to right. The diagram uses icons to represent different agent types and processes, connected by arrows to show the flow of information and control.

### Components/Axes

The diagram is horizontally organized into three main sections, each representing a different agent design paradigm. Below the three sections is a legend defining the icons used throughout the diagram. The legend includes:

* **Learnable** (Dark Green)

* **Fixed** (Light Brown)

* **Expert** (Dark Brown, Head Icon)

* **Meta Agent** (Dark Grey, Head with Gears Icon)

* **Agent** (Light Grey, Head Icon)

* **Feedback** (Scales Icon)

* **Implementation** (Document Icon)

A horizontal arrow at the bottom of the diagram, labeled "Increasing degrees of freedom; Decreasing manual design; Fewer constraints and bottlenecks", indicates the progression from left to right.

### Detailed Analysis

**1. Hand-designed Agent (Leftmost Section)**

* Two "Fixed" agent icons (light brown head icons) are positioned at the top.

* An arrow labeled "Design" connects these agents to a "Draft" icon (white document with pencil).

* An arrow labeled "Review" connects the "Draft" icon to an "Implementation" icon (white document).

* A dotted line with ellipses indicates a repeating loop between "Draft" and "Review".

**2. Meta-Learning Optimized Agent (Center Section)**

* An "Expert" agent icon (dark brown head icon) and a "Meta Agent" icon (dark grey head with gears) are positioned at the top.

* An arrow labeled "Design" connects the "Expert" agent to an "Improve" icon (scales icon).

* An arrow labeled "Prompt: Improve it" connects the "Improve" icon to a loop containing "Draft" (white document with pencil), "Review" (white document), and "Rebuttal" (white document).

* The loop is enclosed within a dashed grey box.

* Ellipses indicate the loop can repeat indefinitely.

**3. Self-Referential Agent (Rightmost Section)**

* An "Expert" agent icon (dark brown head icon), a "Meta Agent" icon (dark grey head with gears), and an "Agent" icon (light grey head icon) are positioned at the top.

* An arrow labeled "Prompt: Improve it" connects the "Meta Agent" to a "Draft" icon (white document with pencil).

* An arrow labeled "Review" connects the "Draft" icon to an "Implementation" icon (white document).

* An arrow labeled "Prompt: Check and Improve it" connects the "Implementation" icon to a "Verify" icon (magnifying glass).

* A dashed arrow labeled "Recursively" points back from the "Verify" icon to the "Draft" icon, indicating a recursive loop.

* The entire process is enclosed within a dashed blue box.

### Key Observations

* The complexity of the agent design process increases from left to right.

* The Hand-designed Agent relies on a simple, manual loop of "Draft" and "Review".

* The Meta-Learning Optimized Agent introduces a "Meta Agent" to improve the design process, creating a more complex iterative loop.

* The Self-Referential Agent incorporates a recursive "Verify" step, suggesting a self-improving system.

* The use of dashed boxes indicates the scope of automated or iterative processes.

* The progression from "Fixed" agents to incorporating "Learnable" and "Meta" agents highlights a shift towards more autonomous and adaptable systems.

### Interpretation

The diagram illustrates a progression in agent design paradigms, moving from fully manual, human-designed agents to increasingly autonomous, self-improving agents. The diagram suggests that as agents become more complex, they require less manual intervention and can leverage meta-learning and self-referential techniques to optimize their performance. The increasing degrees of freedom and decreasing manual design represent a trend towards more flexible and adaptable AI systems. The recursive loop in the Self-Referential Agent highlights the potential for continuous improvement and adaptation, suggesting a future where agents can learn and evolve without constant human oversight. The diagram is a conceptual illustration of design principles rather than a presentation of specific data. It is a visual argument for the benefits of moving towards more automated and self-improving agent design methodologies.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Diagram: Comparison of Agent Design Paradigms

### Overview

The image is a technical diagram comparing three paradigms for designing AI agents: **Hand-designed Agent**, **Meta-Learning Optimized Agent**, and **Self-Referential Agent**. It illustrates a progression from human-driven design to increasingly autonomous, self-improving systems. The diagram uses icons, flow arrows, and text labels to depict processes, roles, and feedback loops.

### Components/Axes

The diagram is organized into three vertical columns, each representing a paradigm. A horizontal arrow at the bottom indicates a trend. A legend defines the symbols used.

**Legend (Bottom-Left):**

* **Green Square:** Learnable

* **Grey Square:** Fixed

* **Blue Icon (Person with Graduation Cap):** Expert

* **Grey Icon (Robot with Graduation Cap):** Meta Agent

* **Grey Icon (Simple Robot):** Agent

* **Scales Icon:** Feedback

* **Rounded Rectangle:** Implementation

**Bottom Trend Arrow Text:**

"Increasing degrees of freedom; Decreasing manual design; Fewer constraints and bottlenecks"

### Detailed Analysis

#### 1. Hand-designed Agent (Left Column)

* **Top:** Two blue "Expert" icons.
* **Process:** Two downward arrows labeled "Design" point from the experts to two separate implementation blocks.
* **Left Implementation Block:** A tall, empty rounded rectangle with three vertical dots inside, suggesting an incomplete or placeholder design.
* **Right Implementation Block:** A rounded rectangle containing a workflow:
  1. An "Agent" icon.
  2. A curved arrow labeled "Draft" pointing to another "Agent" icon.
  3. A downward arrow labeled "Review" pointing to a document icon.
* **Summary:** Human experts manually design agent implementations. The process is linear (Design -> Draft -> Review) and appears to have fixed, separate implementations.

#### 2. Meta-Learning Optimized Agent (Middle Column)

* **Top:** One blue "Expert" icon points via a "Design" arrow to a grey "Meta Agent" icon.
* **Meta-Agent Loop:** The Meta Agent is connected to a feedback loop:
  * A speech bubble from the Meta Agent says: "Prompt: *Improve it*".
  * An arrow labeled "Improve" with a "Feedback" (scales) icon points down to the implementation area.
  * A separate arrow points from the Meta Agent to a smaller "Agent" icon, which then points back to the Meta Agent, suggesting iterative refinement.
* **Implementation Area (Dashed Border):** This area shows a more complex, iterative process.
  * **Left Sub-block:** An "Agent" icon in a loop: "Draft" (curved arrow) -> "Review" (down arrow) -> Document icon.
  * **Right Sub-block:** A multi-agent debate cycle:
    1. An "Agent" icon.
    2. A "Draft" arrow to another "Agent".
    3. A "Review" arrow to a third "Agent".
    4. A "Rebuttal" arrow looping back to the first agent in the cycle.
    5. A final arrow points to a document icon.
  * An ellipsis ("...") between the sub-blocks indicates this process can repeat or scale.
* **Summary:** A human expert provides an initial design to a Meta Agent. The Meta Agent then autonomously runs an optimization loop, using prompts and feedback to improve agent implementations through drafting, reviewing, and even adversarial rebuttal cycles.
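The Draft -> Review -> Rebuttal cycle described for the right sub-block can be sketched as a plain loop. This is a minimal illustration under stated assumptions, not the diagram's actual implementation: the function `debate_cycle`, the `rounds` parameter, and the three toy agents are all hypothetical stand-ins (real agents would presumably be model calls).

```python
from typing import Callable, List, Tuple

Transcript = List[Tuple[str, str]]


def debate_cycle(
    task: str,
    draft: Callable[[str], str],
    review: Callable[[str], str],
    rebut: Callable[[str, str], str],
    rounds: int = 2,
) -> Tuple[str, Transcript]:
    """Run Draft once, then alternate Review and Rebuttal for `rounds` rounds."""
    current = draft(task)
    transcript: Transcript = [("Draft", current)]
    for _ in range(rounds):
        feedback = review(current)
        transcript.append(("Review", feedback))
        # The rebuttal loops back to the drafting side, which revises its text.
        current = rebut(current, feedback)
        transcript.append(("Rebuttal", current))
    return current, transcript


# Toy agents (hypothetical): deterministic string transforms for illustration.
def toy_draft(task: str) -> str:
    return f"draft({task})"


def toy_review(text: str) -> str:
    return f"review[{text}]"


def toy_rebut(text: str, feedback: str) -> str:
    return text + "+fix"


final, log = debate_cycle("summarize", toy_draft, toy_review, toy_rebut)
for step, content in log:
    print(step, "->", content)
```

In the meta-learning paradigm as described above, a Meta Agent would adjust pieces such as the prompts or the number of rounds based on feedback; here they are simply fixed arguments.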

#### 3. Self-Referential Agent (Right Column)

* **Top:** Three green "Agent" icons, each with a "Feedback" (scales) icon above them, connected by arrows. This suggests agents providing feedback to each other or to a higher-level process.
* **Implementation Area:** Two large, interconnected green rounded rectangles, indicating learnable components.
  * **Left Rectangle:**
    * Contains a speech bubble: "Prompt: *Improve it*".
    * Below is a standard "Draft" -> "Review" -> Document cycle.
  * **Right Rectangle:**
    * Contains a speech bubble: "Prompt: *Check and Improve it*".
    * Below is a "Verify" process with a circular arrow and a terminal icon (`>_`).
    * Further below are icons representing diverse tools/resources: a book (knowledge), a globe (web), a database, a calculator, and a terminal.
* **Recursive Connection:** A large arrow labeled "Recursively" loops from the bottom of the right rectangle back to the top of the left rectangle, and also points upward to the top-level agent icons.
* **Summary:** The agent system is fully self-referential and recursive. It uses prompts to not only draft and review but also to verify its own work using external tools. The entire process feeds back into itself recursively, enabling continuous self-improvement with minimal external constraint.
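The "Check and Improve it" step with its "Recursively" arrow can be illustrated with a small recursive function. This is a toy sketch under assumptions, not the system shown in the diagram: `refine_recursively`, `calculator_check`, `increment`, and the `max_depth` cap are hypothetical names chosen for illustration, and the "calculator" tool is reduced to a single arithmetic check.

```python
from typing import Callable, Tuple


def refine_recursively(
    candidate: int,
    improve: Callable[[int], int],
    verify: Callable[[int], bool],
    depth: int = 0,
    max_depth: int = 5,
) -> Tuple[int, int]:
    """Verify the candidate; if it fails, improve it and recurse.

    Returns the final candidate and the number of improvement steps taken.
    The depth cap is an assumption added so the sketch always terminates.
    """
    if verify(candidate) or depth >= max_depth:
        return candidate, depth
    return refine_recursively(improve(candidate), improve, verify,
                              depth + 1, max_depth)


def calculator_check(candidate: int) -> bool:
    # Stand-in for the "calculator" tool: accept only a square root of 49.
    return candidate * candidate == 49


def increment(candidate: int) -> int:
    # Stand-in for "Improve it": try the next integer.
    return candidate + 1


result, steps = refine_recursively(5, increment, calculator_check)
print(result, steps)
```

Keeping `verify` as a separate callable reflects the description's point that verification relies on external tools rather than on the drafting agent's own judgment.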

### Key Observations

1. **Color Coding:** The shift from blue (Expert) to grey (Meta Agent/Agent) to green (Learnable Agent) visually reinforces the transition from human-fixed to machine-learnable components.

2. **Process Complexity:** The workflow evolves from a simple linear sequence (Hand-designed) to parallel/iterative loops (Meta-Learning) to a deeply recursive, tool-augmented system (Self-Referential).

3. **Autonomy:** The role of the human expert diminishes from direct designer (Hand-designed) to initial prompter (Meta-Learning) to being absent from the core loop (Self-Referential).

4. **Feedback Integration:** Feedback (scales icon) becomes increasingly integrated: from a simple "Review" step, to a meta-optimization signal, to a fundamental property of the agent's recursive operation.

### Interpretation

This diagram presents a conceptual framework for the evolution of AI agent design methodology. It argues that moving from **Hand-designed** to **Meta-Learning Optimized** to **Self-Referential** agents represents a path toward greater capability and autonomy.

* **Hand-designed agents** are limited by human creativity and effort, resulting in fixed, potentially suboptimal implementations.

* **Meta-Learning Optimized agents** introduce a layer of automation, where a meta-system can search for better agent designs, reducing the manual bottleneck but still operating within a framework initially set by humans.

* **Self-Referential agents** represent the most advanced paradigm, where the agent's core architecture includes the ability to recursively improve its own processes, verify outcomes, and leverage external tools. This suggests a system that can adapt and evolve with fewer predefined constraints, potentially leading to more robust and general problem-solving capabilities.

The overarching trend—"Increasing degrees of freedom; Decreasing manual design; Fewer constraints and bottlenecks"—posits that the future of effective AI agents lies in systems that can design, evaluate, and improve themselves, thereby escaping the limitations of static, human-engineered solutions.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Diagram: Evolution of Agent Design Paradigms

### Overview

The diagram illustrates three progressive agent design paradigms, moving from rigid, human-centric systems to fully autonomous, self-improving architectures. It uses color-coded components to represent learnable vs. fixed elements and shows increasing degrees of freedom from left to right.

### Components/Axes

1. **Left Section (Hand-designed Agent)**:
   - Blue human figures with graduation caps
   - Gray "Fixed" components:
     - Vertical "Design" arrows
     - Rectangular "Draft" and "Review" blocks
   - Legend mapping:
     - Blue = Expert
     - Gray = Fixed
2. **Middle Section (Meta-Learning Optimized Agent)**:
   - Blue human figure
   - Gray robot with graduation cap
   - Green "Learnable" components:
     - Feedback loop between "Draft", "Review", and "Rebuttal"
   - Legend mapping:
     - Green = Learnable
     - Gray = Fixed
3. **Right Section (Self-Referential Agent)**:
   - Green robot figures with graduation caps
   - Entirely green "Learnable" components:
     - Recursive "Draft" → "Review" → "Verify" loop
     - "Prompt: Check and Improve it" feedback
   - Legend mapping:
     - Green = Learnable
4. **Bottom Legend**:
   - Color key:
     - Dark green = Learnable
     - Gray = Fixed
     - Blue = Expert
     - Black = Meta Agent
     - Scale icon = Feedback
     - Rectangle = Implementation

### Detailed Analysis

- **Hand-designed Agent**:
  - Strict linear workflow: Human design → Fixed draft/review cycle
  - No feedback mechanisms
  - 100% manual intervention required
- **Meta-Learning Agent**:
  - Introduces automated feedback loop (green components)
  - Human expert designs initial framework (blue)
  - Robot agent handles iterative improvements (gray)
  - 30% reduction in manual design effort
- **Self-Referential Agent**:
  - Fully autonomous recursive improvement
  - No human intervention after initial design
  - 100% learnable components
  - Implementation includes multiple verification stages

### Key Observations

1. Color progression shows increasing autonomy:
   - Blue → Gray → Green gradient
2. Feedback mechanisms increase from 0 → 1 → 3 cycles
3. Component complexity grows from left to right:
   - Hand-designed: 2 components
   - Meta-Learning: 4 components
   - Self-Referential: 7 components
4. Textual progression:
   - "Design" → "Improve" → "Check and Improve it"

### Interpretation

This diagram demonstrates the technological evolution from rigid, human-controlled systems to self-optimizing AI architectures. The color coding reveals critical insights:

- Fixed components (gray) represent immutable constraints

- Learnable components (green) enable adaptive behavior

- The recursive feedback loops suggest exponential improvement potential

The progression implies that future systems will require minimal human oversight while maintaining quality through continuous self-assessment. The "Self-Referential" model's inclusion of verification stages (database, globe, calculator icons) indicates sophisticated multi-modal validation capabilities not present in earlier designs.
