## Diagram: Gödel Agent Self-Improvement Cycle

### Overview

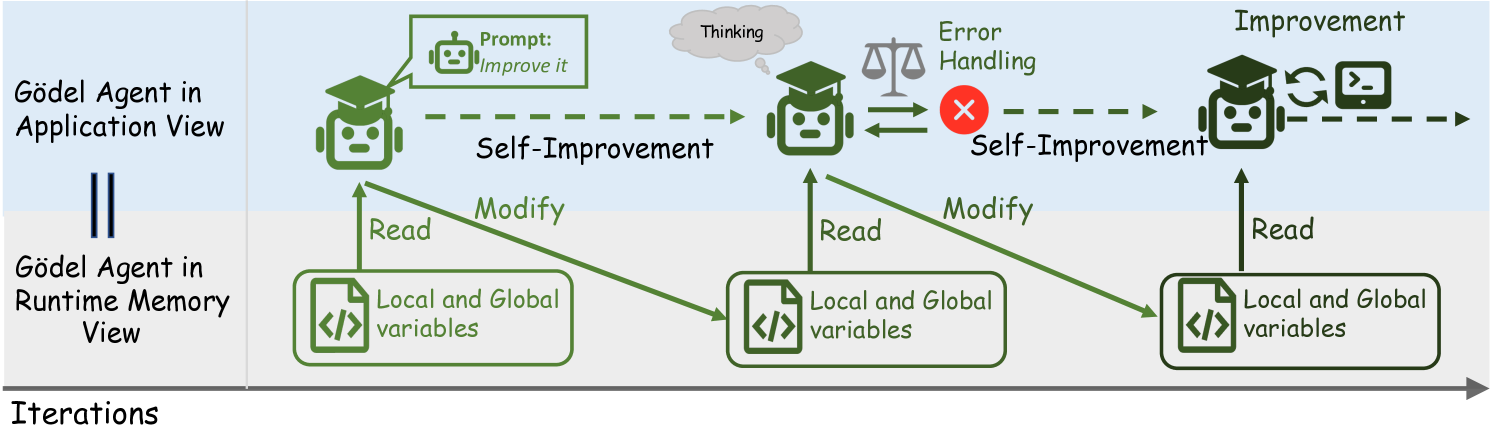

This diagram illustrates the conceptual architecture and iterative process of a self-improving AI system called the "Gödel Agent." It depicts the agent in two parallel views, the Application View and the Runtime Memory View, across multiple iterations. In each iteration the agent receives a prompt, reads and modifies its own code and variables, handles any errors, and arrives at an improved version of itself.

### Components/Axes

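The diagram is structured into three horizontal bands (the Application View, the Runtime Memory View, and an iterations timeline), with a label column on the left. It progresses left to right across iterations.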

**1. Leftmost Label Column:**

* **Top:** `Gödel Agent in Application View`

* **Bottom:** `Gödel Agent in Runtime Memory View`

* **Separator:** A double vertical line (`||`) separates the two view labels.

**2. Main Process Flow (Top Layer - Application View):**

This layer shows the agent's high-level cognitive process.

* **First Agent (Left):** A green robot icon wearing a graduation cap. A speech bubble points to it containing the text: `Prompt: Improve it`.

* **Second Agent (Center):** A similar green robot icon. A thought bubble labeled `Thinking` is above it. To its right is a balance scale icon labeled `Error Handling`, connected by a double-headed arrow. A red circle with a white "X" is positioned between the scale and the next arrow.

* **Third Agent (Right):** A green robot icon. Above it is the label `Improvement`. To its right are two icons: a circular arrow (refresh/sync symbol) and a terminal/command prompt symbol (`>_`).

* **Flow Arrows:** Dashed green arrows connect the agents sequentially from left to right. The label `Self-Improvement` appears twice: once between the first and second agents, and once between the second and third agents (after the error handling block).

**3. Main Process Flow (Bottom Layer - Runtime Memory View):**

This layer shows the agent's interaction with its own memory/state.

* **Three Identical Boxes:** Each is a rounded rectangle containing a code icon (`</>`) and the text `Local and Global variables`. These boxes are aligned vertically beneath each of the three agent icons in the top layer.

* **Data Flow Arrows:** Solid green arrows connect the Application View agents to the Runtime Memory View boxes.

    * From the **first agent**, an arrow labeled `Read` points down to the first memory box. Another arrow labeled `Modify` points from the first memory box up to the **second agent**.

    * From the **second agent**, an arrow labeled `Read` points down to the second memory box. Another arrow labeled `Modify` points from the second memory box up to the **third agent**.

    * From the **third agent**, an arrow labeled `Read` points down to the third memory box.

**4. Bottom Timeline:**

* A solid gray arrow runs along the very bottom of the diagram, pointing to the right.

* It is labeled `Iterations` at its starting point on the left.
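The `Read` and `Modify` arrows in the Runtime Memory View can be illustrated with a minimal Python sketch. Everything here is an assumption made for illustration: the diagram does not specify an implementation, and `runtime_memory`, `read`, `modify`, and the variable names are hypothetical. A plain dictionary stands in for the agent's local and global variables.

```python
from typing import Any, Dict

# Hypothetical stand-in for the agent's runtime memory. In a real Python
# process this role could be played by globals() and locals().
runtime_memory: Dict[str, Any] = {"temperature": 0.7, "max_retries": 1}


def read(memory: Dict[str, Any]) -> Dict[str, Any]:
    """Return a snapshot of the current variables (the `Read` arrow)."""
    return dict(memory)


def modify(memory: Dict[str, Any], updates: Dict[str, Any]) -> None:
    """Write new values into memory in place (the `Modify` arrow)."""
    memory.update(updates)


snapshot = read(runtime_memory)
# Toy decision rule standing in for the agent's reasoning step.
modify(runtime_memory, {"max_retries": snapshot["max_retries"] + 2})
print(runtime_memory["max_retries"])  # prints 3
```

Because `read` returns a copy, the snapshot keeps the pre-modification values, which mirrors how each stage in the diagram starts from the state left by the previous one.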

### Detailed Analysis

The diagram details a three-stage iterative cycle for a Gödel Agent:

1. **Stage 1 (Initialization/Prompt):**

    * **Application View:** The agent receives an external directive: `Prompt: Improve it`.

    * **Runtime Memory View:** The agent performs a `Read` operation on its `Local and Global variables`.

2. **Stage 2 (Processing & Error Handling):**

    * **Application View:** The agent engages in `Thinking`. It then undergoes an `Error Handling` process, symbolized by the balance scale. The red "X" suggests a failure or error state is detected and addressed at this stage.

    * **Runtime Memory View:** The agent uses information from the first read to `Modify` its variables. It then performs a new `Read` operation on the updated state.

3. **Stage 3 (Improvement & Output):**

    * **Application View:** The process culminates in `Improvement`. The circular arrow icon implies a feedback loop or update mechanism, and the terminal icon suggests the output of improved code or a new capability.

    * **Runtime Memory View:** The agent performs a final `Read` operation on its improved variable state.

The process repeats rather than terminating after one pass: the `Self-Improvement` labels between stages and the `Iterations` timeline indicate that this three-stage sequence recurs, with each pass starting from the state left by the previous one.
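The three stages above can be sketched as a loop in Python. This is an illustrative sketch under stated assumptions, not the method behind the diagram: the rollback-on-exception strategy, the `run_iterations` function, and the two example edits are all hypothetical. The diagram only shows that an `Error Handling` step sits between `Thinking` and `Improvement`.

```python
import copy
from typing import Any, Callable, Dict, List

State = Dict[str, Any]


def run_iterations(state: State, proposals: List[Callable[[State], None]]) -> List[str]:
    """Apply each proposed self-modification, rolling back on error."""
    log: List[str] = []
    for step, propose in enumerate(proposals, start=1):
        backup = copy.deepcopy(state)   # Read: snapshot the current variables
        try:
            propose(state)              # Modify: mutate the variables in place
            log.append(f"iteration {step}: improved")
        except Exception as exc:        # Error Handling: restore the snapshot
            state.clear()
            state.update(backup)
            log.append(f"iteration {step}: rolled back ({type(exc).__name__})")
    return log


def bad_edit(state: State) -> None:
    state["score"] = -1                 # partial change happens first...
    state["score"] += state["missing"]  # ...then this raises KeyError


def good_edit(state: State) -> None:
    state["score"] += 1


state: State = {"score": 0}
log = run_iterations(state, [bad_edit, good_edit])
print(state)  # prints {'score': 1}
```

The failed edit leaves no trace in `state` because the snapshot is restored before the next iteration begins, so the second edit builds on the original value.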

### Key Observations

* **Dual-Perspective Architecture:** The diagram explicitly separates the agent's high-level reasoning (`Application View`) from its low-level state manipulation (`Runtime Memory View`), showing they are two sides of the same process.

* **Error Handling as a Core Component:** Error handling is not an afterthought but a dedicated, central stage in the improvement cycle, positioned between thinking and the final improvement.

* **Self-Referential Modification:** The agent's primary mechanism for improvement is reading and modifying its own `Local and Global variables`, embodying the concept of a self-referential, Gödelian system.

* **Asymmetric Data Flow:** The `Modify` action flows from the memory view *up* to the next agent stage, while the `Read` action flows *down*. This suggests the agent's next cognitive stage is built upon the modified state from the previous stage.
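The self-referential modification noted above extends from data to code: in a dynamic language, a function is just another variable in runtime memory. The sketch below assumes a hypothetical `namespace` dictionary and a hypothetical `solve` function, and hard-codes the replacement source that an agent would otherwise have to generate. It uses only Python's built-in `exec`.

```python
from typing import Any, Dict

# Hypothetical agent namespace holding the agent's own code as a variable.
namespace: Dict[str, Any] = {}
exec("def solve(x):\n    return x + 1\n", namespace)

before = namespace["solve"](2)

# A replacement definition; hard-coded here purely for illustration.
new_source = "def solve(x):\n    return x * 10\n"
exec(new_source, namespace)  # rebinding the name changes the agent's behavior

after = namespace["solve"](2)
print(before, after)  # prints: 3 20
```

Executing untrusted source with `exec` is unsafe in general, which is one reason a dedicated error-handling or validation step matters in a design like this.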

### Interpretation

This diagram presents a conceptual model of an AI agent capable of autonomous, recursive self-improvement. The name "Gödel Agent" likely alludes to Kurt Gödel's work on self-reference in formal systems, including the incompleteness theorems, hinting at a system designed to reason about and modify its own logic.

The process demonstrates a closed-loop system where:

1. An external goal (`Improve it`) initiates the cycle.

2. The agent introspects (`Read`), reasons (`Thinking`), and acts (`Modify`) upon its own code and knowledge base (`Local and Global variables`).

3. It incorporates a safety or validation mechanism (`Error Handling`) to manage failures during self-modification.

4. The output is an improved version of itself, ready for the next iteration.

The separation into two views matters. It implies that understanding or engineering such an agent requires treating its "mind" (application logic) and its "memory" (runtime state) as interconnected entities that change together. The model suggests that self-improvement here is not only about learning from new data but about the agent being able to rewrite the rules and variables that define its behavior. The `Iterations` timeline indicates that this is a repeated, open-ended process rather than a one-time event.