## Diagram: Chain-of-Thought Prompting vs. Self-Consistency

### Overview

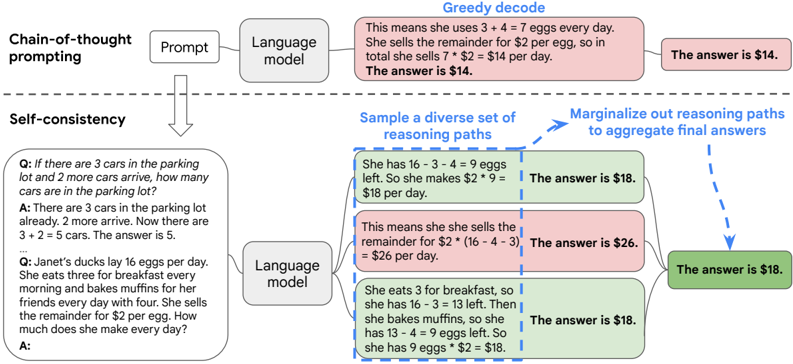

The image illustrates two approaches to problem-solving with language models: Chain-of-Thought prompting with greedy decoding, and Self-Consistency. Using a math word problem, it compares the reasoning steps each method generates and the final answers they reach.

### Components/Axes

* **Titles:** "Chain-of-thought prompting" (top-left), "Self-consistency" (bottom-left), "Greedy decode" (top-center), "Sample a diverse set of reasoning paths" (center), "Marginalize out reasoning paths to aggregate final answers" (top-right).

* **Nodes:**

  * "Prompt" (top-left, gray rounded rectangle)

  * "Language model" (top-center, gray rounded rectangle)

  * "Language model" (bottom-center, gray rounded rectangle)

  * Reasoning steps and answers (various colored rounded rectangles)

* **Arrows:** Solid arrows indicate the flow of information in Chain-of-Thought prompting. Dashed arrows indicate the flow of information in Self-Consistency.

* **Color Coding:** Green rounded rectangles indicate correct answers. Red rounded rectangles indicate incorrect answers.

### Detailed Analysis

**1. Chain-of-Thought Prompting (Top Section):**

* **Prompt:** An unspecified prompt is fed into a language model.

* **Language Model:** The language model processes the prompt and generates a step-by-step reasoning process.

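* **Greedy Decode:** The model decodes a single reasoning path, choosing the most probable next token at each step. The reasoning steps are:

  * "This means she uses 3 + 4 = 7 eggs every day."

  * "She sells the remainder for $2 per egg, so in total she sells 7 * $2 = $14 per day."

  * **The answer is $14.** (Red rounded rectangle, indicating an incorrect answer: the path multiplies the 7 eggs she uses by $2 instead of the 9 eggs she sells.)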
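To make the "Greedy decode" step concrete, the toy sketch below contrasts greedy selection with temperature sampling. Real decoders make this choice token by token; here each reasoning opening is collapsed into a single candidate, and the candidate scores (`logits`) are invented for illustration, not taken from the figure or any real model. The helper names `greedy_pick` and `sample_pick` are hypothetical.

```python
import math
import random
from typing import List


def softmax(logits: List[float], temperature: float = 1.0) -> List[float]:
    """Turn scores into probabilities; temperature < 1 sharpens, > 1 flattens."""
    scaled = [x / temperature for x in logits]
    top = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - top) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def greedy_pick(candidates: List[str], logits: List[float]) -> str:
    """Greedy decoding: always take the highest-scoring candidate."""
    best = max(range(len(candidates)), key=lambda i: logits[i])
    return candidates[best]


def sample_pick(candidates: List[str], logits: List[float],
                temperature: float, rng: random.Random) -> str:
    """Sampling: draw a candidate in proportion to its temperature-scaled probability."""
    probs = softmax(logits, temperature)
    return rng.choices(candidates, weights=probs, k=1)[0]


# Hypothetical candidates and scores (NOT from the figure or a real model).
candidates = ["This means she uses 3 + 4", "She has 16 - 3 - 4", "She eats 3 for breakfast"]
logits = [2.0, 1.6, 1.4]

rng = random.Random(0)
print([greedy_pick(candidates, logits) for _ in range(3)])  # the same candidate three times
print([sample_pick(candidates, logits, 0.7, rng) for _ in range(5)])  # varies across draws
```

Greedy decoding is deterministic, so if its top-scoring opening leads somewhere wrong, every run repeats the mistake; sampling at a nonzero temperature is what produces the diverse paths used by Self-Consistency.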

**2. Self-Consistency (Bottom Section):**

* **Prompt:** The prompt contains one worked exemplar (a question followed by its step-by-step answer), then the target question with its answer left open:

  * "Q: If there are 3 cars in the parking lot and 2 more cars arrive, how many cars are in the parking lot?"

  * "A: There are 3 cars in the parking lot already. 2 more arrive. Now there are 3 + 2 = 5 cars. The answer is 5."

  * "Q: Janet's ducks lay 16 eggs per day. She eats three for breakfast every morning and bakes muffins for her friends every day with four. She sells the remainder for $2 per egg. How much does she make every day?"

  * "A:" (left for the language model to complete)

* **Language Model:** The language model processes the prompt.

* **Sample a diverse set of reasoning paths:** The language model generates multiple reasoning paths. Three paths are shown:

  * Path 1 (green rounded rectangle):

    * "She has 16 - 3 - 4 = 9 eggs left. So she makes $2 * 9 = $18 per day."

    * **The answer is $18.**

  * Path 2 (red rounded rectangle):

    * "This means she sells the remainder for $2 * (16 - 4 - 3) = $26 per day."

    * **The answer is $26.** (The expression is set up correctly, but 2 * 9 = 18, not 26.)

  * Path 3 (green rounded rectangle):

    * "She eats 3 for breakfast, so she has 16 - 3 = 13 left. Then she bakes muffins, so she has 13 - 4 = 9 eggs left. So she has 9 eggs * $2 = $18."

    * **The answer is $18.**

* **Marginalize out reasoning paths to aggregate final answers:** The final answers of the sampled paths are aggregated and the most consistent one is kept: $18 is reached by two of the three paths, $26 by only one.

  * **The answer is $18.** (Green rounded rectangle, indicating a correct answer)
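One way to read "marginalize out reasoning paths" is to sum, for each distinct final answer, the weight of every sampled path that reached it, then keep the answer with the largest total. With every path weighted 1 this is a plain majority vote, which matches the diagram's 2-to-1 outcome. The sketch below assumes the final answers have already been extracted; the function names and the optional `weights` parameter (for example, a per-path model probability) are hypothetical.

```python
from collections import defaultdict
from typing import Dict, List, Optional, Sequence


def marginalize(answers: Sequence[str],
                weights: Optional[Sequence[float]] = None) -> Dict[str, float]:
    """Sum the weight of every reasoning path that reached each final answer.

    With no weights, every path counts 1, so the totals are plain vote counts.
    """
    if weights is None:
        weights = [1.0] * len(answers)
    totals: Dict[str, float] = defaultdict(float)
    for answer, w in zip(answers, weights):
        totals[answer] += w
    return dict(totals)


def pick_answer(totals: Dict[str, float]) -> str:
    """Choose the answer with the largest total (max keeps the first one on ties)."""
    return max(totals, key=totals.get)


# Final answers of the three sampled paths in the figure.
answers: List[str] = ["$18", "$26", "$18"]
totals = marginalize(answers)
print(totals)               # {'$18': 2.0, '$26': 1.0}
print(pick_answer(totals))  # $18
```

Because the reasoning text is discarded and only final answers are compared, two paths that reach $18 by different routes (Paths 1 and 3) still reinforce each other.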

### Key Observations

* Chain-of-Thought prompting with greedy decoding leads, in this example, to an incorrect answer ($14).

* Self-Consistency samples multiple reasoning paths and keeps the most frequent final answer, arriving at the correct answer ($18) even though one sampled path (Path 2) is wrong.

* The color-coding clearly distinguishes between correct (green) and incorrect (red) answers.
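The arithmetic behind the green and red labels can be checked directly from the quantities in the word problem. The variable names below are illustrative only.

```python
# Quantities from the word problem.
eggs_per_day = 16
eaten_for_breakfast = 3
used_for_muffins = 4
price_per_egg = 2

eggs_sold = eggs_per_day - eaten_for_breakfast - used_for_muffins  # 9
correct = eggs_sold * price_per_egg                                # 18

# Greedy path: prices the 7 eggs she uses instead of the 9 she sells.
greedy_answer = (eaten_for_breakfast + used_for_muffins) * price_per_egg  # 14

# Sampled Path 2: writes the right expression but reports the wrong value.
path2_expression = price_per_egg * (16 - 4 - 3)  # 18
path2_reported = 26

print(eggs_sold, correct, greedy_answer, path2_expression, path2_reported)  # 9 18 14 18 26
```

The two incorrect outputs fail differently: the greedy path sets up the wrong quantity, while Path 2 sets up the right quantity and then miscomputes it.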

### Interpretation

The diagram demonstrates the advantage of Self-Consistency over greedy Chain-of-Thought decoding on a multi-step arithmetic word problem. Both methods use a chain-of-thought prompt; Self-Consistency replaces the single greedy reasoning path with several sampled paths and keeps the final answer that most of them agree on. This reduces the risk of relying on a single flawed line of reasoning, as in the greedy example, and lets the two correct sampled paths outvote the incorrect one (Path 2). More generally, the example suggests that sampling diverse reasoning paths and aggregating their answers can make language model outputs more accurate and reliable.
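The whole comparison can be sketched end to end under some assumptions: the model is replaced by hypothetical stubs that return (shortened) reasoning text from the figure, final answers are parsed with a regular expression that assumes each path ends with "The answer is ...", and the blank line joining the exemplar and the question in `PROMPT` is an assumed format. `final_answer` and `self_consistency` are illustrative names, not an existing library API.

```python
import itertools
import re
from collections import Counter
from typing import Callable, List, Optional

# One worked exemplar followed by the target question, as in the figure's prompt.
EXEMPLAR = ("Q: If there are 3 cars in the parking lot and 2 more cars arrive, how many cars "
            "are in the parking lot?\nA: There are 3 cars in the parking lot already. 2 more "
            "arrive. Now there are 3 + 2 = 5 cars. The answer is 5.")
QUESTION = ("Q: Janet's ducks lay 16 eggs per day. She eats three for breakfast every morning "
            "and bakes muffins for her friends every day with four. She sells the remainder "
            "for $2 per egg. How much does she make every day?\nA:")
PROMPT = EXEMPLAR + "\n\n" + QUESTION  # the blank-line separator is an assumption


def final_answer(path: str) -> Optional[str]:
    """Return the number after the last 'The answer is' in a path, or None."""
    found = re.findall(r"The answer is \$?(\d+)", path)
    return found[-1] if found else None


def self_consistency(sample: Callable[[str], str], prompt: str, n: int) -> Optional[str]:
    """Draw n reasoning paths and return the final answer most of them agree on."""
    parsed = (final_answer(sample(prompt)) for _ in range(n))
    votes = Counter(a for a in parsed if a is not None)
    return votes.most_common(1)[0][0] if votes else None


# Hypothetical model stubs built from the figure's text; a real system would query an
# LLM with greedy decoding (top of the figure) or temperature sampling (bottom).
GREEDY_PATH = "She uses 3 + 4 = 7 eggs every day. She sells 7 * $2 = $14 per day. The answer is $14."
SAMPLED: List[str] = [
    "She has 16 - 3 - 4 = 9 eggs left. So she makes $2 * 9 = $18 per day. The answer is $18.",
    "She sells the remainder for $2 * (16 - 4 - 3) = $26 per day. The answer is $26.",
    "She has 13 - 4 = 9 eggs left. So she has 9 eggs * $2 = $18. The answer is $18.",
]
cycle = itertools.cycle(SAMPLED)

baseline = final_answer(GREEDY_PATH)                          # trust the single greedy path
voted = self_consistency(lambda prompt: next(cycle), PROMPT, n=3)
print(baseline, voted)  # 14 18
```

The only change between the two methods is how paths are produced and combined; the prompt and the answer parser stay the same, which mirrors the diagram's shared structure.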