
## Diagram: Chain-of-Thought Prompting and Self-Consistency

### Overview

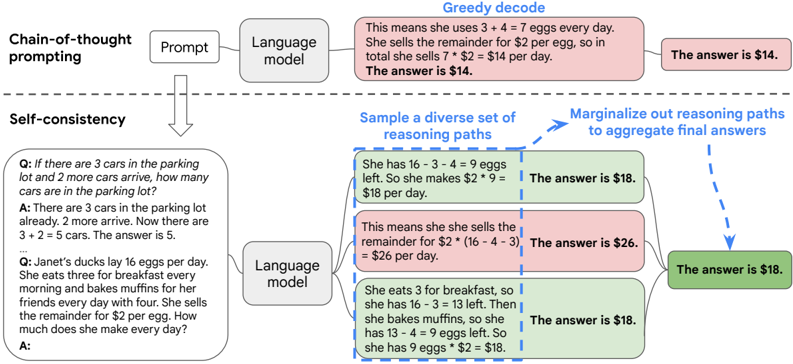

This diagram illustrates the process of Chain-of-Thought prompting and Self-Consistency in the context of Large Language Models (LLMs). It shows how a prompt is fed into a language model, generating reasoning paths, and how these paths are aggregated to arrive at a final answer. The diagram highlights two main approaches: Greedy Decode and Self-Consistency.

### Components/Axes

The diagram is structured into three main sections:

1. **Chain-of-Thought Prompting:** This section shows the initial prompt and the language model.

2. **Self-Consistency:** This section demonstrates sampling diverse reasoning paths and marginalizing them to aggregate final answers.

3. **Reasoning Paths & Answers:** This section displays several reasoning paths generated by the language model, along with their corresponding answers.

Key labels include:

* "Chain-of-thought prompting"

* "Prompt"

* "Language model"

* "Greedy decode"

* "Self-consistency"

* "Sample a diverse set of reasoning paths"

* "Marginalize out reasoning paths to aggregate final answers"

* "Q:" (Question)

* "A:" (Answer)

### Detailed Analysis

**1. Chain-of-Thought Prompting & Greedy Decode:**

* A prompt is sent to a "Language model".

* The language model generates a single reasoning path: "This means she uses 3 + 4 = 7 eggs every day. She sells the remainder for $2 per egg, so in total she sells 7 * $2 = $14 per day. The answer is $14."

* The final answer is stated as "$14".
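
The arithmetic in this path can be checked directly. The sketch below assumes the prompt is the Janet's ducks question quoted under the reasoning paths (16 eggs laid, 3 eaten, 4 baked, $2 per egg). The function and variable names are illustrative and do not come from the diagram.

```python
# Illustrative check of the greedy-decode reasoning path.
# Quantities come from the Janet's ducks question quoted in this description.
EGGS_LAID = 16
EGGS_EATEN = 3
EGGS_BAKED = 4
PRICE_PER_EGG = 2  # dollars


def revenue_from_remainder(laid, eaten, baked, price):
    """Revenue when only the eggs left after eating and baking are sold."""
    return (laid - eaten - baked) * price


# The greedy path multiplies the eggs *used* (3 + 4) by the price.
greedy_path_answer = (EGGS_EATEN + EGGS_BAKED) * PRICE_PER_EGG

# Selling the remainder, as the question asks, gives a different figure.
remainder_answer = revenue_from_remainder(
    EGGS_LAID, EGGS_EATEN, EGGS_BAKED, PRICE_PER_EGG
)

print(f"greedy path: ${greedy_path_answer}, remainder sold: ${remainder_answer}")
# prints: greedy path: $14, remainder sold: $18
```

Under these assumptions the greedy path's $14 comes from pricing the 7 eggs that were used rather than the 9 that remain.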

**2. Self-Consistency:**

* The process begins with a "Language model" receiving a prompt.

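* Instead of keeping only the single greedy output, the decoder is used to "Sample a diverse set of reasoning paths" for the same prompt.

* The final step is to "Marginalize out reasoning paths to aggregate final answers": the individual reasoning paths are set aside and only their final answers are combined.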
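
The difference between greedy decoding and sampling can be sketched for a single decoding step. This is a toy illustration, not the diagram's implementation: the candidate tokens, their scores, and the use of a temperature parameter are all assumptions made for the example.

```python
import math
import random

# Hypothetical scores for three candidate next tokens (made up for illustration).
LOGITS = {"She": 2.0, "This": 1.5, "Janet": 0.5}


def softmax(logits, temperature=1.0):
    """Turn raw scores into probabilities; a higher temperature flattens them."""
    scaled = {tok: score / temperature for tok, score in logits.items()}
    peak = max(scaled.values())  # subtract the max for numerical stability
    exps = {tok: math.exp(s - peak) for tok, s in scaled.items()}
    total = sum(exps.values())
    return {tok: e / total for tok, e in exps.items()}


def greedy_next_token(logits):
    """Greedy decoding: always take the highest-scoring token."""
    return max(logits, key=logits.get)


def sample_next_tokens(logits, n, temperature=1.0, seed=None):
    """Sampling: draw n tokens at random, weighted by their probabilities."""
    rng = random.Random(seed)
    probs = softmax(logits, temperature)
    return rng.choices(list(probs), weights=list(probs.values()), k=n)


print(greedy_next_token(LOGITS))                # always "She"
print(sample_next_tokens(LOGITS, n=5, seed=0))  # a mix of tokens, depends on the seed
```

Repeating the sampled choice at every step is what lets different runs diverge into different reasoning paths, whereas the greedy choice yields the same path every time.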

**3. Reasoning Paths & Answers (Self-Consistency Examples):**

* **Example 1 (Parking Lot):**

    * Q: "If there are 3 cars in the parking lot and 2 more cars arrive, how many cars are in the parking lot?"

    * A: "There are 3 cars in the parking lot already. 2 more arrive. Now there are 3 + 2 = 5 cars. The answer is 5."

    * Answer: 5

* **Example 2 (Eggs & Muffins - Path 1):**

    * Q: "Janet's ducks lay 16 eggs per day. She eats three for breakfast every morning and bakes muffins for her friends every day with four. She sells the remainder for $2 per egg. How much does she make every day?"

    * A: "She has 16 - 3 - 4 = 9 eggs left. So she makes $2 * 9 = $18 per day."

    * Answer: $18

* **Example 3 (Eggs & Muffins - Path 2):**

    * A: "This means she sells the remainder for $2 * (16 - 4 - 3) = $26 per day."

    * Answer: $26 (the arithmetic in this path does not hold: $2 * (16 - 4 - 3) = $2 * 9 = $18)

* **Example 4 (Eggs & Muffins - Path 3):**

    * A: "She eats 3 for breakfast, so she has 16 - 3 = 13 left. Then she bakes muffins, so she has 13 - 4 = 9 eggs left. So she has 9 eggs * $2 = $18."

    * Answer: $18
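
Before the paths can be aggregated, a final answer has to be read out of each one. The sketch below uses a simple heuristic, taking the last dollar amount mentioned in the text. That heuristic and the names `DOLLAR_AMOUNT` and `extract_final_answer` are assumptions for illustration; the diagram does not say how answers are parsed. The three eggs-and-muffins paths are transcribed from this description, with the multiplication in the second path written as an explicit `*`.

```python
import re

# Eggs-and-muffins reasoning paths as quoted in this description.
PATHS = [
    "She has 16 - 3 - 4 = 9 eggs left. So she makes $2 * 9 = $18 per day.",
    "This means she sells the remainder for $2 * (16 - 4 - 3) = $26 per day.",
    "She eats 3 for breakfast, so she has 16 - 3 = 13 left. Then she bakes "
    "muffins, so she has 13 - 4 = 9 eggs left. So she has 9 eggs * $2 = $18.",
]

# A dollar sign followed by an integer or decimal number.
DOLLAR_AMOUNT = re.compile(r"\$(\d+(?:\.\d+)?)")


def extract_final_answer(path):
    """Return the last dollar amount in a reasoning path, or None if absent."""
    matches = DOLLAR_AMOUNT.findall(path)
    return f"${matches[-1]}" if matches else None


answers = [extract_final_answer(p) for p in PATHS]
print(answers)  # ['$18', '$26', '$18']
```

A path that ends with an explicit "The answer is ..." sentence could be matched on that phrase instead; the last-amount rule is used here only because not every quoted path contains it.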

**4. Aggregated Answer:**

* The final aggregated answer, after marginalizing the reasoning paths, is "$18".
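
One simple way to carry out this aggregation is an unweighted majority vote over the final answers. The sketch below assumes that voting scheme. The diagram itself only says the paths are marginalized out, and the helper name `aggregate` is hypothetical.

```python
from collections import Counter


def aggregate(answers):
    """Return the most frequent answer together with the full vote tally."""
    if not answers:
        raise ValueError("at least one answer is required")
    tally = Counter(answers)
    # Ties are resolved in favour of the answer that was seen first.
    winner, _count = tally.most_common(1)[0]
    return winner, tally


# Final answers of the three sampled eggs-and-muffins paths in the diagram.
sampled_answers = ["$18", "$26", "$18"]

final_answer, votes = aggregate(sampled_answers)
print(final_answer, dict(votes))  # $18 {'$18': 2, '$26': 1}
```

Only the final answers are counted, so two paths that reason differently but agree on $18 reinforce each other, while the lone $26 is outvoted.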

### Key Observations

* The Self-Consistency approach generates multiple reasoning paths for the same question.

* The answers from the different reasoning paths vary ($18, $26, $18).

* In the example, the aggregated answer is the one most paths agree on: two of the three sampled paths reach $18. This matches the direct calculation, (16 - 3 - 4) * $2 = $18.

* The Greedy Decode approach produces a single path and a single answer ($14). In this example that answer differs from the aggregated one, because the path multiplies the 7 eggs used, rather than the 9 remaining, by $2.

### Interpretation

The diagram contrasts two ways of decoding a chain-of-thought prompt. Greedy decoding commits to a single reasoning path, so one arithmetic or logical slip carries straight through to the final answer. Self-Consistency samples several paths and aggregates their final answers, which reduces the dependence on any one flawed line of reasoning. In the example, the sampled paths disagree ($18, $26, $18), and aggregation selects $18, the answer reached by two separate paths. The underlying idea is that correct reasoning paths tend to converge on the same answer while flawed paths tend to scatter, so agreement across paths serves as a signal of plausibility. This is particularly relevant for tasks that require multi-step reasoning. The diagram shows a single example and does not by itself quantify the improvement.