## Diagram: Generic vs. Explainable AI Approach

### Overview

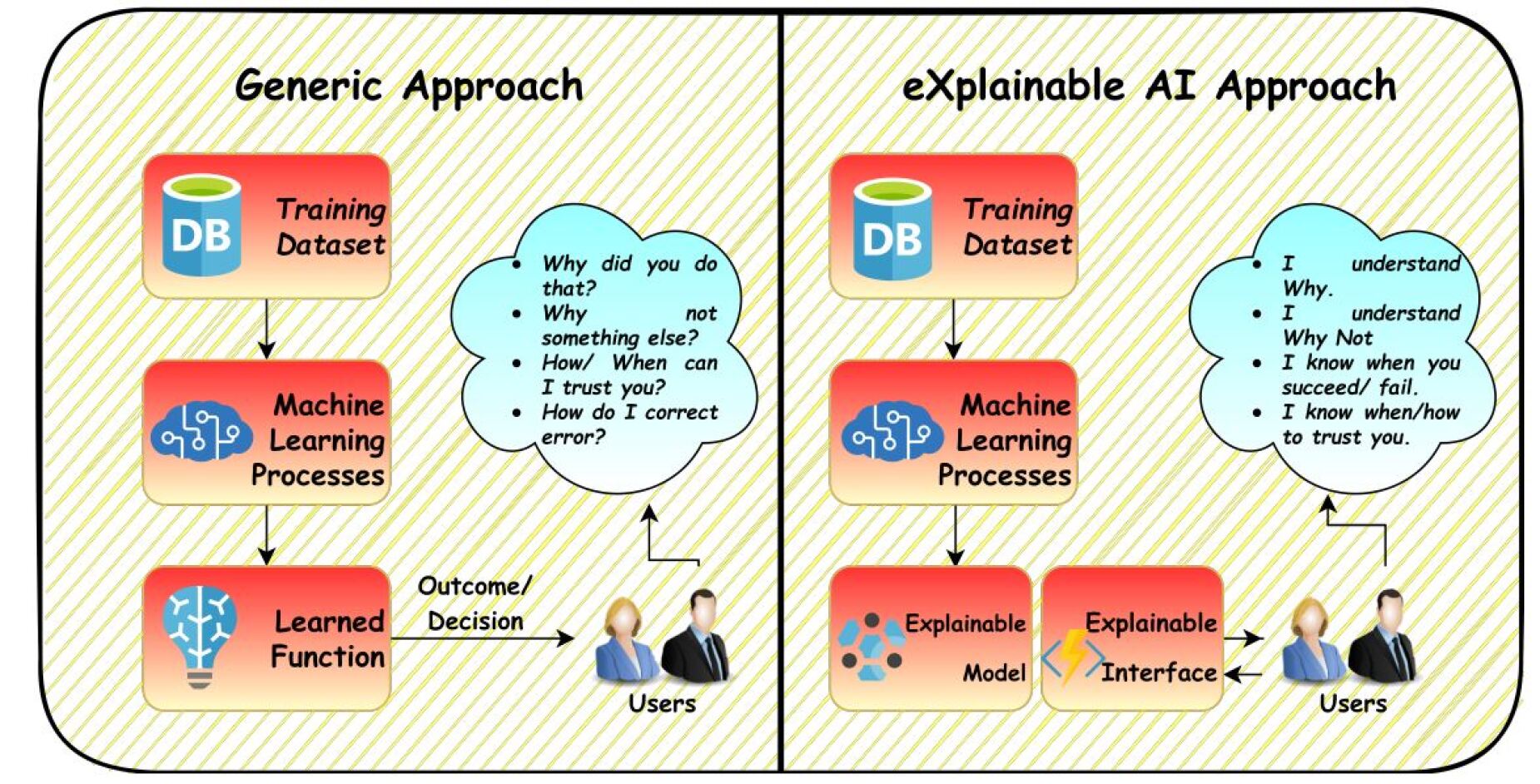

The diagram compares the workflow of a generic machine learning approach with that of an explainable AI (XAI) approach. It highlights how each approach delivers model output to users and how users respond to that output.

### Components/Axes

**Left Side: Generic Approach**

* **Title:** Generic Approach

* **Top Block:** "DB" icon with the label "Training Dataset"

* **Middle Block:** "Machine Learning Processes" with a blue brain icon.

* **Bottom Block:** "Learned Function" with a lightbulb icon.

* **Cloud:** A thought bubble containing questions:

  * "Why did you do that?"

  * "Why not something else?"

  * "How/When can I trust you?"

  * "How do I correct error?"

* **Users:** A graphic of two people labeled "Users".

* **Arrows:** Arrows trace the flow of information from the "Training Dataset" to "Machine Learning Processes" to the "Learned Function". A further arrow, labeled "Outcome/Decision", runs from the "Learned Function" to the "Users", and another connects the "Users" to the thought bubble.

**Right Side: eXplainable AI Approach**

* **Title:** eXplainable AI Approach

* **Top Block:** "DB" icon with the label "Training Dataset"

* **Middle Block:** "Machine Learning Processes" with a blue brain icon.

* **Bottom Block (Left):** "Explainable Model" with a blue icon.

* **Bottom Block (Right):** "Explainable Interface" with a yellow lightning bolt icon.

* **Cloud:** A thought bubble containing statements:

  * "I understand Why."

  * "I understand Why Not."

  * "I know when you succeed/fail."

  * "I know when/how to trust you."

* **Users:** A graphic of two people labeled "Users".

* **Arrows:** Arrows trace the flow of information from the "Training Dataset" to "Machine Learning Processes", and from there to the "Explainable Model" and "Explainable Interface". A further arrow connects the "Explainable Interface" to the "Users", and another connects the "Users" to the thought bubble.

### Detailed Analysis

**Generic Approach Flow:**

1. A "Training Dataset" (represented by a database icon labeled "DB") feeds into "Machine Learning Processes".

2. The "Machine Learning Processes" block leads to a "Learned Function" (represented by a lightbulb icon).

3. The "Learned Function" provides an "Outcome/Decision" to the "Users".

4. The "Users" have questions about the decision, represented by a thought bubble.

**eXplainable AI Approach Flow:**

1. A "Training Dataset" (represented by a database icon labeled "DB") feeds into "Machine Learning Processes".

2. The "Machine Learning Processes" block leads to an "Explainable Model" and an "Explainable Interface".

3. The "Explainable Interface" provides information to the "Users".

4. The "Users" have a better understanding of the model's decisions, represented by a thought bubble.
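The two flows above can be contrasted in a minimal Python sketch. Nothing in it comes from the diagram beyond the block names: the toy dataset, the feature names (`income`, `debt`), the approve/decline labels, and the choice of a perceptron with per-feature contributions are all assumptions made purely for illustration. It is one possible reading of the figure, not the method the diagram prescribes.

```python
from dataclasses import dataclass

# Hypothetical toy "Training Dataset": (features, label) pairs.
# Feature names and values are invented for this illustration.
FEATURES = ["income", "debt"]
TRAINING_DATASET = [
    ((5.0, 1.0), 1),
    ((4.0, 0.5), 1),
    ((1.0, 3.0), 0),
    ((0.5, 2.0), 0),
]


def train(dataset, epochs=20, lr=0.1):
    """Stand-in for "Machine Learning Processes": a simple perceptron."""
    weights = [0.0] * len(dataset[0][0])
    bias = 0.0
    for _ in range(epochs):
        for x, y in dataset:
            score = sum(w * xi for w, xi in zip(weights, x)) + bias
            error = y - (1 if score > 0 else 0)
            weights = [w + lr * error * xi for w, xi in zip(weights, x)]
            bias += lr * error
    return weights, bias


def learned_function(model, x):
    """Generic approach: the user receives only the outcome/decision."""
    weights, bias = model
    return 1 if sum(w * xi for w, xi in zip(weights, x)) + bias > 0 else 0


@dataclass
class Explanation:
    decision: int
    contributions: dict  # feature name -> signed contribution to the score
    bias: float


def explainable_model(model, x):
    """XAI approach: the same decision, plus what drove it."""
    weights, bias = model
    contributions = {n: w * xi for n, w, xi in zip(FEATURES, weights, x)}
    score = sum(contributions.values()) + bias
    return Explanation(1 if score > 0 else 0, contributions, bias)


def explainable_interface(explanation):
    """Render the explanation as text a user could read."""
    verdict = "approve" if explanation.decision == 1 else "decline"
    lines = [f"Decision: {verdict}"]
    # List the feature with the largest absolute contribution first.
    ranked = sorted(explanation.contributions.items(),
                    key=lambda item: abs(item[1]), reverse=True)
    for name, value in ranked:
        if value > 0:
            effect = "pushed toward approve"
        elif value < 0:
            effect = "pushed toward decline"
        else:
            effect = "had no effect"
        lines.append(f"  {name}: {value:+.2f} ({effect})")
    return "\n".join(lines)


model = train(TRAINING_DATASET)
applicant = (1.0, 3.0)

# Generic approach: a bare decision with no stated reason.
print(learned_function(model, applicant))

# XAI approach: the decision together with the factors behind it.
print(explainable_interface(explainable_model(model, applicant)))
```

In this sketch both paths reach the same decision from the same trained weights. The difference is only in what reaches the user: a bare label in the first case, and a label with ranked, signed feature contributions in the second, which is the kind of information that could support the "I understand Why" response in the diagram.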

### Key Observations

* The primary difference between the two approaches is the inclusion of an "Explainable Model" and "Explainable Interface" in the XAI approach.

* The generic approach leads to user questions and potential distrust, while the XAI approach aims to provide understanding and trust.

* Both approaches start with a "Training Dataset" and "Machine Learning Processes".

### Interpretation

The diagram illustrates the shift from traditional "black box" machine learning models to more transparent and understandable XAI models. The generic approach, while functional, gives users only an outcome, leaving them to question the model's decisions and potentially distrust them. The XAI approach addresses this by providing an "Explainable Model" and an "Explainable Interface" that let users understand the reasoning behind the model's outputs, fostering trust and enabling better decision-making. The diagram thus highlights the importance of explainability in AI, particularly in applications where transparency and user understanding are critical.