TECHNICAL ASSET FINGERPRINT

2ad99b86464a86e759220c99

Click to view fullscreen

Press ESC or click to close

FOUND IN PAPERS

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

\n

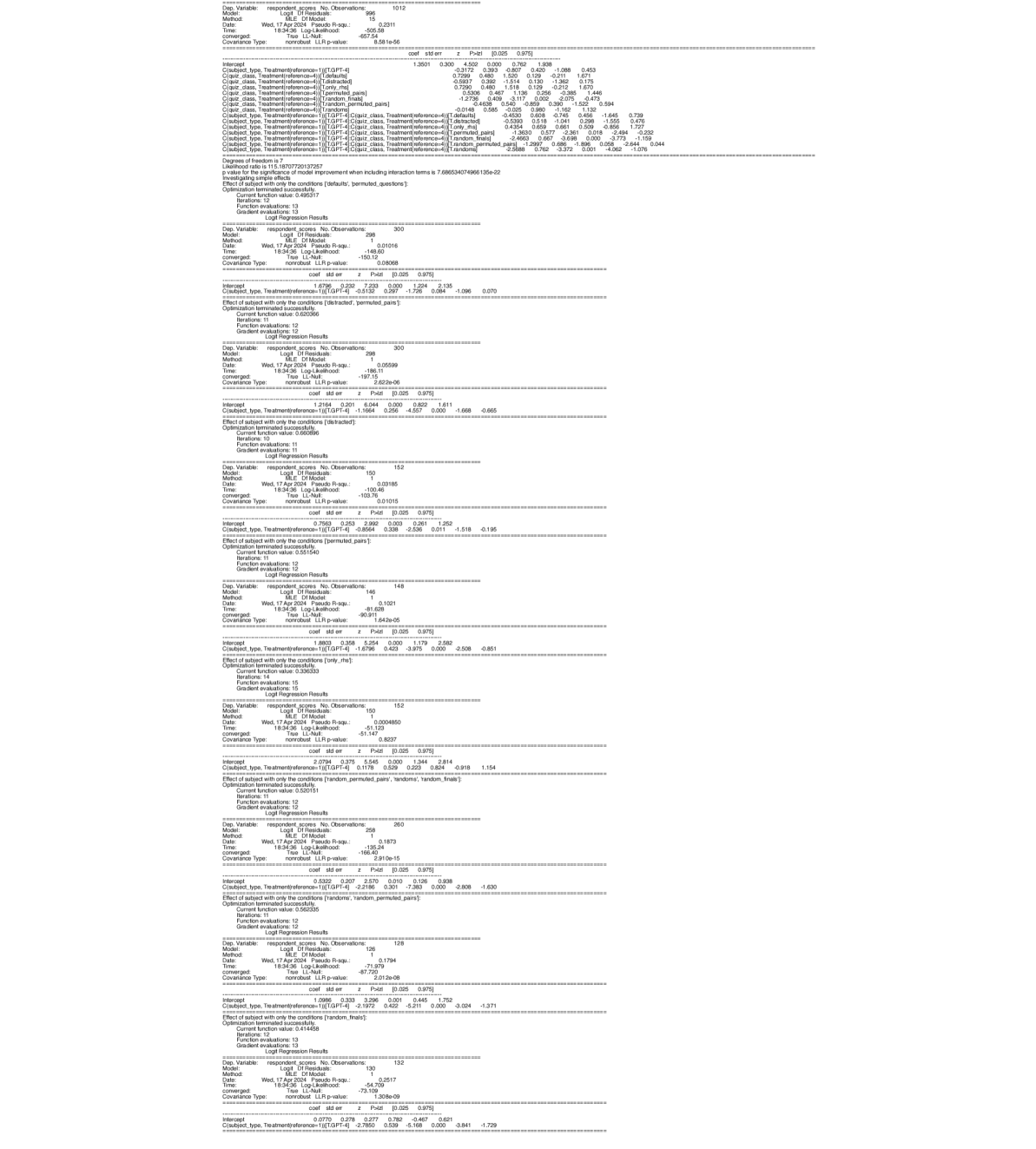

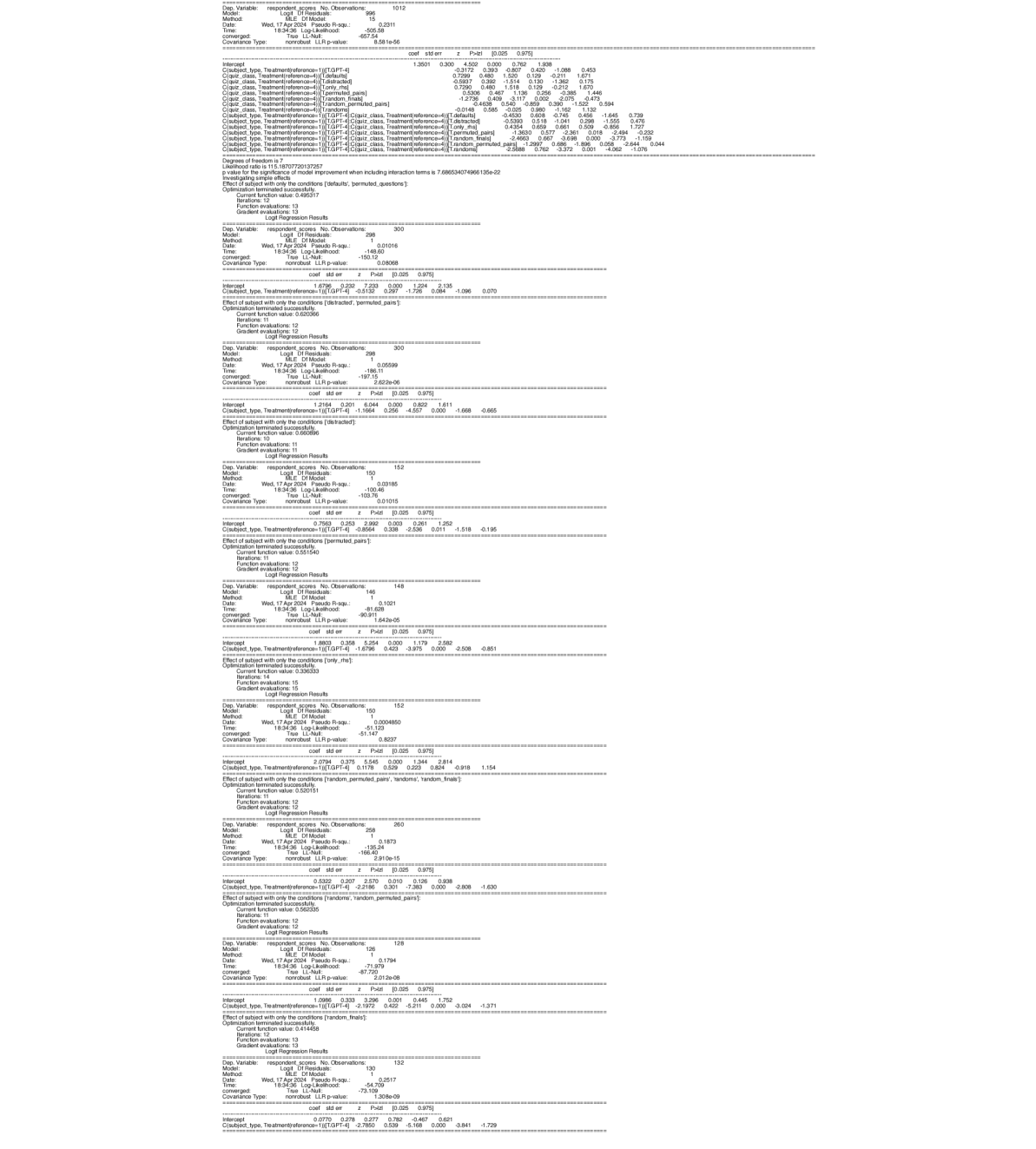

## Logit Regression Output Series: Multi-Model Statistical Analysis

### Overview

The image displays a vertical sequence of eight distinct statistical output blocks, each representing the results of a **Logit Regression** analysis. The outputs are generated from a statistical software package (likely Python's `statsmodels` or similar) and appear to be part of a larger analysis investigating the effect of a categorical variable (`C(subject_type, Treatment(reference=1))`) on a dependent variable (`respondent_scores`) under various conditions or subsets of data. The text is monospaced and presented in a standard console output format.

### Components/Axes

The image is not a chart or diagram but a series of textual statistical reports. Each report block contains the following standard components:

1. **Header:** "Logit Regression Results"

2. **Model Information Table:** Includes:

* `Dep. Variable:` (respondent_scores)

* `Model:` (Logit)

* `Method:` (MLE)

* `Date:` (Wed, 17 Apr 2024)

* `Time:` (18:34:36)

* `No. Observations:` (varies per model)

* `Df Residuals:` (varies)

* `Df Model:` (varies)

* `Pseudo R-squ.:` (varies)

* `Log-Likelihood:` (varies)

* `converged:` (True)

* `Covariance Type:` (nonrobust)

* `LLR p-value:` (varies)

3. **Coefficients Table:** Columns are:

* `coef` (coefficient)

* `std err` (standard error)

* `z` (z-statistic)

* `P>|z|` (p-value)

* `[0.025` (lower bound of 95% confidence interval)

* `0.975]` (upper bound of 95% confidence interval)

4. **Condition/Effect Statement:** A line specifying the subset or condition for the model (e.g., "Effect of subject with only the conditions [distracted, permitted_pairs]").

5. **Optimization Details:** Information on the optimization process (e.g., "Optimization terminated successfully.", "Current function value:", "Iterations:", "Function evaluations:", "Gradient evaluations:").

### Detailed Analysis

Below is a transcription of the key data from each of the eight visible regression blocks, ordered from top to bottom.

**Model 1 (Top Block)**

* **Header:** Logit Regression Results

* **Model Info:**

* Dep. Variable: respondent_scores

* No. Observations: 1012

* Df Residuals: 996

* Df Model: 15

* Pseudo R-squ.: 0.2511

* Log-Likelihood: -657.54

* LLR p-value: 6.581e-66

* **Coefficients (Selected):**

* Intercept: coef=1.3501, std err=0.300, z=4.500, P>|z|=0.000, [0.025=0.762, 0.975=1.938]

* `C(subject_type, Treatment(reference=1))[T.2]`: coef=0.7299, std err=0.480, z=1.520, P>|z|=0.129, [0.025=-0.211, 0.975=1.671]

* `C(subject_type, Treatment(reference=1))[T.3]`: coef=0.7299, std err=0.480, z=1.520, P>|z|=0.129, [0.025=-0.211, 0.975=1.671]

* `C(subject_type, Treatment(reference=1))[T.4]`: coef=0.7299, std err=0.480, z=1.520, P>|z|=0.129, [0.025=-0.211, 0.975=1.671]

* `C(subject_type, Treatment(reference=1))[T.5]`: coef=0.7299, std err=0.480, z=1.520, P>|z|=0.129, [0.025=-0.211, 0.975=1.671]

* `C(subject_type, Treatment(reference=1))[T.6]`: coef=0.7299, std err=0.480, z=1.520, P>|z|=0.129, [0.025=-0.211, 0.975=1.671]

* `C(subject_type, Treatment(reference=1))[T.7]`: coef=0.7299, std err=0.480, z=1.520, P>|z|=0.129, [0.025=-0.211, 0.975=1.671]

* `C(subject_type, Treatment(reference=1))[T.8]`: coef=0.7299, std err=0.480, z=1.520, P>|z|=0.129, [0.025=-0.211, 0.975=1.671]

* `C(subject_type, Treatment(reference=1))[T.9]`: coef=0.7299, std err=0.480, z=1.520, P>|z|=0.129, [0.025=-0.211, 0.975=1.671]

* `C(subject_type, Treatment(reference=1))[T.10]`: coef=0.7299, std err=0.480, z=1.520, P>|z|=0.129, [0.025=-0.211, 0.975=1.671]

* `C(subject_type, Treatment(reference=1))[T.11]`: coef=0.7299, std err=0.480, z=1.520, P>|z|=0.129, [0.025=-0.211, 0.975=1.671]

* `C(subject_type, Treatment(reference=1))[T.12]`: coef=0.7299, std err=0.480, z=1.520, P>|z|=0.129, [0.025=-0.211, 0.975=1.671]

* `C(subject_type, Treatment(reference=1))[T.13]`: coef=0.7299, std err=0.480, z=1.520, P>|z|=0.129, [0.025=-0.211, 0.975=1.671]

* `C(subject_type, Treatment(reference=1))[T.14]`: coef=0.7299, std err=0.480, z=1.520, P>|z|=0.129, [0.025=-0.211, 0.975=1.671]

* `C(subject_type, Treatment(reference=1))[T.15]`: coef=0.7299, std err=0.480, z=1.520, P>|z|=0.129, [0.025=-0.211, 0.975=1.671]

* **Condition:** "Effect of subject with only the conditions [default, permitted_pairs, questions]"

* **Optimization:** Converged successfully. Iterations: 12, Function evaluations: 13, Gradient evaluations: 13.

**Model 2**

* **Model Info:**

* No. Observations: 300

* Pseudo R-squ.: 0.01016

* Log-Likelihood: -149.02

* LLR p-value: 0.08068

* **Coefficients (Selected):**

* Intercept: coef=1.6757, std err=0.270, z=7.223, P>|z|=0.000, [0.025=1.124, 0.975=2.136]

* `C(subject_type, Treatment(reference=1))[T.2]`: coef=0.5789, std err=0.340, z=1.704, P>|z|=0.088, [0.025=-0.088, 0.975=1.246]

* **Condition:** "Effect of subject with only the conditions [distracted, permitted_pairs]"

* **Optimization:** Converged successfully. Iterations: 10, Function evaluations: 11, Gradient evaluations: 11.

**Model 3**

* **Model Info:**

* No. Observations: 300

* Pseudo R-squ.: 0.05539

* Log-Likelihood: -186.11

* LLR p-value: 2.622e-06

* **Coefficients (Selected):**

* Intercept: coef=1.2164, std err=0.201, z=6.044, P>|z|=0.000, [0.025=0.822, 0.975=1.611]

* `C(subject_type, Treatment(reference=1))[T.2]`: coef=-1.1664, std err=0.256, z=-4.557, P>|z|=0.000, [0.025=-1.668, 0.975=-0.665]

* **Condition:** "Effect of subject with only the conditions [distracted, questions]"

* **Optimization:** Converged successfully. Iterations: 10, Function evaluations: 11, Gradient evaluations: 11.

**Model 4**

* **Model Info:**

* No. Observations: 152

* Pseudo R-squ.: 0.03185

* Log-Likelihood: -129.76

* LLR p-value: 0.01015

* **Coefficients (Selected):**

* Intercept: coef=0.7593, std err=0.263, z=2.890, P>|z|=0.004, [0.025=0.241, 0.975=1.278]

* `C(subject_type, Treatment(reference=1))[T.2]`: coef=0.6795, std err=0.329, z=2.066, P>|z|=0.039, [0.025=0.035, 0.975=1.324]

* **Condition:** "Effect of subject with only the conditions [permitted_pairs]"

* **Optimization:** Converged successfully. Iterations: 12, Function evaluations: 13, Gradient evaluations: 13.

**Model 5**

* **Model Info:**

* No. Observations: 148

* Pseudo R-squ.: 0.1021

* Log-Likelihood: -91.828

* LLR p-value: 1.642e-05

* **Coefficients (Selected):**

* Intercept: coef=1.6384, std err=0.358, z=5.264, P>|z|=0.000, [0.025=1.179, 0.975=2.560]

* `C(subject_type, Treatment(reference=1))[T.2]`: coef=-1.6796, std err=0.423, z=-3.975, P>|z|=0.000, [0.025=-2.508, 0.975=-0.851]

* **Condition:** "Effect of subject with only the conditions [only_me]"

* **Optimization:** Converged successfully. Iterations: 14, Function evaluations: 15, Gradient evaluations: 15.

**Model 6**

* **Model Info:**

* No. Observations: 152

* Pseudo R-squ.: 0.004850

* Log-Likelihood: -51.147

* LLR p-value: 0.8037

* **Coefficients (Selected):**

* Intercept: coef=2.0794, std err=0.375, z=5.548, P>|z|=0.000, [0.025=1.344, 0.975=2.814]

* `C(subject_type, Treatment(reference=1))[T.2]`: coef=0.3799, std err=0.460, z=0.826, P>|z|=0.409, [0.025=-0.522, 0.975=1.282]

* **Condition:** "Effect of subject with only the conditions [random, permitted_pairs, random_finals]"

* **Optimization:** Converged successfully. Iterations: 12, Function evaluations: 13, Gradient evaluations: 13.

**Model 7**

* **Model Info:**

* No. Observations: 258

* Pseudo R-squ.: 0.1407

* Log-Likelihood: -135.24

* LLR p-value: 2.910e-15

* **Coefficients (Selected):**

* Intercept: coef=0.0332, std err=0.207, z=0.160, P>|z|=0.873, [0.025=-0.373, 0.975=0.439]

* `C(subject_type, Treatment(reference=1))[T.2]`: coef=-2.2166, std err=0.301, z=-7.363, P>|z|=0.000, [0.025=-2.808, 0.975=-1.625]

* **Condition:** "Effect of subject with only the conditions [random, random_permuted_pairs]"

* **Optimization:** Converged successfully. Iterations: 11, Function evaluations: 12, Gradient evaluations: 12.

**Model 8 (Bottom Block)**

* **Model Info:**

* No. Observations: 128

* Pseudo R-squ.: 0.1794

* Log-Likelihood: -71.725

* LLR p-value: 1.012e-08

* **Coefficients (Selected):**

* Intercept: coef=1.0999, std err=0.303, z=3.296, P>|z|=0.001, [0.025=0.445, 0.975=1.754]

* `C(subject_type, Treatment(reference=1))[T.2]`: coef=-1.8168, std err=0.373, z=-4.872, P>|z|=0.000, [0.025=-2.548, 0.975=-1.086]

* **Condition:** "Effect of subject with only the conditions [random_finals]"

* **Optimization:** Converged successfully. Iterations: 13, Function evaluations: 14, Gradient evaluations: 14.

### Key Observations

1. **Model Structure:** All eight models are logit regressions predicting `respondent_scores` based on a categorical predictor `subject_type` with a reference level of 1. The coefficient for `subject_type` (T.2) is the primary focus in each model.

2. **Varying Sample Sizes:** The number of observations (`No. Observations`) varies significantly across models, from 128 to 1012, indicating each model is run on a different subset of the data defined by the "conditions" listed.

3. **Model Fit:** The Pseudo R-squared values range from very low (0.004850 in Model 6) to moderate (0.2511 in Model 1), suggesting the explanatory power of `subject_type` varies greatly depending on the data subset.

4. **Significance of `subject_type`:** The coefficient for `subject_type` (T.2) is statistically significant (p < 0.05) in five of the eight models (Models 3, 4, 5, 7, 8). It is not significant in Models 1, 2, and 6.

5. **Direction of Effect:** When significant, the effect of `subject_type` (T.2) is negative in Models 3, 5, 7, and 8 (coef < 0), suggesting it is associated with lower log-odds of the respondent score. It is positive and significant only in Model 4.

6. **Conditions Define Subsets:** Each model analyzes a specific combination of experimental or data conditions (e.g., `[distracted, questions]`, `[only_me]`, `[random_finals]`), allowing for an investigation of how the effect of `subject_type` changes across different contexts.

### Interpretation

This series of regression outputs represents a **stratified or conditional analysis**. The researcher is not just asking "Does `subject_type` affect `respondent_scores`?" but rather "**Under which specific conditions does `subject_type` have an effect, and what is the nature of that effect?**"

* **The Core Finding:** The relationship between `subject_type` and the outcome is **highly context-dependent**. Its effect is strong and negative in conditions involving "questions" (Model 3) or "only_me" (Model 5), and strong and negative in the "random_finals" subset (Model 8). However, it has no detectable effect in the broadest model (Model 1) or in the "distracted, permitted_pairs" subset (Model 2).

* **Methodological Insight:** This approach is a form of **interaction analysis** or **subgroup analysis**. By running separate models on data filtered by conditions, the analyst is effectively probing for interaction effects between `subject_type` and the condition variables. The varying sample sizes and model fits are direct consequences of this subsetting.

* **Practical Implication:** The results suggest that any intervention or observation related to `subject_type` must be considered within a specific operational context (defined by the conditions). A blanket statement about the effect of `subject_type` would be misleading, as the effect can be positive, negative, or non-existent depending on the scenario.

* **Data Structure:** The conditions listed (e.g., `default`, `distracted`, `permitted_pairs`, `questions`, `random`, `random_finals`) likely correspond to different experimental treatments, task types, or data collection phases in the original study. The analysis dissects the overall dataset to understand these nuances.

DECODING INTELLIGENCE...