## Line Chart: Training Performance of Reinforcement Learning Algorithms

### Overview

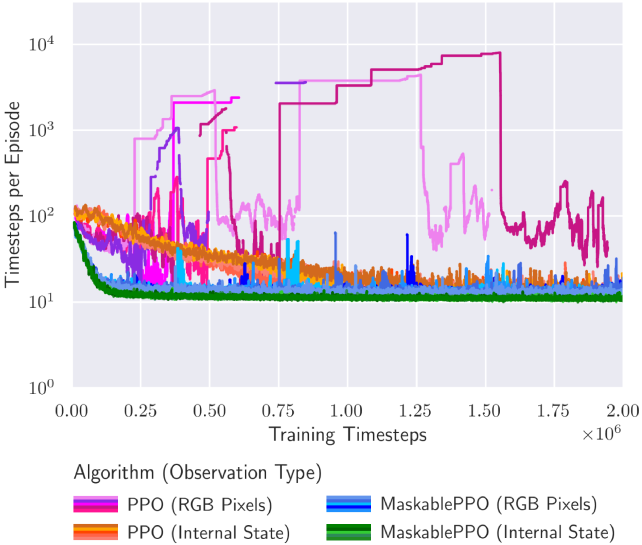

The image is a line chart comparing the training performance of two reinforcement learning algorithms (PPO and MaskablePPO), each trained with two observation types, for four configurations in total. It plots the number of timesteps required to complete an episode against the total number of training timesteps, with the y-axis on a logarithmic scale. Lower y-values indicate better performance (fewer steps to complete the episode), so the chart shows how quickly each configuration learns to solve the task.
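To make the plotted quantity concrete, the following is a minimal sketch of how "timesteps per episode" could be paired with "training timesteps" from a per-step stream of termination flags. The function name `episode_lengths` and the `dones` input are hypothetical, and the flags in the example are illustrative placeholders, not data read from the chart.

```python
from typing import Iterable, List, Tuple


def episode_lengths(dones: Iterable[bool]) -> List[Tuple[int, int]]:
    """Return (training_timestep, episode_length) pairs, one per finished episode.

    `dones` is a hypothetical stream with one flag per environment step,
    True on the step that ends an episode.
    """
    points = []
    steps_in_episode = 0
    for t, done in enumerate(dones, start=1):
        steps_in_episode += 1
        if done:
            # x = total training steps so far, y = length of the episode just finished
            points.append((t, steps_in_episode))
            steps_in_episode = 0
    # An unfinished trailing episode is dropped because its length is unknown.
    return points


# Illustrative flags only, not data from the chart.
flags = [False, False, True, False, True, True]
print(episode_lengths(flags))  # [(3, 3), (5, 2), (6, 1)]
```

Each returned pair corresponds to one point on a curve: the x-value is the cumulative step count and the y-value is the episode length, so a falling curve means episodes are finishing in fewer steps.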

### Components/Axes

* **Chart Type:** Line chart with multiple series.

* **X-Axis:**
    * **Label:** "Training Timesteps"
    * **Scale:** Linear, ranging from 0.00 to 2.00 × 10^6 (0 to 2 million).
    * **Major Ticks:** 0.00, 0.25, 0.50, 0.75, 1.00, 1.25, 1.50, 1.75, 2.00 (all multiplied by 10^6).

* **Y-Axis:**
    * **Label:** "Timesteps per Episode"
    * **Scale:** Logarithmic (base 10), ranging from 10^0 (1) to 10^4 (10,000).
    * **Major Ticks:** 10^0, 10^1, 10^2, 10^3, 10^4.

* **Legend:**
    * **Title:** "Algorithm (Observation Type)"
    * **Position:** Bottom center, below the x-axis label.
    * **Entries (Color to Label Mapping):**
        * **Magenta/Pink Line:** PPO (RGB Pixels)
        * **Orange Line:** PPO (Internal State)
        * **Blue Line:** MaskablePPO (RGB Pixels)
        * **Green Line:** MaskablePPO (Internal State)

### Detailed Analysis

The chart displays four distinct performance curves, each corresponding to an algorithm-observation pair.

1. **PPO (RGB Pixels) - Magenta/Pink Line:**

    * **Trend:** Highly unstable. Starts around 10^2, then spikes and drops sharply throughout training, with several prolonged periods where performance degrades severely (timesteps per episode jump to between 10^3 and 10^4).

    * **Key Points:** Major spikes occur near 0.25M, 0.5M, 0.75M, and 1.5M timesteps. The highest peak approaches 10^4. After 1.5M timesteps, the curve remains volatile but improves slightly, ending near 10^2.

2. **PPO (Internal State) - Orange Line:**

    * **Trend:** Shows a clear, steady learning curve. Starts near 10^2 and consistently decreases over time, indicating improving performance.

    * **Key Points:** Begins around 100. By 0.5M timesteps, it has dropped to approximately 20-30. It continues a gradual decline, converging to a value slightly above 10^1 (around 15-20) by the end of training at 2M timesteps.

3. **MaskablePPO (RGB Pixels) - Blue Line:**

    * **Trend:** Generally stable and efficient after an initial learning phase. Starts around 10^2, drops quickly, and then maintains a low, relatively flat profile with minor fluctuations.

    * **Key Points:** Initial value ~100. Drops below 20 within the first 0.25M timesteps. For the remainder of training, it fluctuates in a narrow band between approximately 10 and 30, ending near 15.

4. **MaskablePPO (Internal State) - Green Line:**

    * **Trend:** The most stable and best-performing configuration. Converges rapidly to what appears to be a near-optimal policy.

    * **Key Points:** Starts near 10^2. Drops very sharply within the first ~0.1M timesteps, falling to near 10^1. It then remains extremely stable, hugging the 10^1 line (approximately 10-12 timesteps per episode) for the remainder of training with minimal variance.

### Key Observations

* **Performance Hierarchy:** MaskablePPO (Internal State) is the clear best performer, followed by MaskablePPO (RGB Pixels) and PPO (Internal State), which are comparable in final performance but differ in learning stability. PPO (RGB Pixels) is by far the worst and most unstable.

* **Impact of Observation Type:** For both PPO and MaskablePPO, using "Internal State" observations leads to significantly more stable and efficient learning compared to using "RGB Pixels." The performance gap is most dramatic for the standard PPO algorithm.

* **Impact of Algorithm:** MaskablePPO variants consistently outperform their standard PPO counterparts using the same observation type, showing faster convergence and greater stability.

* **Stability:** The green line (MaskablePPO, Internal State) shows almost no variance after initial learning, indicating highly reliable policy execution. In contrast, the magenta line (PPO, RGB Pixels) is characterized by extreme volatility.
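The stability comparison above is read visually from the curves. One way it could be quantified, sketched here under assumptions, is the standard deviation of log10(timesteps per episode) over a window of training. Working in log space matches the chart's logarithmic y-axis, so a swing from 100 to 1,000 counts the same as a swing from 10 to 100. The function name `log_spread` is hypothetical and the sample values are synthetic placeholders, not measurements taken from the chart.

```python
import math
import statistics
from typing import Sequence


def log_spread(episode_lengths: Sequence[float]) -> float:
    """Population standard deviation of log10(episode length).

    A value of 0.0 means every episode took the same number of steps;
    a value of 1.0 means lengths typically differ by a factor of ten.
    """
    logs = [math.log10(v) for v in episode_lengths]
    return statistics.pstdev(logs)


# Synthetic placeholder series, chosen only to contrast a flat and an erratic curve.
steady = [10, 11, 10, 12]
erratic = [100, 5000, 80, 2000]

print(log_spread(steady) < log_spread(erratic))  # True
```

A rolling version of this statistic over consecutive windows would show whether a curve's volatility shrinks as training progresses, which a single end-of-training value cannot.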

### Interpretation

This chart supports two conclusions about the evaluated reinforcement learning task:

1. **The superiority of structured state information:** Using "Internal State" (likely a direct, symbolic representation of the environment) as observation leads to dramatically better learning outcomes than using raw "RGB Pixels" (visual input). This suggests the task's state is more efficiently captured by the internal representation, and learning from pixels is a much harder, more unstable problem for these algorithms.

2. **The benefit of action masking:** The "MaskablePPO" algorithm, which excludes invalid actions from the policy's action distribution, demonstrates a decisive advantage over standard PPO. This holds for both observation types but is especially critical when learning from high-dimensional pixels, where masking prevents the agent from wasting exploration on invalid actions and leads to faster, more stable learning.
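The idea behind action masking can be sketched as a softmax restricted to valid actions: invalid actions receive probability zero, and the remaining probability mass is renormalized over the valid ones. This is a generic illustration with hypothetical names (`masked_softmax`, `logits`, `valid`), not the implementation used to produce the chart.

```python
import math
from typing import List, Sequence


def masked_softmax(logits: Sequence[float], valid: Sequence[bool]) -> List[float]:
    """Softmax over `logits` that assigns zero probability to invalid actions."""
    if len(logits) != len(valid):
        raise ValueError("logits and valid must have the same length")
    if not any(valid):
        raise ValueError("at least one action must be valid")

    # Subtract the largest valid logit before exponentiating, for numerical stability.
    top = max(l for l, ok in zip(logits, valid) if ok)
    exps = [math.exp(l - top) if ok else 0.0 for l, ok in zip(logits, valid)]
    total = sum(exps)
    return [e / total for e in exps]


# Three actions; the second is invalid in the current state (illustrative values).
probs = masked_softmax([1.0, 2.0, 3.0], [True, False, True])
print(probs[1])  # 0.0
```

Because a masked action can never be sampled, the agent spends no environment steps trying it, which is consistent with the faster early drop of the MaskablePPO curves described above.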

The extreme instability of PPO with pixels (magenta line) suggests it struggles to find a consistent policy, possibly due to the high dimensionality of the input and the lack of constraints on action selection. The near-perfect stability of MaskablePPO with internal state (green line) indicates the combination of a compact state representation and action masking allows the agent to quickly discover and reliably execute a near-optimal policy. The data implies that for this specific task, engineering the observation space (providing internal state) and using an algorithm that incorporates domain knowledge (action masking) are more impactful than simply increasing training time.