TECHNICAL ASSET FINGERPRINT

2cc222d8ff7a2fd05f8ec3b1

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

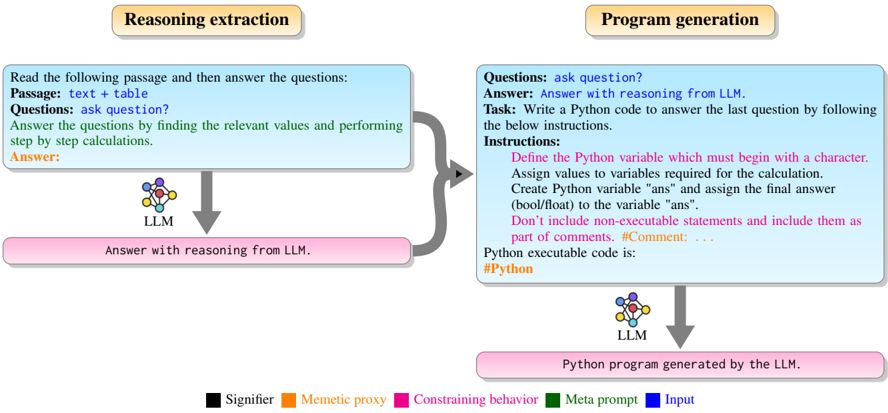

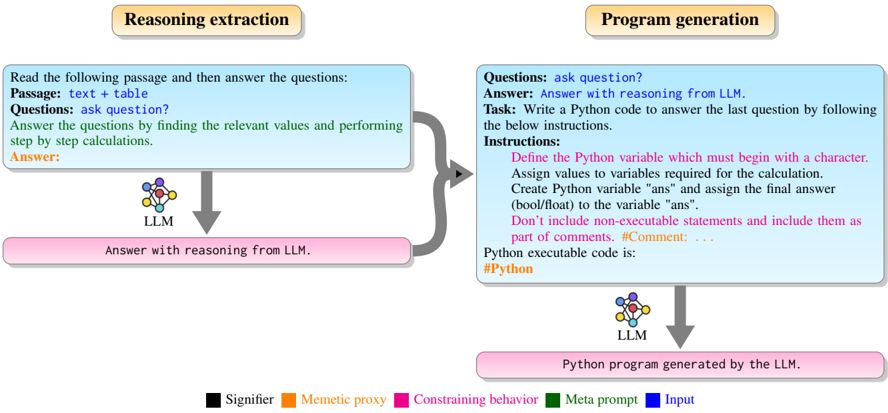

## Diagram: Reasoning Extraction and Program Generation

### Overview

The image presents a diagram illustrating the process of reasoning extraction and program generation using a Large Language Model (LLM). It outlines the steps involved in taking a passage and questions, extracting relevant information, and generating a Python program to answer the questions.

### Components/Axes

The diagram is divided into two main sections: "Reasoning extraction" on the left and "Program generation" on the right.

**Reasoning Extraction (Left Side):**

* **Header:** "Reasoning extraction" (orange background)

* **Input Box (Top):** Light blue box containing the following text:

    * "Read the following passage and then answer the questions:"

    * "Passage: text + table"

    * "Questions: ask question?"

    * "Answer the questions by finding the relevant values and performing step by step calculations."

    * "Answer:"

* **LLM Icon:** A multi-colored node diagram labeled "LLM"

* **Output Box (Bottom):** Pink box containing the text: "Answer with reasoning from LLM."

* **Flow:** An arrow flows from the Input Box to the LLM icon, and another arrow flows from the LLM icon to the Output Box.

**Program Generation (Right Side):**

* **Header:** "Program generation" (orange background)

* **Input Box (Top):** Light blue box containing the following text:

    * "Questions: ask question?"

    * "Answer: Answer with reasoning from LLM."

    * "Task: Write a Python code to answer the last question by following the below instructions."

    * "Instructions:"

        * "Define the Python variable which must begin with a character."

        * "Assign values to variables required for the calculation."

        * "Create Python variable "ans" and assign the final answer (bool/float) to the variable "ans"."

        * "Don't include non-executable statements and include them as part of comments. #Comment: ..."

    * "Python executable code is:"

    * "#Python"

* **LLM Icon:** A multi-colored node diagram labeled "LLM"

* **Output Box (Bottom):** Pink box containing the text: "Python program generated by the LLM."

* **Flow:** An arrow flows from the Input Box to the LLM icon, and another arrow flows from the LLM icon to the Output Box.

**Legend (Bottom):**

* Black square: "Signifier"

* Orange square: "Memetic proxy"

* Pink square: "Constraining behavior"

* Green square: "Meta prompt"

* Blue square: "Input"

### Detailed Analysis

**Reasoning Extraction:**

1. The process begins with an input passage containing text and a table, along with a question.

2. The LLM processes this information to find relevant values and perform calculations.

3. The LLM outputs an answer with reasoning.

**Program Generation:**

1. The process starts with a question and the answer with reasoning from the LLM.

2. The task is to write Python code that answers the last question, following specific instructions.

3. The instructions include defining Python variables, assigning values, creating an "ans" variable, and including comments.

4. The LLM generates a Python program.
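The two stages described above can be sketched as a pair of prompt templates chained through a single callable. This is a minimal illustration, not the diagram authors' implementation: the template wording is copied from the boxes in the diagram, while `run_pipeline`, the `llm` callable, and the `{passage}`, `{question}`, and `{reasoning}` placeholders are hypothetical names introduced here.

```python
from typing import Callable

# Prompt text follows the diagram; the {placeholders} are assumptions.
REASONING_TEMPLATE = (
    "Read the following passage and then answer the questions:\n"
    "Passage: {passage}\n"
    "Questions: {question}\n"
    "Answer the questions by finding the relevant values and performing "
    "step by step calculations.\n"
    "Answer:"
)

PROGRAM_TEMPLATE = (
    "Questions: {question}\n"
    "Answer: {reasoning}\n"
    "Task: Write a Python code to answer the last question by following "
    "the below instructions.\n"
    "Instructions:\n"
    "Define the Python variable which must begin with a character.\n"
    "Assign values to variables required for the calculation.\n"
    'Create Python variable "ans" and assign the final answer (bool/float) '
    'to the variable "ans".\n'
    "Don't include non-executable statements and include them as part of "
    "comments. #Comment: ...\n"
    "Python executable code is:\n"
    "#Python"
)


def run_pipeline(passage: str, question: str, llm: Callable[[str], str]) -> str:
    """Chain the two prompts; `llm` is any function mapping prompt -> text."""
    # Stage 1: reasoning extraction.
    reasoning = llm(REASONING_TEMPLATE.format(passage=passage, question=question))
    # Stage 2: program generation, with the stage-1 output embedded.
    return llm(PROGRAM_TEMPLATE.format(question=question, reasoning=reasoning))
```

Passing the model as a plain callable keeps the sketch independent of any particular client library; a real client call would be wrapped in a function with the same signature.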

### Key Observations

* The diagram highlights the use of an LLM in both reasoning extraction and program generation.

* The input and output boxes provide context for the LLM's role in each process.

* The instructions for program generation emphasize the importance of defining variables, assigning values, and including comments in the Python code.

### Interpretation

The diagram illustrates a system where an LLM is used to first extract reasoning from a given passage and questions, and then to generate a Python program based on that reasoning. This suggests a process of automated problem-solving where the LLM not only provides an answer but also generates the code necessary to arrive at that answer. The separation into "Reasoning extraction" and "Program generation" suggests a modular approach, where the LLM's reasoning capabilities are leveraged to create executable code. The instructions provided to the LLM for program generation are crucial for ensuring the code is well-structured and includes necessary elements like variable definitions and comments.

EXPERT: gemini-2.5-flash-free VERSION 1

RUNTIME: google-free/gemini-2.5-flash

INTEL_VERIFIED

## Diagram: LLM-based Reasoning Extraction and Program Generation Workflow

### Overview

This image presents a two-stage workflow diagram illustrating how a Large Language Model (LLM) can be used for "Reasoning extraction" followed by "Program generation." The diagram details the specific prompts and instructions provided to the LLM at each stage, highlighting different types of prompt components using a color-coded legend. The overall flow demonstrates a process where an LLM first generates a reasoned answer to a question based on provided context, and then uses that reasoning to generate executable Python code.

### Components/Axes

The diagram is structured horizontally into two main phases, each with a distinct header:

* **Top-center-left header**: "Reasoning extraction" (light brown oval with black text).

* **Top-center-right header**: "Program generation" (light brown oval with black text).

Each phase contains:

* A **light blue rectangular box with rounded corners**: Represents the input prompt provided to the LLM.

* A **neural network icon labeled "LLM"**: Represents the Large Language Model processing the input.

* A **pink rectangular box with rounded corners**: Represents the output generated by the LLM.

* **Gray arrows**: Indicate the flow of information between components.

**Legend (bottom-center of the image):**

The legend defines the color coding used for different types of prompt elements within the input boxes:

* **Black square**: Signifier

* **Orange square**: Memetic proxy

* **Magenta square**: Constraining behavior

* **Dark Green square**: Meta prompt

* **Blue square**: Input

### Detailed Analysis

The workflow proceeds from left to right, detailing two sequential processes involving an LLM.

**Phase 1: Reasoning extraction**

* **Input Prompt (light blue box, left)**:

    * "Read the following passage and then answer the questions:" (Black text - Signifier)

    * "Passage: text + table" (Blue text - Input)

    * "Questions: ask question?" (Black text - Signifier)

    * "Answer the questions by finding the relevant values and performing step by step calculations." (Dark Green text - Meta prompt)

    * "Answer:" (Orange text - Memetic proxy)

* **Processing**: A gray arrow points downwards from the input prompt box to the "LLM" component (neural network icon).

* **Output (pink box, bottom-left)**:

    * "Answer with reasoning from LLM." (Black text)

**Phase 2: Program generation**

* **Input Flow**: A large, curved gray arrow originates from the output of the "Reasoning extraction" phase (the pink box) and points to the right, indicating that this output feeds into the input prompt for the "Program generation" phase.

* **Input Prompt (light blue box, right)**: This box combines new instructions with the output from the previous phase.

    * "Questions: ask question?" (Black text - Signifier)

    * "Answer: Answer with reasoning from LLM." (Orange text - Memetic proxy) - This text is identical to the output of the previous phase, indicating it's directly incorporated.

    * "Task: Write a Python code to answer the last question by following the below instructions." (Black text - Signifier)

    * "Instructions:" (Black text - Signifier)

        * "Define the Python variable which must begin with a character." (Magenta text - Constraining behavior)

        * "Assign values to variables required for the calculation." (Magenta text - Constraining behavior)

        * "Create Python variable "ans" and assign the final answer (bool/float) to the variable "ans"." (Magenta text - Constraining behavior)

        * "Don't include non-executable statements and include them as part of comments. #Comment: ..." (Magenta text - Constraining behavior)

    * "Python executable code is:" (Black text - Signifier)

    * "#Python" (Dark Green text - Meta prompt)

* **Processing**: A gray arrow points downwards from the input prompt box to the "LLM" component (neural network icon).

* **Output (pink box, bottom-right)**:

    * "Python program generated by the LLM." (Black text)

### Key Observations

* The diagram clearly delineates two distinct stages of an LLM's application: first for generating human-readable reasoning, and second for translating that reasoning into executable code.

* The output of the first stage ("Answer with reasoning from LLM.") becomes a crucial part of the input for the second stage, demonstrating a chained prompting approach.

* The use of color-coding in the input prompts is critical for understanding the different roles of various prompt components:

    * **Signifiers (Black)**: General instructions or labels.

    * **Input (Blue)**: The raw data or context provided (e.g., "text + table").

    * **Meta prompt (Dark Green)**: High-level instructions guiding the LLM's approach (e.g., "step by step calculations," "#Python" indicating the desired output language).

    * **Memetic proxy (Orange)**: Placeholders or examples of the desired output format, or the actual output from a previous step that serves as context for the current step.

    * **Constraining behavior (Magenta)**: Specific, detailed rules or constraints that the LLM must adhere to when generating its output (e.g., variable naming conventions, output variable name, comment requirements).
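One way to make this color coding concrete is to store a prompt as a list of role-tagged segments. The sketch below encodes the "Reasoning extraction" prompt using the role assignments given in this description; the `Role` enum, the `Segment` tuple, and the helpers `render` and `by_role` are hypothetical names used only for illustration.

```python
from enum import Enum
from typing import List, NamedTuple


class Role(Enum):
    """Prompt-component roles from the legend; values are the legend colors."""
    SIGNIFIER = "black"
    MEMETIC_PROXY = "orange"
    CONSTRAINING_BEHAVIOR = "magenta"
    META_PROMPT = "dark green"
    INPUT = "blue"


class Segment(NamedTuple):
    role: Role
    text: str


# The stage-1 prompt, tagged as in the description above.
REASONING_PROMPT = [
    Segment(Role.SIGNIFIER, "Read the following passage and then answer the questions:"),
    Segment(Role.INPUT, "Passage: text + table"),
    Segment(Role.SIGNIFIER, "Questions: ask question?"),
    Segment(
        Role.META_PROMPT,
        "Answer the questions by finding the relevant values and "
        "performing step by step calculations.",
    ),
    Segment(Role.MEMETIC_PROXY, "Answer:"),
]


def render(segments: List[Segment]) -> str:
    """Join the segments into the plain-text prompt sent to the model."""
    return "\n".join(segment.text for segment in segments)


def by_role(segments: List[Segment], role: Role) -> List[str]:
    """Return the text of every segment that carries the given role."""
    return [segment.text for segment in segments if segment.role is role]
```

Keeping the role next to each segment makes it straightforward to swap only the `INPUT` parts per example while leaving the rest of the prompt fixed.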

### Interpretation

This diagram illustrates a sophisticated prompting strategy designed to leverage the capabilities of Large Language Models for complex problem-solving.

The "Reasoning extraction" phase demonstrates how an LLM can be prompted to not just provide an answer, but to explicitly show its step-by-step reasoning. This is achieved by using a "Meta prompt" (dark green) that instructs the LLM to perform "step by step calculations" and a "Memetic proxy" (orange) that sets up the expectation for an "Answer:". This approach is valuable for transparency, debugging, and ensuring the LLM follows a logical path.

The "Program generation" phase then takes this extracted reasoning and uses it as context to generate executable code. The "Memetic proxy" (orange) carries the LLM's previous reasoning into this new prompt, allowing the LLM to build upon its prior understanding. Crucially, this phase heavily utilizes "Constraining behavior" (magenta) prompts. These detailed instructions guide the LLM to produce code that adheres to specific syntax, variable naming conventions, and output formats (e.g., defining `ans` as a `bool/float`). The "Meta prompt" (dark green) "#Python" explicitly signals the desired programming language.

The overall process suggests a method for enhancing the reliability and controllability of LLM outputs. By first extracting explicit reasoning and then using that reasoning, along with strict constraints, to generate code, the system aims to produce more accurate, verifiable, and usable programmatic solutions compared to a single-shot prompt for code generation. This two-step approach mitigates potential issues where an LLM might generate correct code without clear reasoning, or incorrect code due to misinterpreting implicit requirements. It highlights a pattern of using LLMs for both cognitive (reasoning) and generative (coding) tasks in a structured, interdependent manner.
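Because the prompt requires the final result to be stored in a variable named `ans`, a caller can run the generated program and read that variable back. The diagram does not show this step, so the following is a sketch under assumptions: `run_generated_program` is a hypothetical helper, the sample program uses made-up values, and emptying `__builtins__` is not a security sandbox, so untrusted model output should still run in an isolated process.

```python
from typing import Union


def run_generated_program(source: str) -> Union[bool, float]:
    """Execute generated code and return the value bound to `ans`."""
    namespace: dict = {}
    # Builtins are emptied so only plain arithmetic and assignments work.
    # This limits accidents; it is NOT a real sandbox.
    exec(source, {"__builtins__": {}}, namespace)
    if "ans" not in namespace:
        raise KeyError('generated program did not assign "ans"')
    ans = namespace["ans"]
    if isinstance(ans, bool):  # check bool first: bool is a subclass of int
        return ans
    if isinstance(ans, (int, float)):
        return float(ans)
    raise TypeError(f'"ans" must be bool or float, got {type(ans).__name__}')


# Hypothetical program in the shape the instructions ask for.
SAMPLE_PROGRAM = """
#Comment: illustrative values only, not taken from any real passage
revenue_2018 = 100.0
revenue_2019 = 120.0
ans = (revenue_2019 - revenue_2018) / revenue_2018
"""

result = run_generated_program(SAMPLE_PROGRAM)
```

Reading a fixed variable name rather than parsing printed output is what makes the "bool/float in `ans`" constraint useful to downstream code.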

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Diagram: Reasoning Extraction vs. Program Generation

### Overview

This diagram illustrates two distinct processes: "Reasoning Extraction" and "Program Generation," both utilizing a Large Language Model (LLM). The diagram visually represents the input, processing steps, and output for each process, highlighting the different instructions and expected outcomes.

### Components/Axes

The diagram is divided into two main sections, one for each process, positioned side-by-side. Each section contains rectangular boxes representing stages: Input, LLM processing, and Output. Arrows indicate the flow of information. A legend at the bottom explains the color-coding used to represent different elements within the diagram.

**Legend:**

* **Black:** Signifier

* **Orange:** Memetic proxy

* **Yellow:** Constraining behavior

* **Purple:** Meta prompt

* **Green:** Input

**Reasoning Extraction Section:**

* **Header:** "Reasoning extraction" (Black text on light blue background)

* **Input Box:** Contains the text: "Read the following passage and then answer the questions: Passage: text + table Questions: ask question? Answer the questions by finding the relevant values and performing step by step calculations. Answer:" (Black text)

* **LLM Box:** Contains the text: "LLM" and below it "Answer with reasoning from LLM." (Black text)

* **Output Box:** Contains the text: "Answer with reasoning from LLM." (Black text)

**Program Generation Section:**

* **Header:** "Program generation" (Black text on light blue background)

* **Input Box:** Contains the text: "Questions: ask question? Answer: Answer with reasoning from LLM." (Black text)

* **Instructions Box:** Contains the text: "Instructions: Define the Python variable which must begin with a character. Assign values to variables required for the calculation. Create Python variable "ans" and assign the final answer (bool/float) to the variable "ans". Don't include non-executable statements and include them as part of comments. #Comment: ... Python executable code is: #Python" (Black text)

* **LLM Box:** Contains the text: "LLM" and below it "Python program generated by the LLM." (Black text)

* **Output Box:** Contains the text: "Python program generated by the LLM." (Black text)

### Detailed Analysis

The diagram illustrates a clear distinction in the tasks performed by the LLM.

**Reasoning Extraction:**

The input is a passage of text combined with a table, and a question. The LLM is instructed to answer the question by extracting relevant values and performing calculations. The output is the answer, accompanied by the reasoning process.

**Program Generation:**

The input is a question and an initial answer from the LLM. The LLM is provided with specific instructions for generating Python code to answer the question. These instructions include defining variables, assigning values, and creating a final answer variable. The output is a Python program generated by the LLM.

### Key Observations

The diagram highlights the LLM's versatility in handling different types of tasks. It can be used for both reasoning and code generation, depending on the input and instructions provided. The color-coding effectively distinguishes between different elements of the process, such as input, processing, and output. The use of "Meta prompt" (purple) suggests a higher-level instruction guiding the LLM's behavior.

### Interpretation

The diagram demonstrates two distinct applications of LLMs: one focused on extracting and reasoning about information from text and tables, and the other focused on translating reasoning into executable code. The "Reasoning Extraction" process emphasizes understanding and interpretation, while the "Program Generation" process emphasizes implementation and execution. The diagram suggests a workflow where the LLM can first reason about a problem and then generate a program to solve it. The inclusion of instructions for Python code generation indicates a focus on practical application and automation. The diagram is a conceptual illustration of how LLMs can be used in a broader system for problem-solving and decision-making. The color coding is a visual aid to understand the flow of information and the different roles played by various components. The diagram does not contain any numerical data or specific values, but rather focuses on the process and flow of information.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Diagram: LLM-Based Reasoning Extraction and Program Generation Pipeline

### Overview

The image is a technical flowchart illustrating a two-stage process for using a Large Language Model (LLM) to first extract reasoning from a text passage and then generate a Python program to solve a specific question. The diagram uses color-coded elements to distinguish between different types of components in the process.

### Components/Axes

The diagram is divided into two primary, side-by-side sections, each with a title in a rounded rectangle at the top. A legend at the bottom defines the color scheme used throughout.

**1. Left Section: "Reasoning extraction"**

* **Input Block (Light Blue):** Contains the initial instructions and data.

    * Text: "Read the following passage and then answer the questions:"

    * "Passage: text + table"

    * "Questions: ask question?"

    * "Answer the questions by finding the relevant values and performing step by step calculations."

    * "Answer:" (in orange text)

* **Process Icon:** A stylized brain/network icon labeled "LLM".

* **Output Block (Pink):** The result of the first stage.

    * Text: "Answer with reasoning from LLM."

* **Flow:** An arrow points from the Input Block to the LLM icon, and another arrow points from the LLM icon to the Output Block.

**2. Right Section: "Program generation"**

* **Input Block (Light Blue):** Contains the task for the second stage.

    * "Questions: ask question?"

    * "Answer: Answer with reasoning from LLM." (This text is in blue, indicating it's the input from the previous stage).

    * "Task: Write a Python code to answer the last question by following the below instructions."

    * "Instructions:" (followed by a list in pink text)

        * "Define the Python variable which must begin with a character."

        * "Assign values to variables required for the calculation."

        * "Create Python variable "ans" and assign the final answer (bool/float) to the variable "ans"."

        * "Don't include non-executable statements and include them as part of comments. #Comment: ..."

    * "Python executable code is:"

    * "#Python" (in orange text)

* **Process Icon:** A second, identical LLM icon.

* **Output Block (Pink):** The final output of the pipeline.

    * Text: "Python program generated by the LLM."

* **Flow:** A thick, curved grey arrow connects the Output Block of the "Reasoning extraction" section to the Input Block of the "Program generation" section. Within the right section, arrows flow from the Input Block to the LLM icon, and from the LLM icon to the final Output Block.

**3. Legend (Bottom Center)**

A horizontal legend defines the color coding:

* **Black Square:** "Signifier"

* **Orange Square:** "Memetic proxy"

* **Pink Square:** "Constraining behavior"

* **Green Square:** "Meta prompt"

* **Blue Square:** "Input"

### Detailed Analysis

The diagram explicitly maps the color legend to specific text elements within the flowchart:

* **Blue (Input):** The text "Answer: Answer with reasoning from LLM." in the right-hand input block is colored blue, marking it as the direct input carried over from the first stage's output.

* **Orange (Memetic proxy):** The labels "Answer:" (left) and "#Python" (right) are in orange. These appear to be placeholders or signposts for the type of content expected or generated.

* **Pink (Constraining behavior):** The entire list of "Instructions" in the right-hand block is in pink text. This color also fills the two main output blocks ("Answer with reasoning from LLM." and "Python program generated by the LLM."), suggesting these outputs are the constrained results of the process.

* **Green (Meta prompt):** The instructional sentences within the light blue boxes (e.g., "Read the following passage...", "Write a Python code...") are in green text, identifying them as meta-prompts guiding the LLM's behavior.

* **Black (Signifier):** Used for the majority of the standard descriptive text and labels.

### Key Observations

1. **Sequential Pipeline:** The process is strictly sequential. The reasoning extracted in the first stage is a mandatory input for the second stage's code generation task.

2. **Constraint-Driven Generation:** The second stage is heavily governed by a set of explicit, pink-colored "Constraining behavior" instructions that dictate the structure and content of the desired Python code (e.g., variable naming rules, use of comments).

3. **Role of the LLM:** The LLM is depicted as the central processing unit in both stages, transforming unstructured text and instructions into structured reasoning and then into executable code.

4. **Color-Coded Semantics:** The diagram uses color not just for aesthetics but to semantically classify different parts of the prompt and output, providing a meta-layer of information about the function of each text element.
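The "Constraining behavior" instructions lend themselves to a mechanical check on the generated program. The sketch below uses Python's standard `ast` module; `check_constraints` is a hypothetical helper, and reading "must begin with a character" as "must begin with a letter" is an interpretation, not something the diagram states.

```python
import ast
from typing import List


def check_constraints(source: str) -> List[str]:
    """Return a list of constraint violations; an empty list means none found."""
    try:
        tree = ast.parse(source)
    except SyntaxError as exc:
        # Prose left outside comments usually shows up as a syntax error.
        return [f"not executable: {exc.msg}"]

    # Collect every name that is assigned to anywhere in the program.
    assigned = set()
    for node in ast.walk(tree):
        if isinstance(node, ast.Name) and isinstance(node.ctx, ast.Store):
            assigned.add(node.id)

    problems = []
    for name in sorted(assigned):
        # Assumption: "begin with a character" means "begin with a letter".
        if not name[0].isalpha():
            problems.append(f'variable "{name}" does not begin with a letter')
    if "ans" not in assigned:
        problems.append('variable "ans" is never assigned')
    return problems
```

A check like this only inspects structure. It does not confirm that `ans` holds a bool or float at run time, which requires executing the program.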

### Interpretation

This diagram outlines a sophisticated method for leveraging an LLM in a multi-step, constrained problem-solving workflow. It moves beyond simple question-answering to a pipeline that first performs **analytical reasoning** (extracting and calculating from text/tables) and then **synthetic generation** (writing code based on that reasoning).

The color legend is particularly insightful. It suggests a framework for prompt engineering where:

* **Meta prompts (green)** set the overall task.

* **Inputs (blue)** are the data to process.

* **Constraining behaviors (pink)** are critical rules that shape the output to be useful and executable, preventing the LLM from generating arbitrary or non-compliant responses.

* **Memetic proxies (orange)** act as symbolic anchors within the text.

The pipeline's goal is to automate the translation of a natural language problem into a verifiable, executable program. The first stage ensures the logic is sound, and the second stage codifies that logic. This approach could be used for educational tools (generating solution code), data analysis automation, or converting specification documents into functional scripts. The explicit separation of reasoning from coding highlights an understanding that reliable code generation depends on first having a clear, step-by-step reasoning process.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Diagram: LLM Workflow for Reasoning and Code Generation

### Overview

The diagram illustrates a two-stage process for utilizing a Large Language Model (LLM) to extract reasoning from text and generate executable Python code. The workflow is divided into **Reasoning Extraction** (left) and **Program Generation** (right), with bidirectional arrows indicating iterative refinement between stages.

---

### Components/Axes

1. **Header**:

- Title: "Reasoning extraction" (left) and "Program generation" (right), both in bold yellow boxes.

2. **Main Sections**:

- **Reasoning Extraction**:

- Input: "Read the following passage and then answer the questions: Passage: text + table"

- Output: "Answer with reasoning from LLM."

- Includes a flowchart with interconnected nodes (colored circles) representing LLM processing steps.

- **Program Generation**:

- Input: "Questions: ask question?" and "Answer: Answer with reasoning from LLM."

- Task: "Write a Python code to answer the last question by following the below instructions."

- Instructions include variable naming rules (e.g., "Define the Python variables required for the calculation") and constraints (e.g., "Don’t include non-executable statements").

3. **Legend** (bottom center):

- Colors and labels:

- **Signifier**: Black (not visibly used in diagram).

- **Memetic proxy**: Orange (not visibly used).

- **Constraining behavior**: Pink (used for "Answer with reasoning from LLM" text).

- **Meta prompt**: Green (not visibly used).

- **Input**: Blue (used for LLM icons and text boxes).

---

### Detailed Analysis

1. **Reasoning Extraction**:

- **Input**: Text + table (unspecified content).

- **Process**: LLM analyzes the passage to identify relevant values and perform step-by-step calculations.

- **Output**: Structured answer with embedded reasoning (e.g., "Answer: [LLM-generated text]").

2. **Program Generation**:

- **Input**: Previous LLM answer (e.g., "Answer: ...").

- **Task**: Generate Python code adhering to strict formatting rules:

- Variables must start with a character.

- Final answer assigned to variable `ans`.

- Non-executable statements must not appear as code; they are placed in comments of the form `#Comment: ...`.

- **Output**: Python program generated by LLM (e.g., "Python program generated by the LLM").
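If a model ignores the comment rule and leaves prose in its output, a post-processing pass can move that prose into `#Comment:` lines. The diagram does not describe such a step, so this is a hypothetical sketch: `comment_out_prose` checks each line independently with `ast.parse`, which suits the flat, assignment-style programs the prompt asks for but would mangle multi-line constructs, and it cannot catch prose that happens to be valid Python (a single bare word, for example).

```python
import ast


def comment_out_prose(source: str) -> str:
    """Turn lines that do not parse as Python into '#Comment: ...' lines."""
    cleaned = []
    for line in source.splitlines():
        stripped = line.strip()
        # Blank lines and existing comments pass through unchanged.
        if not stripped or stripped.startswith("#"):
            cleaned.append(line)
            continue
        try:
            ast.parse(stripped)  # assumption: one statement per line
            cleaned.append(line)
        except SyntaxError:
            cleaned.append(f"#Comment: {stripped}")
    return "\n".join(cleaned)


# Hypothetical raw model output with one stray prose line.
RAW_OUTPUT = (
    "The growth rate is computed below\n"
    "old_value = 100.0\n"
    "new_value = 120.0\n"
    "ans = (new_value - old_value) / old_value"
)

cleaned_program = comment_out_prose(RAW_OUTPUT)
```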

---

### Key Observations

1. **Bidirectional Flow**: Arrows between sections suggest iterative refinement (e.g., revising reasoning to improve code generation).

2. **Color Coding**:

- Pink highlights critical outputs ("Answer with reasoning from LLM").

- Blue dominates LLM-related elements (icons, text boxes).

3. **Constraints**: Explicit rules for code generation (e.g., variable naming, comment formatting) emphasize precision.

---

### Interpretation

This diagram outlines a structured workflow for leveraging LLMs in technical tasks:

1. **Reasoning Extraction** focuses on deriving logical steps from unstructured text/tables.

2. **Program Generation** translates these steps into executable code, enforcing syntactic and semantic constraints to ensure validity.

3. The use of color-coded labels (e.g., "Constraining behavior") suggests a framework for controlling LLM outputs, balancing creativity with adherence to technical requirements.

The process emphasizes **iterative refinement**, where reasoning and code generation are interdependent. For example, ambiguous reasoning might require revisiting the text passage, while code errors could prompt revisiting the LLM’s initial answer. This mirrors real-world scenarios where LLMs assist in bridging natural language understanding and technical implementation.
