## Diagrams: Transformer Architectures Comparison

### Overview

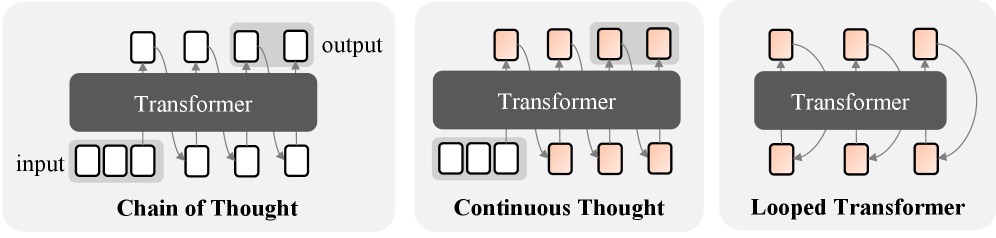

The image presents three side-by-side diagrams illustrating variations of Transformer-based architectures: **Chain of Thought**, **Continuous Thought**, and **Looped Transformer**. Each diagram depicts a "Transformer" block with distinct input-output configurations, emphasizing differences in processing flow and structural design.

### Components/Axes

1. **Chain of Thought**:

   - **Input**: Three white boxes labeled "input" connected to the Transformer.

   - **Transformer**: Central gray block labeled "Transformer."

   - **Output**: Three white boxes labeled "output" receiving processed data.

   - **Flow**: Linear progression from input → Transformer → output.

2. **Continuous Thought**:

   - **Input**: Three white boxes labeled "input" connected to the Transformer.

   - **Transformer**: Central gray block labeled "Transformer."

   - **Output**: Four beige boxes labeled "output," two of which are highlighted in light gray.

   - **Flow**: Linear input → Transformer → output, with additional intermediate processing steps (beige boxes).

3. **Looped Transformer**:

   - **Input**: Three beige boxes connected to the Transformer.

   - **Transformer**: Central gray block labeled "Transformer."

   - **Output**: Three beige boxes, with a feedback loop (curved arrow) connecting the Transformer to the output.

   - **Flow**: Input → Transformer → output, with a recursive loop enabling iterative processing.

### Detailed Analysis

- **Chain of Thought**:

  - The simplest of the three, with a direct input-to-output mapping.

  - No feedback loop or intermediate steps are drawn.

  - All boxes are uniformly white, consistent with a static, single-pass processing model.

- **Continuous Thought**:

  - Introduces **intermediate processing steps** (beige boxes) between the Transformer and the output.

  - Two output boxes are highlighted in light gray, which may mark steps that are selected or prioritized.

  - The flow remains linear but adds complexity through additional computational stages.

- **Looped Transformer**:

  - Features a **feedback loop** (curved arrow) linking the Transformer and its output, enabling iterative refinement.

  - Input and output boxes share the beige color used for the intermediate steps in Continuous Thought, which may indicate the same kind of data.

  - The loop suggests recurrent processing: the same Transformer block is applied repeatedly to its own output.
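
The feedback loop in the Looped Transformer diagram can be read as applying one block repeatedly to its own output. The sketch below illustrates that reading under stated assumptions: `toy_block` is a hypothetical stand-in for a real Transformer block (it has no attention or MLP), and `num_loops` is an illustrative parameter not taken from the figure. Some looped-Transformer formulations also re-inject the original input on every iteration; this sketch omits that.

```python
from typing import Callable, List

Vector = List[float]
Block = Callable[[Vector], Vector]


def toy_block(x: Vector) -> Vector:
    """Hypothetical stand-in for a Transformer block.

    Applies a fixed residual update x + w * (1 - x) with one shared weight w;
    a real block would use attention and an MLP instead.
    """
    w = 0.5  # the same parameter is reused on every call
    return [xi + w * (1.0 - xi) for xi in x]


def stacked_transformer(x: Vector, blocks: List[Block]) -> Vector:
    """Ordinary stack: each layer is its own block (its own weights)."""
    for block in blocks:
        x = block(x)
    return x


def looped_transformer(x: Vector, block: Block, num_loops: int) -> Vector:
    """Looped variant: one block applied num_loops times, output fed back in."""
    for _ in range(num_loops):
        x = block(x)
    return x


if __name__ == "__main__":
    # Three passes through one shared block: three layers of computation,
    # but only one block's worth of parameters.
    print(looped_transformer([0.0, 1.0], toy_block, num_loops=3))  # [0.875, 1.0]
```

Because the loop reuses `toy_block`, `looped_transformer(x, toy_block, k)` gives the same result as `stacked_transformer(x, [toy_block] * k)`: effective depth grows with the number of loops while the parameter count stays fixed.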

### Key Observations

1. **Structural Complexity**:

   - Chain of Thought is the most basic, while Looped Transformer introduces recursion.

   - Continuous Thought sits between the two, adding intermediate steps but no feedback.

2. **Color Coding**:

   - White boxes (Chain of Thought) versus beige boxes (Continuous Thought and Looped Transformer) may denote different input/output types or processing stages.

   - The gray-highlighted boxes in Continuous Thought may indicate selective focus.

3. **Flow Direction**:

   - All diagrams use top-to-bottom flow, but Looped Transformer adds lateral feedback.

### Interpretation

These diagrams likely represent theoretical or conceptual models for enhancing Transformer architectures:

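- **Chain of Thought** uses an unmodified, non-recurrent Transformer; any intermediate reasoning appears only in its ordinary output.

- **Continuous Thought** adds intermediate computation stages before the final output, possibly to support tasks that require staged computation (e.g., multi-step reasoning).

- **Looped Transformer** reuses the same block through a feedback loop, allowing more computation without adding new blocks; this points to tasks that benefit from iterative refinement and, more speculatively, to dynamic settings such as real-time adaptation or memory-augmented systems.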

The progression from single-pass to recursive architectures reflects efforts to improve context retention, iterative computation, or task-specific optimization in Transformer-based systems. The absence of numerical data suggests these are conceptual frameworks rather than empirical results.
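
The Continuous Thought flow can be made concrete with a small sketch. It assumes a Coconut-style reading (an assumption, not stated in the figure): each intermediate box is the hidden state at the last position, fed straight back into the Transformer as the next input instead of being decoded into a discrete token as in chain of thought. `toy_transformer` is a hypothetical causal stand-in (each position averages itself with the previous position), and `num_thoughts` is an illustrative parameter.

```python
from typing import Callable, List, Tuple

Vector = List[float]
Model = Callable[[List[Vector]], List[Vector]]


def toy_transformer(sequence: List[Vector]) -> List[Vector]:
    """Hypothetical causal stand-in for a Transformer.

    The output at each position is the average of that position's input and
    the previous position's input (zeros before the first position).
    """
    outputs: List[Vector] = []
    previous = [0.0] * len(sequence[0])
    for vec in sequence:
        outputs.append([0.5 * (v + p) for v, p in zip(vec, previous)])
        previous = vec
    return outputs


def continuous_thought(
    inputs: List[Vector], model: Model, num_thoughts: int
) -> Tuple[List[Vector], List[Vector]]:
    """Generate num_thoughts latent steps by feeding the last hidden state back.

    Chain of thought would instead map each hidden state to a discrete token
    and re-embed it; here the vector is appended unchanged.
    """
    sequence = [list(v) for v in inputs]
    thoughts: List[Vector] = []
    for _ in range(num_thoughts):
        hidden = model(sequence)[-1]  # hidden state at the last position
        thoughts.append(hidden)
        sequence.append(hidden)  # reused directly as the next input embedding
    return sequence, thoughts


if __name__ == "__main__":
    _, thoughts = continuous_thought([[0.0], [4.0]], toy_transformer, num_thoughts=3)
    print(thoughts)  # [[2.0], [3.0], [2.5]]
```

Because the intermediate vectors never pass through a vocabulary, each step carries a full hidden state rather than a single discrete token.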