## Technical Diagram: Decoding Process Comparison

### Overview

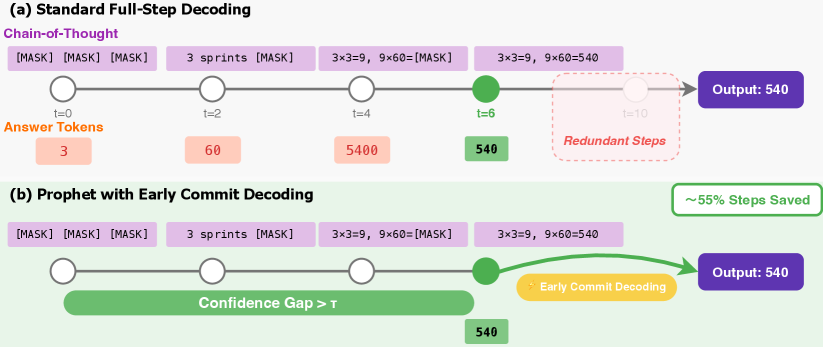

The image is a technical diagram comparing two decoding methods for a language model or similar AI system. It visually contrasts a standard approach with an optimized method called "Prophet with Early Commit Decoding," highlighting a reduction in computational steps. The diagram is divided into two horizontal panels labeled (a) and (b).

### Components/Axes

The diagram uses a horizontal timeline format to represent sequential decoding steps.

**Panel (a): Standard Full-Step Decoding**

* **Title:** "(a) Standard Full-Step Decoding"

* **Process Label:** "Chain-of-Thought" (purple text, top-left).

* **Timeline:** A horizontal line with circular nodes at discrete time steps: `t=0`, `t=2`, `t=4`, `t=6`, and a faded node at `t=10`.

* **Input/Output Tokens (Purple Boxes):** Above the timeline, purple boxes show the sequence of generated tokens:

    * At `t=0`: `[MASK] [MASK] [MASK]`

    * At `t=2`: `3 sprints [MASK]`

    * At `t=4`: `3x3=9, 9x60=[MASK]`

    * At `t=6`: `3x3=9, 9x60=540`

* **Answer Tokens (Orange Boxes):** Below the timeline, orange boxes labeled "Answer Tokens" show intermediate numerical results:

    * Below `t=0`: `3`

    * Below `t=2`: `60`

    * Below `t=4`: `5400`

    * Below `t=6`: `540` (this box is green rather than orange, and its value matches the final output).

* **Final Output:** A dark purple box on the far right labeled "Output: 540".

* **Redundant Steps:** A red dashed box encloses the timeline segment from `t=6` to `t=10`, labeled "Redundant Steps".

**Panel (b): Prophet with Early Commit Decoding**

* **Title:** "(b) Prophet with Early Commit Decoding"

* **Timeline & Input/Output Tokens:** The sequence of purple token boxes is identical to panel (a).

* **Confidence Indicator:** A long green bar spans the timeline from `t=0` to just before `t=6`, labeled "Confidence Gap > τ".

* **Early Commit Point:** The node at `t=6` is green. A green arrow originates from this node, curving upward and then rightward to bypass the subsequent steps.

* **Early Commit Label:** A yellow box along the green arrow is labeled "Early Commit Decoding".

* **Final Output:** The same dark purple "Output: 540" box is connected directly by the green arrow.

* **Efficiency Metric:** A green-outlined box in the top-right corner states "~55% Steps Saved".

### Detailed Analysis

The diagram traces a worked reasoning example whose chain of thought reads `3 sprints`, followed by `3x3=9, 9x60=540`; the underlying word problem itself is not shown. The core difference between the panels lies in when the final answer (`540`) is committed.

* **Standard Method (a):** The model fills in the `[MASK]` placeholders over successive decoding steps and produces the final answer token `540` at step `t=6`. It then continues for four more steps (`t=7` through `t=10`), which are labeled "Redundant Steps." The "Answer Tokens" row appears to show the answer value at each labeled step (`3`, `60`, `5400`), which keeps changing until it settles on the correct `540` at `t=6`.

* **Prophet Method (b):** The process is identical up to step `t=6`. The key difference is the "Confidence Gap > τ" bar, indicating that the system monitors a confidence metric. Once the confidence gap exceeds the threshold τ at `t=6`, it triggers "Early Commit Decoding." The model then skips the redundant steps (`t=7` to `t=10`) and outputs the final answer directly, which the diagram reports as saving approximately 55% of the decoding steps.
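The control flow contrasted in the two panels can be sketched as a loop with an early exit. This is a minimal illustration, not the method's actual implementation: `early_commit_decode`, `toy_step`, and the gap values are hypothetical, and the toy trace simply mirrors the answer values drawn in the figure.

```python
from typing import Callable, Tuple


def early_commit_decode(
    step_fn: Callable[[int], Tuple[str, float]],
    total_steps: int,
    tau: float,
) -> Tuple[str, int]:
    """Run up to `total_steps` decoding steps, stopping once gap > tau.

    `step_fn(t)` is a hypothetical stand-in for one decoding step. It returns
    the current answer candidate and its confidence gap.
    Returns (answer, steps_used).
    """
    answer = ""
    for t in range(1, total_steps + 1):
        answer, gap = step_fn(t)
        if gap > tau:
            return answer, t  # early commit: remaining steps are skipped
    return answer, total_steps  # threshold never reached: full schedule


def toy_step(t: int) -> Tuple[str, float]:
    """Toy trace mirroring the figure's answer values; gaps are made up."""
    if t < 2:
        answer = "3"
    elif t < 4:
        answer = "60"
    elif t < 6:
        answer = "5400"
    else:
        answer = "540"
    gap = 0.9 if t >= 6 else 0.05 * t  # assumed values, not from the diagram
    return answer, gap


# Panel (b): commit as soon as the gap exceeds tau.
print(early_commit_decode(toy_step, total_steps=10, tau=0.5))
# Panel (a): an unreachable threshold reproduces full-step decoding.
print(early_commit_decode(toy_step, total_steps=10, tau=2.0))
```

With these assumed values, the first call stops at step 6 and the second runs all 10 steps; both return the same answer, which is the point the diagram makes.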

### Key Observations

1. **Spatial Grounding:** The "Redundant Steps" box in (a) and the "Early Commit Decoding" arrow in (b) occupy the same spatial region (right side of the timeline), visually emphasizing the replacement of wasted computation with an efficient shortcut.

2. **Color Consistency:** The final answer token `540` is consistently colored green in both panels (in the "Answer Tokens" row at `t=6`), linking it to the green "Confidence" bar and the green "Early Commit" arrow in panel (b).

3. **Trend Verification:** Both panels show the same progression of generated tokens, from all `[MASK]` placeholders to a complete equation. The divergence is in the *termination* of the process, not in the content generated up to the commit point.

4. **Mathematical Example:** The embedded calculation (`3x3=9, 9x60=540`) serves as a concrete example of a multi-step reasoning task where the model could potentially determine the final answer before finishing all planned generation steps.

### Interpretation

This diagram argues for the efficiency of the "Prophet" decoding method. It suggests that standard full-step decoding often performs unnecessary computation after the model has already confidently determined the correct output. The "Confidence Gap > τ" condition is the critical mechanism: it acts as an internal monitor that, when triggered, allows the system to end the planned decoding schedule early.
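The diagram does not define how the confidence gap is computed. One plausible reading, stated here purely as an assumption, is the difference between the highest and second-highest predicted probabilities for the answer token. The sketch below uses hypothetical helper names and plain Python lists in place of real model logits.

```python
import math
from typing import List, Sequence


def softmax(logits: Sequence[float]) -> List[float]:
    """Numerically stable softmax over a list of logits."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def confidence_gap(logits: Sequence[float]) -> float:
    """Assumed definition: top-1 probability minus top-2 probability."""
    if len(logits) < 2:
        raise ValueError("need at least two candidate tokens")
    probs = sorted(softmax(logits), reverse=True)
    return probs[0] - probs[1]


def should_commit(logits: Sequence[float], tau: float) -> bool:
    """Commit early when the gap exceeds the threshold tau."""
    return confidence_gap(logits) > tau
```

Under this definition, a near-uniform distribution yields a gap close to 0 and a sharply peaked one yields a gap close to 1, so τ controls how decisive the model must be before committing.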

The diagram attributes the **~55% Steps Saved** figure to early commit. In the schematic itself, skipping `t=7` through `t=10` removes 4 of the 10 drawn steps (40%), so the timeline is best read as illustrative rather than to scale. Fewer decoding steps translate into lower latency and compute cost per output. The diagram implies that the "Prophet" method maintains output quality (the same "Output: 540") while reducing the step count. The simple arithmetic problem makes the concept accessible, and the diagram suggests the principle could extend to other generative tasks where confidence can be measured, such as translation, summarization, or code generation. The core insight is that more generation is not always better; optimal generation should be *just enough*.
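The step-savings arithmetic can be made explicit. The sketch below assumes savings are measured as the fraction of scheduled steps skipped after the commit point; `fraction_steps_saved` is a hypothetical helper, not a name taken from the diagram.

```python
def fraction_steps_saved(total_steps: int, commit_step: int) -> float:
    """Fraction of the scheduled steps that are skipped after committing."""
    if not 0 < commit_step <= total_steps:
        raise ValueError("commit_step must be in 1..total_steps")
    return (total_steps - commit_step) / total_steps


# Drawn timeline: 10 scheduled steps, commit at step 6.
saved = fraction_steps_saved(total_steps=10, commit_step=6)
print(f"{saved:.0%}")  # prints "40%"
```

The gap between this 40% and the labeled ~55% suggests the label reports a measured result, while the tick marks on the timeline are schematic.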