
## Diagram: Retrieval Augmented Generation (RAG) Architectures

### Overview

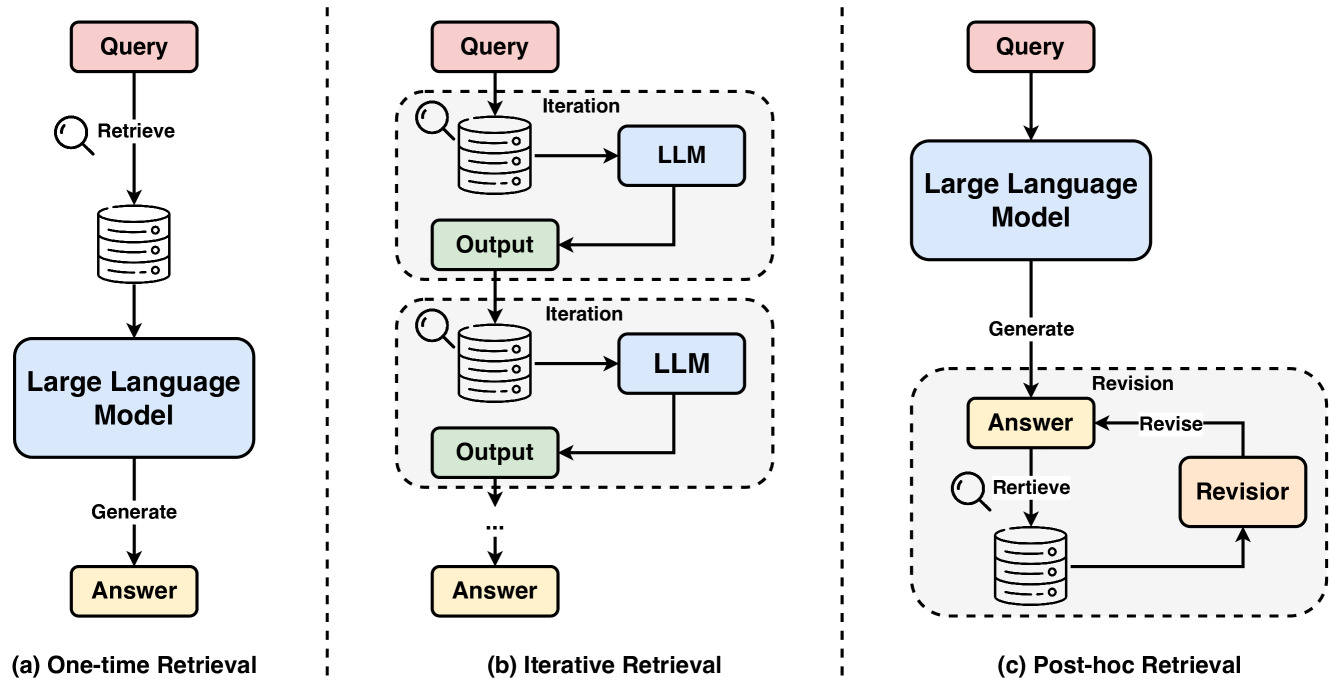

The image presents a comparative diagram illustrating three different architectures for Retrieval Augmented Generation (RAG) systems. These architectures are labeled (a) One-time Retrieval, (b) Iterative Retrieval, and (c) Post-hoc Retrieval. Each architecture depicts the flow of information from a "Query" to an "Answer" through various components including a database, a Large Language Model (LLM), and in some cases, a "Revisor". The diagrams are separated by vertical dashed lines.

### Components

The key components present in the diagrams are:

* **Query:** Represented by a red rectangle.

* **Retrieve:** Represented by a circular arrow.

* **Database:** Represented by a cylinder with stacked disks.

* **LLM (Large Language Model):** Represented by a rectangle labeled "LLM".

* **Output:** Represented by a rectangle labeled "Output".

* **Answer:** Represented by a rectangle labeled "Answer".

* **Iteration:** Label indicating a loop in the process.

* **Generate:** Label indicating the LLM generating an answer.

* **Revision:** Label indicating the revisor revising the answer.

* **Revisor:** Represented by a rectangle labeled "Revisor".

### Detailed Analysis

**(a) One-time Retrieval:**

* The process begins with a "Query".

* A "Retrieve" operation fetches data from the "Database".

* The retrieved data is fed into the "LLM".

* In the "Generate" step, the "LLM" produces an "Answer".

* The flow is linear and unidirectional.
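
The steps above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a reference implementation: the word-overlap `retrieve` function, the in-memory `DATABASE` list, the prompt layout, and the `fake_llm` placeholder are all hypothetical stand-ins for a real retriever, document store, and model call.

```python
import re
from typing import Callable, List, Set


def tokenize(text: str) -> Set[str]:
    """Lowercase the text and return its set of word tokens."""
    return set(re.findall(r"\w+", text.lower()))


def retrieve(query: str, database: List[str], k: int = 2) -> List[str]:
    """Toy retriever (assumption): rank documents by word overlap with the query."""
    query_tokens = tokenize(query)
    scored = [(len(query_tokens & tokenize(doc)), doc) for doc in database]
    # The sort is stable, so equally scored documents keep their database order.
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for score, doc in scored[:k] if score > 0]


def one_time_rag(query: str, llm: Callable[[str], str], database: List[str]) -> str:
    """Retrieve once, then generate once: Query -> Retrieve -> LLM -> Answer."""
    context = retrieve(query, database)
    prompt = "Context:\n" + "\n".join(context) + "\n\nQuestion: " + query
    return llm(prompt)


# Hypothetical in-memory stand-in for the "Database" component.
DATABASE = [
    "Paris is the capital of France.",
    "Berlin is the capital of Germany.",
    "The Seine flows through Paris.",
]


def fake_llm(prompt: str) -> str:
    """Placeholder for a real model call; it only echoes the prompt it received."""
    return "ANSWER given: " + prompt


print(one_time_rag("What is the capital of France?", fake_llm, DATABASE))
```

The LLM is called exactly once, and everything it knows about the database arrives in that single prompt.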

**(b) Iterative Retrieval:**

* The process starts with a "Query".

* A "Retrieve" operation fetches data from the "Database".

* The retrieved data and the "Query" are fed into the "LLM".

* The "LLM" produces an "Output".

* The "Output" then enters the "Iteration" loop, where it serves as a new "Query" to "Retrieve" more data from the "Database".

* This iterative process continues, refining the "Output" with each iteration, until an "Answer" is generated.

* The iteration is visually represented by a dashed loop encompassing the "Retrieve", "LLM", and "Output" components.
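
The loop described above might look roughly like the following sketch. The diagram does not say when the iteration stops, so the stopping rule here (a fixed `max_iterations` budget, or an output that no longer changes) is an assumption, as are the toy word-overlap `retrieve` function and the `llm(query, context)` signature.

```python
import re
from typing import Callable, List, Set


def tokenize(text: str) -> Set[str]:
    """Lowercase the text and return its set of word tokens."""
    return set(re.findall(r"\w+", text.lower()))


def retrieve(query: str, database: List[str], k: int = 1) -> List[str]:
    """Toy retriever (assumption): rank documents by word overlap with the query."""
    query_tokens = tokenize(query)
    scored = [(len(query_tokens & tokenize(doc)), doc) for doc in database]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for score, doc in scored[:k] if score > 0]


def iterative_rag(
    query: str,
    llm: Callable[[str, List[str]], str],
    database: List[str],
    max_iterations: int = 3,
) -> str:
    """Alternate retrieval and generation, feeding each output back as the next retrieval query."""
    retrieval_query = query  # the first retrieval uses the original query
    output = ""
    for _ in range(max_iterations):
        context = retrieve(retrieval_query, database)
        # The LLM sees the original query together with the freshly retrieved data.
        new_output = llm(query, context)
        if new_output == output:
            break  # assumed stop rule: the output has stopped changing
        output = new_output
        retrieval_query = output  # the output drives the next retrieval
    return output  # the last output is returned as the answer
```

A caller would pass in a function that wraps a real model, for example `iterative_rag("my question", my_llm, my_documents)`, where `my_llm` and `my_documents` are hypothetical names.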

**(c) Post-hoc Retrieval:**

* The process begins with a "Query".

* In the "Generate" step, the "LLM" produces an initial "Answer" directly from the "Query", before any retrieval takes place.

* The "Answer" is then sent to a "Revisor".

* The "Revisor" initiates a "Retrieve" operation from the "Database".

* In the "Revision" step, the "Revisor" uses the retrieved data to revise the initial "Answer".

* The flow includes a feedback loop from the "Revisor" back to the "Retrieve" operation, indicated by a dashed arrow.
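
As a rough sketch of this flow, the code below generates first and retrieves afterwards. The `max_rounds` limit on the feedback loop, the toy word-overlap `retrieve` function, and the `toy_llm` and `toy_revisor` placeholders are all assumptions made for illustration; a real revisor would typically be another model call that edits the draft against the evidence.

```python
import re
from typing import Callable, List, Set


def tokenize(text: str) -> Set[str]:
    """Lowercase the text and return its set of word tokens."""
    return set(re.findall(r"\w+", text.lower()))


def retrieve(query: str, database: List[str], k: int = 1) -> List[str]:
    """Toy retriever (assumption): rank documents by word overlap with the query."""
    query_tokens = tokenize(query)
    scored = [(len(query_tokens & tokenize(doc)), doc) for doc in database]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for score, doc in scored[:k] if score > 0]


def post_hoc_rag(
    query: str,
    llm: Callable[[str], str],
    revisor: Callable[[str, List[str]], str],
    database: List[str],
    max_rounds: int = 2,
) -> str:
    """Generate without retrieval, then let the revisor retrieve evidence and revise."""
    answer = llm(query)  # initial answer, produced from the query alone
    for _ in range(max_rounds):
        evidence = retrieve(answer, database)  # retrieval is keyed on the answer
        revised = revisor(answer, evidence)
        if revised == answer:
            break  # assumed stop rule: nothing left to revise
        answer = revised
    return answer


DATABASE = [
    "Canberra is the capital of Australia.",
    "Sydney is the largest city in Australia.",
]


def toy_llm(query: str) -> str:
    """Placeholder model that answers confidently but incorrectly."""
    return "The capital of Australia is Sydney."


def toy_revisor(answer: str, evidence: List[str]) -> str:
    """Placeholder revisor: adopt the top evidence passage if there is one."""
    return evidence[0] if evidence else answer


print(post_hoc_rag("What is the capital of Australia?", toy_llm, toy_revisor, DATABASE))
# prints: Canberra is the capital of Australia.
```

The LLM never sees the database in this design; only the revisor does, which is what makes the revision stage separable from generation.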

### Key Observations

* The diagrams highlight different strategies for integrating external knowledge (from the database) into the LLM's response generation process.

* The "Iterative Retrieval" architecture emphasizes a continuous refinement of the output through multiple retrieval-generation cycles.

* The "Post-hoc Retrieval" architecture separates the initial answer generation from the knowledge retrieval and revision stages.

* All three architectures share the "Query", "Database", "LLM", and "Answer" components; only (c) adds a "Revisor".

### Interpretation

The diagram compares three RAG architectures that differ in when retrieval happens relative to generation. "One-time Retrieval" is the simplest approach: a single retrieval step supplies the LLM with all external information before it generates. "Iterative Retrieval" lets the LLM refine its output over multiple rounds of interaction with the knowledge source. "Post-hoc Retrieval" suggests a modular design, in which a separate revisor component uses external knowledge to improve the LLM's initial response.

The choice of architecture depends on the specific application and the characteristics of the knowledge source. Iterative retrieval may suit complex queries where the information needed only becomes clear after a first round of generation, while post-hoc retrieval may help where the LLM's initial response needs to be fact-checked or augmented with additional information. The diagram serves as a compact visual summary of the three approaches.