## Diagram: Skill-Based Reasoning Process for an LLM

### Overview

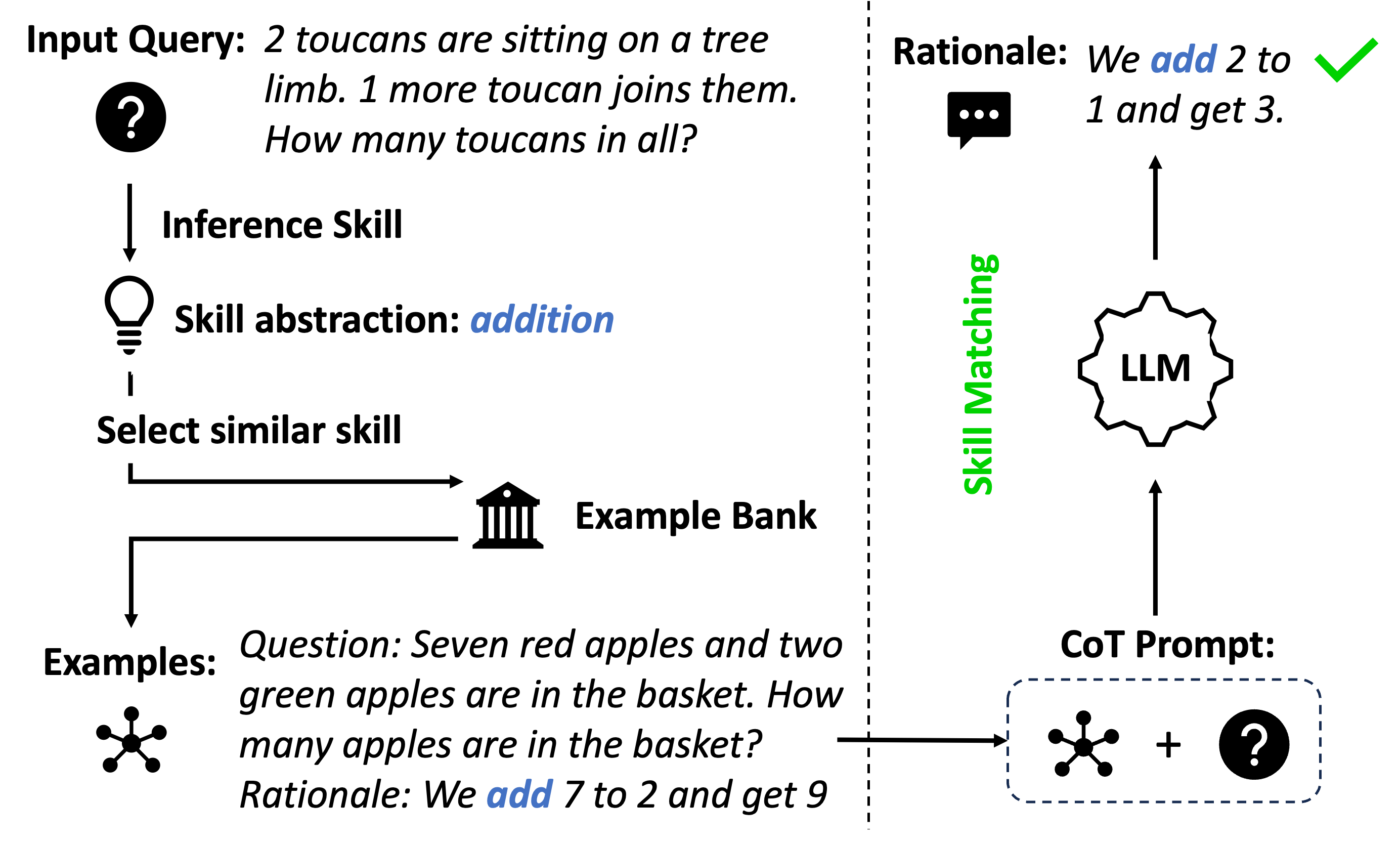

The image is a flowchart illustrating how a Large Language Model (LLM) solves a simple arithmetic word problem using a skill-based reasoning approach. A vertical dashed line divides the diagram into two sections. The left side shows how a skill is abstracted from the input query and used to retrieve relevant examples. The right side shows how the retrieved examples and the query are combined into a prompt from which the LLM generates a rationale and answer.

### Components/Axes

The diagram is composed of text labels, icons, and directional arrows on a light gray background.

**Left Section (Pre-processing / Skill Retrieval):**

1. **Top-Left:** An icon of a question mark inside a circle, labeled **"Input Query:"**. The query text is: *"2 toucans are sitting on a tree limb. 1 more toucan joins them. How many toucans in all?"*

2. **Below Input Query:** A downward arrow points to a box labeled **"Inference Skill"**.

3. **Below Inference Skill:** A lightbulb icon next to a box labeled **"Skill abstraction: addition"**. The word "addition" is in blue italic font.

4. **Below Skill Abstraction:** A box labeled **"Select similar skill"**.

5. **Right of Select Similar Skill:** A horizontal arrow points to an icon of a classical building (representing a bank or repository) labeled **"Example Bank"**.

6. **Below Example Bank:** A downward arrow points to a section labeled **"Examples:"** with a network/node icon. The example text is:

* *"Question: Seven red apples and two green apples are in the basket. How many apples are in the basket?"*

* *"Rationale: We add 7 to 2 and get 9"*. The word "add" is in blue italic font.

**Right Section (LLM Processing / Output):**

1. **Vertical Divider:** A dashed line separates the left and right sections. The text **"Skill Matching"** is written vertically in green along this line.

2. **Bottom-Right:** A box labeled **"CoT Prompt:"** (Chain-of-Thought Prompt). Inside a dashed rectangle, it shows the network/node icon (from "Examples") plus the question mark icon (from "Input Query"), connected by a plus sign.

3. **Above CoT Prompt:** An upward arrow points to a gear-shaped icon labeled **"LLM"**.

4. **Above LLM:** An upward arrow points to a speech bubble icon with three dots, labeled **"Rationale:"**. The rationale text is: *"We add 2 to 1 and get 3."* The word "add" is in blue italic font. A green checkmark is placed to the right of this text.

### Detailed Analysis

The diagram outlines a multi-step workflow:

1. **Query Ingestion:** The process begins with a natural language input query about toucans.

2. **Skill Abstraction:** The "Inference Skill" step abstracts the core mathematical skill the query requires, identified here as "addition".

3. **Example Retrieval:** Using the abstracted skill ("Select similar skill"), the system queries an "Example Bank" to find relevant demonstration examples. The retrieved example is a similar addition problem about apples.

4. **Prompt Construction:** A Chain-of-Thought (CoT) prompt is constructed by combining the retrieved example(s) with the original input query. This is represented by the network icon (examples) + question mark icon (query).

5. **LLM Inference:** This combined prompt is fed into an "LLM".

6. **Output Generation:** The LLM processes the prompt and generates a "Rationale" that mirrors the structure of the example, stating the reasoning step ("We add 2 to 1") and the final answer ("and get 3"). A green checkmark marks the answer as correct.
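The first four steps above can be sketched in Python. This is a minimal illustration only, not the implementation behind the diagram: the `Example` class, `EXAMPLE_BANK`, and the functions `abstract_skill`, `retrieve_examples`, and `build_cot_prompt` are all hypothetical names, and the keyword rule in `abstract_skill` merely stands in for whatever model performs the "Inference Skill" step. The LLM call itself is left out because the diagram does not specify it.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Example:
    skill: str
    question: str
    rationale: str


# Hypothetical example bank holding the single demonstration shown in the diagram.
EXAMPLE_BANK = [
    Example(
        skill="addition",
        question=(
            "Seven red apples and two green apples are in the basket. "
            "How many apples are in the basket?"
        ),
        rationale="We add 7 to 2 and get 9",
    ),
]


def abstract_skill(query: str) -> str:
    """Stand-in for the 'Inference Skill' step (assumption: a keyword rule)."""
    cues = ("more", "in all", "joins", "total")
    return "addition" if any(cue in query.lower() for cue in cues) else "unknown"


def retrieve_examples(skill: str, bank: list[Example]) -> list[Example]:
    """Return the bank entries whose skill label equals the abstracted skill."""
    return [ex for ex in bank if ex.skill == skill]


def build_cot_prompt(examples: list[Example], query: str) -> str:
    """Concatenate retrieved examples with the query (examples + query in the diagram)."""
    parts = [f"Question: {ex.question}\nRationale: {ex.rationale}" for ex in examples]
    parts.append(f"Question: {query}\nRationale:")
    return "\n\n".join(parts)


query = (
    "2 toucans are sitting on a tree limb. 1 more toucan joins them. "
    "How many toucans in all?"
)
skill = abstract_skill(query)
prompt = build_cot_prompt(retrieve_examples(skill, EXAMPLE_BANK), query)
print(prompt)
```

The resulting prompt ends with an open `Rationale:` line, which is where an LLM would be expected to continue in the same format as the retrieved example.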

### Key Observations

* **Color Coding:** Blue italics are consistently used to highlight the key operational verb ("add"/"addition") across the abstraction, example, and final rationale. Green is used for the "Skill Matching" label and the success checkmark.

* **Iconography:** Simple, universal icons (question mark, lightbulb, building, network, gear, speech bubble) are used to represent abstract concepts like query, idea, repository, data, processing, and output.

* **Flow Direction:** The flow is primarily top-to-bottom on the left (processing the query) and bottom-to-top on the right (generating the output), connected by the central "Skill Matching" process.

* **Structural Mirroring:** The final rationale ("We add 2 to 1 and get 3.") directly mirrors the format of the retrieved example's rationale ("We add 7 to 2 and get 9"), illustrating in-context learning from the demonstration.

### Interpretation

This diagram demonstrates a **modular, skill-augmented reasoning framework** for LLMs. Instead of relying solely on the LLM's parametric knowledge to solve a problem from scratch, the system:

1. **Decomposes** the problem into an abstract skill ("addition").

2. **Retrieves** a concrete, relevant example from an external bank that demonstrates that skill.

3. **Augments** the prompt with this example, providing the LLM with a clear template for the desired reasoning process (Chain-of-Thought).

The core insight is that by matching the *skill* required by a new query to *examples* of that skill, the system can guide the LLM to produce more reliable and structured reasoning. The green checkmark indicates that, for this query, the process yields the correct answer. The approach aims to improve performance on reasoning tasks by supplying explicit, task-specific guidance through retrieved examples, so the LLM has less to infer on its own. It represents a move towards more controlled and interpretable AI reasoning systems.
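The diagram does not say how "Select similar skill" measures similarity. As one possible illustration, the sketch below uses plain string similarity from Python's standard `difflib` module; a real system might well use embeddings or another method instead. The skill labels in `BANK_SKILLS`, the function name `select_similar_skill`, and the `cutoff` value are assumptions made for this example.

```python
import difflib

# Hypothetical skill labels under which examples are stored in the bank.
BANK_SKILLS = ["addition", "subtraction", "multiplication", "unit conversion"]


def select_similar_skill(abstracted, bank_skills, cutoff=0.6):
    """Return the bank skill closest to the abstracted skill, or None.

    Assumption: similarity is plain string similarity via difflib.
    """
    matches = difflib.get_close_matches(
        abstracted.lower(), bank_skills, n=1, cutoff=cutoff
    )
    return matches[0] if matches else None


print(select_similar_skill("adding", BANK_SKILLS))
print(select_similar_skill("zzz", BANK_SKILLS))
```

Returning `None` when nothing clears the cutoff lets the caller fall back to a prompt without retrieved examples rather than inserting an unrelated demonstration.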