## Diagram: Large Language Model with Chain-of-Thought (CoT) Prompting

### Overview

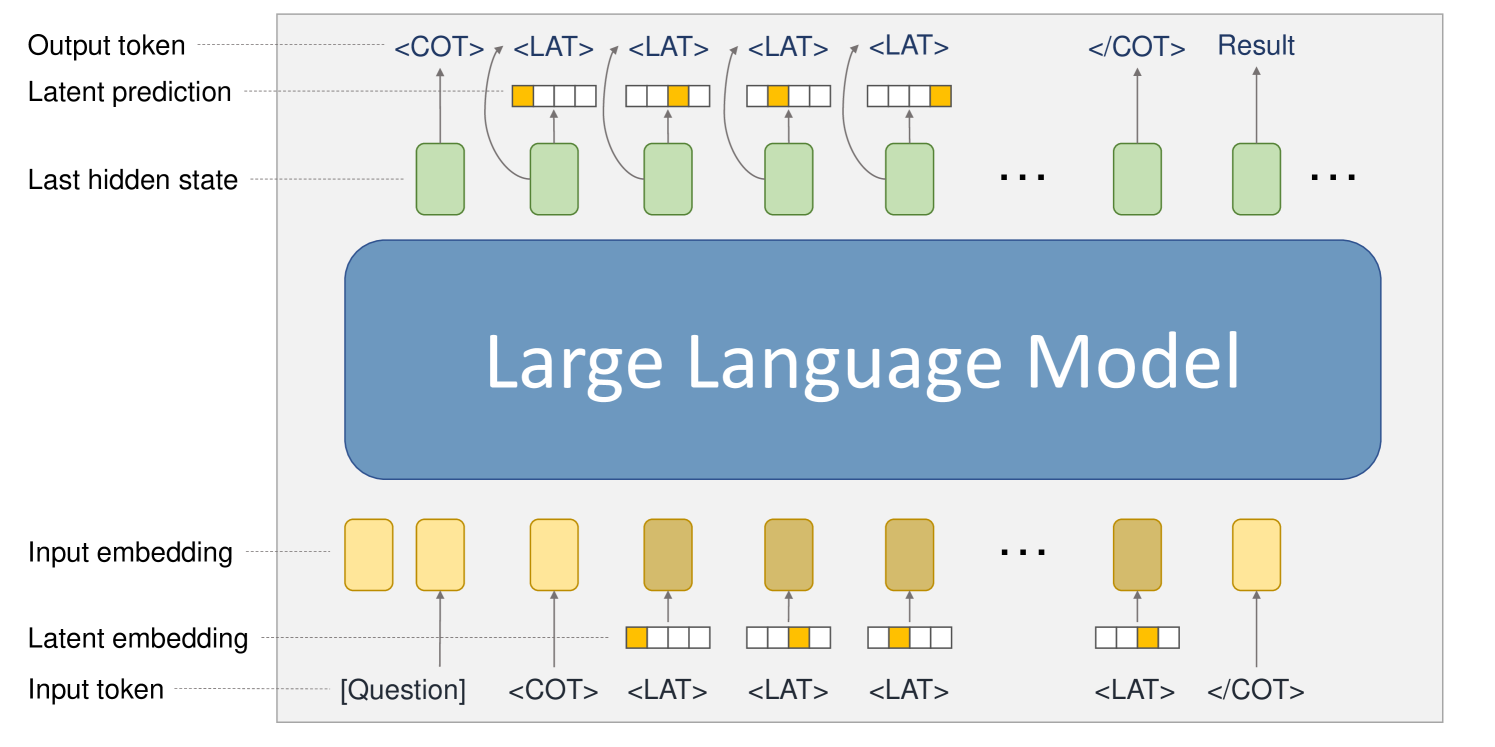

This diagram illustrates the process of a Large Language Model (LLM) generating a result using Chain-of-Thought (CoT) prompting. It depicts the flow of information from input tokens through the LLM's hidden states to output tokens, highlighting the role of latent embeddings and CoT tokens.

### Components

The diagram consists of three main sections:

1. **Input Layer:** Represents the input to the LLM: the initial question followed by the "`<COT>`" and "`<LAT>`" tokens.

2. **Large Language Model (LLM):** A large blue rectangle representing the core of the model.

3. **Output Layer:** Represents the generated output tokens, leading to the final result.

Key labels include:

* "Large Language Model"

* "Input token"

* "Latent embedding"

* "Last hidden state"

* "Latent prediction"

* "Output token"

* "[Question]"

* "`<COT>`"

* "`<LAT>`"

* "Result"

### Detailed Analysis

The diagram shows a sequential process.

**Input Layer:**

* The input begins with "[Question]", represented by a yellow rounded rectangle.

* Following the question, a series of "`<COT>`" and "`<LAT>`" tokens is appended. "`<COT>`" appears to be a Chain-of-Thought token, and "`<LAT>`" appears to be a latent token.

* Below the tokens is the label "Input token".

* Above the tokens is the label "Latent embedding".

* Arrows point upwards from the "Input token" to the "Latent embedding".
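The input layout described above can be sketched in a few lines. This is a minimal illustration rather than the diagram's actual implementation: the function names `build_input_sequence` and `latent_positions`, the example question, and the particular ordering of special tokens are all hypothetical, because the diagram does not specify them.

```python
# Special tokens as they appear in the diagram.
COT = "<COT>"
LAT = "<LAT>"


def build_input_sequence(question_tokens, slots):
    """Return the question tokens followed by the given special-token slots.

    `slots` is a hypothetical list of <COT>/<LAT> markers; the diagram does not
    say how many there are or in what order they appear.
    """
    for slot in slots:
        if slot not in (COT, LAT):
            raise ValueError(f"unexpected special token: {slot!r}")
    return list(question_tokens) + list(slots)


def latent_positions(sequence):
    """Return the indices of the <LAT> tokens in the sequence."""
    return [i for i, token in enumerate(sequence) if token == LAT]


# Example with an assumed question and an assumed slot ordering.
sequence = build_input_sequence(["What", "is", "2+2", "?"], [COT, LAT, LAT, COT])
```

Keeping the positions of the "`<LAT>`" tokens separate is a design choice made here for illustration: those are the positions where a latent embedding, rather than an ordinary token embedding, would plausibly enter the model.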

**Large Language Model (LLM):**

* The LLM is represented as a large blue rectangle.

* Inside the LLM, a series of green rounded rectangles represent "Last hidden state".

* Above each "Last hidden state" is a yellow rounded rectangle representing "Latent prediction".

* Arrows connect each "Latent prediction" to its "Last hidden state", which suggests a feedback loop or iterative refinement.

* The "`<COT>`" and "`<LAT>`" tokens are fed into the LLM, influencing the hidden states.
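One plausible reading of this feedback relationship is that, at a "`<LAT>`" position, the model consumes the previous step's latent prediction as its input embedding instead of looking up a token embedding. The sketch below illustrates that reading with a toy stand-in for the model. Everything in it is an assumption made for illustration: `token_embedding`, `toy_llm_step`, `run_sequence`, the vector size `DIM`, and the choice to use the last hidden state directly as the latent prediction are hypothetical and are not taken from the diagram.

```python
import math

DIM = 4  # hypothetical embedding / hidden-state width


def token_embedding(token):
    """Toy deterministic embedding; a stand-in for a learned lookup table."""
    seed = sum(ord(ch) for ch in token)
    return [math.sin(seed + i) for i in range(DIM)]


def toy_llm_step(embedding, previous_hidden):
    """Toy stand-in for one forward step; returns the last hidden state."""
    return [math.tanh(0.5 * e + 0.5 * h) for e, h in zip(embedding, previous_hidden)]


def run_sequence(sequence):
    """Process tokens in order, feeding latent predictions back in at <LAT>.

    Returns a list of (token, embedding_source, last_hidden_state) tuples,
    where embedding_source is "token" or "latent".
    """
    previous_hidden = None
    trace = []
    for token in sequence:
        if token == "<LAT>" and previous_hidden is not None:
            # Assumption: the latent prediction is the previous last hidden
            # state, reused unchanged as this step's input embedding.
            embedding, source = previous_hidden, "latent"
        else:
            # Ordinary tokens (and a leading <LAT> with nothing to feed back)
            # use the toy lookup.
            embedding, source = token_embedding(token), "token"
        state = previous_hidden if previous_hidden is not None else [0.0] * DIM
        hidden = toy_llm_step(embedding, state)
        trace.append((token, source, hidden))
        previous_hidden = hidden
    return trace


trace = run_sequence(["[Question]", "<COT>", "<LAT>", "<LAT>", "<COT>"])
```

In this sketch each "`<LAT>`" step depends only on continuous vectors produced by earlier steps, which is what makes the loop iterative. A real model would replace `toy_llm_step` with a full forward pass.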

**Output Layer:**

* The output is a sequence of "`<COT>`" and "`<LAT>`" tokens that ends with a final "`<COT>`" followed by "Result".

* Above the tokens is the label "Output token".

* Arrows point downwards from the "Latent prediction" to the "Output token".

* The final output is labeled "Result".
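Because the output sequence ends with a "`<COT>`" marker followed by the result, one simple way to recover the result is to take whatever follows the last "`<COT>`". The helper below is a minimal sketch under that assumption. The name `extract_result` and the example tokens are hypothetical, and the diagram does not say how the result is actually separated from the preceding tokens.

```python
COT = "<COT>"
LAT = "<LAT>"


def extract_result(output_tokens):
    """Return the tokens after the last <COT> marker, or [] if there is none."""
    if COT not in output_tokens:
        return []
    # Index of the last <COT>, found by searching the reversed list.
    last_cot = len(output_tokens) - 1 - output_tokens[::-1].index(COT)
    return output_tokens[last_cot + 1:]


# Example with an assumed output sequence whose result is the single token "4".
example_output = [COT, LAT, LAT, COT, "4"]
print(extract_result(example_output))  # prints ['4']
```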

The diagram uses "..." to indicate that the sequence of hidden states and tokens continues beyond what is explicitly shown.

### Key Observations

* The diagram emphasizes the iterative nature of LLM processing, with latent predictions influencing subsequent hidden states.

* The inclusion of "`<COT>`" and "`<LAT>`" tokens suggests a specific prompting strategy designed to elicit chain-of-thought reasoning and latent knowledge from the model.

* The diagram does not provide any numerical data or specific values. It is a conceptual illustration of the process.

### Interpretation

This diagram shows how Chain-of-Thought (CoT) prompting can be integrated into the input sequence of a Large Language Model to guide its reasoning. The "`<COT>`" tokens likely cue the model to generate intermediate reasoning steps, while the "`<LAT>`" tokens may stand for latent knowledge or concepts relevant to the question. The feedback loop between latent predictions and hidden states suggests that the model refines its reasoning over multiple steps, and the final "Result" is the output of that process, informed by both the initial question and the CoT/latent tokens.

The diagram is a high-level conceptual overview. It implies that the layout of the prompt shapes the model's behavior, but it does not specify the architecture or parameters of the LLM.