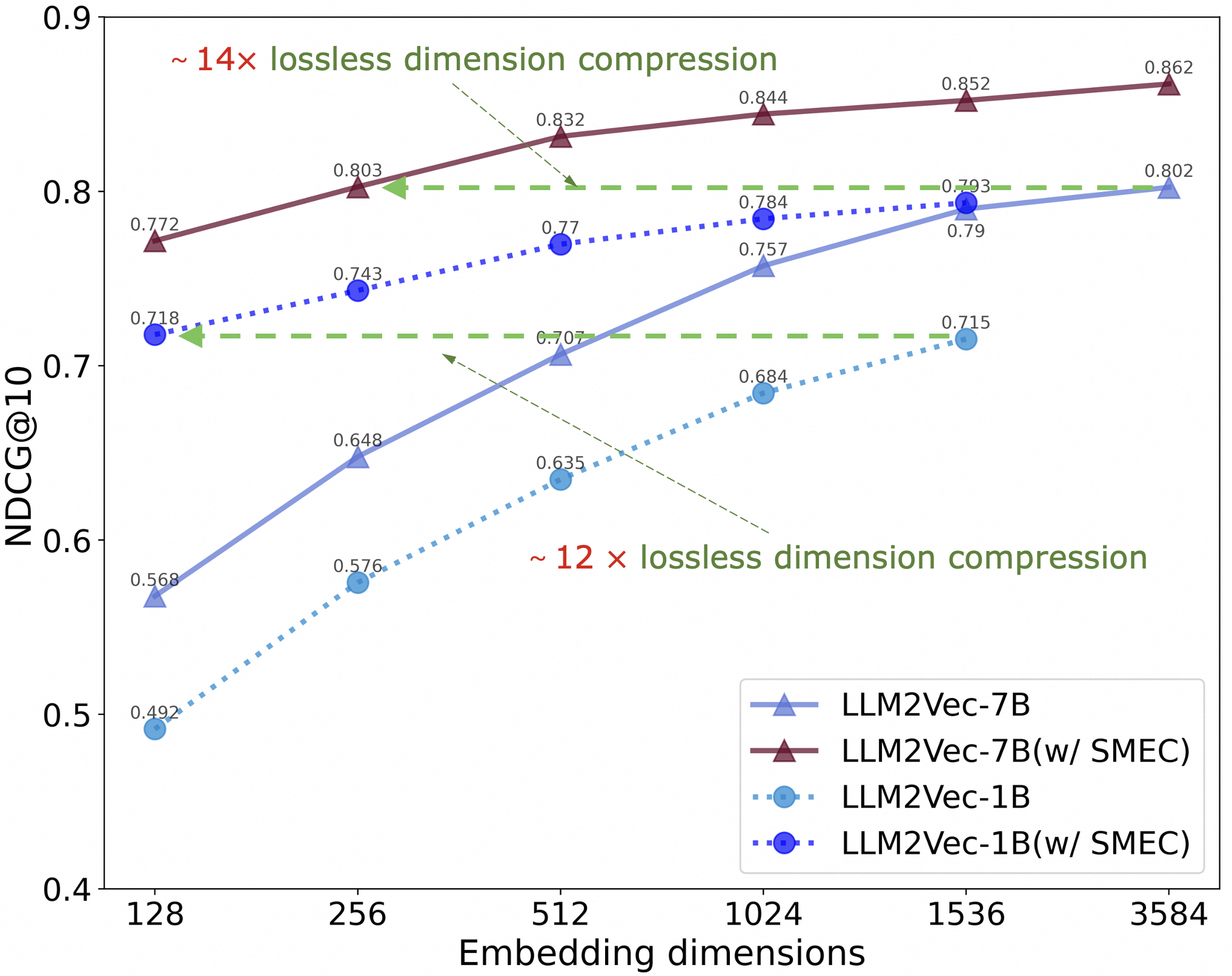

## Line Chart: Performance of LLM2Vec Models with and without SMEC Across Embedding Dimensions

### Overview

This image is a line chart comparing the performance (measured by NDCG@10) of four different model configurations as the embedding dimension increases. The chart demonstrates the impact of a technique called "SMEC" on two base models (LLM2Vec-7B and LLM2Vec-1B) and highlights significant "lossless dimension compression" capabilities.

### Components/Axes

* **X-Axis (Horizontal):** Labeled "Embedding dimensions". It has discrete, non-linearly spaced tick marks at the values: 128, 256, 512, 1024, 1536, and 3584.

* **Y-Axis (Vertical):** Labeled "NDCG@10". It is a linear scale ranging from 0.4 to 0.9, with major tick marks at 0.1 intervals (0.4, 0.5, 0.6, 0.7, 0.8, 0.9).

* **Legend:** Located in the bottom-right corner. It defines four data series:

1. `LLM2Vec-7B`: Represented by a solid light blue line with upward-pointing triangle markers.

2. `LLM2Vec-7B(w/ SMEC)`: Represented by a solid dark red/brown line with upward-pointing triangle markers.

3. `LLM2Vec-1B`: Represented by a dotted light blue line with circle markers.

4. `LLM2Vec-1B(w/ SMEC)`: Represented by a dotted dark blue line with circle markers.

* **Annotations:**

* Top-left area: Text "~ 14× lossless dimension compression" in dark red/brown, with a dashed green arrow pointing from the `LLM2Vec-7B(w/ SMEC)` line at dimension 256 to the `LLM2Vec-7B` line at dimension 3584 (3584 / 256 = 14).

* Center-right area: Text "~ 12 × lossless dimension compression" in dark red/brown, with a dashed green arrow pointing from the `LLM2Vec-1B(w/ SMEC)` line at dimension 128 to the `LLM2Vec-1B` line at dimension 1536.
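To make the y-axis metric concrete, here is a minimal sketch of one common formulation of NDCG@10 (linear gain, log2 rank discount). The chart does not state which variant its source uses, so the function names `dcg_at_k` and `ndcg_at_k` and the choice of linear gain are assumptions for illustration only.

```python
import math

def dcg_at_k(relevances, k=10):
    """Discounted cumulative gain of the first k relevance grades (linear gain)."""
    return sum(rel / math.log2(rank + 2) for rank, rel in enumerate(relevances[:k]))

def ndcg_at_k(ranked_relevances, judged_relevances, k=10):
    """DCG of the returned ranking divided by the DCG of an ideal ranking.

    ranked_relevances: relevance grades of the retrieved documents, in rank order.
    judged_relevances: relevance grades of all judged documents for the query,
                       used to build the ideal (best possible) ranking.
    """
    ideal = dcg_at_k(sorted(judged_relevances, reverse=True), k)
    if ideal == 0:
        return 0.0  # no relevant documents: define the score as 0
    return dcg_at_k(ranked_relevances, k) / ideal

# One relevant document, retrieved at rank 1 versus rank 2.
print(ndcg_at_k([1, 0], [1]))
print(ndcg_at_k([0, 1], [1]))
```

A score of 1.0 means the top 10 results are ordered as well as the relevance judgments allow; pushing relevant documents further down the ranking lowers the score.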

### Detailed Analysis

**Data Series and Trends:**

1. **LLM2Vec-7B (Light Blue, Solid Line, Triangles):**

* **Trend:** Slopes steeply upward, showing significant performance gains as dimensions increase, with the rate of improvement slowing at higher dimensions.

* **Data Points:**

* Dimension 128: NDCG@10 ≈ 0.568

* Dimension 256: NDCG@10 ≈ 0.648

* Dimension 512: NDCG@10 ≈ 0.707

* Dimension 1024: NDCG@10 ≈ 0.757

* Dimension 1536: NDCG@10 ≈ 0.79

* Dimension 3584: NDCG@10 ≈ 0.802

2. **LLM2Vec-7B(w/ SMEC) (Dark Red/Brown, Solid Line, Triangles):**

* **Trend:** Slopes gently upward. It starts at a much higher performance level than the base model and leads at every dimension, although the gap narrows from about 0.20 at 128 dimensions to about 0.06 at 3584.

* **Data Points:**

* Dimension 128: NDCG@10 ≈ 0.772

* Dimension 256: NDCG@10 ≈ 0.803

* Dimension 512: NDCG@10 ≈ 0.832

* Dimension 1024: NDCG@10 ≈ 0.844

* Dimension 1536: NDCG@10 ≈ 0.852

* Dimension 3584: NDCG@10 ≈ 0.862

3. **LLM2Vec-1B (Light Blue, Dotted Line, Circles):**

* **Trend:** Slopes upward, but stays below both 7B series at every dimension. The curve runs roughly parallel to the base 7B curve, about 0.07 to 0.08 lower from 128 to 1536 dimensions. This series has no point at 3584.

* **Data Points:**

* Dimension 128: NDCG@10 ≈ 0.492

* Dimension 256: NDCG@10 ≈ 0.576

* Dimension 512: NDCG@10 ≈ 0.635

* Dimension 1024: NDCG@10 ≈ 0.684

* Dimension 1536: NDCG@10 ≈ 0.715

4. **LLM2Vec-1B(w/ SMEC) (Dark Blue, Dotted Line, Circles):**

* **Trend:** Slopes gently upward. Like the 7B SMEC variant, it starts at a much higher performance than its base model and leads at every dimension, with the gap narrowing from about 0.23 at 128 dimensions to about 0.08 at 1536.

* **Data Points:**

* Dimension 128: NDCG@10 ≈ 0.718

* Dimension 256: NDCG@10 ≈ 0.743

* Dimension 512: NDCG@10 ≈ 0.77

* Dimension 1024: NDCG@10 ≈ 0.784

* Dimension 1536: NDCG@10 ≈ 0.793
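The gap between each SMEC series and its base model can be tabulated directly from the values listed above. The snippet below is a small sketch that only restates those approximate chart readings; the variable names and the helper `smec_lead` are illustrative, not part of any library.

```python
# Approximate NDCG@10 values as read off the chart (keys are embedding dimensions).
base_7b = {128: 0.568, 256: 0.648, 512: 0.707, 1024: 0.757, 1536: 0.79, 3584: 0.802}
smec_7b = {128: 0.772, 256: 0.803, 512: 0.832, 1024: 0.844, 1536: 0.852, 3584: 0.862}
base_1b = {128: 0.492, 256: 0.576, 512: 0.635, 1024: 0.684, 1536: 0.715}
smec_1b = {128: 0.718, 256: 0.743, 512: 0.77, 1024: 0.784, 1536: 0.793}

def smec_lead(base, smec):
    """Absolute NDCG@10 lead of the SMEC variant over its base model, per dimension."""
    return {dim: round(smec[dim] - base[dim], 3) for dim in sorted(base)}

lead_7b = smec_lead(base_7b, smec_7b)
lead_1b = smec_lead(base_1b, smec_1b)

for dim in sorted(lead_7b):
    # The 1B series has no point at 3584, so fall back to a placeholder.
    print(dim, lead_7b[dim], lead_1b.get(dim, "n/a"))
```

Under these readings the lead is largest at 128 dimensions and shrinks steadily as the dimension grows, for both model sizes.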

### Key Observations

1. **SMEC Provides a Major Boost:** For both the 1B and 7B models, applying SMEC results in a substantial performance increase at every embedding dimension. The SMEC variants (dark lines) are always above their corresponding base models (light lines).

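2. **Performance at Low Dimensions:** The largest improvement is at the lowest dimension (128), where SMEC lifts NDCG@10 by about 0.20 for the 7B model and about 0.23 for the 1B model. LLM2Vec-7B(w/ SMEC) at 256 dimensions (0.803) matches the base LLM2Vec-7B at 3584 dimensions (0.802) with 14× fewer dimensions. At 128 dimensions (0.772) it falls about 0.02 short of the base model at 1536 dimensions (0.79), so a 12× reduction comes close but is not strictly lossless for the 7B model.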

3. **Diminishing Returns:** All curves show diminishing returns; the performance gain from doubling the dimension decreases as the dimension grows larger.

4. **Model Size Comparison:** The 7B models consistently outperform their 1B counterparts, both with and without SMEC, indicating that larger base model capacity leads to better retrieval performance.

5. **Compression Claims:** The annotations explicitly state that SMEC enables "~14×" and "~12×" lossless dimension compression for the 7B and 1B models, respectively. This is visually supported by the dashed green arrows connecting a high-performing low-dimension SMEC point to a similarly performing high-dimension base model point.
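The two compression ratios can be checked against the approximate data points listed under "Detailed Analysis". The sketch below assumes "lossless" means that the SMEC score at a reduced dimension is at least the base model's score at its largest plotted dimension; that reading, and the helper name `lossless_compression`, are assumptions rather than definitions taken from the chart.

```python
# Approximate NDCG@10 values as read off the chart (keys are embedding dimensions).
base_7b = {128: 0.568, 256: 0.648, 512: 0.707, 1024: 0.757, 1536: 0.79, 3584: 0.802}
smec_7b = {128: 0.772, 256: 0.803, 512: 0.832, 1024: 0.844, 1536: 0.852, 3584: 0.862}
base_1b = {128: 0.492, 256: 0.576, 512: 0.635, 1024: 0.684, 1536: 0.715}
smec_1b = {128: 0.718, 256: 0.743, 512: 0.77, 1024: 0.784, 1536: 0.793}

def lossless_compression(base, smec):
    """Find the smallest SMEC dimension that matches the full-size base model.

    Returns (full_dim, smec_dim, ratio), or (full_dim, None, None) if no
    SMEC point reaches the base model's score at its largest dimension.
    """
    full_dim = max(base)
    target = base[full_dim]
    for dim in sorted(smec):
        if smec[dim] >= target:
            return full_dim, dim, full_dim / dim
    return full_dim, None, None

print(lossless_compression(base_7b, smec_7b))  # (3584, 256, 14.0)
print(lossless_compression(base_1b, smec_1b))  # (1536, 128, 12.0)
```

With these readings, the ~14× figure corresponds to 3584 / 256 for the 7B model and the ~12× figure to 1536 / 128 for the 1B model.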

### Interpretation

This chart presents a compelling case for the effectiveness of the SMEC technique in the context of dense retrieval models (LLM2Vec). The core message is one of **efficiency without sacrifice**.

* **What the data suggests:** SMEC allows models to reach high retrieval performance (high NDCG@10) with far fewer embedding dimensions. The compression is "lossless" in the sense that a SMEC model at a low dimension matches or slightly exceeds the same base model at its largest dimension: 7B with SMEC at 256 dims (≈ 0.803) versus 7B without SMEC at 3584 dims (≈ 0.802), and 1B with SMEC at 128 dims (≈ 0.718) versus 1B without SMEC at 1536 dims (≈ 0.715).

* **How elements relate:** The x-axis (dimensions) is a proxy for memory and computational cost. The y-axis (NDCG@10) is a proxy for quality. The SMEC lines demonstrate a superior Pareto frontier—better quality at every cost point. The gap between the SMEC and non-SMEC lines for a given model size quantifies the "free" performance gain from the technique.

* **Notable Implications:** The practical implication is significant. Deploying a retrieval system with SMEC could reduce vector storage by roughly an order of magnitude (about 12× to 14×) and lower the computational cost of similarity search, while matching the quality of the full-dimension base model. This makes high-performance retrieval more feasible for resource-constrained environments. The consistent results across two model scales (1B and 7B) suggest the technique is robust.
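The storage implication is simple arithmetic: for a flat index of dense vectors, size scales linearly with the embedding dimension. The sketch below uses a hypothetical corpus of 10 million vectors stored as 32-bit floats; both numbers are illustrative assumptions, not values from the chart, and real vector databases add index overhead that is ignored here.

```python
def index_size_gib(num_vectors, dim, bytes_per_value=4):
    """Raw size in GiB of num_vectors dense vectors of the given dimension."""
    return num_vectors * dim * bytes_per_value / 2**30

NUM_VECTORS = 10_000_000  # hypothetical corpus size, for illustration only

full_7b = index_size_gib(NUM_VECTORS, 3584)  # base 7B at full dimension
smec_7b = index_size_gib(NUM_VECTORS, 256)   # 7B with SMEC at 256 dimensions
full_1b = index_size_gib(NUM_VECTORS, 1536)  # base 1B at full dimension
smec_1b = index_size_gib(NUM_VECTORS, 128)   # 1B with SMEC at 128 dimensions

print(f"7B: {full_7b:.1f} GiB -> {smec_7b:.1f} GiB ({full_7b / smec_7b:.0f}x smaller)")
print(f"1B: {full_1b:.1f} GiB -> {smec_1b:.1f} GiB ({full_1b / smec_1b:.0f}x smaller)")
```

Because the size is linear in the dimension, the storage saving equals the dimension ratio regardless of corpus size; the cost of a brute-force dot-product search shrinks by the same factor.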