## Diagram: Neural Network Layer Connectivity Pattern

### Overview

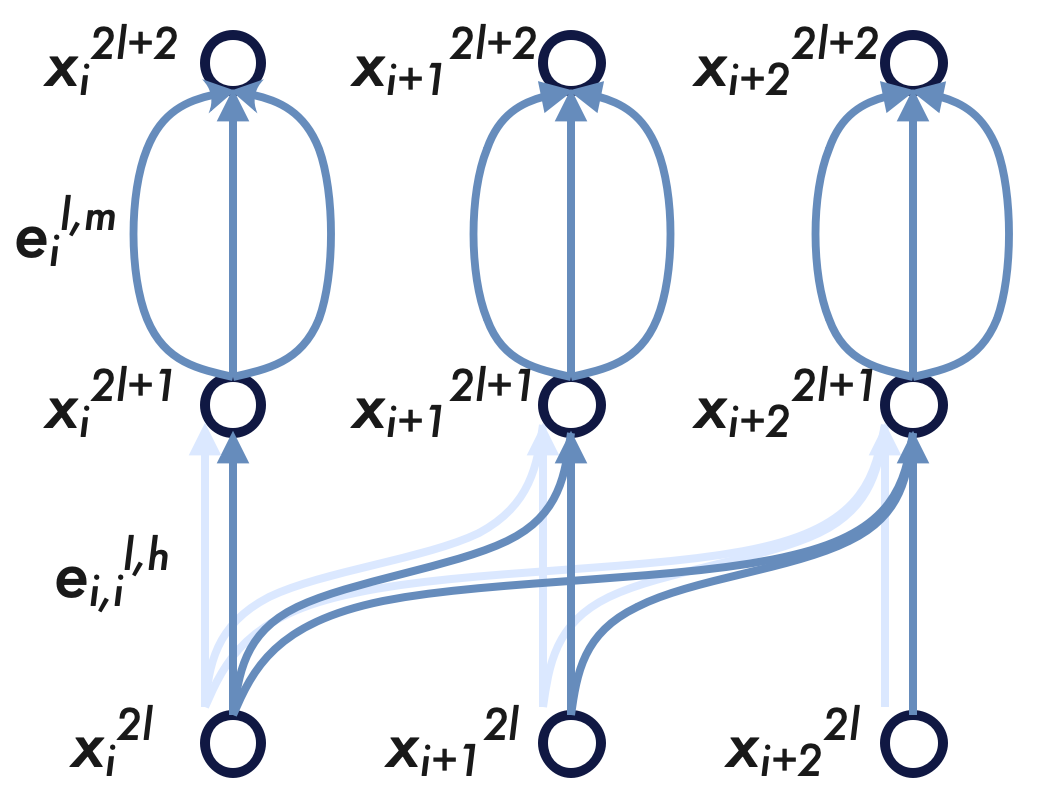

The image is a technical diagram of a connectivity pattern within a neural network, likely a single layer or block. It shows three parallel columns of nodes across three successive layers (`2l`, `2l+1`, `2l+2`). The diagram distinguishes vertical (within-column) connections from horizontal (cross-column) connections and labels nodes and edges separately.

### Components/Axes

The diagram is organized into three vertical columns and three horizontal layers (rows). There are no traditional axes, but the structure implies a flow from bottom to top.

**Nodes (Circles):**

* **Bottom Row (Layer 2l):** Three nodes labeled from left to right:

    * `x_i^{2l}`

    * `x_{i+1}^{2l}`

    * `x_{i+2}^{2l}`

* **Middle Row (Layer 2l+1):** Three nodes labeled from left to right:

    * `x_i^{2l+1}`

    * `x_{i+1}^{2l+1}`

    * `x_{i+2}^{2l+1}`

* **Top Row (Layer 2l+2):** Three nodes labeled from left to right:

    * `x_i^{2l+2}`

    * `x_{i+1}^{2l+2}`

    * `x_{i+2}^{2l+2}`

**Edges (Connections):**

* **Vertical Connections (Solid Blue Arrows):** Each node in the bottom row connects directly upward to the node directly above it in the middle row. Similarly, each node in the middle row connects directly upward to the node directly above it in the top row.

* **Horizontal/Skip Connections (Light Blue, Dashed Arrows):** These originate from the bottom row nodes and connect to middle row nodes in *different* columns.

    * From `x_i^{2l}` (bottom-left): Arrows point to `x_{i+1}^{2l+1}` (middle-center) and `x_{i+2}^{2l+1}` (middle-right).

    * From `x_{i+1}^{2l}` (bottom-center): An arrow points to `x_{i+2}^{2l+1}` (middle-right).

    * From `x_{i+2}^{2l}` (bottom-right): No outgoing horizontal connections are shown.

* **Loopback/Oval Connections (Solid Blue Ovals):** Each node in the middle row is enclosed within a vertical oval that connects back to itself, suggesting a recurrent or self-attention mechanism. This oval is labeled `e_i^{l,m}` for the leftmost column.

* **Edge Labels:**

    * The horizontal/skip connections are collectively labeled `e_{i,i}^{l,h}` near the bottom-left of the diagram.

    * The oval connection in the first column is labeled `e_i^{l,m}`.
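
The edges listed above can be written down as a small edge list. The following is a minimal sketch, not something taken from the diagram's source: the function name `build_edges` and the zero-based `(row, column)` encoding (rows 0, 1, 2 for layers `2l`, `2l+1`, `2l+2`; columns 0, 1, 2 for `i`, `i+1`, `i+2`) are assumptions made for illustration.

```python
def build_edges(columns=3):
    """Return (vertical, horizontal) edge lists as ((row, col), (row, col)) pairs.

    Hypothetical encoding: row 0 = layer 2l, row 1 = layer 2l+1, row 2 = layer 2l+2;
    column 0 = i, column 1 = i+1, column 2 = i+2.
    """
    # Vertical edges: every node feeds the node directly above it.
    vertical = [((r, c), (r + 1, c)) for r in range(2) for c in range(columns)]
    # Horizontal/skip edges: bottom row to middle row, only toward higher-index columns.
    horizontal = [((0, src), (1, dst))
                  for src in range(columns)
                  for dst in range(src + 1, columns)]
    return vertical, horizontal


vertical, horizontal = build_edges()
print(len(vertical), "vertical edges")
print(horizontal)
```

With three columns this gives six vertical edges and three horizontal edges, matching the arrows described in the list.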

### Detailed Analysis

**Spatial Grounding & Component Isolation:**

* **Top Region (Layer 2l+2):** Contains the three output nodes (`x_i^{2l+2}`, `x_{i+1}^{2l+2}`, `x_{i+2}^{2l+2}`). Each receives a single vertical input from the node below it.

* **Middle Region (Layer 2l+1):** Contains the three intermediate nodes (`x_i^{2l+1}`, `x_{i+1}^{2l+1}`, `x_{i+2}^{2l+1}`). Each node has:

    1. One vertical input from below.

    2. Horizontal/skip inputs from the bottom row: none for the left node, one for the center node (from `x_i^{2l}`), and two for the right node (from `x_i^{2l}` and `x_{i+1}^{2l}`).

    3. A self-looping oval connection.

* **Bottom Region (Layer 2l):** Contains the three input nodes (`x_i^{2l}`, `x_{i+1}^{2l}`, `x_{i+2}^{2l}`). Each node serves as a source for:

    1. One vertical connection to the node above.

    2. Horizontal/skip connections to middle-row nodes in higher-index columns: two from the left node, one from the center node, and none from the right node.

**Flow and Relationships:**

The primary data flow is vertical, from layer `2l` to `2l+1` to `2l+2`. The horizontal connections (`e_{i,i}^{l,h}`) introduce cross-talk or information sharing between columns at the `2l` to `2l+1` transition. The ovals (`e_i^{l,m}`) indicate a separate, likely recurrent or intra-column, processing step applied at the `2l+1` layer before passing information to `2l+2`.
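
The flow described above can be sketched as a tiny forward pass. The diagram does not say how inputs are combined or what the oval operation does, so everything numeric here is an assumption: inputs are summed, `w_vertical` and `w_horizontal` are hypothetical scalar weights standing in for the edge labels, and `middle_op` is a placeholder for the operation marked `e_i^{l,m}`.

```python
def forward(x_bottom, w_vertical=1.0, w_horizontal=0.5, middle_op=lambda v: v):
    """Propagate values from layer 2l through 2l+1 to 2l+2 (illustrative only)."""
    middle = []
    for j in range(len(x_bottom)):
        # Vertical input from the node directly below.
        total = w_vertical * x_bottom[j]
        # Horizontal/skip inputs from lower-index columns only (assumed summation).
        total += sum(w_horizontal * x_bottom[i] for i in range(j))
        # Placeholder for the oval operation applied at layer 2l+1.
        middle.append(middle_op(total))
    # Layer 2l+2 receives a single vertical input per column.
    top = list(middle)
    return middle, top


middle, top = forward([1.0, 2.0, 4.0])
print(middle)  # [1.0, 2.5, 5.5]
```

The leftmost value passes through unchanged because it has no horizontal inputs, while the rightmost value accumulates contributions from both columns to its left.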

### Key Observations

1. **Asymmetric Horizontal Connectivity:** The skip connections are not all-to-all. Information flows forward (from lower index `i` to higher indices `i+1`, `i+2`), but not backward. The leftmost column (`i`) sends horizontal outputs but receives none, and the rightmost column (`i+2`) receives horizontal inputs but sends none.

2. **Dual Processing at Middle Layer:** Nodes at layer `2l+1` are subject to two distinct operations: integration of vertical and horizontal inputs, followed by a self-looping operation (`e_i^{l,m}`).

3. **Consistent Labeling Scheme:** The superscripts (`2l`, `2l+1`, `2l+2`) denote sequential layers. The subscripts (`i`, `i+1`, `i+2`) denote the column or feature index. In the edge labels, `l` appears to give the layer context, `h` to mark the horizontal connections, and `m` to mark the middle-layer operation.
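
The asymmetry in observation 1 can be checked with a connectivity mask. This is a sketch under an assumed encoding: `horizontal_mask` is a hypothetical helper, and `mask[dst][src]` is 1 when a horizontal edge runs from bottom-row column `src` to middle-row column `dst`.

```python
def horizontal_mask(columns=3):
    """Strictly lower-triangular mask: an edge exists only when src < dst."""
    return [[1 if src < dst else 0 for src in range(columns)]
            for dst in range(columns)]


mask = horizontal_mask()
# Outgoing horizontal edges per source column (i, i+1, i+2).
sent = [sum(mask[dst][src] for dst in range(3)) for src in range(3)]
# Incoming horizontal edges per destination column (i, i+1, i+2).
received = [sum(row) for row in mask]
print(sent)      # [2, 1, 0]
print(received)  # [0, 1, 2]
```

The counts mirror each other: the column that sends the most horizontal edges receives none, and the column that receives the most sends none.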

### Interpretation

This diagram represents a **neural network building block with mixed connectivity**. It combines:

* **Standard Feedforward Pathways:** The vertical connections.

* **Cross-Column Gating or Mixing:** The forward-directed horizontal connections (`e_{i,i}^{l,h}`) allow information from earlier positions to influence later positions in the subsequent layer, akin to mechanisms in convolutional or transformer models.

* **Intra-Column Recurrence or Refinement:** The self-looping ovals (`e_i^{l,m}`) suggest that each intermediate node undergoes an iterative update or self-attention process before its output is finalized.

The structure resembles architectures designed for sequence processing (such as Temporal Convolutional Networks or certain Transformer variants) and graph neural networks in which nodes aggregate information from neighbors. The asymmetry in the horizontal connections implies a causal or directional constraint: at this stage, information from later positions (`i+2`) cannot affect earlier ones (`i`, `i+1`). Overall, the diagram specifies how information is routed across both depth (layers) and width (columns/features).
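
The directional constraint can be illustrated with a small perturbation test. The mixing rule below is an assumption chosen for simplicity (each position adds a weighted sum of lower-index inputs to its own value); `mix` and `w_h` are hypothetical names, not part of the diagram.

```python
def mix(x, w_h=0.5):
    """Hypothetical rule: own value plus weighted values from lower-index columns only."""
    return [x[j] + w_h * sum(x[:j]) for j in range(len(x))]


base = mix([1.0, 2.0, 4.0])
perturbed = mix([1.0, 2.0, 40.0])  # change only the last position (i+2)
# True where the output is unaffected by the change at position i+2.
unchanged = [b == p for b, p in zip(base, perturbed)]
print(unchanged)  # [True, True, False]
```

Changing the input at `i+2` alters only the output at `i+2`, which is the behavior a forward-only connectivity pattern would be expected to produce.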