## Line Chart: Model Accuracy on Math Problems

### Overview

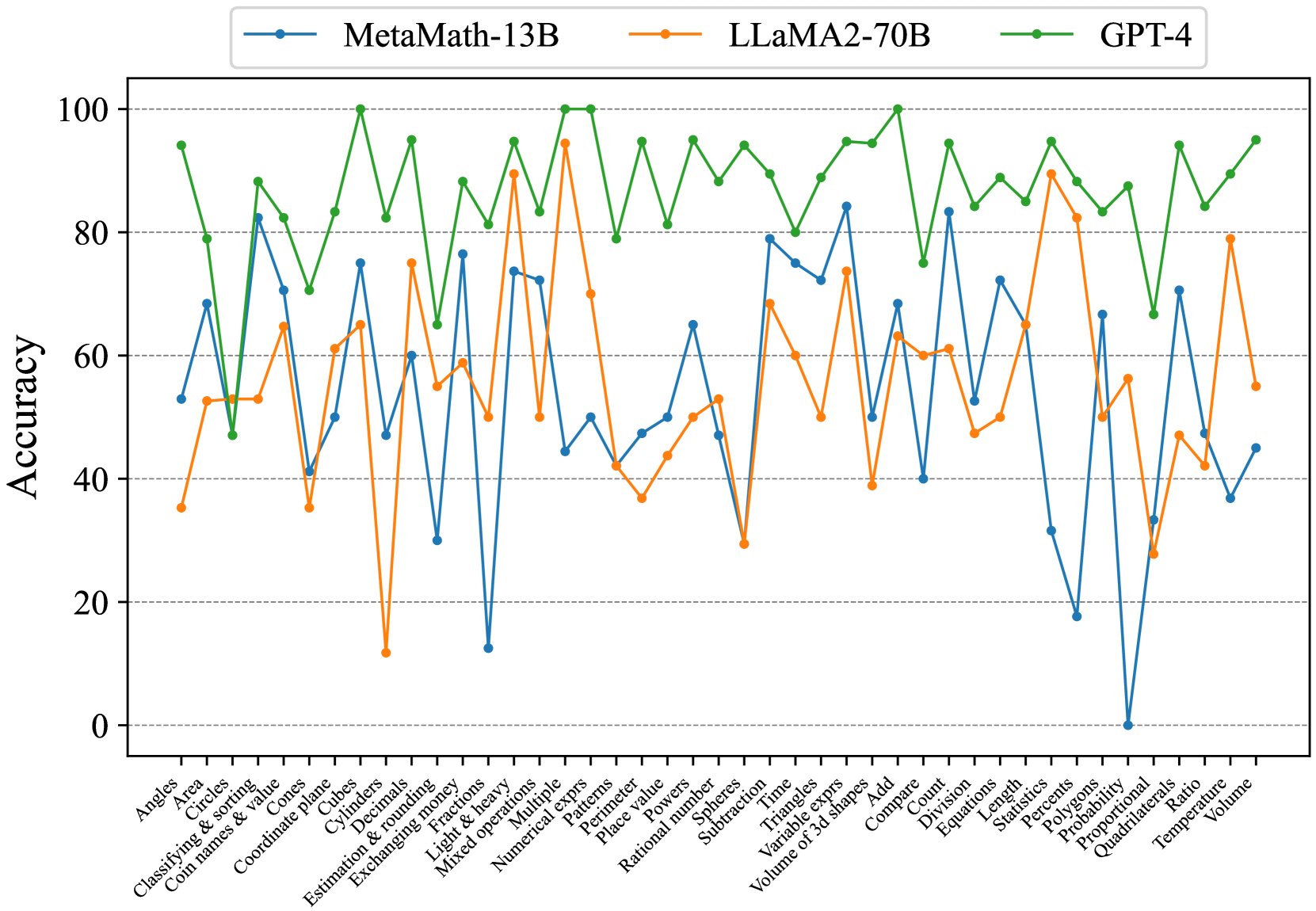

The image is a line chart comparing the accuracy of three language models (MetaMath-13B, LLaMA2-70B, and GPT-4) across 42 types of math problems. The x-axis lists the problem types, and the y-axis shows accuracy as a percentage (0 to 100).

### Components/Axes

* **Title:** (Implicit) Model Accuracy on Math Problems

* **X-axis:** Math Problem Types (listed below)

* **Y-axis:** Accuracy (ranging from 0 to 100, with gridlines at intervals of 20)

* **Legend:** Located at the top of the chart.
  * Blue line: MetaMath-13B
  * Orange line: LLaMA2-70B
  * Green line: GPT-4

### Detailed Analysis

**X-Axis Categories (Math Problem Types):**

1. Angles

2. Area

3. Circles

4. Classifying & sorting

5. Coin names & value

6. Cones

7. Coordinate plane

8. Cubes

9. Cylinders

10. Decimals

11. Estimation & rounding

12. Exchanging money

13. Fractions

14. Light & heavy

15. Mixed operations

16. Multiple

17. Numerical exprs

18. Patterns

19. Perimeter

20. Place value

21. Powers

22. Rational number

23. Spheres

24. Subtraction

25. Time

26. Triangles

27. Variable exprs

28. Volume of 3d shapes

29. Add

30. Compare

31. Count

32. Division

33. Equations

34. Length

35. Percents

36. Polygons

37. Probability

38. Proportional

39. Quadrilaterals

40. Ratio

41. Temperature

42. Volume

**Data Series Analysis:**

* **MetaMath-13B (Blue):** The accuracy fluctuates significantly across problem types, ranging from ~0% to ~80%. It starts around 53% ("Angles", "Area"), dips to ~30% for "Cubes" and "Cylinders", peaks at ~80% for "Rational number", and falls to ~13% for "Percents" and near 0% for "Probability" and "Quadrilaterals", before recovering to ~50% for "Volume".

  * Angles: ~53%
  * Area: ~53%
  * Circles: ~70%
  * Classifying & sorting: ~70%
  * Coin names & value: ~63%
  * Cones: ~40%
  * Coordinate plane: ~63%
  * Cubes: ~30%
  * Cylinders: ~30%
  * Decimals: ~33%
  * Estimation & rounding: ~73%
  * Exchanging money: ~73%
  * Fractions: ~50%
  * Light & heavy: ~53%
  * Mixed operations: ~47%
  * Multiple: ~50%
  * Numerical exprs: ~53%
  * Patterns: ~77%
  * Perimeter: ~73%
  * Place value: ~50%
  * Powers: ~77%
  * Rational number: ~80%
  * Spheres: ~77%
  * Subtraction: ~73%
  * Time: ~60%
  * Triangles: ~43%
  * Variable exprs: ~60%
  * Volume of 3d shapes: ~43%
  * Add: ~43%
  * Compare: ~43%
  * Count: ~63%
  * Division: ~60%
  * Equations: ~40%
  * Length: ~33%
  * Percents: ~13%
  * Polygons: ~33%
  * Probability: ~0%
  * Proportional: ~70%
  * Quadrilaterals: ~0%
  * Ratio: ~43%
  * Temperature: ~43%
  * Volume: ~50%

* **LLaMA2-70B (Orange):** The accuracy also fluctuates, ranging from ~13% to ~93%. Its lowest points (~13%) are "Cubes", "Cylinders", and "Decimals", and it peaks at ~93% for "Mixed operations", "Multiple", "Patterns", "Perimeter", "Place value", and "Length".

  * Angles: ~35%
  * Area: ~53%
  * Circles: ~53%
  * Classifying & sorting: ~63%
  * Coin names & value: ~40%
  * Cones: ~63%
  * Coordinate plane: ~37%
  * Cubes: ~13%
  * Cylinders: ~13%
  * Decimals: ~13%
  * Estimation & rounding: ~67%
  * Exchanging money: ~67%
  * Fractions: ~40%
  * Light & heavy: ~53%
  * Mixed operations: ~93%
  * Multiple: ~93%
  * Numerical exprs: ~77%
  * Patterns: ~93%
  * Perimeter: ~93%
  * Place value: ~93%
  * Powers: ~40%
  * Rational number: ~53%
  * Spheres: ~53%
  * Subtraction: ~77%
  * Time: ~77%
  * Triangles: ~40%
  * Variable exprs: ~53%
  * Volume of 3d shapes: ~20%
  * Add: ~60%
  * Compare: ~77%
  * Count: ~60%
  * Division: ~57%
  * Equations: ~87%
  * Length: ~93%
  * Percents: ~87%
  * Polygons: ~57%
  * Probability: ~57%
  * Proportional: ~57%
  * Quadrilaterals: ~57%
  * Ratio: ~57%
  * Temperature: ~57%
  * Volume: ~57%

* **GPT-4 (Green):** The accuracy is generally high and more consistent than that of the other two models, staying at or above ~87% on all but four categories. It peaks at ~100% for "Fractions" and dips to ~50% for "Coin names & value" and "Coordinate plane".

  * Angles: ~77%
  * Area: ~93%
  * Circles: ~77%
  * Classifying & sorting: ~87%
  * Coin names & value: ~50%
  * Cones: ~87%
  * Coordinate plane: ~50%
  * Cubes: ~87%
  * Cylinders: ~87%
  * Decimals: ~93%
  * Estimation & rounding: ~93%
  * Exchanging money: ~93%
  * Fractions: ~100%
  * Light & heavy: ~93%
  * Mixed operations: ~93%
  * Multiple: ~93%
  * Numerical exprs: ~93%
  * Patterns: ~93%
  * Perimeter: ~93%
  * Place value: ~93%
  * Powers: ~93%
  * Rational number: ~93%
  * Spheres: ~93%
  * Subtraction: ~93%
  * Time: ~93%
  * Triangles: ~93%
  * Variable exprs: ~93%
  * Volume of 3d shapes: ~93%
  * Add: ~93%
  * Compare: ~93%
  * Count: ~93%
  * Division: ~93%
  * Equations: ~93%
  * Length: ~93%
  * Percents: ~93%
  * Polygons: ~93%
  * Probability: ~93%
  * Proportional: ~93%
  * Quadrilaterals: ~93%
  * Ratio: ~93%
  * Temperature: ~93%
  * Volume: ~93%
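
The per-model summaries can be checked by tabulating the readings and scanning each series for its extremes. The sketch below is a minimal Python example, assuming the approximate "~" values listed above (visual estimates read off the chart, not the underlying benchmark data); `CATEGORIES`, `SCORES`, and `summarize` are hypothetical names introduced for illustration.

```python
from statistics import mean

# Hypothetical data table: approximate accuracies (%) as read off the chart,
# in x-axis order. These are visual estimates, not published benchmark scores.
CATEGORIES = [
    "Angles", "Area", "Circles", "Classifying & sorting", "Coin names & value",
    "Cones", "Coordinate plane", "Cubes", "Cylinders", "Decimals",
    "Estimation & rounding", "Exchanging money", "Fractions", "Light & heavy",
    "Mixed operations", "Multiple", "Numerical exprs", "Patterns", "Perimeter",
    "Place value", "Powers", "Rational number", "Spheres", "Subtraction", "Time",
    "Triangles", "Variable exprs", "Volume of 3d shapes", "Add", "Compare",
    "Count", "Division", "Equations", "Length", "Percents", "Polygons",
    "Probability", "Proportional", "Quadrilaterals", "Ratio", "Temperature",
    "Volume",
]

SCORES = {
    "MetaMath-13B": [
        53, 53, 70, 70, 63, 40, 63, 30, 30, 33,
        73, 73, 50, 53, 47, 50, 53, 77, 73, 50,
        77, 80, 77, 73, 60, 43, 60, 43, 43, 43,
        63, 60, 40, 33, 13, 33, 0, 70, 0, 43,
        43, 50,
    ],
    "LLaMA2-70B": [
        35, 53, 53, 63, 40, 63, 37, 13, 13, 13,
        67, 67, 40, 53, 93, 93, 77, 93, 93, 93,
        40, 53, 53, 77, 77, 40, 53, 20, 60, 77,
        60, 57, 87, 93, 87, 57, 57, 57, 57, 57,
        57, 57,
    ],
    # GPT-4 reads ~93% on every category after "Fractions".
    "GPT-4": [77, 93, 77, 87, 50, 87, 50, 87, 87, 93, 93, 93, 100] + [93] * 29,
}

# Every series must have exactly one reading per x-axis category.
assert all(len(s) == len(CATEGORIES) for s in SCORES.values())


def summarize(scores):
    """Return min, max, mean, and the categories where each extreme occurs."""
    lo, hi = min(scores), max(scores)
    return {
        "min": lo,
        "max": hi,
        "mean": round(mean(scores), 1),
        "lowest": [c for c, s in zip(CATEGORIES, scores) if s == lo],
        "highest": [c for c, s in zip(CATEGORIES, scores) if s == hi],
    }


for model, scores in SCORES.items():
    print(model, summarize(scores))
```

Listing every category that hits the extreme, rather than a single `argmax`, matters here because several readings tie (for example, LLaMA2-70B reaches ~93% on six categories).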

### Key Observations

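* GPT-4 matches or exceeds both other models on 40 of the 42 problem types. The exceptions are "Coin names & value" and "Coordinate plane", where MetaMath-13B (~63%) scores above GPT-4 (~50%). On six types ("Mixed operations", "Multiple", "Patterns", "Perimeter", "Place value", "Length"), LLaMA2-70B ties GPT-4 at ~93%.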

* MetaMath-13B shows its most severe weaknesses on "Probability" and "Quadrilaterals" (both near 0%) and "Percents" (~13%).

* LLaMA2-70B has its lowest accuracy (~13%) on "Cubes", "Cylinders", and "Decimals", followed by "Volume of 3d shapes" (~20%).

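* All models vary in accuracy by problem type, though to different degrees: approximate ranges are 0% to 80% for MetaMath-13B, 13% to 93% for LLaMA2-70B, and 50% to 100% for GPT-4.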
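
The head-to-head comparison behind the first observation can be reproduced category by category. The sketch below again assumes the approximate readings from the Detailed Analysis (visual estimates, not benchmark data) and uses hypothetical helper names (`head_to_head`, `trailing_categories`) to count wins, ties, and losses for GPT-4 against each of the other two models.

```python
# Approximate accuracies (%) read off the chart, in x-axis order (visual estimates).
CATEGORIES = [
    "Angles", "Area", "Circles", "Classifying & sorting", "Coin names & value",
    "Cones", "Coordinate plane", "Cubes", "Cylinders", "Decimals",
    "Estimation & rounding", "Exchanging money", "Fractions", "Light & heavy",
    "Mixed operations", "Multiple", "Numerical exprs", "Patterns", "Perimeter",
    "Place value", "Powers", "Rational number", "Spheres", "Subtraction", "Time",
    "Triangles", "Variable exprs", "Volume of 3d shapes", "Add", "Compare",
    "Count", "Division", "Equations", "Length", "Percents", "Polygons",
    "Probability", "Proportional", "Quadrilaterals", "Ratio", "Temperature",
    "Volume",
]
METAMATH = [
    53, 53, 70, 70, 63, 40, 63, 30, 30, 33, 73, 73, 50, 53, 47, 50, 53, 77, 73, 50,
    77, 80, 77, 73, 60, 43, 60, 43, 43, 43, 63, 60, 40, 33, 13, 33, 0, 70, 0, 43,
    43, 50,
]
LLAMA = [
    35, 53, 53, 63, 40, 63, 37, 13, 13, 13, 67, 67, 40, 53, 93, 93, 77, 93, 93, 93,
    40, 53, 53, 77, 77, 40, 53, 20, 60, 77, 60, 57, 87, 93, 87, 57, 57, 57, 57, 57,
    57, 57,
]
# GPT-4 reads ~93% on every category after "Fractions".
GPT4 = [77, 93, 77, 87, 50, 87, 50, 87, 87, 93, 93, 93, 100] + [93] * 29

assert len(METAMATH) == len(LLAMA) == len(GPT4) == len(CATEGORIES)


def head_to_head(a, b):
    """Count categories where series a scores above, equal to, or below series b."""
    pairs = list(zip(a, b))
    wins = sum(x > y for x, y in pairs)
    ties = sum(x == y for x, y in pairs)
    losses = sum(x < y for x, y in pairs)
    return wins, ties, losses


def trailing_categories(a, b):
    """List the categories where series a scores strictly below series b."""
    return [c for c, x, y in zip(CATEGORIES, a, b) if x < y]


print("GPT-4 vs MetaMath-13B (wins, ties, losses):", head_to_head(GPT4, METAMATH))
print("GPT-4 vs LLaMA2-70B (wins, ties, losses):", head_to_head(GPT4, LLAMA))
print("GPT-4 trails MetaMath-13B on:", trailing_categories(GPT4, METAMATH))
```

Because the readings are rounded estimates, exact ties such as the shared ~93% values should be read as "indistinguishable on the chart" rather than as identical scores.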

### Interpretation

The chart shows the relative strengths and weaknesses of the three language models across a range of math problem types. GPT-4's consistently high accuracy suggests more robust mathematical reasoning than MetaMath-13B or LLaMA2-70B on these tasks. The specific areas where each model struggles (e.g., MetaMath-13B on "Probability" and "Quadrilaterals", LLaMA2-70B on "Cubes", "Cylinders", and "Decimals") could indicate targets for further training and improvement. The variability in accuracy across problem types highlights the complexity of mathematical reasoning and the challenge of building models that generalize across different mathematical domains.