## Diagram: Federated Learning Process

### Overview

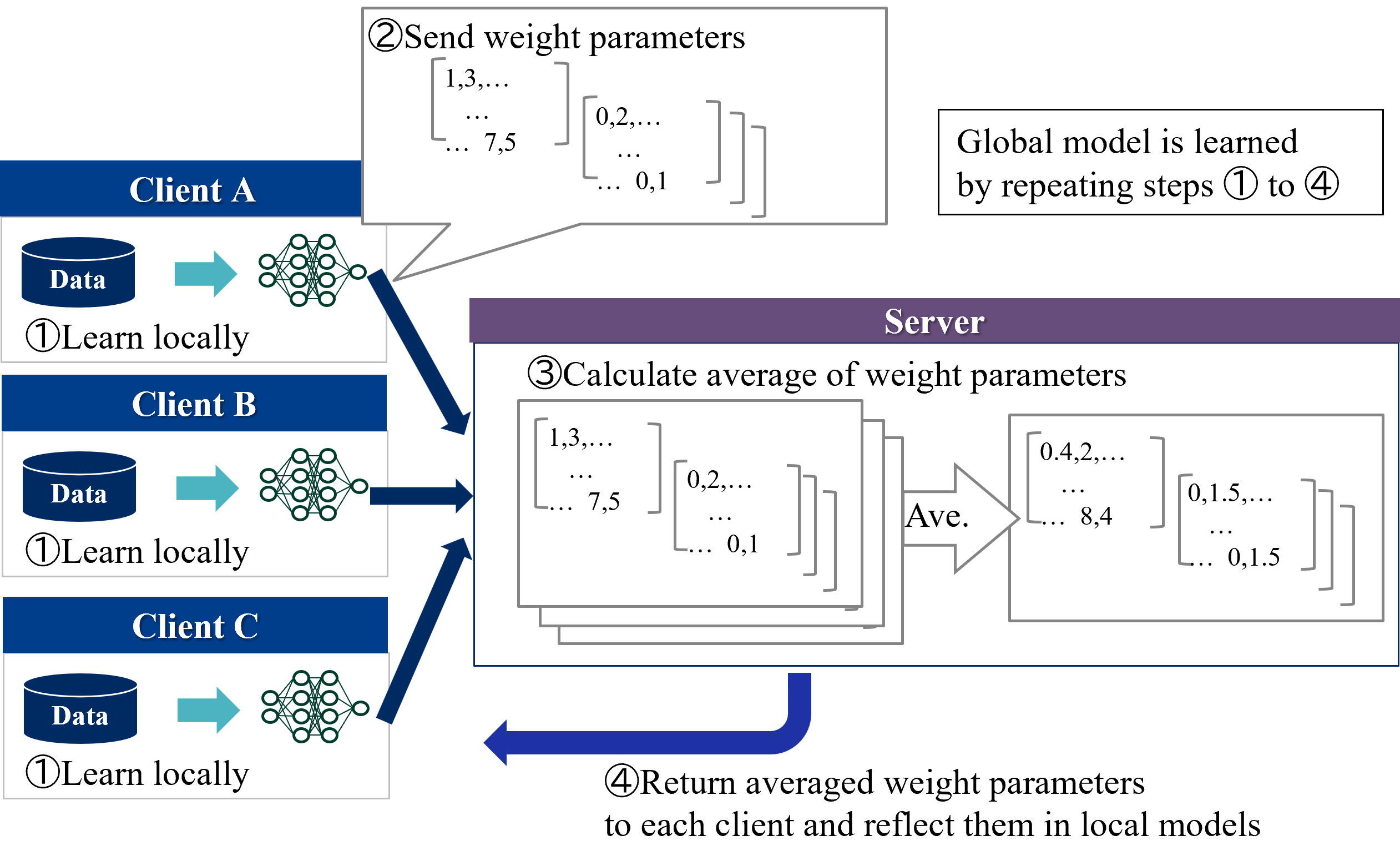

The image illustrates the federated learning process. Multiple clients each train a model locally on their own data and send the resulting weight parameters to a central server. The server averages these parameters and sends the averaged parameters back to the clients, which update their local models with them. Repeating this cycle produces a shared global model.

### Components/Axes

* **Clients:** Client A, Client B, and Client C. Each client has its own data and a local model (represented as a neural network).

* **Server:** A central server that aggregates the weight parameters from the clients.

* **Steps:**

  1. Learn locally: Each client trains a model locally on its own data.

  2. Send weight parameters: Each client sends its model's weight parameters to the server.

  3. Calculate average of weight parameters: The server calculates the average of the received weight parameters.

  4. Return averaged weight parameters: The server sends the averaged weight parameters back to each client, which then reflects them in its local model.

* **Data Representation:** Weight parameters are represented as matrices. Example values shown include 1.3, 7.5, 0.2, 0.1, 0.4, and 8.4, plus two entries rendered as "0.1.5".
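The averaging in step 3 is an element-wise mean: for K clients with weight matrices W_1, W_2, ..., W_K of the same shape, the server computes (W_1 + W_2 + ... + W_K) / K. A minimal sketch in plain Python, assuming each client's weights arrive as a nested list of floats; the function name `average_weights` and the sample values are hypothetical and not taken from the diagram.

```python
# Hypothetical helper: element-wise average of equally shaped weight matrices,
# i.e. step 3 "Calculate average of weight parameters" in the diagram.

def average_weights(client_weights: list[list[list[float]]]) -> list[list[float]]:
    """Return the element-wise mean of K matrices given as nested lists."""
    if not client_weights:
        raise ValueError("no client weights received")
    rows, cols = len(client_weights[0]), len(client_weights[0][0])
    for m in client_weights:
        # Averaging only makes sense if every client uses the same architecture.
        if len(m) != rows or any(len(row) != cols for row in m):
            raise ValueError("all weight matrices must have the same shape")
    k = len(client_weights)
    return [
        [sum(m[i][j] for m in client_weights) / k for j in range(cols)]
        for i in range(rows)
    ]


# Illustrative (made-up) 2x2 weights from clients A, B, and C.
w_a = [[1.0, 2.0], [3.0, 4.0]]
w_b = [[3.0, 4.0], [5.0, 6.0]]
w_c = [[2.0, 3.0], [4.0, 5.0]]
print(average_weights([w_a, w_b, w_c]))  # [[2.0, 3.0], [4.0, 5.0]]
```

The shape check reflects an implicit requirement of the diagram: every client must train the same model architecture, otherwise there is no one-to-one correspondence between parameters to average.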

### Detailed Analysis or Content Details

* **Client A:**

  * Has a "Data" storage icon.

  * Performs "① Learn locally" using its data to train a neural network.

  * Sends weight parameters to the server in step "② Send weight parameters". The parameters are represented as matrices with example values like 1.3, 7.5, 0.2, and 0.1.

* **Client B:**

  * Has a "Data" storage icon.

  * Performs "① Learn locally" using its data to train a neural network.

  * Sends weight parameters to the server.

* **Client C:**

  * Has a "Data" storage icon.

  * Performs "① Learn locally" using its data to train a neural network.

  * Sends weight parameters to the server.

* **Server:**

  * Receives weight parameters from all clients.

  * Performs "③ Calculate average of weight parameters", combining the clients' weight matrices into a single averaged set. The averaged parameters are represented as matrices with example values such as 0.4, 8.4, and two entries rendered as "0.1.5".

  * Performs "④ Return averaged weight parameters to each client and reflect them in local models".
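Steps ② to ④ can be sketched as a single server round: collect parameters, average them, and send the same result back to every client. This is a minimal sketch under stated assumptions: each model's weights are flattened into a list of floats, `Client` and `server_round` are hypothetical names, and the `_data` field merely stands in for the local dataset, which is never passed to the server.

```python
from dataclasses import dataclass, field


@dataclass
class Client:
    """Hypothetical client holding private data and a local weight vector."""
    name: str
    weights: list[float]
    _data: list = field(default_factory=list, repr=False)  # stays on the client

    def send_weights(self) -> list[float]:
        # Step 2: only a copy of the parameters leaves the client.
        return list(self.weights)

    def reflect(self, averaged: list[float]) -> None:
        # Step 4: overwrite the local model with the server's averaged parameters.
        self.weights = list(averaged)


def server_round(clients: list[Client]) -> list[float]:
    # Step 2 (server side): collect one parameter vector per client.
    received = [c.send_weights() for c in clients]
    if len({len(w) for w in received}) != 1:
        raise ValueError("clients sent parameter vectors of different sizes")
    # Step 3: element-wise average across clients.
    averaged = [sum(values) / len(received) for values in zip(*received)]
    # Step 4: return the same averaged parameters to every client.
    for c in clients:
        c.reflect(averaged)
    return averaged


clients = [
    Client("A", [0.0, 3.0]),
    Client("B", [3.0, 6.0]),
    Client("C", [6.0, 0.0]),
]
print(server_round(clients))         # [3.0, 3.0]
print([c.weights for c in clients])  # [[3.0, 3.0], [3.0, 3.0], [3.0, 3.0]]
```

Each client receives its own copy of the averaged vector, so later local training on one client cannot silently modify another client's model.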

### Key Observations

* The process is iterative, with steps 1 to 4 being repeated to refine the global model.

* The weight parameters are represented as matrices, and the server calculates the average of these matrices.

* The clients update their local models with the averaged weight parameters.
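The iterative loop described in these observations can be illustrated with a toy problem. This sketch assumes a one-parameter model y = w * x, a few epochs of plain gradient descent as the "learn locally" step, an unweighted average on the server as in the diagram, and a stopping rule based on how much the averaged weight changes between rounds. The data, learning rate, tolerance, and function names are all hypothetical.

```python
# Toy federated averaging loop (hypothetical setup, not the diagram's model):
# each client fits y = w * x to private data and only sends its scalar w.

def local_train(w: float, data: list[tuple[float, float]],
                lr: float = 0.05, epochs: int = 5) -> float:
    """Step 1: a few epochs of gradient descent on the client's own data."""
    for _ in range(epochs):
        # Gradient of the mean squared error (1/n) * sum((w*x - y)^2) w.r.t. w.
        grad = sum(2 * (w * x - y) * x for x, y in data) / len(data)
        w -= lr * grad
    return w


def federated_training(client_data: dict[str, list[tuple[float, float]]],
                       max_rounds: int = 100, tol: float = 1e-9) -> tuple[float, int]:
    """Repeat steps 1-4 until the averaged weight stops changing."""
    w_global = 0.0
    for rnd in range(1, max_rounds + 1):
        # Steps 1-2: each client starts from the current global weight,
        # trains locally, and sends back only the updated weight.
        local = [local_train(w_global, data) for data in client_data.values()]
        # Step 3: the server takes the plain (unweighted) average.
        w_new = sum(local) / len(local)
        # Step 4: the average becomes every client's starting point next round.
        delta = abs(w_new - w_global)
        w_global = w_new
        if delta < tol:
            return w_global, rnd
    return w_global, max_rounds


# Hypothetical private datasets, all consistent with y = 2x.
data = {
    "A": [(1.0, 2.0), (2.0, 4.0)],
    "B": [(3.0, 6.0)],
    "C": [(0.5, 1.0), (1.5, 3.0)],
}
w, rounds = federated_training(data)
print(f"w = {w:.6f} after {rounds} rounds")
```

Because every client's data here follows y = 2x, all local optima coincide and the loop settles near w = 2. With heterogeneous client data and several local epochs, the averaged model is not guaranteed to match the model that would be trained on the pooled data.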

### Interpretation

The diagram illustrates the federated learning process, which allows multiple clients to collaboratively train a global model without sharing their raw data. This is achieved by averaging the weight parameters of the local models on a central server. The process is iterative, with the clients and server repeatedly exchanging weight parameters until the global model converges. This approach is particularly useful when data is distributed across multiple devices or organizations and cannot be easily centralized due to privacy or regulatory constraints. The example values provided for the weight parameters are illustrative and would vary depending on the specific model and data being used.