## Federated Learning Architecture Diagram

### Overview

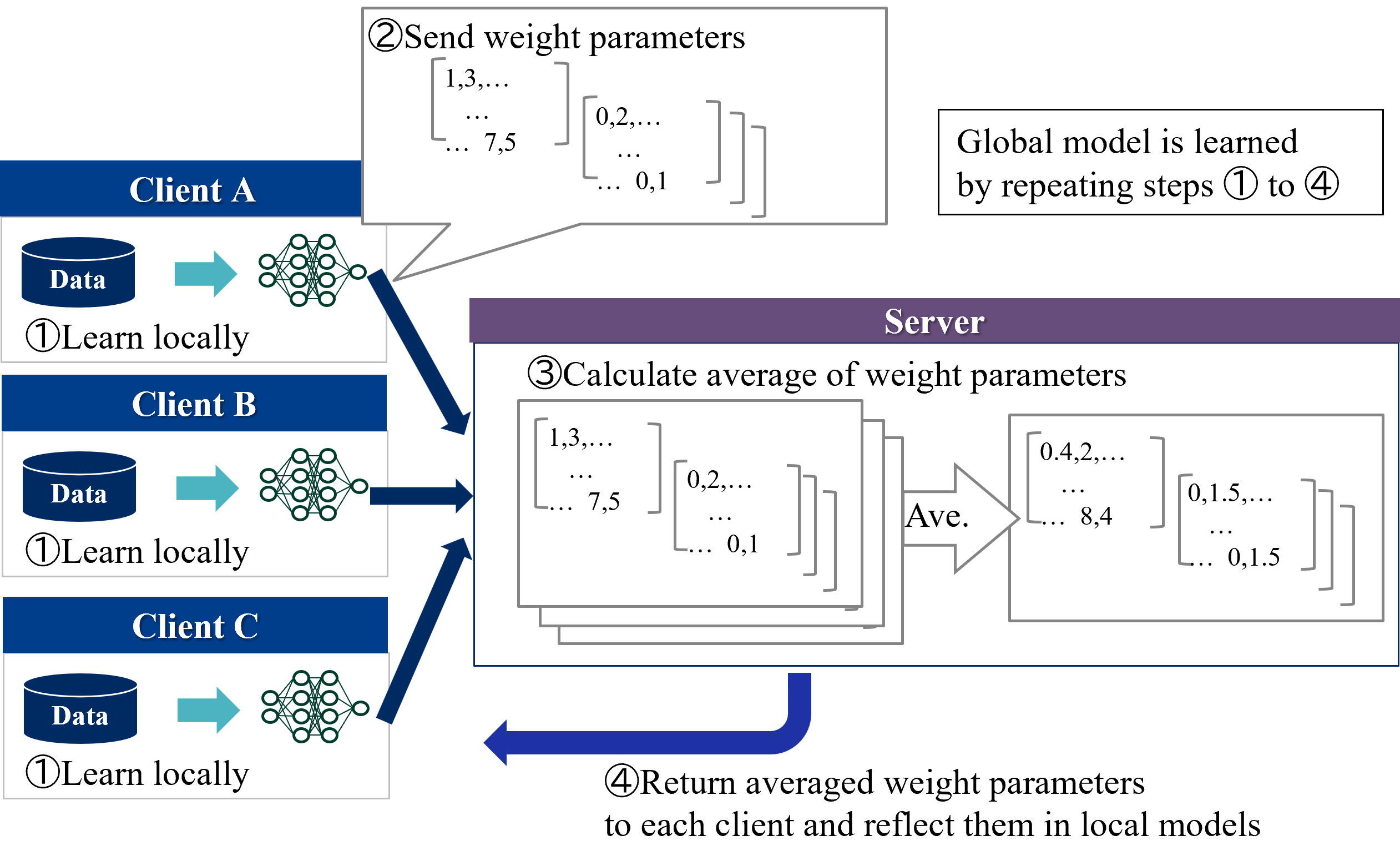

The diagram illustrates a federated learning workflow involving three clients (A, B, C) and a central server. It depicts four steps, repeated iteratively, for training a shared model collaboratively without moving raw data to a central location.

### Components

1. **Clients (A, B, C)**:

   - Stacked vertically on the left side of the diagram

   - Each client contains:

     - Data container (blue cylinder icon)

     - Neural network diagram (green nodes and edges)

     - Step 1 label: "Learn locally" (①)

2. **Server**:

   - Positioned on the right side of the diagram

   - Contains:

     - Step 2: "Send weight parameters" (②)

     - Step 3: "Calculate average of weight parameters" (③)

     - Step 4: "Return averaged weight parameters" (④)

3. **Flow Arrows**:

   - Blue arrows connect clients to server (Step 2)

   - Purple arrow connects server back to clients (Step 4)

   - Dashed gray arrow indicates iterative repetition

4. **Text Elements**:

   - Speech bubble in Step 2 shows parameter examples:

     - `[1,3,...]`, `[0,2,...]`, `[7,5]`, `[... 0,1]`

   - Server output shows averaged parameters:

     - `[0.4,2,...]`, `[0,1.5,...]`, `[8,4]`, `[... 0,1.5]`
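The averaging in step 3 can be sketched as an element-wise mean over the clients' parameter lists. This is a minimal illustration, not the figure's actual method: the function name `average_parameters`, the nested-list layout, and all numeric values are assumptions made up for the example, since the values in the figure are truncated.

```python
def average_parameters(client_params):
    """Element-wise simple mean over clients.

    client_params: one entry per client; each entry is a list of layers,
    and each layer is a flat list of floats. All clients are assumed to
    share the same layer shapes (hypothetical layout for this sketch).
    """
    n_clients = len(client_params)
    averaged = []
    for layers in zip(*client_params):  # the same layer from every client
        averaged.append([sum(vals) / n_clients for vals in zip(*layers)])
    return averaged


# Made-up values for three clients with two layers each
# (NOT read from the figure, whose examples are truncated).
client_a = [[1.0, 3.0], [7.0, 5.0]]
client_b = [[0.0, 2.0], [8.0, 4.0]]
client_c = [[2.0, 1.0], [9.0, 3.0]]

global_params = average_parameters([client_a, client_b, client_c])
print(global_params)  # [[1.0, 2.0], [8.0, 4.0]]
```

The result has the same shape as each client's parameters, which is what allows the server to send it back unchanged in step 4.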

### Detailed Analysis

- **Client Operations**:

  - Each client independently trains a local model (①)

  - Neural network architecture appears identical across clients

  - Each client has its own data container, so local datasets may differ from client to client

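- **Server Operations**:

  - Aggregates weight parameters from all clients (③)

  - Averages them, per the step 3 label; a displayed value changes from 1 to 0.4 after averaging, but the truncated examples do not establish whether the mean is simple or weighted

  - Returns the averaged parameters to all participants (④)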

- **Iterative Process**:

  - Dashed arrow indicates cyclical nature of steps ①-④

  - Implies continuous model improvement through repetition
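The four-step cycle can be sketched end to end with a toy model. The example below assumes a one-parameter linear model `y = w * x` trained by gradient descent, a simple unweighted mean on the server, and made-up datasets; the names `local_train` and `run_round` are hypothetical, and none of these details come from the figure.

```python
def local_train(w, data, lr=0.01, epochs=5):
    """Step 1: a client refines the shared weight using only its own data."""
    for _ in range(epochs):
        # Gradient of the mean squared error for the model y = w * x.
        grad = sum(2 * (w * x - y) * x for x, y in data) / len(data)
        w -= lr * grad
    return w


def run_round(global_w, client_datasets):
    """One pass through steps 1-4 for all clients."""
    # Steps 1-2: every client trains locally, then sends only its weight.
    local_ws = [local_train(global_w, data) for data in client_datasets]
    # Step 3: the server averages the received weights (simple mean here).
    averaged_w = sum(local_ws) / len(local_ws)
    # Step 4: the averaged weight is returned as the next starting point.
    return averaged_w


# Hypothetical local datasets of (x, y) pairs, all roughly following y = 3x.
clients = [
    [(1.0, 3.0), (2.0, 6.0)],
    [(1.0, 3.1), (3.0, 8.9)],
    [(2.0, 5.8), (4.0, 12.2)],
]

w = 0.0
for round_idx in range(20):  # the dashed arrow: repeat the cycle
    w = run_round(w, clients)

print(round(w, 2))
```

With these assumed datasets the shared weight settles close to 3 after a few rounds, even though the server never receives any `(x, y)` pair.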

### Key Observations

1. **Decentralized Data Handling**:

   - No raw data leaves client devices (only model parameters)

   - Preserves privacy while enabling collaboration

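2. **Parameter Averaging**:

   - Server averages the clients' weight parameters (step 3 label: "Calculate average of weight parameters")

   - Example: a leading value of 1 becomes 0.4 after averaging; this is consistent with either a simple mean or a mean weighted by client contribution, and the figure does not say which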

3. **Architectural Symmetry**:

   - Identical neural network structures across clients

   - Uniform communication protocol with server
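To make the difference between a simple mean and a weighted mean concrete, the sketch below compares the two for a single parameter. It assumes weights proportional to each client's number of training samples, which is the choice made by the FedAvg algorithm; the figure does not state a weighting scheme, and the parameter values and sample counts here are invented for illustration.

```python
def simple_mean(values):
    """Every client counts equally."""
    return sum(values) / len(values)


def weighted_mean(values, sample_counts):
    """Each client counts in proportion to its number of local samples."""
    total = sum(sample_counts)
    return sum(v * n for v, n in zip(values, sample_counts)) / total


# Hypothetical first weight reported by clients A, B, C.
first_weights = [1.0, 0.0, 0.5]
# Hypothetical number of training samples held by each client.
sample_counts = [100, 300, 600]

print(simple_mean(first_weights))                   # 0.5
print(weighted_mean(first_weights, sample_counts))  # 0.4

# A simple mean can land on the same value with different client inputs.
print(round(simple_mean([1.0, 0.0, 0.2]), 3))       # 0.4
```

The last line shows why a single averaged value such as 0.4 cannot by itself reveal which kind of mean produced it: both schemes can reach it, depending on what the other clients sent.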

### Interpretation

This diagram demonstrates the core mechanics of federated learning:

1. **Privacy Preservation**: Clients retain raw data while sharing only model updates

2. **Collaborative Intelligence**: Diverse data sources contribute to a shared model

3. **Iterative Refinement**: Repeated cycles improve model accuracy through aggregation

The architecture suggests:

- Potential for handling non-IID data distributions

- Scalability to many clients through parameter averaging

- Trade-off between communication overhead and model accuracy

The figure labels the aggregation only as an average, so any weighting by client contribution size is an inference rather than something the diagram shows. The neural networks are drawn with multiple hidden layers, suggesting moderate model complexity: enough capacity for non-trivial pattern recognition while keeping the number of parameters exchanged each round manageable.
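The communication-overhead trade-off mentioned above can be made concrete with a back-of-the-envelope estimate. The sketch assumes every client uploads and downloads the full parameter set each round, with 4 bytes per parameter (32-bit floats) and no compression; the function name `bytes_per_round`, the model size, and the round count are hypothetical.

```python
def bytes_per_round(num_clients, num_params, bytes_per_param=4):
    """Traffic for one round: uploads (step 2) plus downloads (step 4)."""
    upload = num_clients * num_params * bytes_per_param
    download = num_clients * num_params * bytes_per_param
    return upload + download


# Assumed setup: 3 clients, a 1,000,000-parameter model, 50 training rounds.
total_bytes = bytes_per_round(num_clients=3, num_params=1_000_000) * 50
print(total_bytes / 1e9, "GB")  # 1.2 GB
```

Under these assumptions traffic grows linearly with the number of clients, the model size, and the number of rounds, which is why more rounds for higher accuracy come at a direct communication cost.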