## Diagram: Neural Architecture Interpretability Comparison

### Overview

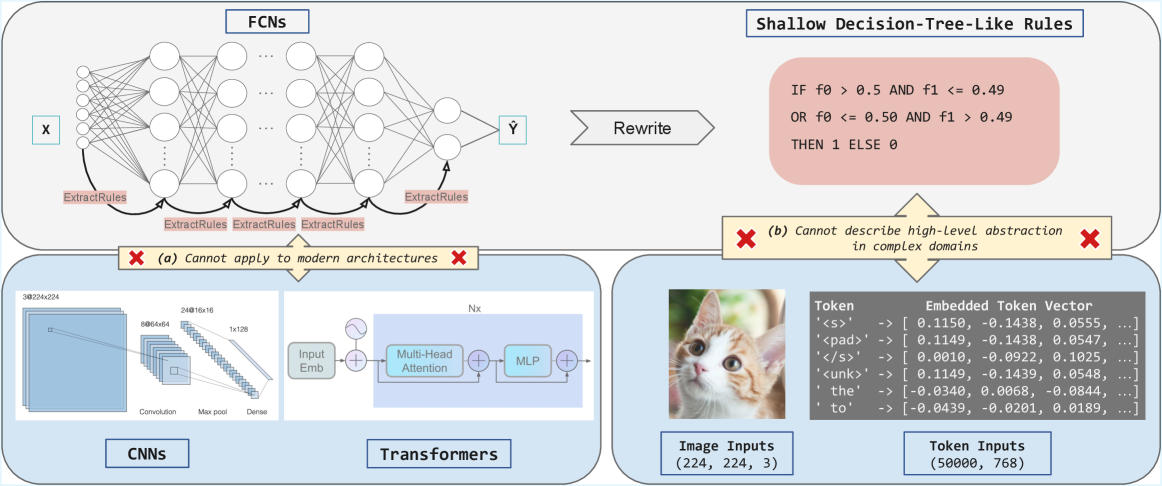

The diagram compares the interpretability of three neural network architectures (fully connected networks, or FCNs; convolutional neural networks, or CNNs; and Transformers) with that of shallow, decision-tree-like rules. It highlights the limits of rule extraction in describing high-level abstractions across domains, and includes visual examples of the input types (images and tokens) and their embedded vectors.

### Components/Axes

1. **FCNs Section**:

   - **Input**: Labeled "X", with multiple nodes feeding into the network.

   - **Output**: Labeled "Ŷ", with arrows indicating rule extraction ("ExtractRules").

   - **Flow**: Nodes are interconnected with bidirectional arrows, emphasizing rule extraction at multiple layers.

2. **Shallow Decision-Tree-Like Rules**:

   - **Condition**: `IF f0 > 0.5 AND f1 <= 0.49 OR f0 <= 0.50 AND f1 > 0.49 THEN 1 ELSE 0`.

   - **Flow**: Arrows lead to a "Rewrite" step, marked with a red X indicating that these rules cannot describe high-level abstractions.

3. **CNNs Section**:

   - **Layers**:

     - Input: 3x224x224 (RGB image, channels first).

     - Convolutional layers: 24x16x16, 48x8x8, 64x4x4 (channels x height x width).

     - Max pooling and dense layers.

   - **Flow**: Sequential processing from input to output.

4. **Transformers Section**:

   - **Components**:

     - Input embedding → Multi-head attention → MLP.

   - **Flow**: Parallel processing with additive (residual) connections.

5. **Token Inputs**:

   - **Table**:

     - Tokens: `<s>`, `<pad>`, `<unk>`, `the`, `to`.

     - Embedded vectors (e.g., `<s>`: [0.1150, -0.1438, 0.0555, ...]).

   - **Dimensions**: (50000, 768) for the token embedding table, i.e. 50,000 vocabulary entries with a 768-dimensional vector each.

6. **Image Inputs**:

   - Example: a cat image with dimensions (224, 224, 3), i.e. height, width, and RGB channels.
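A minimal NumPy sketch of how the two input types might become tensors: a token list indexes rows of a (50000, 768) embedding table, and a (224, 224, 3) image is transposed into the channels-first 3x224x224 layout listed in the CNN section. The vocabulary ids, the random table values, the [0, 1] pixel scaling, and the helper name `embed` are assumptions for illustration, not details taken from the diagram.

```python
import numpy as np

# Shapes come from the diagram; all values below are random placeholders.
VOCAB_SIZE, EMBED_DIM = 50_000, 768

# Hypothetical token-to-id mapping for the five tokens shown in the diagram.
vocab = {"<s>": 0, "<pad>": 1, "<unk>": 2, "the": 3, "to": 4}

rng = np.random.default_rng(0)
# One 768-dimensional row per vocabulary entry (about 150 MB as float32).
embedding_table = rng.standard_normal((VOCAB_SIZE, EMBED_DIM), dtype=np.float32)


def embed(tokens):
    """Look up one embedding row per token, mapping unknown tokens to <unk>."""
    ids = np.array([vocab.get(t, vocab["<unk>"]) for t in tokens])
    return embedding_table[ids]  # shape (len(tokens), EMBED_DIM)


# Placeholder RGB image in (height, width, channels) layout, like the cat example.
image_hwc = rng.integers(0, 256, size=(224, 224, 3), dtype=np.uint8)
# Move channels first to get the 3x224x224 layout, and scale pixels to [0, 1].
image_chw = image_hwc.transpose(2, 0, 1).astype(np.float32) / 255.0

print(embed(["<s>", "the", "to"]).shape)  # (3, 768)
print(image_chw.shape)                     # (3, 224, 224)
```

The transpose only reorders axes, so the pixel at row 10, column 20 keeps the same three channel values in both layouts.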

### Detailed Analysis

- **FCNs**: Rule extraction occurs at multiple layers, but the network's complexity obscures high-level abstractions (red X).

- **CNNs**: Convolution and pooling extract hierarchical features, but the extracted rules remain low-level (red X).

- **Transformers**: Attention mechanisms capture context but offer no explicit rule extraction (red X).

- **Decision-Tree Rules**: Simple conditional logic, but limited to shallow abstractions (red X).
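The Transformer path (input embedding → multi-head attention → MLP, with additive connections) can be sketched as a single NumPy block. This is a hypothetical illustration: it reads the diagram's "additive connections" as residual connections, and the pre-layer-norm placement, 12 heads, 3072-unit MLP with a GELU activation, and random weights are common choices assumed here rather than read from the diagram; only the 768-dimensional width matches the token embeddings.

```python
import numpy as np


def layer_norm(x, eps=1e-5):
    # Normalize each token vector to zero mean, unit variance (no learned scale/shift).
    mean = x.mean(axis=-1, keepdims=True)
    var = x.var(axis=-1, keepdims=True)
    return (x - mean) / np.sqrt(var + eps)


def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)  # subtract the max for numerical stability
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def multi_head_attention(x, w_qkv, w_out, n_heads):
    seq_len, d_model = x.shape
    d_head = d_model // n_heads
    q, k, v = np.split(x @ w_qkv, 3, axis=-1)  # each (seq_len, d_model)

    def to_heads(t):
        # (seq_len, d_model) -> (n_heads, seq_len, d_head): heads attend independently.
        return t.reshape(seq_len, n_heads, d_head).transpose(1, 0, 2)

    q, k, v = to_heads(q), to_heads(k), to_heads(v)
    scores = q @ k.transpose(0, 2, 1) / np.sqrt(d_head)  # (n_heads, seq_len, seq_len)
    heads = softmax(scores) @ v                          # (n_heads, seq_len, d_head)
    merged = heads.transpose(1, 0, 2).reshape(seq_len, d_model)
    return merged @ w_out


def mlp(x, w1, w2):
    h = x @ w1
    # GELU activation (tanh approximation); the activation choice is an assumption.
    h = 0.5 * h * (1.0 + np.tanh(np.sqrt(2.0 / np.pi) * (h + 0.044715 * h**3)))
    return h @ w2


def transformer_block(x, params, n_heads=12):
    # Additive (residual) connections: each sub-layer's output is added to its input.
    x = x + multi_head_attention(layer_norm(x), params["w_qkv"], params["w_out"], n_heads)
    x = x + mlp(layer_norm(x), params["w1"], params["w2"])
    return x


# Hypothetical sizes: d_model matches the 768-dim embeddings; 12 heads and d_ff are assumed.
rng = np.random.default_rng(0)
d_model, d_ff = 768, 3072
params = {
    "w_qkv": rng.standard_normal((d_model, 3 * d_model)) * 0.02,
    "w_out": rng.standard_normal((d_model, d_model)) * 0.02,
    "w1": rng.standard_normal((d_model, d_ff)) * 0.02,
    "w2": rng.standard_normal((d_ff, d_model)) * 0.02,
}
tokens = rng.standard_normal((5, d_model))  # e.g. five embedded input tokens
out = transformer_block(tokens, params)
print(out.shape)  # (5, 768)
```

Because each sub-layer's output is added to its input, setting every weight to zero returns the input unchanged, which isolates what the additive connections contribute.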

### Key Observations

- Across all architectures, extracted rules struggle to describe high-level abstractions in complex domains.

- Token embeddings are dense, high-dimensional vectors (768 dimensions).

- Image inputs use a standardized 224x224x3 size (224x224 pixels, 3 color channels).

- Decision-tree rules use simple threshold splits (0.5 on f0, 0.49 on f1) and produce a binary output (1 or 0).
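Because AND binds more tightly than OR, the diagram's rule outputs 1 exactly when one, and only one, of the tests `f0 > 0.5` and `f1 > 0.49` holds, so it is an XOR of two thresholded features. The Python sketch below transcribes the rule literally and compares it with the XOR form; the function names are hypothetical, and `f0`, `f1` are treated as plain floats since the diagram does not say what they measure.

```python
def shallow_rule(f0: float, f1: float) -> int:
    # Literal transcription of the diagram's rule:
    # IF f0 > 0.5 AND f1 <= 0.49 OR f0 <= 0.50 AND f1 > 0.49 THEN 1 ELSE 0
    # Python's `and` binds tighter than `or`, matching that reading.
    return int(f0 > 0.5 and f1 <= 0.49 or f0 <= 0.50 and f1 > 0.49)


def shallow_rule_xor(f0: float, f1: float) -> int:
    # Equivalent form: 1 when exactly one of the two threshold tests is true.
    return int((f0 > 0.5) != (f1 > 0.49))


print(shallow_rule(0.9, 0.1), shallow_rule(0.9, 0.9))  # 1 0
print(shallow_rule(0.1, 0.9), shallow_rule(0.1, 0.1))  # 1 0
```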

### Interpretation

The diagram underscores the **interpretability-complexity tradeoff** in modern AI systems. FCNs, CNNs, and Transformers achieve high performance, but their internal decision processes remain opaque, and rules extracted from them do not recover high-level abstractions, which limits transparency. Shallow decision-tree rules, though interpretable, fail to capture nuanced patterns in complex data (e.g., image semantics or token context). This highlights the need for hybrid approaches that balance model depth with explainability, particularly in safety-critical applications such as healthcare or autonomous systems.