## Bar Charts: LLM Prompt Analysis

### Overview

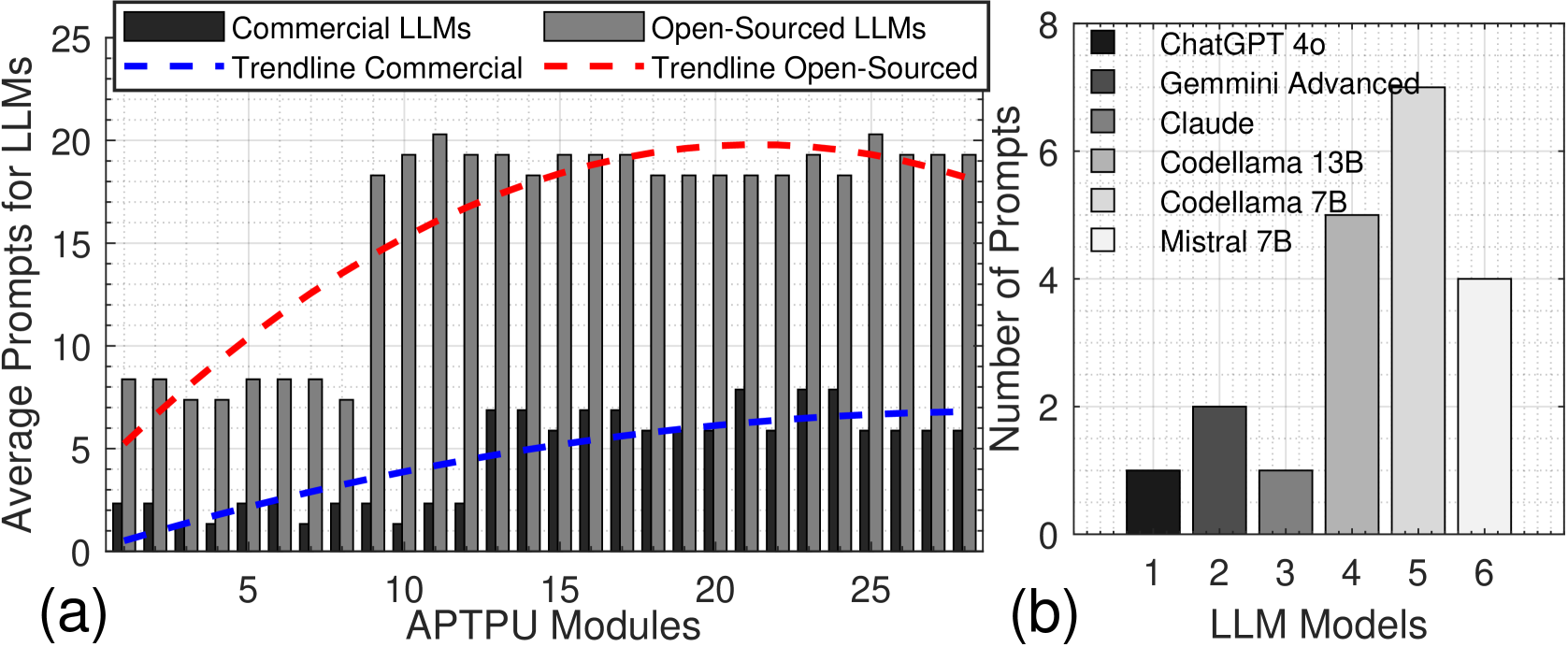

The image presents two bar charts comparing the average number of prompts required by different Large Language Models (LLMs). Chart (a) plots the average prompts for commercial and open-sourced LLMs against the number of APTPU modules. Chart (b) compares six specific LLMs by the number of prompts each requires.

### Components/Axes

**Chart (a):**

* **Title:** Average Prompts for LLMs vs. APTPU Modules

* **X-axis:** APTPU Modules, ranging from 1 to 25, with data points at 1, 5, 10, 15, 20, and 25.

* **Y-axis:** Average Prompts for LLMs, ranging from 0 to 25 in increments of 5.

* **Data Series:**

  * Commercial LLMs (dark gray bars)

  * Open-Sourced LLMs (light gray bars)

  * Trendline Commercial (blue dashed line)

  * Trendline Open-Sourced (red dashed line)

* **Legend:** Located at the top of chart (a).

**Chart (b):**

* **Title:** Number of Prompts vs. LLM Models

* **X-axis:** LLM Models, numbered 1 to 6.

* **Y-axis:** Number of Prompts, ranging from 0 to 8 in increments of 2.

* **Data Series:**

  * Model 1: ChatGPT 4o (dark gray bar)

  * Model 2: Gemini Advanced (gray bar)

  * Model 3: Claude (light gray bar)

  * Model 4: Codellama 13B (lighter gray bar)

  * Model 5: Codellama 7B (even lighter gray bar)

  * Model 6: Mistral 7B (white bar)

* **Legend:** Located on the right side of chart (b).

### Detailed Analysis

**Chart (a):**

* **Commercial LLMs:** The dark gray bars representing commercial LLMs show a consistently low number of average prompts, ranging from approximately 1.5 to 3.2 across the APTPU module counts. The trendline (blue dashed line) has a slight upward slope, indicating a marginal increase in average prompts as the number of APTPU Modules increases.

  * APTPU Modules = 1: ~1.5 prompts

  * APTPU Modules = 5: ~2 prompts

  * APTPU Modules = 10: ~2.5 prompts

  * APTPU Modules = 15: ~2.7 prompts

  * APTPU Modules = 20: ~3 prompts

  * APTPU Modules = 25: ~3.2 prompts

* **Open-Sourced LLMs:** The light gray bars representing open-sourced LLMs show a significantly higher number of average prompts than commercial LLMs, ranging from approximately 8 to 20. The bars stay at about 8 prompts for 1 and 5 APTPU Modules, then jump to about 18 at 10 APTPU Modules. The trendline (red dashed line) rises steeply, peaks around 15 APTPU Modules, then decreases slightly.

  * APTPU Modules = 1: ~8 prompts

  * APTPU Modules = 5: ~8 prompts

  * APTPU Modules = 10: ~18 prompts

  * APTPU Modules = 15: ~20 prompts

  * APTPU Modules = 20: ~18 prompts

  * APTPU Modules = 25: ~19 prompts
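
The approximate readings for chart (a) can be cross-checked numerically. The sketch below is illustrative only: it fits a plain least-squares line to each series (an assumption, since the figure does not state how its trendlines were computed) and computes the ratio of open-sourced to commercial prompts at each module count. The variable and function names are hypothetical, and the inputs are the eyeballed values listed above, not exact data.

```python
# Approximate values read off chart (a), copied from the list above.
modules = [1, 5, 10, 15, 20, 25]
commercial = [1.5, 2.0, 2.5, 2.7, 3.0, 3.2]
open_sourced = [8, 8, 18, 20, 18, 19]


def least_squares_slope(xs, ys):
    """Slope of the ordinary least-squares line through (xs, ys)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    variance = sum((x - mean_x) ** 2 for x in xs)
    return covariance / variance


# Assumption: a straight-line fit, which may differ from the chart's trendlines.
slope_commercial = least_squares_slope(modules, commercial)
slope_open = least_squares_slope(modules, open_sourced)

# Ratio of open-sourced to commercial prompts at each module count.
ratios = [o / c for o, c in zip(open_sourced, commercial)]
largest_ratio_at = modules[ratios.index(max(ratios))]

print(f"commercial slope:   {slope_commercial:.2f} prompts per module")
print(f"open-sourced slope: {slope_open:.2f} prompts per module")
for m, r in zip(modules, ratios):
    print(f"modules={m:>2}: open-sourced / commercial = {r:.1f}x")
print(f"largest ratio at {largest_ratio_at} modules")
```

With these readings, the fitted slope is roughly 0.07 prompts per module for commercial LLMs and roughly 0.5 for open-sourced LLMs, and the ratio between the two series is largest at 15 APTPU Modules. A straight line is a coarse summary of the open-sourced series, which flattens after 10 modules.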

**Chart (b):**

* **ChatGPT 4o (Model 1):** The dark gray bar indicates approximately 1 prompt.

* **Gemini Advanced (Model 2):** The gray bar indicates approximately 2 prompts.

* **Claude (Model 3):** The light gray bar indicates approximately 2 prompts.

* **Codellama 13B (Model 4):** The lighter gray bar indicates approximately 7 prompts.

* **Codellama 7B (Model 5):** The even lighter gray bar indicates approximately 5 prompts.

* **Mistral 7B (Model 6):** The white bar indicates approximately 4 prompts.
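
As a rough check on the chart (b) readings, the sketch below averages the prompt counts per group. The grouping into commercial models (ChatGPT 4o, Gemini Advanced, Claude) and open-sourced models (Codellama 13B, Codellama 7B, Mistral 7B) is an assumption based on the categories in chart (a); the figure itself does not label the models this way. The names used are hypothetical and the values are the approximate bar heights listed above.

```python
from statistics import mean

# Approximate bar heights read off chart (b), copied from the list above.
prompts = {
    "ChatGPT 4o": 1,
    "Gemini Advanced": 2,
    "Claude": 2,
    "Codellama 13B": 7,
    "Codellama 7B": 5,
    "Mistral 7B": 4,
}

# Assumed grouping; not stated in the figure.
commercial_models = ["ChatGPT 4o", "Gemini Advanced", "Claude"]
open_sourced_models = ["Codellama 13B", "Codellama 7B", "Mistral 7B"]

commercial_mean = mean(prompts[m] for m in commercial_models)
open_sourced_mean = mean(prompts[m] for m in open_sourced_models)

most_prompts = max(prompts, key=prompts.get)
fewest_prompts = min(prompts, key=prompts.get)

print(f"commercial mean:   {commercial_mean:.2f} prompts")
print(f"open-sourced mean: {open_sourced_mean:.2f} prompts")
print(f"most prompts:   {most_prompts}")
print(f"fewest prompts: {fewest_prompts}")
```

Under this grouping, the open-sourced models average a little over three times as many prompts as the commercial ones.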

### Key Observations

* Open-sourced LLMs generally require more prompts than commercial LLMs, especially in the range of 10-20 APTPU Modules.

* The number of APTPU Modules has a more significant impact on open-sourced LLMs (roughly 8 to 20 prompts) than on commercial LLMs (roughly 1.5 to 3.2 prompts).

* Codellama 13B (Model 4) requires the most prompts among the specific LLM models compared in chart (b).

* ChatGPT 4o (Model 1) requires the fewest prompts among the specific LLM models compared in chart (b).

### Interpretation

The data suggests that open-sourced LLMs may be less efficient or require more interaction (prompts) to achieve desired results compared to commercial LLMs, particularly as the number of APTPU modules increases. This could be due to differences in model architecture, training data, or optimization strategies. The trendline for open-sourced LLMs indicates a non-linear relationship with APTPU modules, suggesting that there may be an optimal range for prompt efficiency.

The comparison of specific LLM models in chart (b) highlights the variability in prompt requirements across different models. Codellama 13B stands out with approximately 7 prompts, more than any other model, while ChatGPT 4o requires the fewest at approximately 1. This information could be valuable for users selecting an LLM based on their specific needs and resource constraints.