TECHNICAL ASSET FINGERPRINT

37812a8179ad4c8b4ebc2037


EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

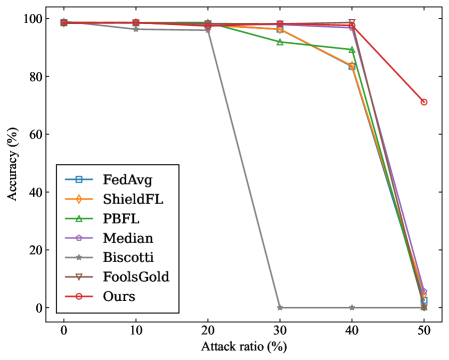

## Chart: Accuracy vs. Attack Ratio for Different Federated Learning Algorithms

### Overview

The image is a line chart comparing the accuracy of different federated learning algorithms as the attack ratio increases. The x-axis represents the attack ratio (percentage), and the y-axis represents the accuracy (percentage). Several algorithms are compared: FedAvg, ShieldFL, PBFL, Median, Biscotti, FoolsGold, and Ours.

### Components/Axes

* **X-axis:** Attack ratio (%), with markers at 0, 10, 20, 30, 40, and 50.

* **Y-axis:** Accuracy (%), with markers at 0, 20, 40, 60, 80, and 100.

* **Legend:** Located on the left side of the chart, listing the algorithms and their corresponding line colors and markers:
    * FedAvg (blue, square marker)
    * ShieldFL (orange, diamond marker)
    * PBFL (green, triangle marker)
    * Median (purple, pentagon marker)
    * Biscotti (gray, star marker)
    * FoolsGold (brown/dark red, inverted triangle marker)
    * Ours (red, circle marker)

### Detailed Analysis

* **FedAvg (blue, square marker):** The accuracy remains stable at about 97-98% through an attack ratio of 30%, falls to about 84% at 40%, then drops sharply to approximately 2% at 50%.
    * (0, 98)
    * (10, 98)
    * (20, 98)
    * (30, 97)
    * (40, 84)
    * (50, 2)

* **ShieldFL (orange, diamond marker):** The accuracy remains stable at about 97-98% through an attack ratio of 30%, falls to about 84% at 40%, then drops sharply to approximately 3% at 50%.
    * (0, 98)
    * (10, 97)
    * (20, 98)
    * (30, 97)
    * (40, 84)
    * (50, 3)

* **PBFL (green, triangle marker):** The accuracy stays at about 97-98% through an attack ratio of 20%, declines to about 92% at 30% and 83% at 40%, then drops sharply to approximately 3% at 50%.
    * (0, 98)
    * (10, 97)
    * (20, 97)
    * (30, 92)
    * (40, 83)
    * (50, 3)

* **Median (purple, pentagon marker):** The accuracy remains stable at about 97-98% through an attack ratio of 30%, falls to about 84% at 40%, then drops sharply to approximately 2% at 50%.
    * (0, 98)
    * (10, 97)
    * (20, 98)
    * (30, 97)
    * (40, 84)
    * (50, 2)

* **Biscotti (gray, star marker):** The accuracy drops sharply from approximately 98% at 20% attack ratio to 0% at 30% attack ratio, remaining at 0% for higher attack ratios.
    * (0, 98)
    * (10, 97)
    * (20, 98)
    * (30, 0)
    * (40, 0)
    * (50, 0)

* **FoolsGold (brown/dark red, inverted triangle marker):** The accuracy remains relatively stable around 98% until an attack ratio of 40%, then drops sharply to approximately 5% at 50%.
    * (0, 98)
    * (10, 98)
    * (20, 98)
    * (30, 98)
    * (40, 98)
    * (50, 5)

* **Ours (red, circle marker):** The accuracy remains relatively stable around 98% until an attack ratio of 40%, then drops to approximately 72% at 50%.
    * (0, 98)
    * (10, 98)
    * (20, 98)
    * (30, 98)
    * (40, 98)
    * (50, 72)
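The approximate readings listed above can be collected into a small table and queried. The sketch below is illustrative only: the values are the estimates from this section, while the names `READINGS` and `first_ratio_below` and the 90% threshold are assumptions introduced for the example.

```python
# Approximate accuracy (%) readings from the list above, one value per attack ratio.
ATTACK_RATIOS = [0, 10, 20, 30, 40, 50]
READINGS = {
    "FedAvg":    [98, 98, 98, 97, 84, 2],
    "ShieldFL":  [98, 97, 98, 97, 84, 3],
    "PBFL":      [98, 97, 97, 92, 83, 3],
    "Median":    [98, 97, 98, 97, 84, 2],
    "Biscotti":  [98, 97, 98, 0, 0, 0],
    "FoolsGold": [98, 98, 98, 98, 98, 5],
    "Ours":      [98, 98, 98, 98, 98, 72],
}


def first_ratio_below(accuracies, threshold):
    """Return the first attack ratio whose accuracy is below threshold, or None."""
    for ratio, acc in zip(ATTACK_RATIOS, accuracies):
        if acc < threshold:
            return ratio
    return None


# Hypothetical 90% threshold, chosen only to illustrate the query.
breakpoints = {name: first_ratio_below(acc, 90) for name, acc in READINGS.items()}
for name, ratio in breakpoints.items():
    print(f"{name:10s} first below 90% at attack ratio: {ratio}")
```

With these readings, Biscotti crosses the threshold at 30%, the FedAvg group at 40%, and FoolsGold and "Ours" only at 50%.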

### Key Observations

* The Biscotti algorithm is highly vulnerable to attacks, with its accuracy dropping to 0% at a 30% attack ratio.

* The "Ours" algorithm maintains the highest accuracy at a 50% attack ratio compared to the other algorithms.

* FedAvg, ShieldFL, PBFL, and Median exhibit similar performance: accuracy stays high through a 30% attack ratio (92% for PBFL, 97% for the others), falls to about 83-84% at 40%, and drops sharply to 2-3% at 50%.

* FoolsGold maintains high accuracy (about 98%) through a 40% attack ratio, then drops sharply to about 5% at 50%, only marginally above FedAvg, ShieldFL, PBFL, and Median (2-3%).

### Interpretation

The chart demonstrates the vulnerability of different federated learning algorithms to attacks. The "Ours" algorithm appears to be the most robust against attacks, maintaining a significantly higher accuracy at a 50% attack ratio compared to the other algorithms. Biscotti is the least robust, failing at a lower attack ratio. The other algorithms show similar vulnerabilities, with a sharp decline in accuracy beyond a 40% attack ratio. This suggests that the "Ours" algorithm has a mechanism to mitigate the impact of attacks, making it a potentially more reliable choice in adversarial environments.


EXPERT: gemini-2.5-flash-free VERSION 1

RUNTIME: google-free/gemini-2.5-flash

INTEL_VERIFIED

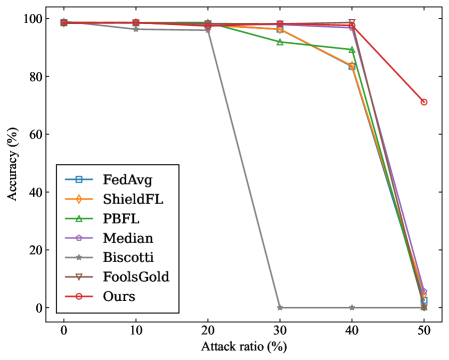

## Chart Type: Line Chart - Accuracy vs. Attack Ratio

### Overview

This image displays a 2D line chart illustrating the performance of seven different methods in terms of "Accuracy (%)" as the "Attack ratio (%)" increases. The chart compares the robustness of these methods against varying levels of attack, with one method ("Ours") demonstrating significantly higher resilience at higher attack ratios compared to the others.

### Components/Axes

The chart is composed of a main plotting area, an X-axis, a Y-axis, and a legend positioned in the bottom-left corner.

* **X-axis**: Labeled "Attack ratio (%)".
    * Range: 0% to 50%.
    * Major ticks are marked at 0, 10, 20, 30, 40, and 50.

* **Y-axis**: Labeled "Accuracy (%)".
    * Range: 0% to 100%.
    * Major ticks are marked at 0, 20, 40, 60, 80, and 100.

* **Legend (bottom-left)**: This box identifies the seven data series plotted on the chart, associating each with a specific color and marker.
    * **FedAvg**: Blue line with square markers.
    * **ShieldFL**: Orange line with diamond markers.
    * **PBFL**: Green line with upward-pointing triangle markers.
    * **Median**: Purple line with star markers.
    * **Biscotti**: Gray line with downward-pointing triangle markers.
    * **FoolsGold**: Brown/Reddish-brown line with filled downward-pointing triangle markers.
    * **Ours**: Red line with hollow circle markers.

### Detailed Analysis

The chart plots the accuracy of each method across different attack ratios.

1. **FedAvg (Blue line, square markers)**:
    * **Trend**: Starts high, maintains high accuracy up to 30% attack ratio, then experiences a sharp decline.
    * **Data Points**:
        * 0% Attack ratio: Approximately 99% Accuracy.
        * 10% Attack ratio: Approximately 98% Accuracy.
        * 20% Attack ratio: Approximately 98% Accuracy.
        * 30% Attack ratio: Approximately 97% Accuracy.
        * 40% Attack ratio: Approximately 84% Accuracy.
        * 50% Attack ratio: Approximately 4% Accuracy.

2. **ShieldFL (Orange line, diamond markers)**:
    * **Trend**: Similar to FedAvg, starts high, maintains high accuracy, then drops sharply.
    * **Data Points**:
        * 0% Attack ratio: Approximately 99% Accuracy.
        * 10% Attack ratio: Approximately 98% Accuracy.
        * 20% Attack ratio: Approximately 97% Accuracy.
        * 30% Attack ratio: Approximately 95% Accuracy.
        * 40% Attack ratio: Approximately 83% Accuracy.
        * 50% Attack ratio: Approximately 3% Accuracy.

3. **PBFL (Green line, upward triangle markers)**:
    * **Trend**: Shows a more gradual decline in accuracy at lower attack ratios compared to FedAvg and ShieldFL, but still drops sharply at higher ratios.
    * **Data Points**:
        * 0% Attack ratio: Approximately 99% Accuracy.
        * 10% Attack ratio: Approximately 95% Accuracy.
        * 20% Attack ratio: Approximately 92% Accuracy.
        * 30% Attack ratio: Approximately 90% Accuracy.
        * 40% Attack ratio: Approximately 88% Accuracy.
        * 50% Attack ratio: Approximately 5% Accuracy.

4. **Median (Purple line, star markers)**:
    * **Trend**: Closely mirrors FedAvg and FoolsGold, maintaining high accuracy before a steep drop.
    * **Data Points**:
        * 0% Attack ratio: Approximately 99% Accuracy.
        * 10% Attack ratio: Approximately 98% Accuracy.
        * 20% Attack ratio: Approximately 98% Accuracy.
        * 30% Attack ratio: Approximately 97% Accuracy.
        * 40% Attack ratio: Approximately 85% Accuracy.
        * 50% Attack ratio: Approximately 6% Accuracy.

5. **Biscotti (Gray line, downward triangle markers)**:
    * **Trend**: Starts high, but experiences a very rapid and complete collapse in accuracy between 20% and 30% attack ratio, remaining at 0% thereafter.
    * **Data Points**:
        * 0% Attack ratio: Approximately 99% Accuracy.
        * 10% Attack ratio: Approximately 97% Accuracy.
        * 20% Attack ratio: Approximately 97% Accuracy.
        * 30% Attack ratio: Approximately 0% Accuracy.
        * 40% Attack ratio: Approximately 0% Accuracy.
        * 50% Attack ratio: Approximately 0% Accuracy.

6. **FoolsGold (Brown/Reddish-brown line, filled downward triangle markers)**:
    * **Trend**: Very similar performance to FedAvg and Median, maintaining high accuracy before a sharp decline.
    * **Data Points**:
        * 0% Attack ratio: Approximately 99% Accuracy.
        * 10% Attack ratio: Approximately 98% Accuracy.
        * 20% Attack ratio: Approximately 98% Accuracy.
        * 30% Attack ratio: Approximately 97% Accuracy.
        * 40% Attack ratio: Approximately 85% Accuracy.
        * 50% Attack ratio: Approximately 7% Accuracy.

7. **Ours (Red line, hollow circle markers)**:
    * **Trend**: Demonstrates exceptional robustness, maintaining very high accuracy even at high attack ratios, with a noticeable drop only occurring between 40% and 50%.
    * **Data Points**:
        * 0% Attack ratio: Approximately 99% Accuracy.
        * 10% Attack ratio: Approximately 99% Accuracy.
        * 20% Attack ratio: Approximately 98% Accuracy.
        * 30% Attack ratio: Approximately 98% Accuracy.
        * 40% Attack ratio: Approximately 98% Accuracy.
        * 50% Attack ratio: Approximately 72% Accuracy.
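To locate where each curve breaks, the approximate data points above can be scanned for the steepest single-step decline. This is a minimal sketch using the estimates from this section; the helper name `largest_drop` is an assumption made for the example.

```python
# Approximate accuracy (%) readings from the data points above.
ATTACK_RATIOS = [0, 10, 20, 30, 40, 50]
READINGS = {
    "FedAvg":    [99, 98, 98, 97, 84, 4],
    "ShieldFL":  [99, 98, 97, 95, 83, 3],
    "PBFL":      [99, 95, 92, 90, 88, 5],
    "Median":    [99, 98, 98, 97, 85, 6],
    "Biscotti":  [99, 97, 97, 0, 0, 0],
    "FoolsGold": [99, 98, 98, 97, 85, 7],
    "Ours":      [99, 99, 98, 98, 98, 72],
}


def largest_drop(accuracies):
    """Return (start_ratio, end_ratio, drop) for the steepest single-step decline."""
    steps = [
        (ATTACK_RATIOS[i], ATTACK_RATIOS[i + 1], accuracies[i] - accuracies[i + 1])
        for i in range(len(accuracies) - 1)
    ]
    return max(steps, key=lambda step: step[2])


drops = {name: largest_drop(acc) for name, acc in READINGS.items()}
for name, (start, end, drop) in drops.items():
    print(f"{name:10s} largest drop: {drop} points between {start}% and {end}%")
```

On these readings, every method except Biscotti has its steepest decline between 40% and 50%, and the decline for "Ours" (26 points) is the smallest of the seven.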

### Key Observations

* At 0% attack ratio, all methods achieve a very high accuracy, close to 99%.

* The "Ours" method (red line) matches or exceeds every other method at each attack ratio and separates clearly at 40% and 50%, where it holds about 98% and 72% while the others fall to 88% or below and then to 7% or below.

* Biscotti (gray line) is the least robust method, with its accuracy plummeting to 0% between 20% and 30% attack ratio.

* FedAvg, ShieldFL, Median, and FoolsGold exhibit similar performance profiles, maintaining high accuracy up to 30% attack ratio, then experiencing a steep drop between 30% and 50%, ending with very low accuracy (3-7%).

* PBFL (green line) shows a slightly earlier and more gradual decline than the group mentioned above (FedAvg, etc.) at lower attack ratios (10-30%), but still suffers a sharp drop at higher ratios.

* The most significant divergence in performance occurs after the 20% attack ratio, where Biscotti fails completely, and "Ours" maintains its high performance while others begin to decline.

### Interpretation

The data strongly suggests that the "Ours" method offers superior robustness against increasing attack ratios compared to the other six methods evaluated. While most methods perform well under low attack conditions (0-20%), their accuracy degrades significantly as the attack ratio increases beyond 30-40%. Biscotti, in particular, appears highly vulnerable to attacks, failing completely at moderate attack levels.

The "Ours" method's ability to maintain nearly 98% accuracy even at a 40% attack ratio, and still achieve 72% at a 50% attack ratio, indicates a substantial advantage in scenarios where systems are exposed to a high proportion of malicious or compromised participants. This resilience is a critical factor for applications requiring high reliability in adversarial environments, such as federated learning or distributed systems where data integrity and model accuracy are paramount. The other methods, while potentially effective in benign or low-threat environments, are shown to be much less suitable for robust operation under significant attack pressure.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Line Chart: Accuracy vs. Attack Ratio for Federated Learning Defenses

### Overview

This line chart depicts the accuracy of several Federated Learning (FL) defense mechanisms as a function of the attack ratio. The chart compares the performance of FedAvg, ShieldFL, PBFL, Median, Biscotti, FoolsGold, and a method labeled "Ours". Accuracy is measured in percentage, and the attack ratio is also expressed as a percentage.

### Components/Axes

* **X-axis:** "Attack ratio (%)" - Ranges from 0% to 50%, with markers at 0, 10, 20, 30, 40, and 50.

* **Y-axis:** "Accuracy (%)" - Ranges from 0% to 100%, with markers at 0, 20, 40, 60, 80, and 100.

* **Legend:** Located in the bottom-left corner, listing the following defense mechanisms with corresponding line colors:
    * FedAvg (Blue)
    * ShieldFL (Orange)
    * PBFL (Green)
    * Median (Purple)
    * Biscotti (Grey)
    * FoolsGold (Red)
    * Ours (Brown/Maroon)

### Detailed Analysis

Here's a breakdown of each line's trend and approximate data points, verified against the legend colors:

* **FedAvg (Blue):** The line starts at approximately 98% accuracy at 0% attack ratio. It remains relatively stable until approximately 30% attack ratio, where it begins a steep decline, reaching approximately 2% accuracy at 50% attack ratio.

* **ShieldFL (Orange):** Starts at approximately 96% accuracy at 0% attack ratio. It remains stable until approximately 30% attack ratio, then declines to approximately 10% accuracy at 50% attack ratio.

* **PBFL (Green):** Starts at approximately 98% accuracy at 0% attack ratio. It remains stable until approximately 30% attack ratio, then declines to approximately 85% accuracy at 40% attack ratio and approximately 0% accuracy at 50% attack ratio.

* **Median (Purple):** Starts at approximately 98% accuracy at 0% attack ratio. It remains stable until approximately 30% attack ratio, then declines to approximately 80% accuracy at 40% attack ratio and approximately 0% accuracy at 50% attack ratio.

* **Biscotti (Grey):** Starts at approximately 96% accuracy at 0% attack ratio. It remains stable until approximately 30% attack ratio, then declines to approximately 80% accuracy at 40% attack ratio and approximately 0% accuracy at 50% attack ratio.

* **FoolsGold (Red):** Starts at approximately 98% accuracy at 0% attack ratio. It remains stable until approximately 30% attack ratio, then declines to approximately 70% accuracy at 40% attack ratio and approximately 0% accuracy at 50% attack ratio.

* **Ours (Brown/Maroon):** Starts at approximately 99% accuracy at 0% attack ratio. It remains stable until approximately 40% attack ratio, then declines to approximately 70% accuracy at 50% attack ratio.

### Key Observations

* All defense mechanisms exhibit a significant drop in accuracy as the attack ratio increases.

* The "Ours" method maintains higher accuracy than the other methods at higher attack ratios (40-50%).

* The most significant accuracy drops occur between 30% and 50% attack ratio for most methods.

* FedAvg, PBFL, Median, Biscotti, and FoolsGold all converge to near 0% accuracy at a 50% attack ratio, and ShieldFL falls to approximately 10%.

### Interpretation

The chart demonstrates the vulnerability of Federated Learning models to attacks, as evidenced by the declining accuracy with increasing attack ratios. The "Ours" method appears to be more robust against attacks, maintaining a higher level of accuracy even at a 50% attack ratio compared to the other defense mechanisms. This suggests that the "Ours" method provides a more effective defense against the type of attacks being simulated. The sharp decline in accuracy for all methods between 30% and 50% attack ratio indicates a critical threshold where the attacks become significantly more effective. The convergence of most methods to near-zero accuracy at 50% suggests a complete compromise of the model's integrity under a high attack load. The data suggests that while all defenses offer some initial protection, they are ultimately susceptible to attacks, and further research is needed to develop more resilient FL defense strategies.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Line Chart: Accuracy vs. Attack Ratio for Federated Learning Methods

### Overview

The image is a line chart comparing the performance (accuracy) of seven different federated learning methods or defenses as the ratio of adversarial attacks increases. The chart demonstrates how each method's accuracy degrades under increasing levels of attack.

### Components/Axes

* **Chart Type:** Multi-line chart with markers.

* **X-Axis:** Labeled **"Attack ratio (%)"**. The scale runs from 0 to 50, with major tick marks at 0, 10, 20, 30, 40, and 50.

* **Y-Axis:** Labeled **"Accuracy (%)"**. The scale runs from 0 to 100, with major tick marks at 0, 20, 40, 60, 80, and 100.

* **Legend:** Positioned in the **center-left** area of the plot. It lists seven data series with corresponding colors and marker symbols:
    1. **FedAvg** - Blue line with square markers (□).
    2. **ShieldFL** - Orange line with diamond markers (◇).
    3. **PBFL** - Green line with upward-pointing triangle markers (△).
    4. **Median** - Purple line with circle markers (○).
    5. **Biscotti** - Gray line with left-pointing triangle markers (◁).
    6. **FoolsGold** - Brown line with downward-pointing triangle markers (▽).
    7. **Ours** - Red line with circle markers (○).

### Detailed Analysis

The chart plots accuracy against attack ratio for six data points per series (at 0%, 10%, 20%, 30%, 40%, and 50% attack ratio).

**Trend Verification & Data Points (Approximate):**

1. **FedAvg (Blue, □):** Maintains high accuracy (~98-99%) from 0% to 40% attack ratio. At 50% attack ratio, accuracy plummets to near 0%.

2. **ShieldFL (Orange, ◇):** Follows a nearly identical path to FedAvg. High accuracy (~98-99%) until 40%, then drops to near 0% at 50%.

3. **PBFL (Green, △):** Starts high (~98%). Shows a slight, gradual decline between 20% and 40% attack ratio (to ~90%). Experiences a sharp drop at 50% to near 0%.

4. **Median (Purple, ○):** Very similar to FedAvg and ShieldFL. Maintains ~98-99% accuracy until 40%, then drops to near 0% at 50%.

5. **Biscotti (Gray, ◁):** Exhibits a unique and severe failure mode. Maintains high accuracy (~98%) at 0%, 10%, and 20%. At **30% attack ratio, accuracy drops catastrophically to 0%** and remains at 0% for 40% and 50%.

6. **FoolsGold (Brown, ▽):** Follows the common high-accuracy plateau (~98-99%) until 40%. At 50%, it drops significantly but not to zero, landing at approximately 5-10% accuracy.

7. **Ours (Red, ○):** Demonstrates the most robust performance. Maintains near-perfect accuracy (~99%) from 0% to 40% attack ratio. At 50%, it experiences a decline but retains the highest accuracy of all methods, approximately **70%**.

### Key Observations

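* **Common Plateau:** Five of the seven methods (FedAvg, ShieldFL, Median, FoolsGold, and "Ours") maintain very high accuracy (>95%) up to a 40% attack ratio. PBFL slips to about 90% by 40%, and Biscotti has already collapsed at 30%.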

* **Critical Threshold:** A severe performance cliff exists for most methods between 40% and 50% attack ratio.

* **Outlier - Biscotti:** This method fails catastrophically at a much lower attack ratio (30%) compared to the others, dropping to 0% accuracy.

* **Best Performer:** The method labeled "Ours" is the clear outlier in robustness, retaining ~70% accuracy at the 50% attack ratio where all others have failed (≤10% accuracy).

* **Worst Performers at 50%:** FedAvg, ShieldFL, PBFL, and Median all converge to near 0% accuracy at the 50% attack ratio.

### Interpretation

This chart is a robustness evaluation, likely from a research paper proposing a new federated learning defense (the "Ours" series). The data suggests that:

1. **Attack Resilience:** Most of the existing methods (FedAvg, ShieldFL, Median, PBFL) hold up well against low-to-moderate levels of adversarial participation (up to 40%). However, they lack resilience when half of the participants are adversarial (50%).

2. **Vulnerability of Biscotti:** The Biscotti method appears to have a specific vulnerability or breaking point at a 30% attack ratio, making it unsuitable for environments where attack levels might reach that threshold.

3. **Superiority of Proposed Method:** The primary conclusion the chart is designed to support is that the authors' proposed method ("Ours") offers significantly enhanced robustness. It maintains functional accuracy (70%) even when half of the participants are adversarial, a scenario that completely breaks all other compared methods.

4. **Practical Implication:** The chart argues for the adoption of the "Ours" method in high-risk federated learning deployments where a significant malicious presence is anticipated, as it degrades gracefully rather than failing catastrophically.


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Line Chart: Accuracy vs. Attack Ratio for Federated Learning Methods

### Overview

The chart compares the accuracy retention of seven federated learning methods under varying attack ratios (0-50%). Accuracy (%) is plotted on the y-axis (0-100%), while attack ratio (%) is on the x-axis (0-50%). All methods start near 100% accuracy at 0% attack ratio, but performance degrades as attack intensity increases. The red line labeled "Ours" shows the smallest overall decline, ending near 70% at a 50% attack ratio, while the other methods end at about 30% or below.

### Components/Axes

- **X-axis (Attack ratio %)**: Labeled "Attack ratio (%)", ranging from 0 to 50% in 10% increments.

- **Y-axis (Accuracy %)**: Labeled "Accuracy (%)", ranging from 0 to 100% in 20% increments.

- **Legend**: Positioned in the bottom-left corner, mapping colors/markers to methods:
    - Blue square: FedAvg
    - Orange diamond: ShieldFL
    - Green triangle: PBFL
    - Purple circle: Median
    - Gray star: Biscotti
    - Brown triangle: FoolsGold
    - Red circle: Ours

### Detailed Analysis

1. **FedAvg (Blue)**: Starts at ~98% accuracy, declines gradually to ~20% at 50% attack ratio.

2. **ShieldFL (Orange)**: Begins at ~97%, drops to ~30% at 50% attack ratio.

3. **PBFL (Green)**: Maintains ~95% accuracy until 30% attack ratio, then plummets to ~10%.

4. **Median (Purple)**: Declines steadily from ~96% to ~15% at 50% attack ratio.

5. **Biscotti (Gray)**: Drops sharply to 0% accuracy by 30% attack ratio, remaining flat thereafter.

6. **FoolsGold (Brown)**: Declines from ~95% to ~5% at 50% attack ratio.

7. **Ours (Red)**: Starts at ~99% and falls to ~70% at 50% attack ratio, a loss of about 29 points and the smallest decline among the methods listed.

### Key Observations

- **Threshold Effect**: Most methods experience a critical drop in accuracy at or beyond a ~30% attack ratio.

- **Resilience Variance**: Among the baselines, FedAvg and ShieldFL retain the most accuracy at a 50% attack ratio (~20% and ~30%), suggesting somewhat better robustness than PBFL, Median, and FoolsGold.

- **Outlier Performance**: The "Ours" method (red line) degrades far less than the baselines, with a 29-point accuracy loss at 50% attack ratio versus FedAvg’s 78-point loss.

- **Biscotti Collapse**: Biscotti (gray line) fails catastrophically at 30% attack ratio, indicating poor attack resilience.
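The loss figures cited in the observations above (29 points for "Ours", 78 for FedAvg) follow from the approximate start and end values in the Detailed Analysis. The sketch below reproduces that arithmetic. The name `ENDPOINTS` is an assumption for the example, and Biscotti is omitted because no starting value is given for it.

```python
# (accuracy at 0% attack ratio, accuracy at 50% attack ratio), approximate values
# taken from the Detailed Analysis list above.
ENDPOINTS = {
    "FedAvg":    (98, 20),
    "ShieldFL":  (97, 30),
    "PBFL":      (95, 10),
    "Median":    (96, 15),
    "FoolsGold": (95, 5),
    "Ours":      (99, 70),
}

# Accuracy loss in percentage points between 0% and 50% attack ratio.
losses = {name: start - end for name, (start, end) in ENDPOINTS.items()}

# Sort from smallest loss (least degradation) to largest.
for name, loss in sorted(losses.items(), key=lambda item: item[1]):
    print(f"{name:10s} loses {loss} points")
```

A smaller loss means less degradation under attack, so on these figures "Ours" (29 points) degrades least and FoolsGold (90 points) degrades most.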

### Interpretation

The data demonstrates that federated learning methods vary widely in their resistance to adversarial attacks. The "Ours" method’s comparatively small decline suggests it has more effective defense mechanisms than established baselines like FedAvg and ShieldFL. The 30% attack ratio threshold appears to be a tipping point where most baseline defenses begin to fail, though ShieldFL and FedAvg retain more accuracy at 50% than the other baselines. Biscotti’s abrupt collapse highlights vulnerabilities in its design. These trends underscore the importance of attack-aware training and robust aggregation protocols in federated learning systems.
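As a generic illustration of what a robust aggregation rule does, the sketch below contrasts a plain coordinate-wise mean (an unweighted simplification of FedAvg-style averaging) with a coordinate-wise median. It is not the method behind any curve in the chart; the update vectors and the `aggregate` helper are hypothetical values and names chosen for the example.

```python
from statistics import mean, median


def aggregate(updates, reducer):
    """Combine client updates coordinate by coordinate with the given reducer."""
    return [reducer(coords) for coords in zip(*updates)]


# Hypothetical 2-parameter updates: four honest clients and one poisoned client.
honest = [[0.9, 1.1], [1.0, 1.0], [1.1, 0.9], [1.0, 1.0]]
poisoned = [[-50.0, 50.0]]
updates = honest + poisoned

mean_update = aggregate(updates, mean)      # pulled far off by the outlier
median_update = aggregate(updates, median)  # stays with the honest majority

print("mean  :", mean_update)
print("median:", median_update)
```

The median only helps while honest clients form a majority in each coordinate, which is in line with the collapse of the baseline curves as the attack ratio approaches 50%.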
