## Log-Log Line Chart: Validation Perplexity vs. Training Steps for Different Recurrence Depths

### Overview

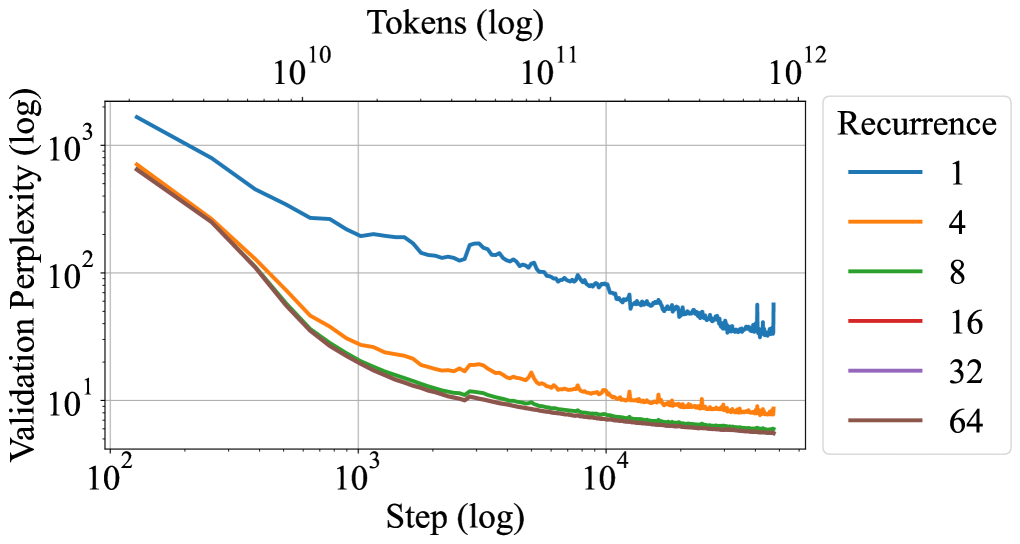

This image is a line chart plotted on a log-log scale. It displays the relationship between training progress (measured in steps and tokens) and model performance (measured by validation perplexity) for neural network models configured with different recurrence depths. The chart demonstrates how increasing the recurrence depth affects the model's learning efficiency and final performance.

### Components/Axes

* **Primary X-Axis (Bottom):** Labeled **"Step (log)"**. It is a logarithmic scale with major tick marks at `10²`, `10³`, and `10⁴`.

* **Secondary X-Axis (Top):** Labeled **"Tokens (log)"**. It is a logarithmic scale with major tick marks at `10¹⁰`, `10¹¹`, and `10¹²`. This axis provides an alternative measure of training data exposure.

* **Y-Axis (Left):** Labeled **"Validation Perplexity (log)"**. It is a logarithmic scale with major tick marks at `10¹`, `10²`, and `10³`. Lower perplexity indicates better model performance.

* **Legend (Right side):** Titled **"Recurrence"**. It contains six entries, each associating a color with a recurrence depth value:

    * Blue line: `1`
    * Orange line: `4`
    * Green line: `8`
    * Red line: `16`
    * Purple line: `32`
    * Brown line: `64`

### Detailed Analysis

The chart plots six data series, each corresponding to a different recurrence depth. All series show a general downward trend, indicating that validation perplexity decreases (performance improves) as training progresses (steps/tokens increase).

1. **Recurrence = 1 (Blue Line):**

    * **Trend:** Slopes downward but remains significantly higher than all other lines throughout the entire training process. It exhibits more volatility, especially at higher step counts (around `10⁴` steps), where it shows sharp, small upward spikes.

    * **Approximate Values:** Starts near `2 × 10³` perplexity at `10²` steps. Ends in the range of `30-50` perplexity at the final step (approx. `5 × 10⁴`).

2. **Recurrence = 4 (Orange Line):**

    * **Trend:** Slopes downward more steeply than the blue line initially. It separates clearly from the cluster of higher recurrence lines (8, 16, 32, 64) after about `5 × 10²` steps and maintains a distinct, higher path.

    * **Approximate Values:** Starts near `7 × 10²` perplexity at `10²` steps. Ends near `10¹` (10) perplexity at the final step.

3. **Recurrence = 8, 16, 32, 64 (Green, Red, Purple, Brown Lines):**

    * **Trend:** These four lines are tightly clustered together, especially after `10³` steps. They follow a very similar, steep downward trajectory. The lines for recurrence 16, 32, and 64 are nearly indistinguishable for most of the plot. The green line (recurrence 8) is slightly above this tight cluster but converges with them by the end.

    * **Approximate Values:** All start in the range of `6-8 × 10²` perplexity at `10²` steps. They converge to a final perplexity value slightly below `10¹` (approximately `6-8`) at the final step.

### Key Observations

* **Performance Hierarchy:** There is a clear performance hierarchy based on recurrence depth. Recurrence=1 performs worst, recurrence=4 is significantly better, and recurrence depths of 8 and above yield the best and very similar performance.

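* **Diminishing Returns:** The largest gain comes from raising recurrence from 1 to 4 (final perplexity of roughly `30-50` versus about `10`). Moving from 4 to 8 still yields a visible improvement (about `10` versus roughly `6-8`), but the gap between recurrence=8 and recurrence=64 is minimal, indicating strong diminishing returns for increasing recurrence beyond 8.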

* **Convergence:** The models with recurrence ≥8 not only achieve lower final perplexity but also appear to converge to their final performance level at a similar rate.

* **Stability:** The model with recurrence=1 shows more instability (spikes) in its validation metric during later training stages compared to the smoother curves of models with higher recurrence.
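
The hierarchy and diminishing returns noted above can be made concrete with a small calculation. This is a rough sketch that uses the approximate final perplexities quoted in the Detailed Analysis section; the midpoints chosen for the quoted ranges (`40` for `30-50`, `7` for `6-8`) are assumptions for illustration, not measured values.

```python
# Approximate final validation perplexities read off the chart.
# Midpoints of the quoted ranges are assumptions, not measurements.
final_ppl = {
    1: 40.0,   # midpoint of the quoted 30-50 range
    4: 10.0,   # "near 10"
    8: 7.0,    # midpoint of the quoted 6-8 range
    64: 7.0,   # same 6-8 range; 16 and 32 overlap with this curve
}


def relative_reduction(ppl_from: float, ppl_to: float) -> float:
    """Fractional drop in perplexity when moving between two settings."""
    return (ppl_from - ppl_to) / ppl_from


depths = sorted(final_ppl)
# Compare each recurrence depth with the next larger one.
reductions = {
    (a, b): relative_reduction(final_ppl[a], final_ppl[b])
    for a, b in zip(depths, depths[1:])
}

for (a, b), r in reductions.items():
    print(f"recurrence {a} -> {b}: {r:.0%} lower final perplexity")
```

Under these assumed midpoints, the reduction is about 75% from recurrence 1 to 4, about 30% from 4 to 8, and none from 8 to 64. The exact figures depend on where in each quoted range the true values fall.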

### Interpretation

This chart provides empirical evidence for the benefit of recurrence (or a similar mechanism, such as depth in a recurrent neural network) in language modeling. The data suggest that:

1. **Recurrence is Critical:** A model with minimal recurrence (depth=1) is severely limited in its capacity to learn and generalize, as shown by its persistently high perplexity.

2. **Optimal Range Exists:** There is an effective range for this hyperparameter. Increasing recurrence from 1 to 4 to 8 yields dramatic improvements in learning efficiency and final model quality.

3. **Saturation Point:** Beyond a recurrence depth of approximately 8, further increases provide negligible benefit for this specific task and model configuration. The overlap of the lines for 16, 32, and 64 suggests that the model's capacity or the task's complexity is saturated at that point.

4. **Training Efficiency:** Higher recurrence models not only reach a better final state but also learn faster in the early stages (steeper initial slope), achieving a given perplexity level in fewer steps/tokens.
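
The "fewer steps to a given perplexity" point can be sketched numerically. The example below is a minimal illustration, not a reconstruction of the plotted curves: it assumes each curve is a straight line on log-log axes between its approximate start and end points (which the real curves are not), and the helper `steps_to_reach` and the target value of `100` are hypothetical choices made for this example.

```python
import math


def steps_to_reach(target_ppl, start, end):
    """Step at which a curve hits target_ppl, assuming it is a straight
    line on log-log axes between two (step, perplexity) points."""
    (s0, p0), (s1, p1) = start, end
    # Fraction of the way from start to end, measured in log-perplexity.
    frac = (math.log(target_ppl) - math.log(p0)) / (math.log(p1) - math.log(p0))
    if not 0.0 <= frac <= 1.0:
        raise ValueError("target perplexity lies outside the given segment")
    # Interpolate the step count in log space as well.
    return math.exp(math.log(s0) + frac * (math.log(s1) - math.log(s0)))


# Approximate endpoints quoted in the description (assumed values).
curves = {
    1: ((1e2, 2e3), (5e4, 40.0)),  # recurrence 1, using 40 for the 30-50 range
    4: ((1e2, 7e2), (5e4, 10.0)),  # recurrence 4
}

target = 100.0
steps_needed = {
    depth: steps_to_reach(target, start, end)
    for depth, (start, end) in curves.items()
}

for depth, steps in steps_needed.items():
    print(f"recurrence {depth}: ~{steps:,.0f} steps to reach perplexity {target:g}")
```

With these assumed endpoints, the recurrence-4 curve reaches the target several times sooner than the recurrence-1 curve. Reading actual values off the plot at intermediate steps would give a more faithful estimate than two-point interpolation.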

On log-log axes, a power-law relationship between training duration and perplexity appears as a straight line, so any approximately straight segments of these curves would correspond to power-law behavior, which is common in deep learning scaling laws. The chart also suggests that an architectural choice (recurrence depth) shifts the scaling curve of the model.
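
To make the link between log-log plots and power laws concrete: if perplexity follows `y = a * x**b`, then `log(y) = log(a) + b * log(x)`, which is a straight line with slope `b`. The sketch below fits that line by ordinary least squares in log space. The data are synthetic and generated only for illustration (they are not values from the chart), and `fit_power_law` is a hypothetical helper name.

```python
import math


def fit_power_law(xs, ys):
    """Least-squares fit of log(y) = log(a) + b * log(x); returns (a, b)."""
    lx = [math.log(x) for x in xs]
    ly = [math.log(y) for y in ys]
    n = len(lx)
    mean_x = sum(lx) / n
    mean_y = sum(ly) / n
    sxx = sum((x - mean_x) ** 2 for x in lx)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(lx, ly))
    b = sxy / sxx                      # slope on log-log axes = exponent
    a = math.exp(mean_y - b * mean_x)  # intercept on log-log axes = log(a)
    return a, b


# Synthetic curve for illustration only (not data from the chart):
# perplexity = 5000 * step ** -0.6
steps = [100, 300, 1000, 3000, 10000, 30000]
ppl = [5000.0 * s ** -0.6 for s in steps]

a, b = fit_power_law(steps, ppl)
print(f"fitted prefactor a = {a:.1f}, exponent b = {b:.3f}")
```

On real validation curves the fit would be applied only to the portion that looks straight on log-log axes, since early transients and late plateaus typically deviate from a single power law.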