
## Line Chart: Accuracy vs. Thinking Compute

### Overview

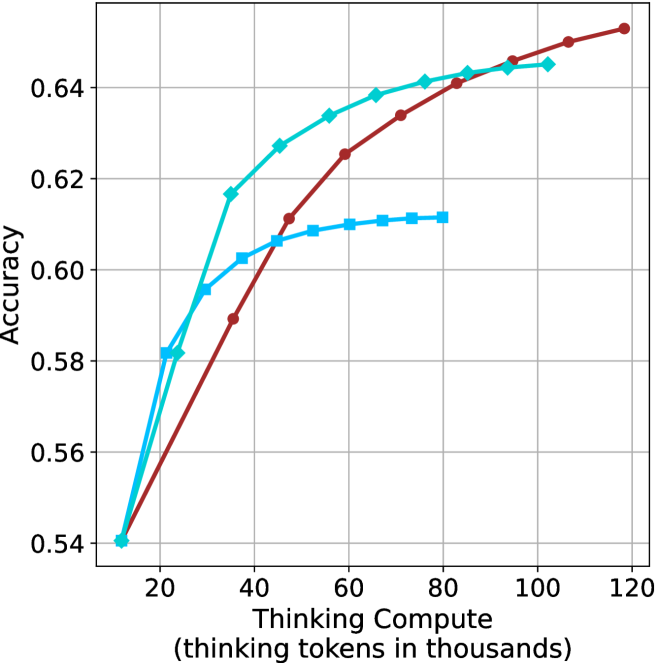

The image is a line chart plotting model accuracy against the amount of "thinking compute" allocated, measured in thousands of thinking tokens. It compares three reasoning methods: Chain-of-Thought (CoT), Self-Consistency (SC), and Tree-of-Thought (ToT). Accuracy rises with compute for all three, but how quickly it rises and where it levels off differ markedly between methods.

### Components/Axes

* **X-Axis (Horizontal):**

    * **Label:** "Thinking Compute (thinking tokens in thousands)"

    * **Scale:** Linear scale from 0 to 120, with major tick marks every 20 units (0, 20, 40, 60, 80, 100, 120).

* **Y-Axis (Vertical):**

    * **Label:** "Accuracy"

    * **Scale:** Linear scale with major tick marks every 0.02 units from 0.54 to 0.64 (0.54, 0.56, 0.58, 0.60, 0.62, 0.64). Several of the plotted values listed under Detailed Analysis lie slightly above the 0.64 tick.

* **Legend (Top-Left Corner):**

    * **Cyan line with diamond markers:** "Chain-of-Thought (CoT)"

    * **Blue line with square markers:** "Self-Consistency (SC)"

    * **Red line with circle markers:** "Tree-of-Thought (ToT)"

* **Grid:** A light gray grid is present, aligned with the major tick marks on both axes.

### Detailed Analysis

The chart contains three data series, each representing a different method. Their trends and approximate key data points are as follows:

1. **Chain-of-Thought (CoT) - Cyan line with diamonds:**

    * **Trend:** Shows a very steep initial increase in accuracy, which then decelerates and begins to plateau at higher compute levels. At 10k to 20k tokens it is effectively tied with SC; from about 30k up to roughly 85k tokens it is the highest-performing method.

    * **Key Data Points (Approximate):**

        * (10, 0.54)
        * (20, 0.58)
        * (30, 0.617)
        * (40, 0.627)
        * (50, 0.633)
        * (60, 0.638)
        * (70, 0.641)
        * (80, 0.643)
        * (90, 0.644)
        * (100, 0.645)

2. **Self-Consistency (SC) - Blue line with squares:**

    * **Trend:** Rises quickly up to about 30k tokens, then plateaus much earlier and at a lower accuracy level (about 0.61) than the other two methods. It shows the least benefit from additional compute beyond ~50k tokens, gaining at most 0.003 per additional 10k tokens. The listed points end at 80k tokens.

    * **Key Data Points (Approximate):**

        * (10, 0.54)
        * (20, 0.581)
        * (30, 0.596)
        * (40, 0.602)
        * (50, 0.606)
        * (60, 0.609)
        * (70, 0.610)
        * (80, 0.611)

3. **Tree-of-Thought (ToT) - Red line with circles:**

    * **Trend:** Starts with a more gradual slope than CoT but keeps improving across the entire compute range shown. Gains are roughly 0.02 per 10k tokens at the low end and taper to about 0.003 per 10k tokens by 110k, a slower decline than for the other two methods. It surpasses the SC method between 40k and 50k tokens (roughly 45k) and overtakes the CoT method at approximately 85k tokens, becoming the highest-performing method at high compute levels.

    * **Key Data Points (Approximate):**

        * (10, 0.54)
        * (20, 0.56)
        * (30, 0.58)
        * (40, 0.59)
        * (50, 0.611)
        * (60, 0.625)
        * (70, 0.634)
        * (80, 0.641)
        * (90, 0.646)
        * (100, 0.650)
        * (110, 0.653)
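
The trend descriptions above can be checked against the listed points. The following is a minimal sketch, assuming the approximate values read off the chart are taken at face value; the names `SERIES` and `marginal_gains` are illustrative and do not come from the chart or its source.

```python
# Approximate points read off the chart:
# (thinking tokens in thousands, accuracy). Values are estimates.
SERIES = {
    "CoT": [(10, 0.54), (20, 0.58), (30, 0.617), (40, 0.627), (50, 0.633),
            (60, 0.638), (70, 0.641), (80, 0.643), (90, 0.644), (100, 0.645)],
    "SC": [(10, 0.54), (20, 0.581), (30, 0.596), (40, 0.602), (50, 0.606),
           (60, 0.609), (70, 0.610), (80, 0.611)],
    "ToT": [(10, 0.54), (20, 0.56), (30, 0.58), (40, 0.59), (50, 0.611),
            (60, 0.625), (70, 0.634), (80, 0.641), (90, 0.646), (100, 0.650),
            (110, 0.653)],
}


def marginal_gains(points):
    """Accuracy gained over each 10k-token step between consecutive points."""
    return [round(y1 - y0, 3) for (_, y0), (_, y1) in zip(points, points[1:])]


if __name__ == "__main__":
    for name, points in SERIES.items():
        print(name, marginal_gains(points))
```

Printing the per-step gains makes it easy to see where each curve flattens: the gains for SC fall to 0.001 per step by 70k tokens, while ToT is still gaining 0.003 per step at 110k.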

### Key Observations

* **Diminishing Returns:** All three methods exhibit diminishing returns; the accuracy gain per additional thousand tokens decreases as compute increases.

* **Crossover Point:** A critical crossover occurs at approximately 85,000 thinking tokens, where the Tree-of-Thought (ToT) method's accuracy surpasses that of Chain-of-Thought (CoT).

* **Early Plateau:** The Self-Consistency (SC) method shows the earliest and most pronounced plateau, suggesting it may not effectively utilize additional computational resources beyond a certain point.

* **Starting Point:** All three methods begin at the same accuracy point (~0.54) at the lowest compute level (10k tokens).
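
The crossover estimates can be reproduced from the approximate data points. This is a sketch under two assumptions: the curves are treated as straight lines between neighboring points, and the point values are the estimates listed under Detailed Analysis. The function name `crossover` is hypothetical.

```python
# Approximate points read off the chart:
# (thinking tokens in thousands, accuracy). Values are estimates.
COT = [(10, 0.54), (20, 0.58), (30, 0.617), (40, 0.627), (50, 0.633),
       (60, 0.638), (70, 0.641), (80, 0.643), (90, 0.644), (100, 0.645)]
SC = [(10, 0.54), (20, 0.581), (30, 0.596), (40, 0.602), (50, 0.606),
      (60, 0.609), (70, 0.610), (80, 0.611)]
TOT = [(10, 0.54), (20, 0.56), (30, 0.58), (40, 0.59), (50, 0.611),
       (60, 0.625), (70, 0.634), (80, 0.641), (90, 0.646), (100, 0.650),
       (110, 0.653)]


def crossover(a, b):
    """Compute level (thousands of tokens) where series `a` first moves from
    below series `b` to at or above it, using linear interpolation on the
    compute levels that both series share. Returns None if `a` never overtakes."""
    acc_a, acc_b = dict(a), dict(b)
    shared = sorted(acc_a.keys() & acc_b.keys())
    for x0, x1 in zip(shared, shared[1:]):
        d0 = acc_a[x0] - acc_b[x0]  # gap at the left end of the interval
        d1 = acc_a[x1] - acc_b[x1]  # gap at the right end of the interval
        if d0 < 0 <= d1:
            # Solve d0 + (d1 - d0) * t = 0 for the fraction t of the interval.
            return x0 + (x1 - x0) * (-d0) / (d1 - d0)
    return None


if __name__ == "__main__":
    print("ToT overtakes CoT near", crossover(TOT, COT), "k tokens")
    print("ToT overtakes SC near", crossover(TOT, SC), "k tokens")
```

With these points the ToT/CoT crossover lands at about 85k tokens, and the ToT/SC crossover at about 47k tokens, which falls in the 40k to 50k interval. Because the inputs are visual estimates, the results are only as precise as the reading of the chart.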

### Interpretation

This chart provides a comparative analysis of the scaling efficiency of different AI reasoning strategies. The data suggests a fundamental trade-off:

* **Chain-of-Thought (CoT)** is highly efficient at lower compute budgets, delivering rapid accuracy gains. It is the strongest choice when computational resources are constrained, leading from roughly 30k to 85k tokens and essentially tying with SC below that.

* **Tree-of-Thought (ToT)** demonstrates superior scaling. While less efficient initially, its performance continues to improve steadily with more compute, making it the best choice for high-performance scenarios where maximum accuracy is the goal and computational cost is a secondary concern.

* **Self-Consistency (SC)** appears to have a lower performance ceiling. Its early plateau indicates that the method's core mechanism (likely majority voting over multiple CoT paths) may saturate, and additional compute does not translate into proportionally better reasoning or accuracy.

The implication for system design is that the "best" method depends on the compute budget. CoT is the better pick in resource-limited settings, while ToT is worth the investment for tasks that demand peak accuracy and can afford roughly 85k thinking tokens or more. SC, in this specific comparison, trails CoT from about 30k tokens upward and trails ToT beyond roughly 45k tokens, so it leads only marginally at the very lowest budgets.
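
The budget-dependent choice described above can be expressed as a small lookup. This is an illustrative sketch only: it assumes the approximate chart values, interpolates linearly between them, and refuses to extrapolate beyond the range each series covers. The names `accuracy_at` and `best_method` are hypothetical.

```python
# Approximate points read off the chart:
# (thinking tokens in thousands, accuracy). Values are estimates.
SERIES = {
    "CoT": [(10, 0.54), (20, 0.58), (30, 0.617), (40, 0.627), (50, 0.633),
            (60, 0.638), (70, 0.641), (80, 0.643), (90, 0.644), (100, 0.645)],
    "SC": [(10, 0.54), (20, 0.581), (30, 0.596), (40, 0.602), (50, 0.606),
           (60, 0.609), (70, 0.610), (80, 0.611)],
    "ToT": [(10, 0.54), (20, 0.56), (30, 0.58), (40, 0.59), (50, 0.611),
            (60, 0.625), (70, 0.634), (80, 0.641), (90, 0.646), (100, 0.650),
            (110, 0.653)],
}


def accuracy_at(points, budget):
    """Linearly interpolated accuracy at `budget` (thousands of tokens).
    Returns None outside the range covered by `points` (no extrapolation)."""
    for (x0, y0), (x1, y1) in zip(points, points[1:]):
        if x0 <= budget <= x1:
            return y0 + (y1 - y0) * (budget - x0) / (x1 - x0)
    return None


def best_method(budget):
    """Name of the method with the highest estimated accuracy at `budget`,
    or None if no series covers that budget."""
    estimates = {name: accuracy_at(points, budget)
                 for name, points in SERIES.items()}
    covered = {name: acc for name, acc in estimates.items() if acc is not None}
    return max(covered, key=covered.get) if covered else None


if __name__ == "__main__":
    for budget in (20, 40, 100):
        print(budget, best_method(budget))
```

Under these assumptions the lookup picks CoT at 40k tokens and ToT at 100k tokens. At 20k tokens SC edges ahead of CoT by 0.001, a difference well within the error of reading values off a chart.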