## Bar Chart: Attack Success Rate Comparison

### Overview

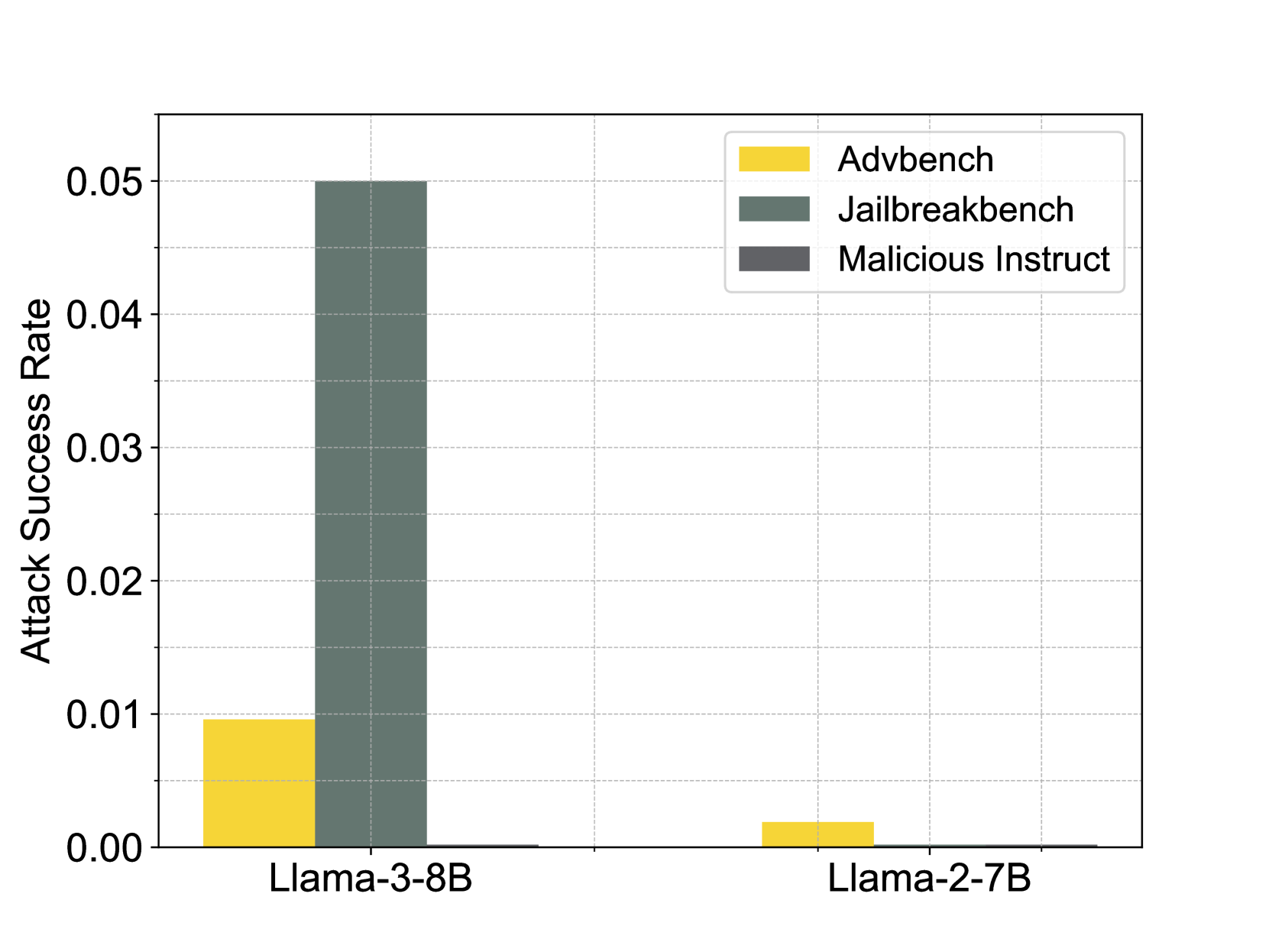

The image is a bar chart comparing attack success rates for three attack benchmarks (Advbench, Jailbreakbench, and Malicious Instruct) on two language models: Llama-3-8B and Llama-2-7B. The y-axis shows the attack success rate, and the x-axis groups the bars by language model.

### Components/Axes

* **X-axis:** Language Models (Llama-3-8B, Llama-2-7B)

* **Y-axis:** Attack Success Rate (ranging from 0.00 to 0.05)

    * Scale markers: 0.00, 0.01, 0.02, 0.03, 0.04, 0.05

* **Legend:** Located in the top-right corner.

    * Advbench (Yellow)

    * Jailbreakbench (Dark Green)

    * Malicious Instruct (Dark Gray)

### Detailed Analysis

* **Llama-3-8B:**

    * Advbench (Yellow): Attack success rate is approximately 0.009.

    * Jailbreakbench (Dark Green): Attack success rate is approximately 0.05.

    * Malicious Instruct (Dark Gray): No bar is present, implying a success rate of 0.00.

* **Llama-2-7B:**

    * Advbench (Yellow): Attack success rate is approximately 0.002.

    * Jailbreakbench (Dark Green): No bar is present, implying a success rate of 0.00.

    * Malicious Instruct (Dark Gray): No bar is present, implying a success rate of 0.00.

### Key Observations

* On Llama-3-8B, Jailbreakbench has a much higher success rate (approximately 0.05) than Advbench (approximately 0.009), more than five times as high.

* Llama-2-7B appears more resistant to these attacks: only Advbench shows a nonzero success rate (approximately 0.002).

* Malicious Instruct shows no bar for either model, implying a success rate of 0.00 for both.
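The observations above can be checked with a few lines of arithmetic. The following is a minimal sketch, assuming the approximate values read off the chart and treating a missing bar as 0.0. The dictionary `asr` and the helpers `highest` and `ratio` are hypothetical names introduced only for illustration.

```python
# Approximate attack success rates read off the chart.
# Assumption: a missing bar is recorded as 0.0.
asr = {
    "Llama-3-8B": {"Advbench": 0.009, "Jailbreakbench": 0.05, "Malicious Instruct": 0.0},
    "Llama-2-7B": {"Advbench": 0.002, "Jailbreakbench": 0.0, "Malicious Instruct": 0.0},
}


def highest(model):
    """Return the (benchmark, rate) pair with the highest rate for a model."""
    return max(asr[model].items(), key=lambda kv: kv[1])


def ratio(a, b):
    """Return a / b, or None when b is zero and the ratio is undefined."""
    return a / b if b else None


llama3 = asr["Llama-3-8B"]

# How many times higher Jailbreakbench is than Advbench on Llama-3-8B.
jbb_vs_adv = ratio(llama3["Jailbreakbench"], llama3["Advbench"])

# Benchmarks with a rate of 0.0 on every model.
zero_everywhere = [b for b in llama3 if all(asr[m][b] == 0.0 for m in asr)]

print(highest("Llama-3-8B"))
print(highest("Llama-2-7B"))
print(round(jbb_vs_adv, 1))
print(zero_everywhere)
```

Because the inputs are visual estimates, the computed ratio is only as precise as the reading of the bar heights.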

### Interpretation

The data suggests that Llama-3-8B is more vulnerable to Jailbreakbench attacks than Llama-2-7B (approximately 0.05 versus 0.00), and that Llama-2-7B is more robust overall against the tested attacks. The absence of bars for Malicious Instruct indicates that this attack did not succeed against either model, or succeeded too rarely to be visible at this scale. The difference in vulnerability between the two models could stem from differences in their architecture, training data, or implemented security measures; the chart alone does not identify the cause.