## Line Chart: Performance of PNN Models Over 20 Epochs

### Overview

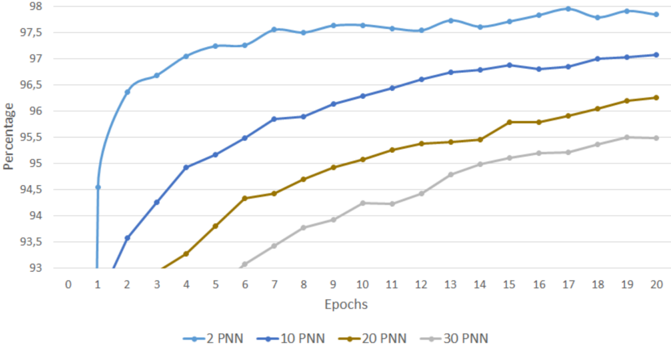

The image is a line chart showing the performance, measured as a percentage, of four Probabilistic Neural Network (PNN) models over 20 training epochs. The chart does not name the metric beyond "Percentage"; for every model configuration it improves as training proceeds.

### Components/Axes

* **Chart Type:** Multi-line chart.

* **X-Axis (Horizontal):** Labeled "Epochs". It represents the number of training cycles, with major tick marks and labels at every integer from 0 to 20.

* **Y-Axis (Vertical):** Labeled "Percentage". It represents the performance metric, with major tick marks and labels at intervals of 0.5, ranging from 93.0 to 98.0.

* **Legend:** Located at the bottom center of the chart. It contains four entries, each associating a colored line with a model label:

  * Light Blue Line: "2 PNN"

  * Dark Blue Line: "10 PNN"

  * Gold/Brown Line: "20 PNN"

  * Grey Line: "30 PNN"

### Detailed Analysis

The chart plots four distinct data series, each showing an upward trend, indicating that performance improves with more epochs for all models.

**1. 2 PNN (Light Blue Line)**

* **Trend:** This line shows the steepest initial ascent and maintains the highest position throughout the chart. It rises sharply from epoch 1 to 5, then continues a more gradual, slightly fluctuating upward climb.

* **Key Data Points (Approximate):**

  * Epoch 1: ~94.5%

  * Epoch 5: ~97.2%

  * Epoch 10: ~97.6%

  * Epoch 15: ~97.8%

  * Epoch 20: ~97.9%

**2. 10 PNN (Dark Blue Line)**

* **Trend:** This line starts lower than the 2 PNN line but follows a similar, steady upward trajectory with less fluctuation. It consistently performs below the 2 PNN model but above the 20 and 30 PNN models.

* **Key Data Points (Approximate):**

  * Epoch 2: ~93.5%

  * Epoch 5: ~95.2%

  * Epoch 10: ~96.3%

  * Epoch 15: ~96.9%

  * Epoch 20: ~97.1%

**3. 20 PNN (Gold/Brown Line)**

* **Trend:** This line begins its visible ascent later (around epoch 3) and climbs at a moderate, steady rate. It remains below the 10 PNN line but above the 30 PNN line for the entire duration.

* **Key Data Points (Approximate):**

  * Epoch 3: ~93.0%

  * Epoch 7: ~94.4%

  * Epoch 12: ~95.3%

  * Epoch 17: ~95.9%

  * Epoch 20: ~96.2%

**4. 30 PNN (Grey Line)**

* **Trend:** This line is the last to enter the plotted range, crossing 93.0% around epoch 6. It has the lowest value at every epoch and climbs steadily, though not linearly: the gain per epoch shrinks from about 0.3 points between epochs 6 and 10 to about 0.05 points between epochs 18 and 20.

* **Key Data Points (Approximate):**

  * Epoch 6: ~93.0%

  * Epoch 10: ~94.2%

  * Epoch 14: ~94.9%

  * Epoch 18: ~95.4%

  * Epoch 20: ~95.5%
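The trend descriptions above can be checked against the listed readings with a little arithmetic. The following is a minimal sketch, assuming the approximate values transcribed in this section; the names `readings` and `segment_slopes` are illustrative and not part of the chart or any library.

```python
# Approximate readings transcribed from the key data points above:
# {series label: [(epoch, percentage), ...]}. These are visual estimates,
# not exact values from the underlying experiment.
readings = {
    "2 PNN": [(1, 94.5), (5, 97.2), (10, 97.6), (15, 97.8), (20, 97.9)],
    "10 PNN": [(2, 93.5), (5, 95.2), (10, 96.3), (15, 96.9), (20, 97.1)],
    "20 PNN": [(3, 93.0), (7, 94.4), (12, 95.3), (17, 95.9), (20, 96.2)],
    "30 PNN": [(6, 93.0), (10, 94.2), (14, 94.9), (18, 95.4), (20, 95.5)],
}


def segment_slopes(points):
    """Average gain in percentage points per epoch between consecutive readings."""
    return [
        round((y1 - y0) / (x1 - x0), 3)
        for (x0, y0), (x1, y1) in zip(points, points[1:])
    ]


slopes = {label: segment_slopes(points) for label, points in readings.items()}

for label, values in slopes.items():
    print(f"{label}: {values}")
```

With these readings, the per-epoch gain shrinks from one segment to the next for every series, so all four curves flatten as training proceeds. Because the inputs are eyeballed from the chart, the slopes are rough indications only.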

### Key Observations

1. **Performance Hierarchy:** There is a clear and consistent performance hierarchy: 2 PNN > 10 PNN > 20 PNN > 30 PNN. This order does not change at any point after the models begin reporting values.

2. **Convergence Rate:** Simpler models (lower PNN count) not only achieve higher final performance but also converge faster. The 2 PNN model passes 97% by epoch 5, a level the 10 PNN model reaches only near epoch 20 and the 20 PNN and 30 PNN models never reach within the window shown.

3. **Negative Returns:** Within the 20-epoch window shown, a higher PNN count goes with slower learning and lower final performance: each increase in count lowers the curve rather than merely yielding smaller gains. The gap between the 2 PNN and 30 PNN lines is substantial (~2.4 percentage points at epoch 20).

4. **Stability:** The 2 PNN line shows minor fluctuations (e.g., a slight dip around epoch 11), while the other lines appear smoother, suggesting more stable but slower learning.
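The hierarchy and the gap quoted in the observations above follow directly from the epoch-20 readings. The sketch below reproduces that arithmetic, assuming the approximate values listed in the detailed analysis; `final_readings`, `ranking`, and `gaps` are illustrative names.

```python
# Approximate epoch-20 readings from the chart description (visual estimates).
final_readings = {"2 PNN": 97.9, "10 PNN": 97.1, "20 PNN": 96.2, "30 PNN": 95.5}

# Rank the configurations from best to worst at epoch 20.
ranking = sorted(final_readings, key=final_readings.get, reverse=True)

# Gap, in percentage points, between each configuration and the best one.
best = final_readings[ranking[0]]
gaps = {label: round(best - value, 1) for label, value in final_readings.items()}

print(ranking)
print(gaps)
```

The same pattern applies to any epoch at which all four series have a reading. In the data points listed here, epoch 20 is the only such epoch.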

### Interpretation

This chart demonstrates a clear inverse relationship between the complexity of the PNN model (as denoted by the number in its label) and its training efficiency and final performance within a fixed, limited training period (20 epochs).

The data suggests that for this specific task and training setup, simpler PNN architectures are significantly more effective. They learn faster and reach a higher performance plateau. The more complex models (20 PNN, 30 PNN) are not only slower to start learning but also appear to be on a trajectory to converge at a lower performance level.

This could indicate several underlying factors: the simpler models may be better suited to the problem's complexity, avoiding overfitting if the plotted metric is measured on held-out data; the training hyperparameters (like learning rate) may not be optimized for the larger models; or the larger models may require significantly more epochs to reach their potential, which is not captured in this 20-epoch window. Within the constraints of this experiment, the chart shows that raising the PNN count above 2, the smallest configuration plotted, lowers performance at every epoch. It does not show whether an even smaller count would do better, or whether the ordering would persist with longer training.