## Bar Chart: Q-Anchored vs A-Anchored Performance on Different Datasets for Llama-3-8B and Llama-3-70B

### Overview

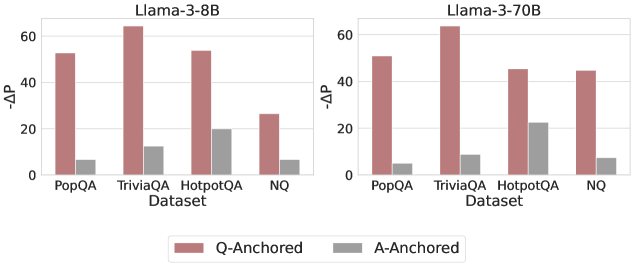

The image presents two bar charts that compare the "Q-Anchored" and "A-Anchored" methods across four datasets (PopQA, TriviaQA, HotpotQA, and NQ) for two language models, Llama-3-8B and Llama-3-70B. The y-axis shows "-ΔP" and the x-axis lists the datasets. Each dataset has one bar per method.

### Components/Axes

* **Titles:**
  * Left Chart: "Llama-3-8B"
  * Right Chart: "Llama-3-70B"
* **Y-axis:**
  * Label: "-ΔP"
  * Scale: 0, 20, 40, 60
* **X-axis:**
  * Label: "Dataset"
  * Categories: PopQA, TriviaQA, HotpotQA, NQ
* **Legend:** Located at the bottom of the image.
  * Q-Anchored: Represented by a light brown/reddish bar.
  * A-Anchored: Represented by a gray bar.

### Detailed Analysis

**Llama-3-8B (Left Chart):**

* **PopQA:**
  * Q-Anchored: Approximately 52
  * A-Anchored: Approximately 7
* **TriviaQA:**
  * Q-Anchored: Approximately 64
  * A-Anchored: Approximately 12
* **HotpotQA:**
  * Q-Anchored: Approximately 53
  * A-Anchored: Approximately 20
* **NQ:**
  * Q-Anchored: Approximately 27
  * A-Anchored: Approximately 7

**Llama-3-70B (Right Chart):**

* **PopQA:**
  * Q-Anchored: Approximately 52
  * A-Anchored: Approximately 6
* **TriviaQA:**
  * Q-Anchored: Approximately 63
  * A-Anchored: Approximately 8
* **HotpotQA:**
  * Q-Anchored: Approximately 45
  * A-Anchored: Approximately 23
* **NQ:**
  * Q-Anchored: Approximately 45
  * A-Anchored: Approximately 7

### Key Observations

* In both charts, the "Q-Anchored" bar is higher than the "A-Anchored" bar for every dataset.

* The "TriviaQA" dataset shows the highest "-ΔP" value for "Q-Anchored" in both Llama-3-8B and Llama-3-70B.

* The "A-Anchored" method shows relatively low "-ΔP" values (roughly 6 to 23) across all datasets and both models, with its highest value on "HotpotQA" in both charts.

* The gap between "Q-Anchored" and "A-Anchored" is largest on "TriviaQA" for both models (about 52 points for Llama-3-8B and about 55 for Llama-3-70B).

* The two models differ mainly on "HotpotQA" and "NQ". For "Q-Anchored", Llama-3-70B is lower than Llama-3-8B on "HotpotQA" (about 45 vs. 53) but considerably higher on "NQ" (about 45 vs. 27).
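
The comparisons above reduce to simple arithmetic on the bar heights. The following is a minimal Python sketch, assuming the approximate readings listed under Detailed Analysis (visual estimates from the charts, not the underlying data). The names `READINGS`, `gaps`, `largest_gap`, and `q_change` are hypothetical helpers introduced only for illustration.

```python
# Approximate -ΔP bar heights read off the two charts: (Q-Anchored, A-Anchored).
# These are visual estimates (hypothetical data structure), not the source data.
READINGS = {
    "Llama-3-8B": {
        "PopQA": (52, 7),
        "TriviaQA": (64, 12),
        "HotpotQA": (53, 20),
        "NQ": (27, 7),
    },
    "Llama-3-70B": {
        "PopQA": (52, 6),
        "TriviaQA": (63, 8),
        "HotpotQA": (45, 23),
        "NQ": (45, 7),
    },
}


def gaps(model: str) -> dict[str, int]:
    """Hypothetical helper: Q-Anchored minus A-Anchored -ΔP per dataset."""
    return {ds: q - a for ds, (q, a) in READINGS[model].items()}


def largest_gap(model: str) -> str:
    """Hypothetical helper: dataset with the widest Q-vs-A gap for one model."""
    g = gaps(model)
    return max(g, key=g.get)


def q_change(small: str, large: str) -> dict[str, int]:
    """Hypothetical helper: change in Q-Anchored -ΔP from the smaller to the larger model."""
    return {
        ds: READINGS[large][ds][0] - READINGS[small][ds][0]
        for ds in READINGS[small]
    }


if __name__ == "__main__":
    for model in READINGS:
        print(model, gaps(model), "largest gap:", largest_gap(model))
    print("Q-Anchored change 8B -> 70B:", q_change("Llama-3-8B", "Llama-3-70B"))
```

With these readings, the widest gap falls on TriviaQA for both models (52 and 55), and the Q-Anchored change from Llama-3-8B to Llama-3-70B is -8 on HotpotQA and +18 on NQ.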

### Interpretation

The data suggest that, for both Llama-3-8B and Llama-3-70B, the "Q-Anchored" method produces a substantially larger "-ΔP" than the "A-Anchored" method on every tested dataset. If a larger "-ΔP" reflects a stronger effect, anchoring on the question appears to be the more influential of the two methods in these question-answering tasks. The size of the effect varies across datasets, which suggests that it depends on the characteristics of each dataset. The two models differ mainly on HotpotQA and NQ, so model size may change the effect on some datasets, although two models are not enough to establish a trend. TriviaQA yields the highest "Q-Anchored" values in both models while its "A-Anchored" values stay low, so the peak on trivia-style questions applies to the "Q-Anchored" method only.