
## Diagram: Neural Network Layer Connections

### Overview

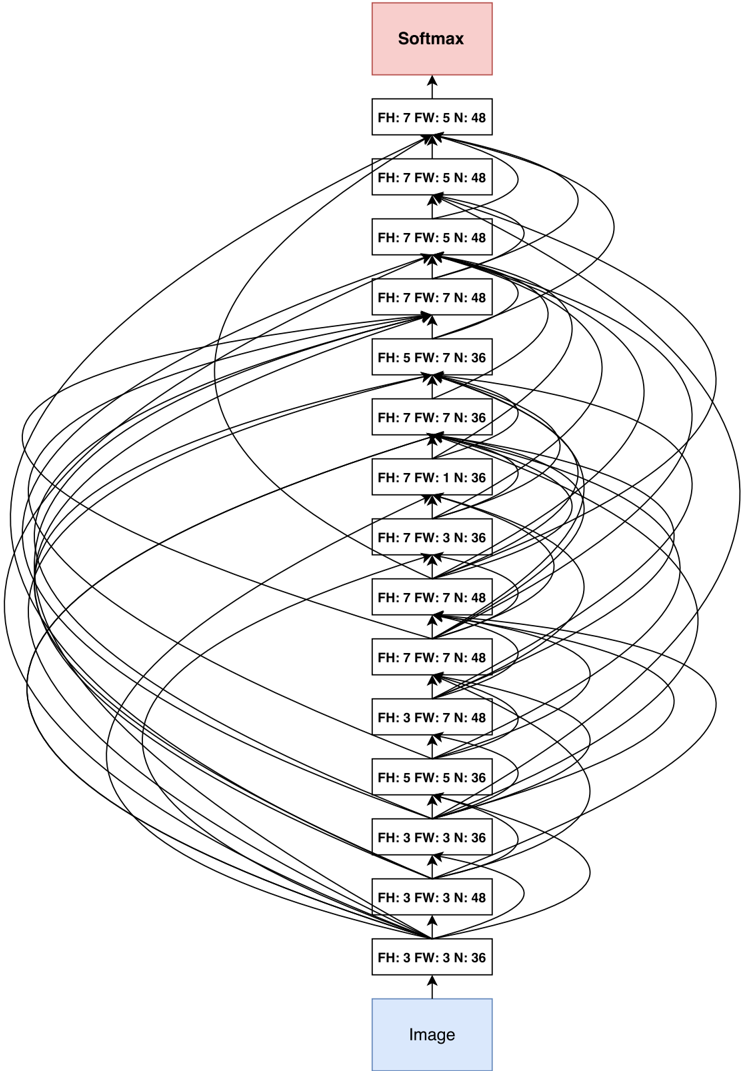

The image is a diagram of a neural network architecture. It shows an "Image" input box, a "Softmax" output box, and a stack of intermediate layers between them, each labeled with "FH", "FW", and "N" values. Curved lines connecting the boxes show how information flows between layers. The lines are numerous and irregular, and many appear to link layers that are not directly adjacent, which suggests a network with many skip connections rather than a plain sequential stack.

### Components/Axes

The diagram consists of the following components:

* **Image:** A blue rectangular box at the bottom, representing the input layer.

* **Intermediate Layers:** A series of rectangular boxes stacked vertically, each labeled with "FH: [value] FW: [value] N: [value]".

* **Softmax:** A red rectangular box at the top, representing the output layer.

* **Connections:** Curved gray lines connecting the "Image" layer to the intermediate layers, and the intermediate layers to the "Softmax" layer. The lines indicate the flow of information.

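The labels on the intermediate layers give numerical values for FH, FW, and N. The diagram does not define these abbreviations. Because FH and FW only take the values 1, 3, 5, and 7, which are typical convolution kernel sizes, they most plausibly stand for filter height and filter width, with N being the number of filters in the layer.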

### Detailed Analysis or Content Details

The diagram shows 16 intermediate layers. Here's a breakdown of the FH, FW, and N values for each layer, starting from the bottom:

1. FH: 3 FW: 3 N: 36

2. FH: 3 FW: 3 N: 48

3. FH: 3 FW: 5 N: 36

4. FH: 3 FW: 7 N: 48

5. FH: 5 FW: 5 N: 36

6. FH: 7 FW: 1 N: 36

7. FH: 7 FW: 3 N: 36

8. FH: 7 FW: 5 N: 36

9. FH: 7 FW: 7 N: 48

10. FH: 7 FW: 7 N: 48

11. FH: 7 FW: 5 N: 48

12. FH: 7 FW: 5 N: 48

13. FH: 7 FW: 7 N: 48

14. FH: 5 FW: 7 N: 36

15. FH: 7 FW: 7 N: 48

16. FH: 7 FW: 5 N: 48

The connections are not labeled with weights or other parameters. The density of connections varies between layers: the connections from the "Image" box to the intermediate layers are sparser than those from the intermediate layers to the "Softmax" box.
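
The layer list above can be captured as data and summarized, which makes it easier to check statements about the FH, FW, and N values. This is a minimal sketch: the tuples are transcribed from the list, while the names `LAYERS` and `summarize` and the choice to compare the lower and upper halves of the stack by FH × FW are illustrative assumptions, not something the diagram specifies.

```python
from collections import Counter

# (FH, FW, N) for each intermediate layer, bottom to top,
# transcribed from the numbered list above.
LAYERS = [
    (3, 3, 36), (3, 3, 48), (3, 5, 36), (3, 7, 48),
    (5, 5, 36), (7, 1, 36), (7, 3, 36), (7, 5, 36),
    (7, 7, 48), (7, 7, 48), (7, 5, 48), (7, 5, 48),
    (7, 7, 48), (5, 7, 36), (7, 7, 48), (7, 5, 48),
]


def summarize(layers):
    """Return simple statistics over a list of (FH, FW, N) tuples."""
    n_counts = Counter(n for _, _, n in layers)
    areas = [fh * fw for fh, fw, _ in layers]  # FH * FW per layer
    half = len(layers) // 2
    return {
        "num_layers": len(layers),
        "n_counts": dict(n_counts),
        # Mean FH * FW in the lower half vs. the upper half of the stack.
        "mean_area_lower": sum(areas[:half]) / half,
        "mean_area_upper": sum(areas[half:]) / (len(layers) - half),
    }


summary = summarize(LAYERS)
print(summary)
```

Comparing the two halves is only one rough way to quantify a trend; a per-layer plot of FH × FW would show the same information in more detail.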

### Key Observations

* The FH and FW values generally increase toward the top of the stack: the five lowest layers have an FH of 3 or 5, while layers 6 through 16 all have an FH of 7 except layer 14 (FH: 5).

* N takes only two values, 36 and 48. Most of the lower layers use 36 and most of the upper layers use 48.

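* The connections are dense but not uniform: some layers have many more incoming or outgoing lines than others, so the network does not appear to connect every layer to every other layer.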

* The diagram does not provide any information about the activation functions used in each layer.

### Interpretation

This diagram represents a portion of a convolutional neural network (CNN) or a similar type of deep learning architecture. The "Image" layer represents the input image, and the "Softmax" layer represents the output probabilities for different classes. The intermediate layers perform feature extraction and transformation.
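
To make the role of the "Softmax" box concrete, here is a minimal sketch of the standard softmax function, which turns a vector of raw class scores into probabilities that sum to 1. The input scores are made-up values for illustration; the diagram gives no information about the number of classes or the actual scores.

```python
import math


def softmax(logits):
    """Convert raw class scores into probabilities that sum to 1."""
    # Subtract the maximum for numerical stability; this does not
    # change the result because softmax is shift-invariant.
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical scores for three classes.
probs = softmax([2.0, 1.0, 0.1])
print(probs)
```

The largest score receives the largest probability, and the ordering of the classes is preserved.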

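The FH and FW values most plausibly give the height and width of the convolution filters in each layer, and N the number of filters, which is also the number of output channels. They are unlikely to be feature-map dimensions, since feature maps of an image network are rarely as small as 3 × 3 near the input. Under this reading, the larger FH and FW values in the upper layers mean that those layers use larger filters and therefore aggregate information over wider spatial neighborhoods.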
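
If FH and FW are read as filter height and width and N as the number of filters, the number of learnable parameters in a standard convolutional layer is FH × FW × C_in × N weights plus N biases, where C_in is the number of input channels. The sketch below applies that formula. It assumes a 3-channel input image and a purely sequential stack in which each layer's input is the previous layer's output; the diagram's skip connections would change C_in, so the numbers are illustrative only. The function name `conv_params` is hypothetical.

```python
def conv_params(fh, fw, c_in, n, bias=True):
    """Parameter count of a standard 2D convolution layer.

    fh, fw: filter height and width
    c_in:   number of input channels
    n:      number of filters (output channels)
    """
    weights = fh * fw * c_in * n
    return weights + (n if bias else 0)


# Layer 1 (FH: 3, FW: 3, N: 36), assuming a 3-channel (RGB) input.
layer1 = conv_params(3, 3, c_in=3, n=36)

# Layer 2 (FH: 3, FW: 3, N: 48), assuming its only input is
# layer 1's 36-channel output (skip connections ignored).
layer2 = conv_params(3, 3, c_in=36, n=48)

print(layer1, layer2)
```

Because the weight count scales with FH × FW, a 7 × 7 layer has roughly 5.4 times as many weights as a 3 × 3 layer with the same channel counts.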

The dense connections between the intermediate layers and the "Softmax" box suggest that the network draws on features from many layers to make its predictions. The varying density of connections could reflect a pruned or otherwise selected set of skip connections. Dropout is a less likely explanation, because it is applied during training and would not normally appear in an architecture diagram.

The diagram gives a high-level overview of the network architecture only. It does not show the weights or biases of any layer, the training process, or the performance of the network.